由于共享内存拥有仅次于寄存器的读写速度,比全局内存快得多。因此,能够用共享内存访问替换全局内存访问的场景都可以考虑做对应的优化。

不利用共享内存的矩阵乘法

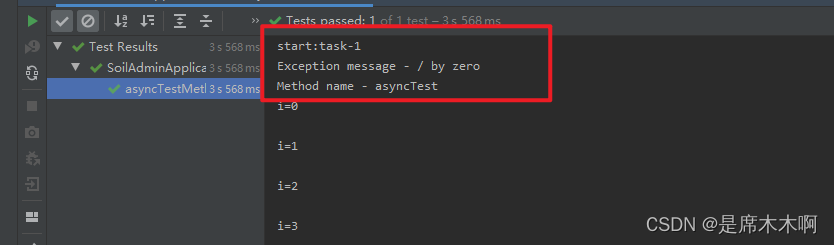

不利用共享内存的矩阵乘法的直接实现。每个线程读取A的一行和B的一列,并计算C的相应元素,如图。

访问次数 :

从全局内存中读取A的次数为B.width,读取B的次数为A.height。

#include <stdio.h>

#include "cuda_runtime.h"

#include "device_launch_parameters.h"

#include "error.cuh"

#include <stdlib.h>

#include<math.h>

#include <malloc.h>

#include <stdlib.h>//利用share memory 和统一内存优化矩阵乘

#define M 80

#define N 2000// 线程块尺寸

#define BLOCK_SIZE 16///-----------没有共享内存的矩阵乘法-----------

// 矩阵以行为主的顺序存储:

// M(row, col) = *(M.elements + row * M.stride + col)

typedef struct

{int width;int height;float* elements;

} Matrix;// MatMul()调用的矩阵乘法内核

__global__ void MatMulKernel(Matrix A, Matrix B, Matrix C)

{// 每个线程通过将结果累积到Cvalue中来计算C的一个元素float Cvalue = 0;int row = blockIdx.y * blockDim.y + threadIdx.y;int col = blockIdx.x * blockDim.x + threadIdx.x;for (int e = 0; e < A.width; ++e)Cvalue += A.elements[row * A.width + e] * B.elements[e * B.width + col];C.elements[row * C.width + col] = Cvalue;

}// 矩阵乘法核的前向声明

//__global__ void MatMulKernel(const Matrix, const Matrix, Matrix);

// 矩阵乘法-主机代码

//矩阵维度被假定为BLOCK_SIZE的倍数

void MatMul(const Matrix A, const Matrix B, Matrix C)

{// 将A和B加载到设备内存Matrix d_A;d_A.width = A.width; d_A.height = A.height;size_t size = A.width * A.height * sizeof(float);cudaMalloc(&d_A.elements, size);cudaMemcpy(d_A.elements, A.elements, size, cudaMemcpyHostToDevice);Matrix d_B;d_B.width = B.width; d_B.height = B.height;size = B.width * B.height * sizeof(float);cudaMalloc(&d_B.elements, size);cudaMemcpy(d_B.elements, B.elements, size, cudaMemcpyHostToDevice);// 在设备内存中分配CMatrix d_C;d_C.width = C.width; d_C.height = C.height;size = C.width * C.height * sizeof(float);cudaMalloc(&d_C.elements, size);// Invoke kerneldim3 dimBlock(BLOCK_SIZE, BLOCK_SIZE);dim3 dimGrid(B.width / dimBlock.x, A.height / dimBlock.y);MatMulKernel <<< dimGrid, dimBlock >>>(d_A, d_B, d_C);//从设备内存中读取CcudaMemcpy(C.elements, d_C.elements, size, cudaMemcpyDeviceToHost);// 释放设备内存cudaFree(d_A.elements);cudaFree(d_B.elements);cudaFree(d_C.elements);

}int main()

{ Matrix matrix_1, matrix_2, matrix_out;int memsize = sizeof(float) * M * N;int memsize_out = sizeof(float) * M * M;matrix_1.width = matrix_2.height = M;matrix_2.width = matrix_1.height = N;matrix_out.width = matrix_out.height = M;cudaMallocHost((void**)&matrix_1.elements, memsize);cudaMallocHost((void**)&matrix_2.elements, memsize);cudaMallocHost((void**)&matrix_out.elements, memsize_out);for (int y = 0; y < N; y++)for (int x = 0; x < M; x++)matrix_1.elements[y * M + x] = (float)rand() / 1.0E5 ;for (int y = 0; y < M; y++)for (int x = 0; x < N; x++)matrix_2.elements[y * N + x] = (float)rand() / 1.0E5;//for (int y = 0; y < N; y++)//{// printf("\n matrix_1[%d]:\n", y);// for (int x = 0; x < M; x++)// {// printf("%.2f ", matrix_1.elements[y * M + x]);// }//}//for (int y = 0; y < M; y++)//{// printf("\n matrix_2[%d]:\n", y);// for (int x = 0; x < N; x++)// {// printf("%.2f ", matrix_2.elements[y * N + x]);// }//}cudaEvent_t start, stop_gpu;cudaEventCreate(&start);//创建事件cudaEventCreate(&stop_gpu);//创建事件cudaEventRecord(start, 0);//记录事件MatMul(matrix_1, matrix_2, matrix_out);cudaEventRecord(stop_gpu,0);//记录事件cudaEventSynchronize(stop_gpu);float time_gpu;cudaEventElapsedTime(&time_gpu, start, stop_gpu);//事件计时//printf("\n GPU time: %.4f ms \n", time_gpu);cudaEventDestroy(start);//销毁事件cudaEventDestroy(stop_gpu);for (int y = 0; y < M; y++){printf("\n matrix_out[%d]:\n", y);for (int x = 0; x < M; x++){printf("%.2f ", matrix_out.elements[y * M + x]);}}cudaFreeHost(matrix_1.elements);cudaFreeHost(matrix_2.elements);cudaFreeHost(matrix_out.elements);system("pause");return 0;

}

利用共享内存的矩阵乘法

每个线程块负责计算矩阵C的一个方子矩阵Csub,块内的每个线程负责计算Csub的一个元素。

Csub等于两个矩形矩阵的乘积:维度为(A.width,block_size)的A的子矩阵与Csub具有相同的行索引,维度为(A.width,block_size)的B的子矩阵与Csub具有相同的列索引。

为了适应设备的资源,这两个矩形矩阵被分割成尽可能多的尺寸为block_size的方阵,Csub被计算为这些方阵乘积的和。

首先将两个对应的方阵从全局内存加载到共享内存,其中一个线程加载每个矩阵的一个元素,然后让每个线程计算乘积的一个元素。每个线程将这些产品的结果累积到寄存器中,完成后将结果写入全局内存。

访问次数 :

通过这种方式阻塞计算,我们利用了快速共享内存并节省了大量的全局内存带宽,矩阵A只从全局内存中读取(B.width/block_size)次,矩阵B只从全局内存中读取(A.height/block_size)次。

#include <stdio.h>

#include "cuda_runtime.h"

#include "device_launch_parameters.h"

#include "error.cuh"

#include <stdlib.h>

#include<math.h>

#include <malloc.h>

#include <stdlib.h>//利用share memory 和统一内存优化矩阵乘#define M 80

#define N 2000// 线程块尺寸

#define BLOCK_SIZE 16///-----------矩阵乘法与共享内存-----------

// 矩阵以行为主的顺序存储:

// M(row, col) = *(M.elements + row * M.stride + col)

typedef struct

{int width;int height;float* elements;

} Matrix;

// 得到一个矩阵元素

__device__ float GetElement(const Matrix A, int row, int col)

{return A.elements[row * A.width + col];

}

// 设置一个矩阵元素

__device__ void SetElement(Matrix A, int row, int col, float value)

{A.elements[row * A.width + col] = value;

}

// 获取A的BLOCK_SIZExBLOCK_SIZE子矩阵subb,它位于A的左上角的col子矩阵和行子矩阵

__device__ Matrix GetSubMatrix(Matrix A, int row, int col)

{Matrix Asub;Asub.width = BLOCK_SIZE;Asub.height = BLOCK_SIZE;Asub.elements = &A.elements[A.width * BLOCK_SIZE * row + BLOCK_SIZE * col];return Asub;

}// 矩阵乘法核的前向声明

__global__ void MatMulKernel(const Matrix, const Matrix, Matrix);

// 矩阵乘法-主机代码

// 矩阵维度被假定为BLOCK_SIZE的倍数

void MatMul(const Matrix A, const Matrix B, Matrix C)

{// 将A和B加载到设备内存Matrix d_A;d_A.width = A.width; d_A.height = A.height;size_t size = A.width * A.height * sizeof(float);cudaMalloc(&d_A.elements, size);cudaMemcpy(d_A.elements, A.elements, size, cudaMemcpyHostToDevice);Matrix d_B;d_B.width = B.width; d_B.height = B.height;size = B.width * B.height * sizeof(float);cudaMalloc(&d_B.elements, size);cudaMemcpy(d_B.elements, B.elements, size, cudaMemcpyHostToDevice);// 在设备内存中分配CMatrix d_C;d_C.width = C.width; d_C.height = C.height;size = C.width * C.height * sizeof(float);cudaMalloc(&d_C.elements, size);// 调用内核dim3 dimBlock(BLOCK_SIZE, BLOCK_SIZE);dim3 dimGrid(B.width / dimBlock.x, A.height / dimBlock.y);MatMulKernel <<< dimGrid, dimBlock >>>(d_A, d_B, d_C);// 从设备内存中读取CcudaMemcpy(C.elements, d_C.elements, size, cudaMemcpyDeviceToHost);// 空闲设备内存cudaFree(d_A.elements);cudaFree(d_B.elements);cudaFree(d_C.elements);

}

// MatMul()调用的矩阵乘法内核

__global__ void MatMulKernel(Matrix A, Matrix B, Matrix C)

{// 块行和列int blockRow = blockIdx.y;int blockCol = blockIdx.x;// 每个线程块计算C的一个子矩阵CsubMatrix Csub = GetSubMatrix(C, blockRow, blockCol);// 每个线程通过将结果累积到Cvalue中来计算Csub的一个元素float Cvalue = 0;// 线程行和列在Csubint row = threadIdx.y;int col = threadIdx.x;// 遍历计算Csub所需的A和B的所有子矩阵,将每对子矩阵相乘并累加结果for (int i = 0; i < (A.width / BLOCK_SIZE); ++i){// 得到A的子矩阵Matrix Asub = GetSubMatrix(A, blockRow, i);// 得到B的子矩阵BMatrix Bsub = GetSubMatrix(B, i, blockCol);// 用于存储sub和sub的共享内存tively__shared__ float As[BLOCK_SIZE][BLOCK_SIZE];__shared__ float Bs[BLOCK_SIZE][BLOCK_SIZE];// 将subb和Bsub从设备内存加载到共享内存,每个线程加载每个子矩阵的一个元素As[row][col] = GetElement(Asub, row, col);Bs[row][col] = GetElement(Bsub, row, col);// 同步以确保在开始计算之前加载子矩阵__syncthreads();// 将subb和Bsub相乘for (int j = 0; j < BLOCK_SIZE; ++j)Cvalue += As[row][j] * Bs[j][col];// 同步以确保在下一次迭代中加载两个新的子矩阵A和B之前完成前面的计算__syncthreads();}// 将Csub写入设备内存// 每个线程写入一个元素SetElement(Csub, row, col, Cvalue);

}int main()

{ Matrix matrix_1, matrix_2, matrix_out;int memsize = sizeof(float) * M * N;int memsize_out = sizeof(float) * M * M;matrix_1.width = matrix_2.height = M;matrix_2.width = matrix_1.height = N;matrix_out.width = matrix_out.height = M;cudaMallocHost((void**)&matrix_1.elements, memsize);cudaMallocHost((void**)&matrix_2.elements, memsize);cudaMallocHost((void**)&matrix_out.elements, memsize_out);for (int y = 0; y < N; y++)for (int x = 0; x < M; x++)matrix_1.elements[y * M + x] = (float)rand() / 1.0E5 ;for (int y = 0; y < M; y++)for (int x = 0; x < N; x++)matrix_2.elements[y * N + x] = (float)rand() / 1.0E5;cudaEvent_t start, stop_gpu;cudaEventCreate(&start);//创建事件cudaEventCreate(&stop_gpu);//创建事件cudaEventRecord(start, 0);//记录事件MatMul(matrix_1, matrix_2, matrix_out);cudaEventRecord(stop_gpu,0);//记录事件cudaEventSynchronize(stop_gpu);float time_gpu;cudaEventElapsedTime(&time_gpu, start, stop_gpu);//事件计时//printf("\n GPU time: %.4f ms \n", time_gpu);cudaEventDestroy(start);//销毁事件cudaEventDestroy(stop_gpu);for (int y = 0; y < M; y++){printf("\n matrix_out[%d]:\n", y);for (int x = 0; x < M; x++){printf("%.2f ", matrix_out.elements[y * M + x]);}}cudaFreeHost(matrix_1.elements);cudaFreeHost(matrix_2.elements);cudaFreeHost(matrix_out.elements);system("pause");return 0;

}