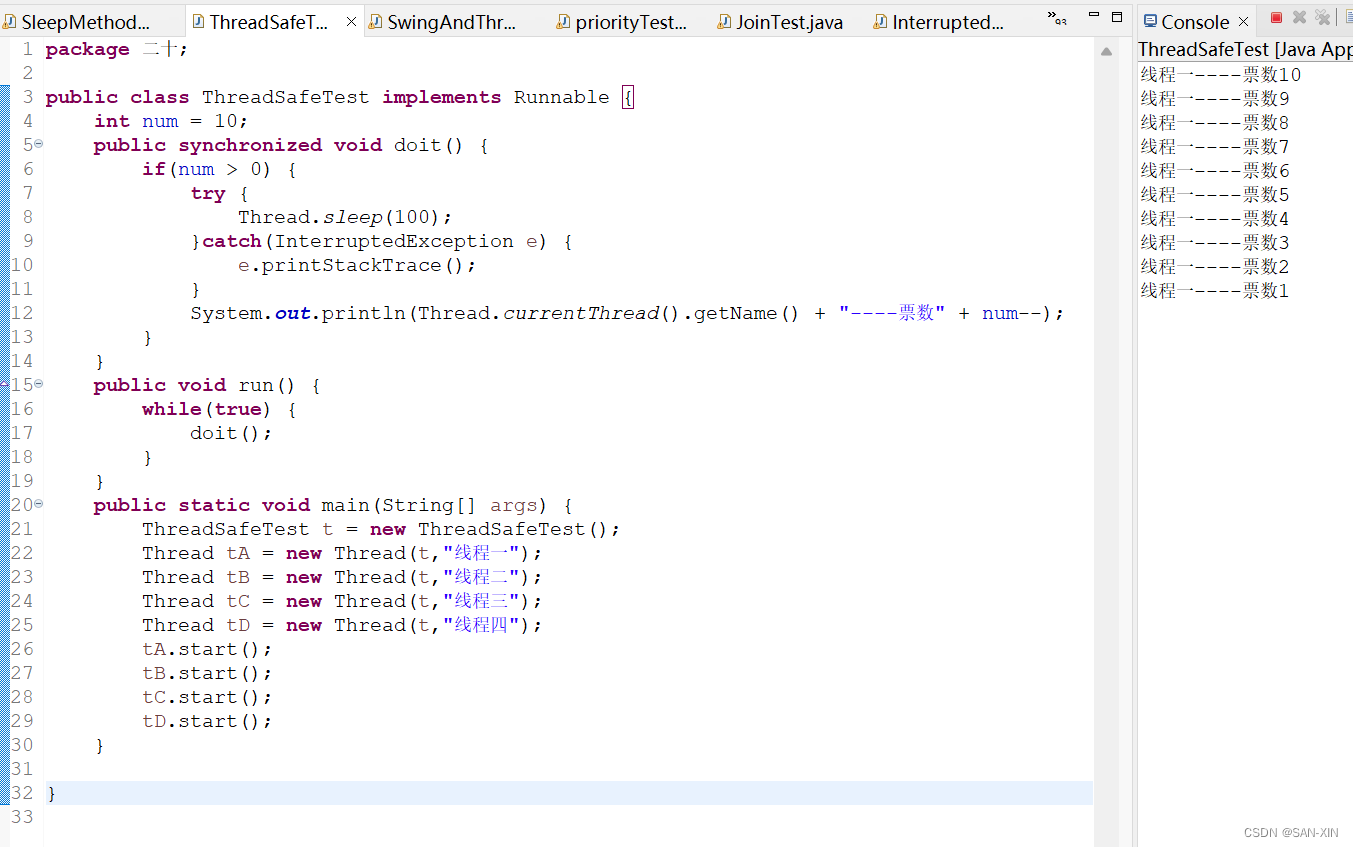

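Kubernetes officially supports several installation methods, including kind, minikube, and kubeadm.

Installing with minikube was covered in an earlier post and is fairly simple. This post walks through deploying a k8s cluster with kubeadm.

For production, the official documentation describes several high-availability options: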

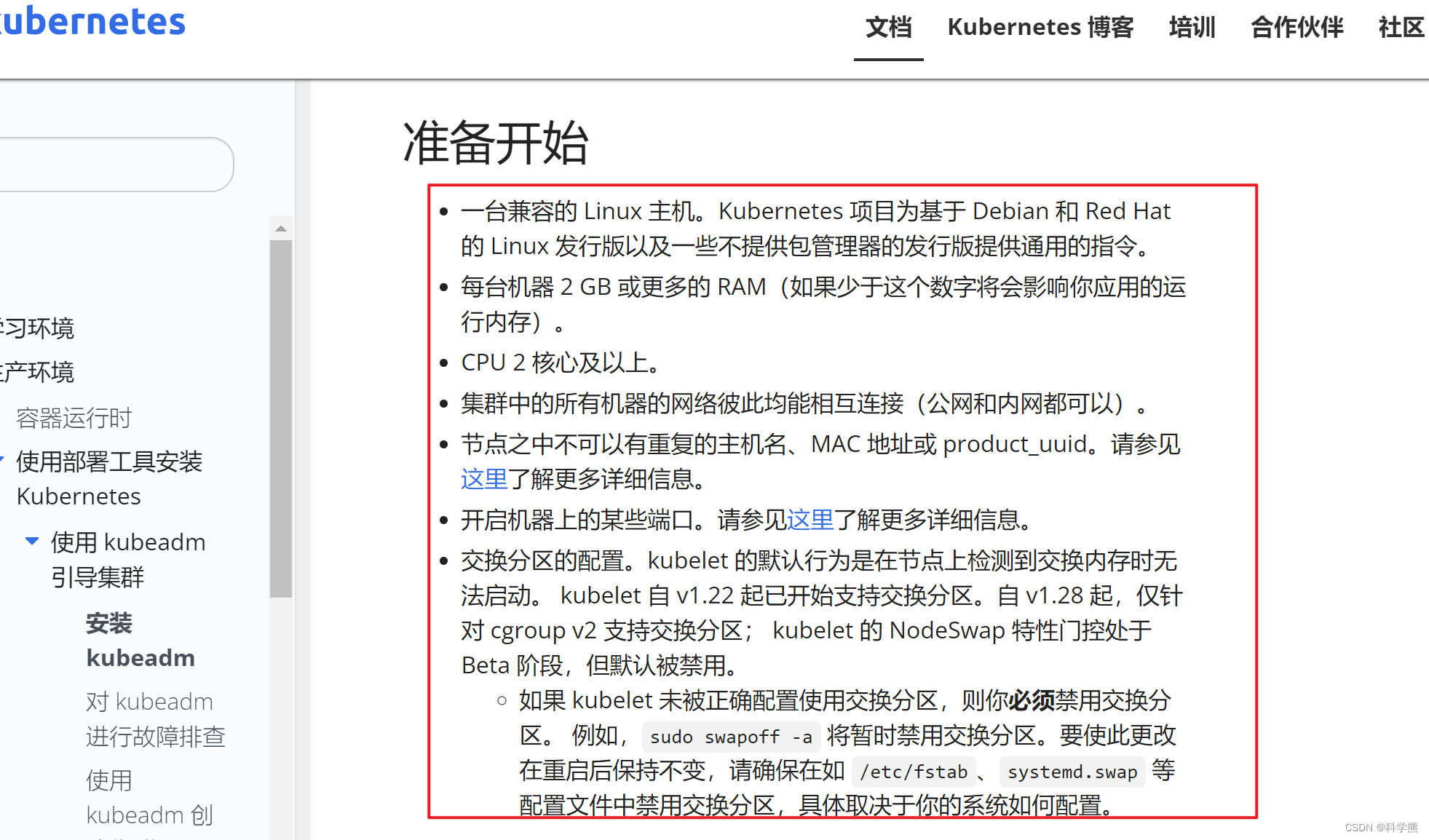

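Kubernetes official documentation

This post installs version 1.28.0.

I recommend reading the official documentation carefully; most of the steps below come straight from it.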

1. Environment preparation

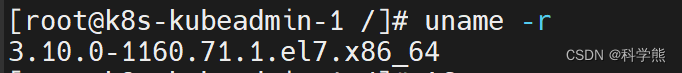

Three CentOS 7 virtual machines, each with 2 cores and 4 GB of RAM (the official minimum is 2 cores and 2 GB).

Kernel version:

uname -r

| Role | IP | Hostname |
|---|---|---|
| master | 192.168.213.9 | k8s-kubeadmin-1 |
| node1 | 192.168.213.10 | k8s-kubeadmin-2 |
| node2 | 192.168.213.11 | k8s-kubeadmin-3 |

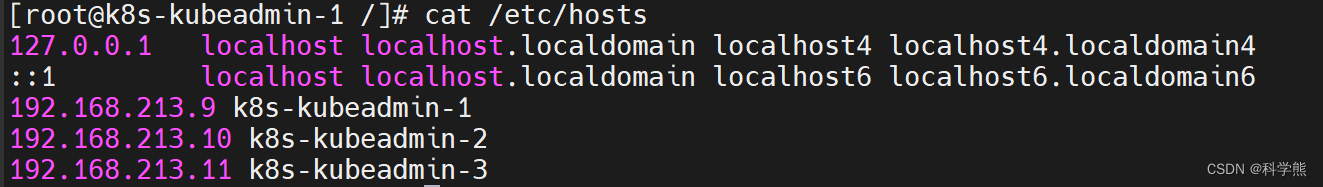

Edit the hosts file on all three virtual machines:

Make sure every machine can ping the others by hostname.
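The hosts entries can also be added from a script instead of by hand. The following is a minimal sketch, not something from the official docs: `add_host_entry` is a helper name I made up, and it takes the file path as a parameter so you can try it on a scratch copy before touching /etc/hosts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP hostname" to a hosts-style file,
# but only if that hostname is not already listed.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # -F: fixed string, -w: whole-word match, -q: quiet
  if ! grep -qwF -- "$name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage on each node (as root), with the addresses from the table above:
#   add_host_entry /etc/hosts 192.168.213.9  k8s-kubeadmin-1
#   add_host_entry /etc/hosts 192.168.213.10 k8s-kubeadmin-2
#   add_host_entry /etc/hosts 192.168.213.11 k8s-kubeadmin-3
```

Because the helper checks before appending, running it twice does not duplicate entries.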

Change the hostname:

Check the current one:

hostname

Set a new one:

sudo hostnamectl set-hostname k8s-kubeadmin-1

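2. Installation

2.1 All nodes: disable the firewall

Disabling the firewall saves you from opening individual ports. You can leave it running if you don't mind the extra work; see my earlier post on opening firewall ports.

systemctl stop firewalld # stop the firewall

systemctl disable firewalld # do not start it at boot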
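If you would rather keep firewalld running, a rough sketch of the ports to open looks like this. The port list follows the "Ports and Protocols" page of the Kubernetes documentation, plus UDP 8472 for flannel's default VXLAN backend; the function name `k8s_firewall_cmds` is my own, and the function only prints the commands so you can review them before running anything.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the firewall-cmd commands for a node role.
# Nothing is executed here; the output is meant to be reviewed first.
k8s_firewall_cmds() {
  local role="$1" ports p
  case "$role" in
    # API server, etcd, kubelet, controller-manager, scheduler
    control-plane) ports="6443/tcp 2379-2380/tcp 10250/tcp 10257/tcp 10259/tcp" ;;
    # kubelet and the default NodePort range
    worker)        ports="10250/tcp 30000-32767/tcp" ;;
    *) echo "unknown role: $role" >&2; return 1 ;;
  esac
  # 8472/udp is flannel's VXLAN port and is needed on every node
  for p in $ports 8472/udp; do
    echo "firewall-cmd --permanent --add-port=$p"
  done
  echo "firewall-cmd --reload"
}

# Usage: k8s_firewall_cmds control-plane    # on the master
#        k8s_firewall_cmds worker           # on node1 and node2
```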

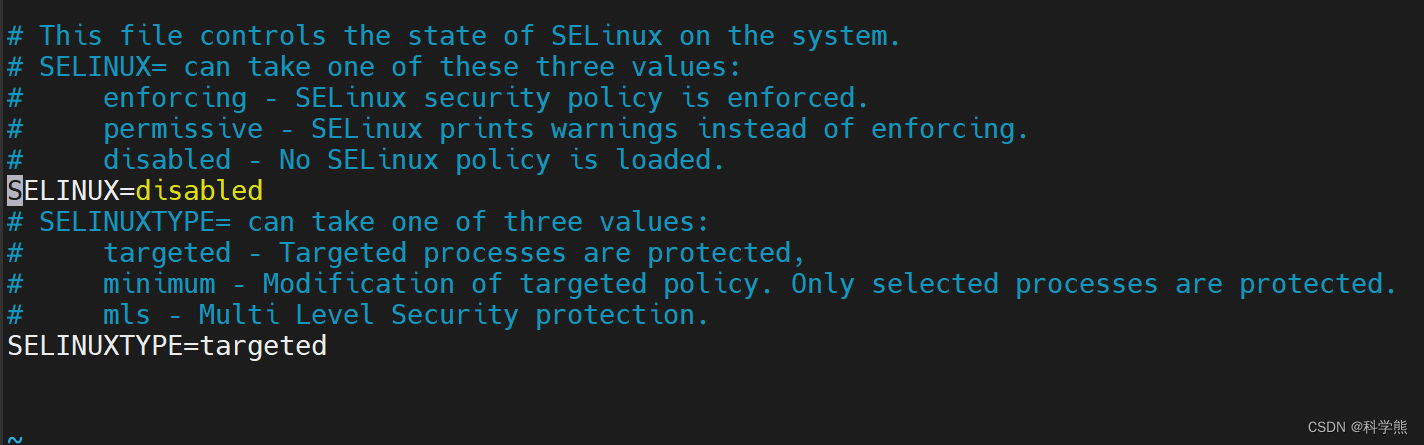

2.2 All nodes: disable SELinux

# Set SELinux to permissive mode (effectively disabling it)

sudo setenforce 0

sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

Alternatively, set SELINUX=disabled.

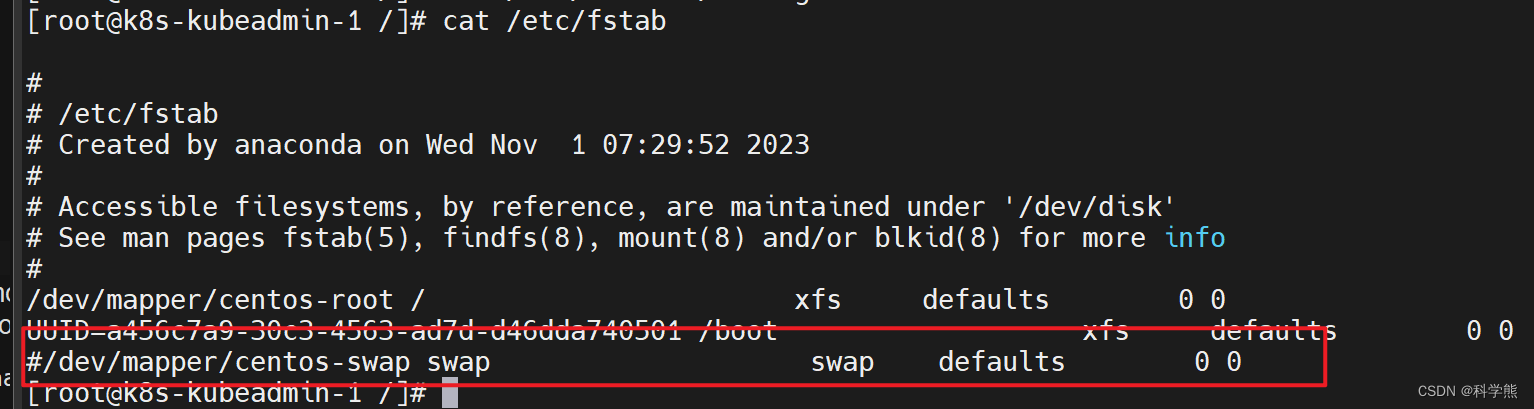

2.3 All nodes: disable swap

# To disable swap permanently, delete or comment out the swap mount line in /etc/fstab

nano /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0

Reboot:

reboot
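The fstab edit can be scripted as well. This is only a sketch under my own assumptions: `comment_out_swap` is a made-up helper, it relies on GNU sed (`-i`, `-E`), and it treats any uncommented line whose third field is `swap` as a swap entry.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
comment_out_swap() {
  local file="$1"
  # Skip lines that are already comments, then prefix "#" to lines of the form
  # "<device> <mountpoint> swap <options...>".
  sed -i -E '/^[[:space:]]*#/! s/^([^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' "$file"
}

# Usage (as root):
#   cp /etc/fstab /etc/fstab.bak     # keep a backup
#   comment_out_swap /etc/fstab
#   swapoff -a                       # turn swap off right away
```

With `swapoff -a` swap is disabled immediately, so the reboot is mainly a way to confirm that the fstab change sticks.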

2.4 All nodes: set up time synchronization

yum -y install ntp

systemctl start ntpd

systemctl enable ntpd

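2.5 All nodes: enable bridge-nf-call-iptables

In a Kubernetes environment, iptables and IPVS are both used to forward and load-balance network traffic, but they differ in how they work and in what they offer.

iptables is a tool built into Linux that filters and forwards traffic and supports NAT and other network functions. In Kubernetes, kube-proxy in iptables mode uses it to implement ClusterIP and NodePort Services. For a ClusterIP Service, rules added on each node forward traffic addressed to the Service IP to the IPs of the backend Pods. For a NodePort Service, rules added on every node forward traffic arriving on the host's NodePort to the Service IP.

IPVS (IP Virtual Server), by contrast, is a high-performance load balancer implemented in the Linux kernel. It processes traffic in kernel space, supports several load-balancing algorithms, and can provide session persistence. In Kubernetes, IPVS can implement Service load balancing; compared with iptables it offers higher performance and a wider choice of algorithms, so it copes better with heavy traffic and high concurrency. The IPVS proxy still uses iptables for packet filtering, SNAT, and masquerading.

In short, both iptables and IPVS are used in Kubernetes to forward and load-balance traffic. iptables covers ordinary Service load balancing, while IPVS is the better fit for high-performance, high-concurrency scenarios. Pick whichever suits your needs.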
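Once the cluster is up, you can check which of the two modes kube-proxy is actually using. The sketch below assumes the kubeadm default layout, where the kube-proxy configuration lives in the `kube-proxy` ConfigMap under the `config.conf` key; `kube_proxy_mode` is a helper name of my own. An empty `mode` means the Linux default, which is iptables.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read a KubeProxyConfiguration document on stdin
# and print the effective proxy mode.
kube_proxy_mode() {
  local mode
  # Pick the "mode:" key (not "detectLocalMode:") and strip optional quotes
  mode=$(sed -n -E 's/^[[:space:]]*mode:[[:space:]]*"?([a-zA-Z]*)"?[[:space:]]*$/\1/p' | head -n 1)
  # An empty or missing value falls back to iptables on Linux
  echo "${mode:-iptables}"
}

# Usage on the master:
#   kubectl -n kube-system get configmap kube-proxy \
#     -o jsonpath='{.data.config\.conf}' | kube_proxy_mode
```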

Run the following commands:

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF

sudo modprobe overlay

sudo modprobe br_netfilter

# Set the required sysctl parameters; they persist across reboots
cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF

# Apply the sysctl parameters without rebooting
sudo sysctl --system

Confirm that the `br_netfilter` and `overlay` modules are loaded by running:

lsmod | grep br_netfilter

lsmod | grep overlay

Confirm that the net.bridge.bridge-nf-call-iptables, net.bridge.bridge-nf-call-ip6tables, and net.ipv4.ip_forward system variables are set to 1 in your sysctl configuration by running:

sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward

[root@k8s-kubeadmin-1 /]# sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1
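With three nodes to check, it can help to let a script read that output. A small sketch, assuming the `key = value` format that sysctl prints above; `sysctls_not_enabled` is a name I picked for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "key = value" lines on stdin and print every key
# whose value is not 1. The exit status is non-zero if any such key is found.
sysctls_not_enabled() {
  awk -F' *= *' 'NF && $2 != "1" { print $1; bad = 1 } END { exit bad }'
}

# Usage on each node:
#   sysctl net.bridge.bridge-nf-call-iptables \
#          net.bridge.bridge-nf-call-ip6tables \
#          net.ipv4.ip_forward | sysctls_not_enabled && echo "all set"
```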

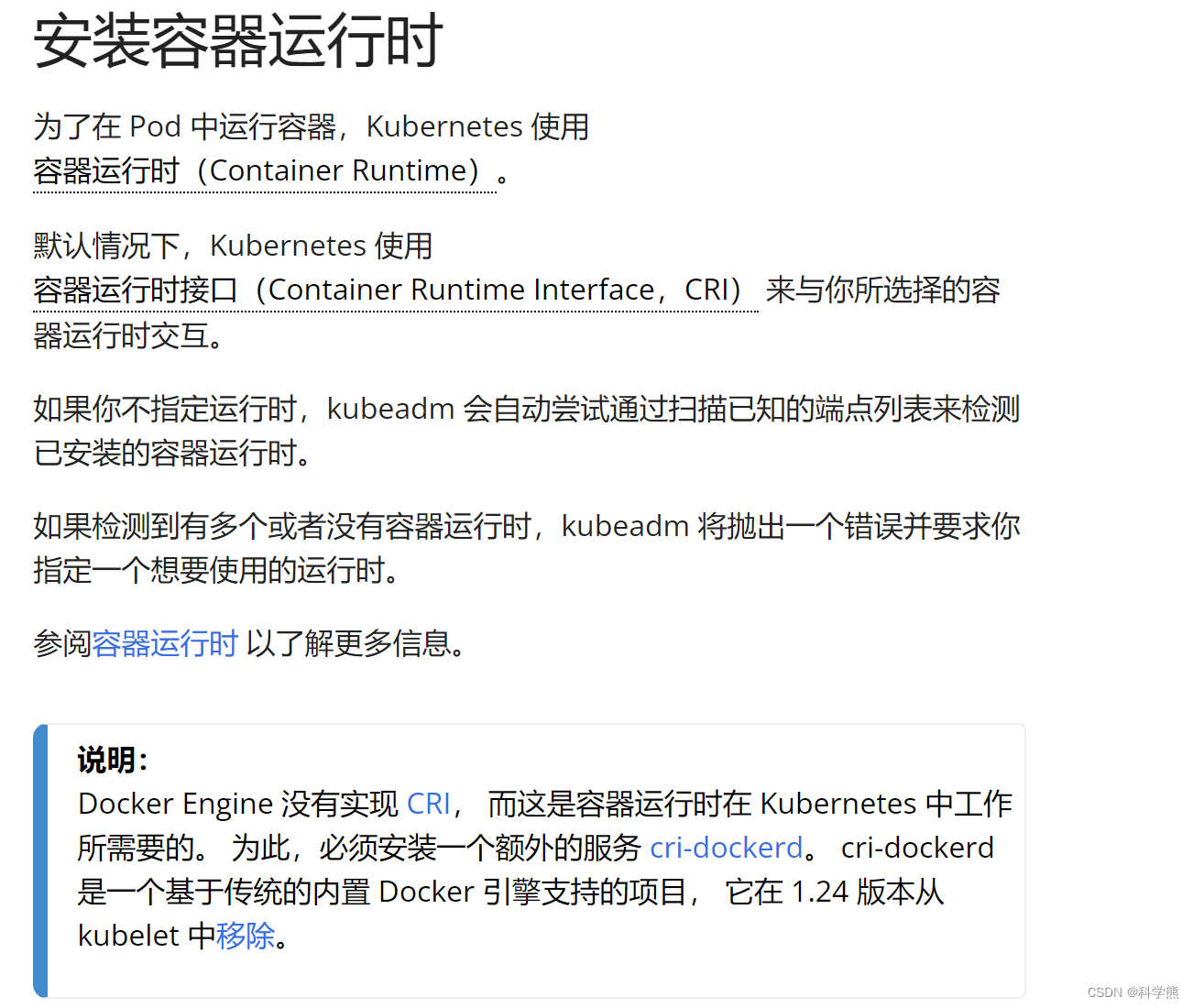

2.6 All nodes: install the containerd container runtime

Install containerd:

yum install -y yum-utils

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum -y install containerd.io

Generate the default config.toml:

containerd config default > /etc/containerd/config.toml

Configure the systemd cgroup driver:

Set it in /etc/containerd/config.toml:

sed -i 's/SystemdCgroup = false/SystemdCgroup = true/g' /etc/containerd/config.toml

Start containerd and enable it at boot:

systemctl restart containerd && systemctl enable containerd
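The sed substitution silently does nothing if the generated file does not contain the exact `SystemdCgroup = false` text, so a quick check afterwards is cheap insurance. A minimal sketch; `uses_systemd_cgroup` is a hypothetical helper, not part of containerd.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the given containerd config file
# enables the systemd cgroup driver.
uses_systemd_cgroup() {
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1"
}

# Usage on each node:
#   uses_systemd_cgroup /etc/containerd/config.toml \
#     && echo "systemd cgroup driver enabled" \
#     || echo "SystemdCgroup is not true, check config.toml"
```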

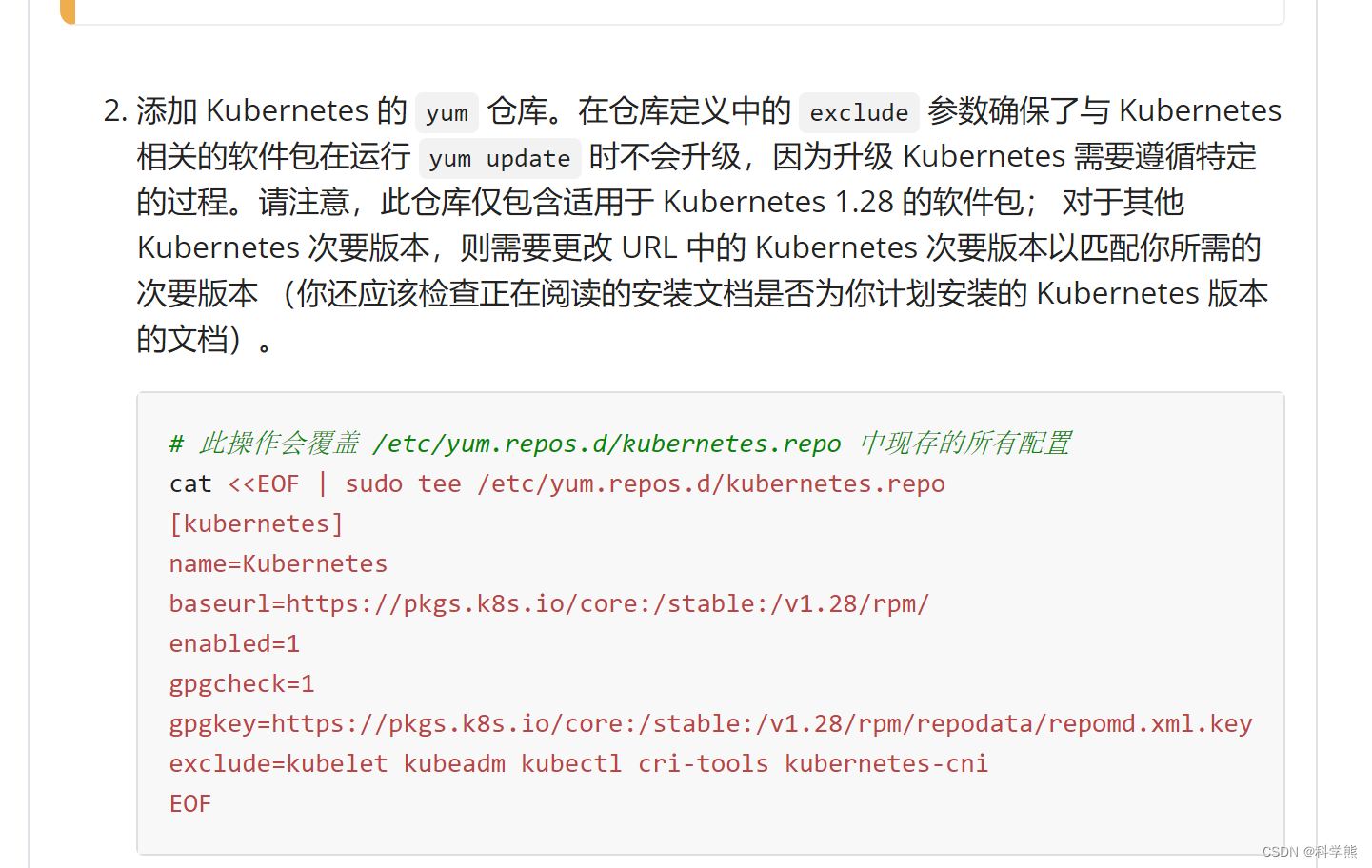

2.7 All nodes: configure the Aliyun yum repository for k8s

The official docs point to a yum repository hosted overseas, which can be slow or unreachable, so the Aliyun mirror is configured instead.

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name = Kubernetes
baseurl = https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled = 1
gpgcheck = 0
repo_gpgcheck = 0
gpgkey = https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

[root@k8s-kubeadmin-1 ~]# cd /etc/yum.repos.d

[root@k8s-kubeadmin-1 yum.repos.d]# ll

total 48

-rw-r--r--. 1 root root 1664 Nov 23 2020 CentOS-Base.repo

-rw-r--r--. 1 root root 1309 Nov 23 2020 CentOS-CR.repo

-rw-r--r--. 1 root root 649 Nov 23 2020 CentOS-Debuginfo.repo

-rw-r--r--. 1 root root 314 Nov 23 2020 CentOS-fasttrack.repo

-rw-r--r--. 1 root root 630 Nov 23 2020 CentOS-Media.repo

-rw-r--r--. 1 root root 1331 Nov 23 2020 CentOS-Sources.repo

-rw-r--r--. 1 root root 8515 Nov 23 2020 CentOS-Vault.repo

-rw-r--r--. 1 root root 616 Nov 23 2020 CentOS-x86_64-kernel.repo

-rw-r--r--. 1 root root 1919 Nov 21 03:56 docker-ce.repo

-rw-r--r-- 1 root root 287 Nov 29 00:54 kubernetes.repo

[root@k8s-kubeadmin-1 yum.repos.d]#

Install Docker:

sudo yum install -y yum-utils

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

sudo yum install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

Start it:

sudo systemctl start docker

Enable it at boot:

systemctl enable docker

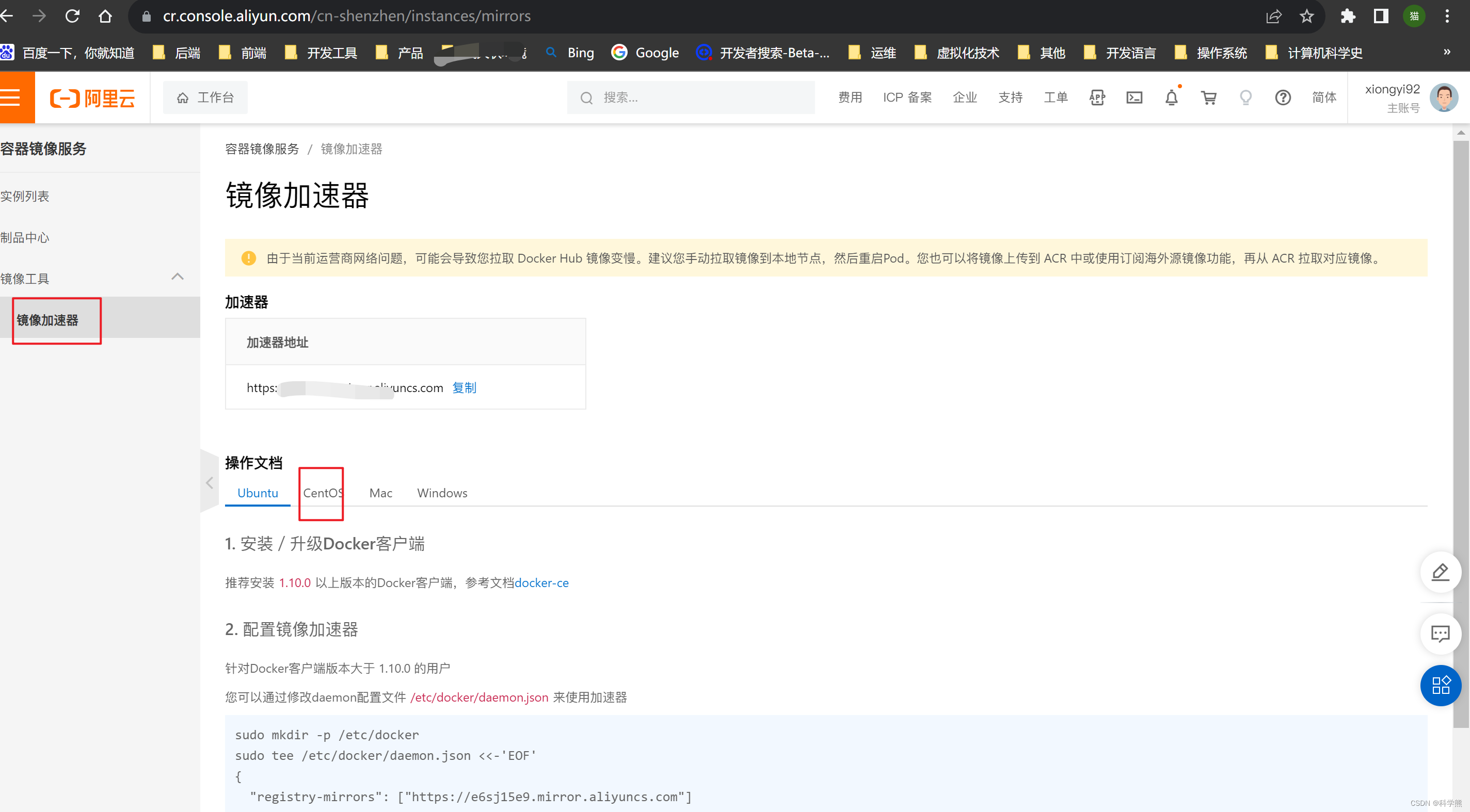

Configure the Aliyun registry mirror (accelerator):

The accelerator is enabled by editing the daemon configuration file /etc/docker/daemon.json:

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://e6sj15e9.mirror.aliyuncs.com"]
}
EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

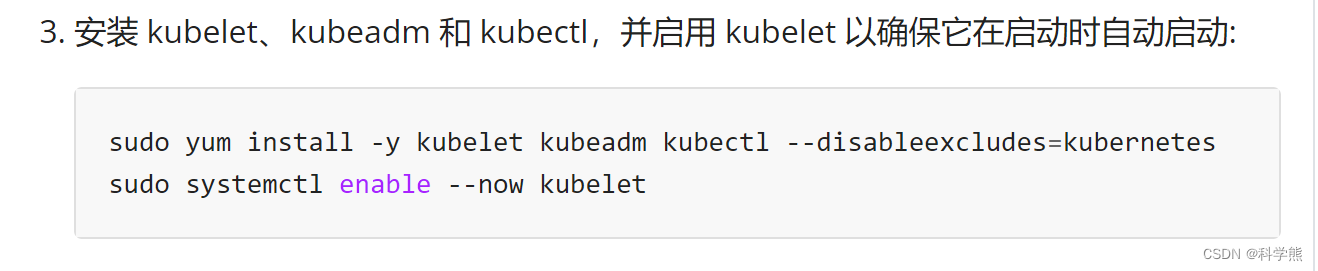

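2.8 All nodes: install kubeadm, kubelet, and kubectl with yum

This is the installation procedure from the official site:

Remove earlier installations: if these packages were installed before, uninstall them so they can be reinstalled cleanly.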

yum -y remove kubelet kubeadm kubectl

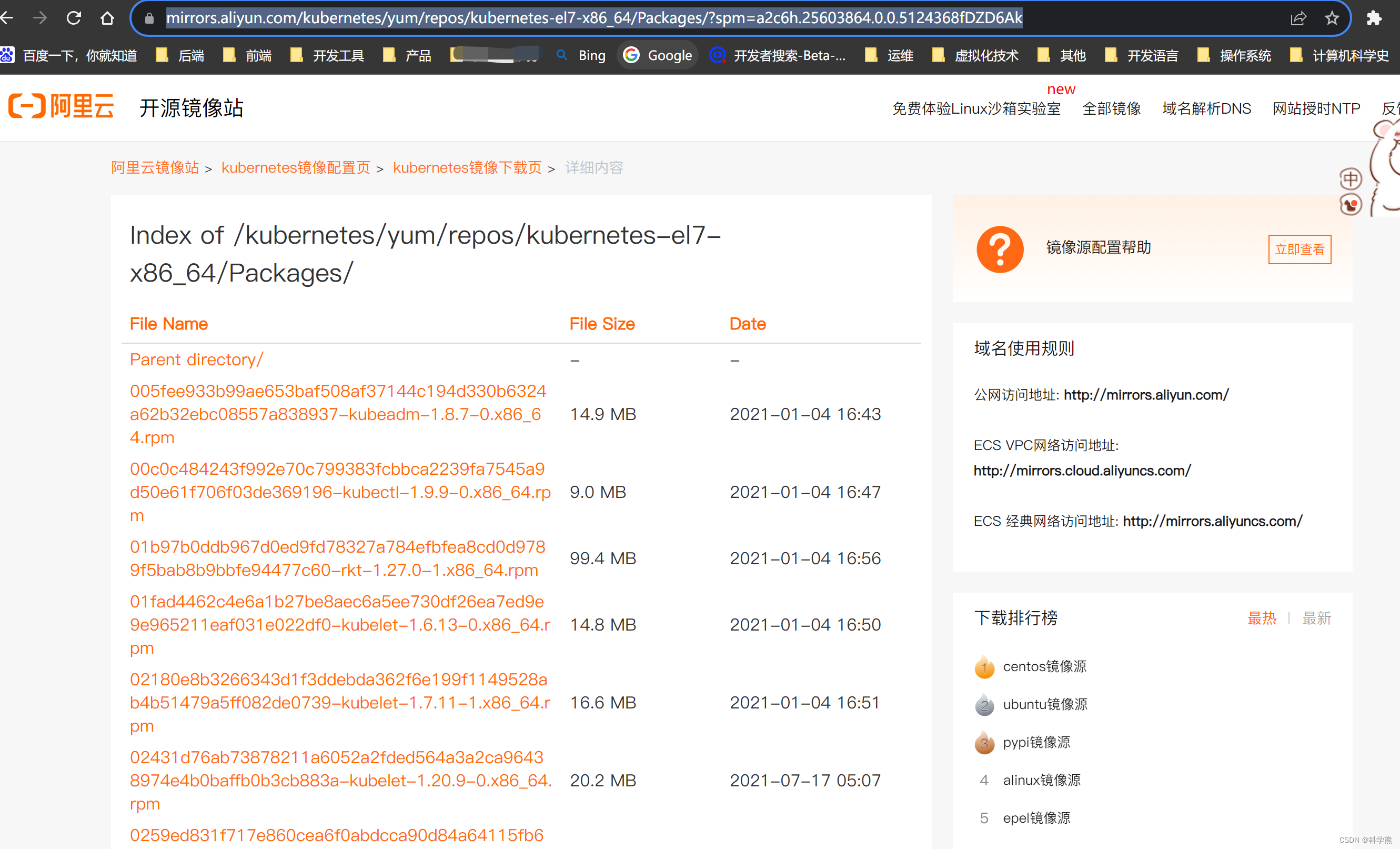

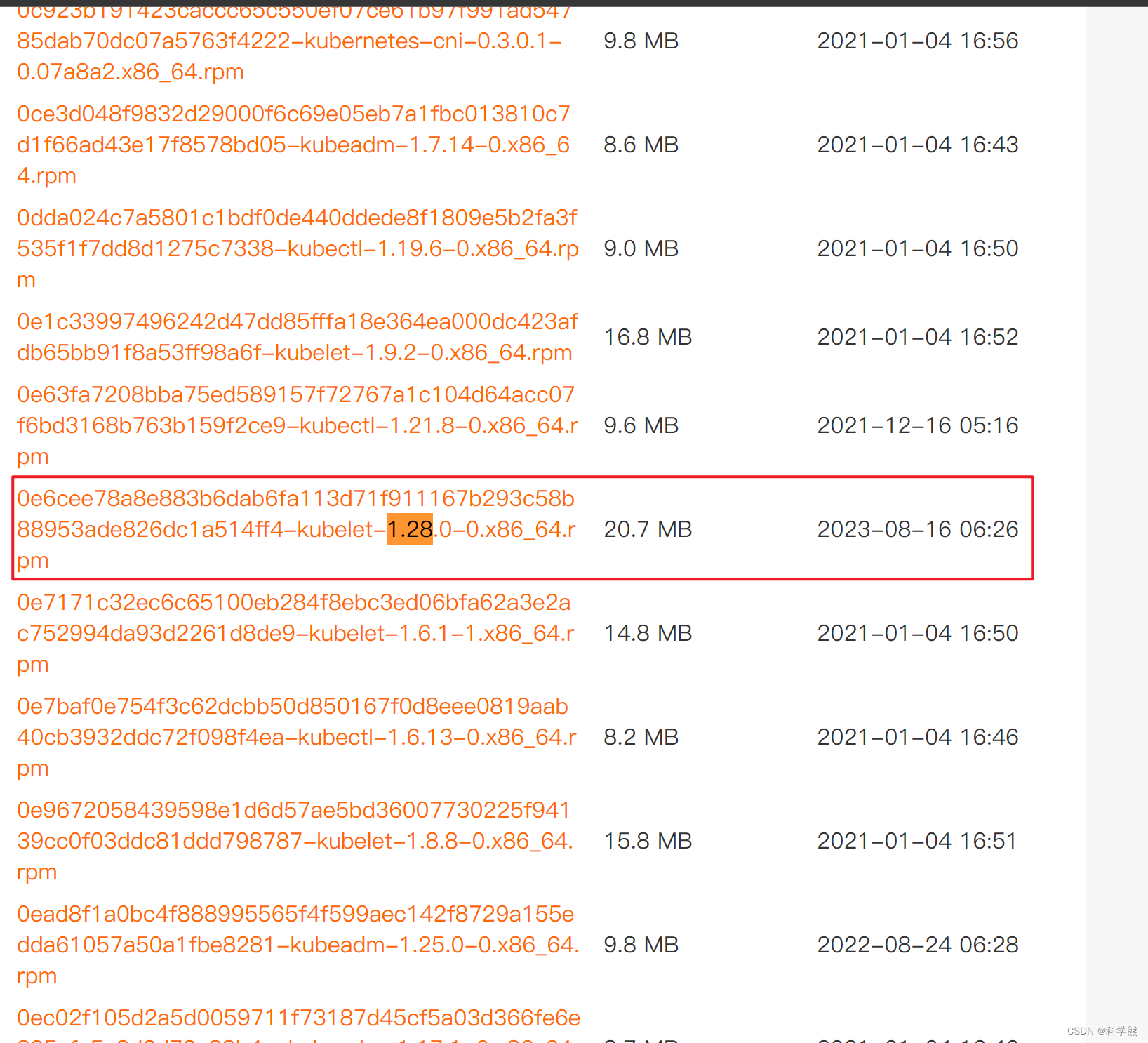

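Browse the packages available on the Aliyun mirror:

https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

Version 1.28.0, updated on 2023-08-16, is fairly recent, so that is the one used here. Pin the version number when installing: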

yum install -y kubelet-1.28.0 kubeadm-1.28.0 kubectl-1.28.0 --disableexcludes=kubernetes

systemctl enable kubelet

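2.9 Check the required images

The images need to be prepared in advance.

You could use custom images, among other options. My approach was to look up which images the Aliyun mirror carries and retag them with the names kubeadm expects.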

kubeadm config images list

[root@k8s-kubeadmin-1 yum.repos.d]# kubeadm config images list

registry.k8s.io/kube-apiserver:v1.28.4

registry.k8s.io/kube-controller-manager:v1.28.4

registry.k8s.io/kube-scheduler:v1.28.4

registry.k8s.io/kube-proxy:v1.28.4

registry.k8s.io/pause:3.9

registry.k8s.io/etcd:3.5.9-0

registry.k8s.io/coredns/coredns:v1.10.1

These images cannot be found through the Aliyun accelerator under their registry.k8s.io names:

[root@k8s-kubeadmin-1 yum.repos.d]# docker search registry.k8s.io/kube-apiserver:v1.28.4

Error response from daemon: Unexpected status code 404

So the kubeadm init command below would most likely fail.

The fix is to pull the images from Aliyun first, then retag them with the names kubeadm expects.

docker tag registry.aliyuncs.com/google_containers/kube-apiserver:v1.28.0 registry.k8s.io/kube-apiserver:v1.28.4

docker tag registry.aliyuncs.com/google_containers/kube-controller-manager:v1.28.0 registry.k8s.io/kube-controller-manager:v1.28.4

docker tag registry.aliyuncs.com/google_containers/kube-scheduler:v1.28.0 registry.k8s.io/kube-scheduler:v1.28.4

docker tag registry.aliyuncs.com/google_containers/kube-proxy:v1.28.0 registry.k8s.io/kube-proxy:v1.28.4

docker tag registry.aliyuncs.com/google_containers/etcd:3.5.9-0 registry.k8s.io/etcd:3.5.9-0

docker tag registry.aliyuncs.com/google_containers/coredns:v1.10.1 registry.k8s.io/coredns/coredns:v1.10.1

docker tag registry.aliyuncs.com/google_containers/pause:3.9 registry.k8s.io/pause:3.6
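The pull-and-retag steps are repetitive enough to generate from the `kubeadm config images list` output. The sketch below is one way to do it, not the official procedure: `mirror_name` and `retag_commands` are names I made up, and the mapping assumes the mirror keeps every image directly under `google_containers` (which is why `coredns/coredns` becomes `coredns`, as in the tag commands above). It only prints the docker commands.

```shell
#!/usr/bin/env bash
# Mirror repository used throughout this post
MIRROR="registry.aliyuncs.com/google_containers"

# Hypothetical helper: map an upstream image reference to its name on the mirror.
mirror_name() {
  local image="$1"
  # Keep only "name:tag", dropping the registry and any intermediate path
  echo "$MIRROR/${image##*/}"
}

# Hypothetical helper: for each image listed on stdin, print the
# docker pull and docker tag commands.
retag_commands() {
  local image
  while IFS= read -r image; do
    [ -n "$image" ] || continue
    echo "docker pull $(mirror_name "$image")"
    echo "docker tag $(mirror_name "$image") $image"
  done
}

# Usage (review the output, then pipe it to sh):
#   kubeadm config images list --kubernetes-version v1.28.0 | retag_commands
```

Passing `--kubernetes-version v1.28.0` makes kubeadm list the v1.28.0 tags, so the retagged images keep the version they actually contain.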

[root@k8s-kubeadmin-1 yum.repos.d]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

flannel/flannel v0.22.3 e23f7ca36333 2 months ago 70.2MB

registry.aliyuncs.com/google_containers/kube-apiserver v1.28.0 bb5e0dde9054 3 months ago 126MB

registry.k8s.io/kube-apiserver v1.28.4 bb5e0dde9054 3 months ago 126MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.28.0 f6f496300a2a 3 months ago 60.1MB

registry.k8s.io/kube-scheduler v1.28.4 f6f496300a2a 3 months ago 60.1MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.28.0 4be79c38a4ba 3 months ago 122MB

registry.k8s.io/kube-controller-manager v1.28.4 4be79c38a4ba 3 months ago 122MB

registry.aliyuncs.com/google_containers/kube-proxy v1.28.0 ea1030da44aa 3 months ago 73.1MB

registry.k8s.io/kube-proxy v1.28.4 ea1030da44aa 3 months ago 73.1MB

flannel/flannel-cni-plugin v1.2.0 a55d1bad692b 4 months ago 8.04MB

registry.aliyuncs.com/google_containers/etcd 3.5.9-0 73deb9a3f702 6 months ago 294MB

registry.k8s.io/etcd 3.5.9-0 73deb9a3f702 6 months ago 294MB

registry.k8s.io/coredns/coredns v1.10.1 ead0a4a53df8 9 months ago 53.6MB

registry.aliyuncs.com/google_containers/coredns v1.10.1 ead0a4a53df8 9 months ago 53.6MB

registry.aliyuncs.com/google_containers/pause 3.9 e6f181688397 13 months ago 744kB

registry.k8s.io/pause 3.6 e6f181688397 13 months ago 744kB

registry.k8s.io/pause 3.9 e6f181688397 13 months ago 744kB

kubernetesui/dashboard latest 07655ddf2eeb 14 months ago 246MB

kubernetesui/dashboard v2.7.0 07655ddf2eeb 14 months ago 246MB

kubernetesui/metrics-scraper latest 421615ce8dbd 2 years ago 34.4MB

kubernetesui/metrics-scraper v1.0.8 421615ce8dbd 2 years ago 34.4MB

registry.aliyuncs.com/google_containers/kube-proxy v1.17.4 6dec7cfde1e5 3 years ago 116MB

registry.aliyuncs.com/google_containers/kube-apiserver v1.17.4 2e1ba57fe95a 3 years ago 171MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.17.4 7f997fcf3e94 3 years ago 161MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.17.4 5db16c1c7aff 3 years ago 94.4MB

registry.aliyuncs.com/google_containers/coredns 1.6.5 70f311871ae1 4 years ago 41.6MB

registry.aliyuncs.com/google_containers/etcd 3.4.3-0 303ce5db0e90 4 years ago 288MB

registry.aliyuncs.com/google_containers/pause 3.1 da86e6ba6ca1 5 years ago 742kB

kubernetes/pause latest f9d5de079539 9 years ago 240kB

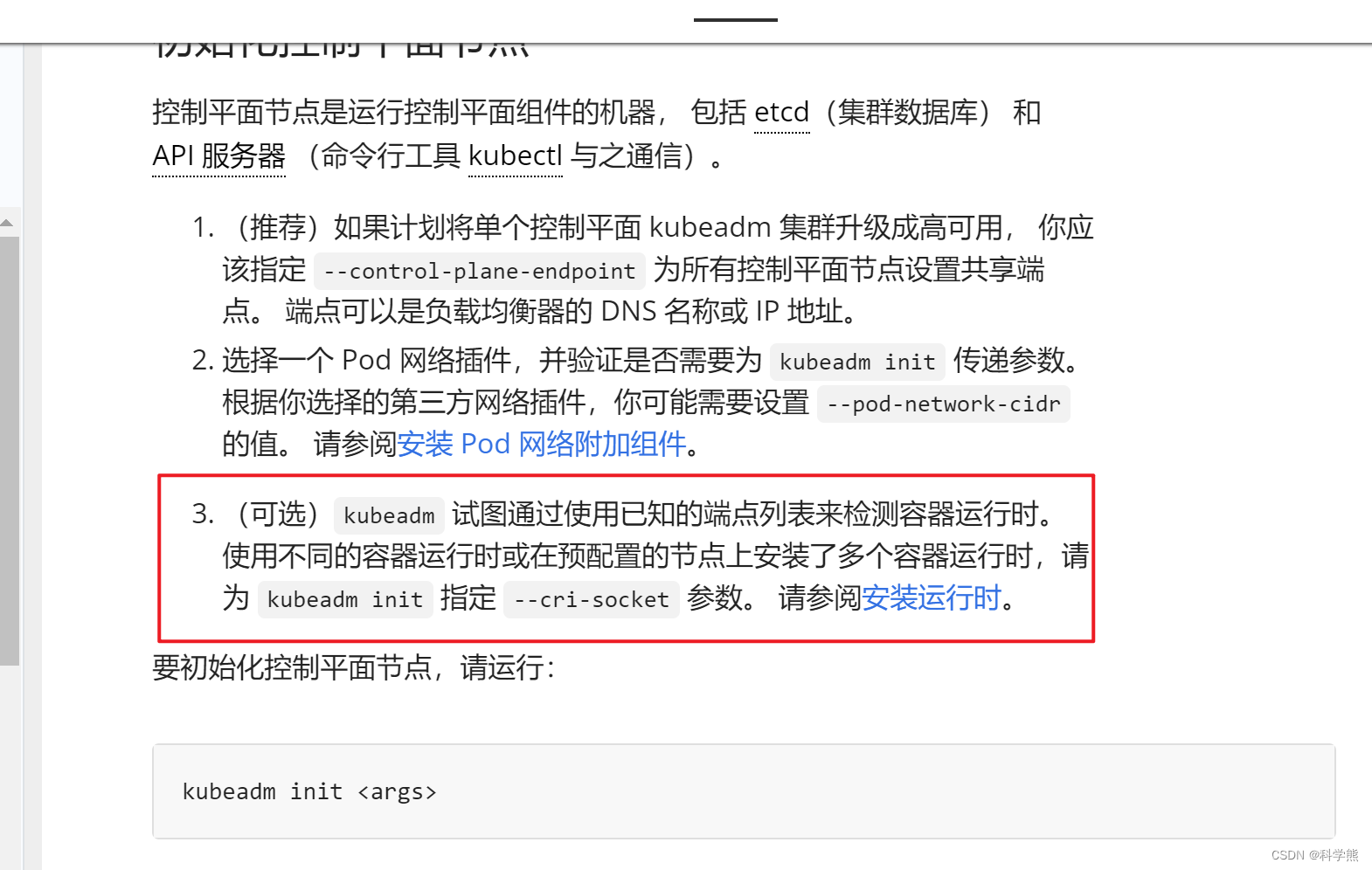

2.10 On k8s-kubeadmin-1: initialize the master

kubeadm init \
--apiserver-advertise-address=192.168.213.9 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.28.0 \
--service-cidr=10.96.0.0/12 \
--pod-network-cidr=10.244.0.0/16 \
--cri-socket=unix:///var/run/cri-dockerd.sock \
--v=5

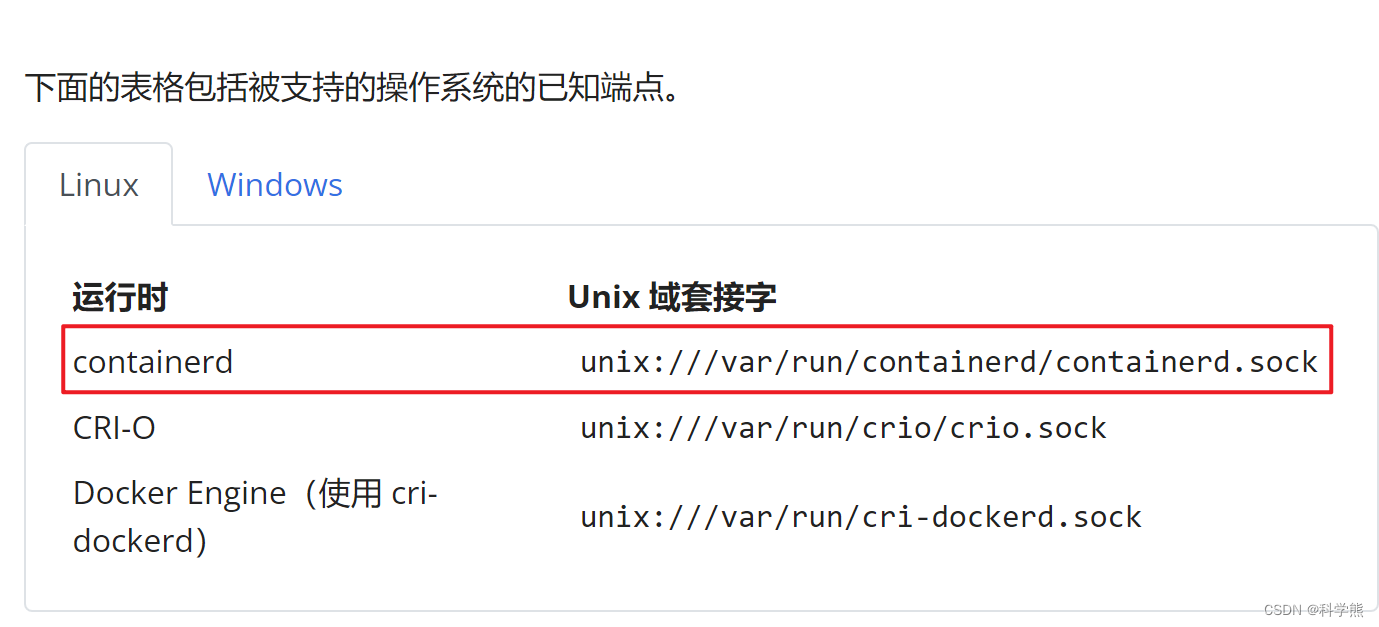

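Because both containerd and Docker Engine (via cri-dockerd) are installed as container runtimes, the cri-socket parameter must be specified. The cri-dockerd.sock socket only exists once cri-dockerd itself is installed and running.

If the installation fails partway and you need to start over, first reset the state that kubeadm created:

kubeadm reset --cri-socket=unix:///var/run/cri-dockerd.sock

The reset does not reset or clean up iptables rules or IPVS tables. To reset iptables, you have to do it manually:

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

To reset the IPVS tables, run: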

ipvsadm -C

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.213.9:6443 --token askdfkjsdfkljkldffj \
	--discovery-token-ca-cert-hash sha256:kjlksjdfkasdkjflksdfljdfkdf

Then follow the prompts:

To let a non-root user run kubectl, run the following commands, which are also part of the kubeadm init output:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

Now run:

kubectl get node

[root@k8s-kubeadmin-1 yum.repos.d]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-kubeadmin-1 NotReady control-plane 4h31m v1.28.0

Join the worker nodes to k8s-kubeadmin-1:

Format:

kubeadm join --token <token> <control-plane-host>:<control-plane-port> --discovery-token-ca-cert-hash sha256:<hash>

kubeadm join 192.168.213.9:6443 --token s5inwf.17rdxvhjalwyzj92 \
--discovery-token-ca-cert-hash sha256:ce85d2ceaea7311ac3e58ee355d34ee9235702e3415d43b84f78da682210ee09 \
--cri-socket=unix:///var/run/cri-dockerd.sock --v=5

The token may have expired:

On k8s-kubeadmin-1, create a new token:

kubeadm token create

It prints something like:

5didvk.d09sbcov8ph2amjw

If you don't have the value of --discovery-token-ca-cert-hash, you can get it by running the following command chain on the control-plane node:

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | \
openssl dgst -sha256 -hex | sed 's/^.* //'

The output is similar to:

8cb2de97839780a412b93877f8507ad6c94f73add17d5d7058e91741c9d5ec78
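Putting the token and the hash back together by hand is error-prone, so here is a small sketch that assembles the join command. `ca_cert_hash` wraps the same openssl chain shown above and `join_command` just formats the pieces; both names are mine. If you only need a fresh, complete command, `kubeadm token create --print-join-command` on the control-plane node prints one directly.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compute the discovery hash from a CA certificate file,
# using the same openssl chain as above.
ca_cert_hash() {
  openssl x509 -pubkey -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Hypothetical helper: assemble a kubeadm join command from its parts.
join_command() {
  local endpoint="$1" token="$2" hash="$3"
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$hash"
}

# Usage on the control-plane node:
#   join_command 192.168.213.9:6443 "$(kubeadm token create)" \
#     "$(ca_cert_hash /etc/kubernetes/pki/ca.crt)"
```

In this setup, remember to append `--cri-socket=unix:///var/run/cri-dockerd.sock` before running the printed command on a worker.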

Then run:

kubectl get node

[root@k8s-kubeadmin-1 yum.repos.d]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-kubeadmin-1 NotReady control-plane 4h31m v1.28.0

k8s-kubeadmin-2 NotReady <none> 4h7m v1.28.0

k8s-kubeadmin-3 NotReady <none> 4h7m v1.28.0

The nodes stay NotReady until a Pod network add-on is installed.

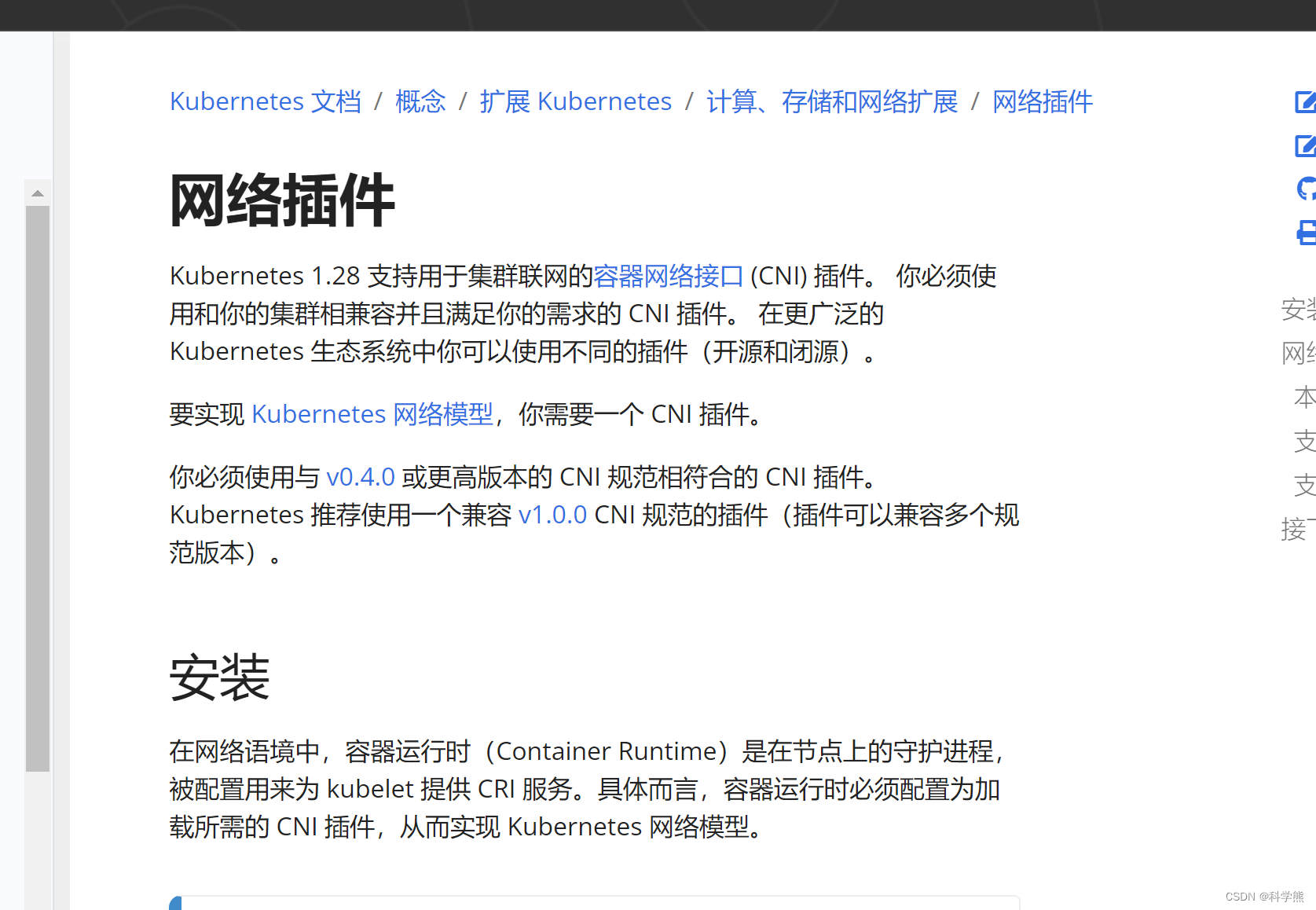

3.0 On k8s-kubeadmin-1: install the Pod network add-on, Container Network Interface (CNI)

Download and install:

wget https://github.com/containernetworking/plugins/releases/download/v1.3.0/cni-plugins-linux-amd64-v1.3.0.tgz

mkdir -pv /opt/cni/bin

tar zxvf cni-plugins-linux-amd64-v1.3.0.tgz -C /opt/cni/bin/

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Run kubectl get node again:

[root@k8s-kubeadmin-1 yum.repos.d]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-kubeadmin-1 Ready control-plane 4h31m v1.28.0

k8s-kubeadmin-2 Ready <none> 4h7m v1.28.0

k8s-kubeadmin-3 Ready <none> 4h7m v1.28.0
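The nodes usually take a little while to flip to Ready after flannel is applied. A short sketch for spotting the stragglers; `not_ready_nodes` is a hypothetical helper that reads the plain `kubectl get node --no-headers` output. Note that it also reports cordoned nodes, whose status reads `Ready,SchedulingDisabled`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get node --no-headers` output on stdin
# and print the names of nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk 'NF && $2 != "Ready" { print $1 }'
}

# Usage on the master:
#   kubectl get node --no-headers | not_ready_nodes
#
# To block until every node is Ready instead of polling by hand:
#   kubectl wait --for=condition=Ready node --all --timeout=300s
```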

Check the status of the pods in the kube-system namespace:

[root@k8s-kubeadmin-1 yum.repos.d]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-66f779496c-9tqbt 1/1 Running 0 4h42m

coredns-66f779496c-wzvts 1/1 Running 0 4h42m

dashboard-metrics-scraper-5657497c4c-v2dn4 1/1 Running 0 3h

etcd-k8s-kubeadmin-1 1/1 Running 0 4h42m

kube-apiserver-k8s-kubeadmin-1 1/1 Running 0 4h42m

kube-controller-manager-k8s-kubeadmin-1 1/1 Running 0 4h42m

kube-proxy-bwksp 1/1 Running 0 4h19m

kube-proxy-gdd49 1/1 Running 0 4h42m

kube-proxy-svj87 1/1 Running 0 4h18m

kube-scheduler-k8s-kubeadmin-1 1/1 Running 0 4h42m

kubernetes-dashboard-76f4b5bc7d-gjm79 0/1 CrashLoopBackOff 26 (4m14s ago) 124m

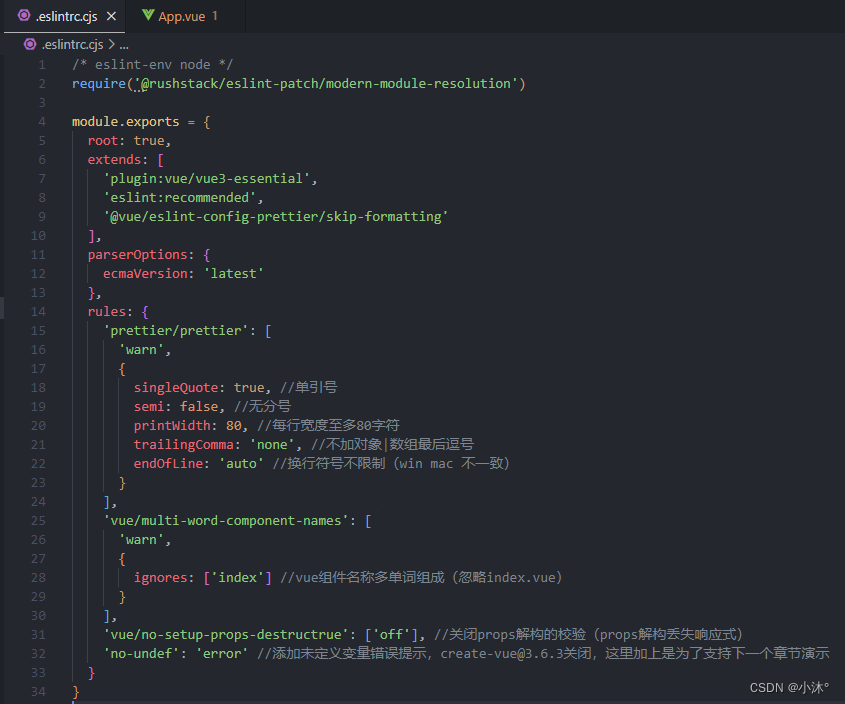

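3.1 Install kubernetes-dashboard

Apply the kubernetes-dashboard resource manifest (a YAML file):

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

Without some way around network restrictions, the download may be slow or fail. The file I downloaded is reproduced below and can be copied:

It uses two images, kubernetesui/dashboard:v2.7.0 and kubernetesui/metrics-scraper:v1.0.8, which the Aliyun accelerator does not carry. Look for equivalents that the accelerator does have, then retag them with the names the manifest needs.

Pull them on all three machines.

The file needs changes in a few places; the Service becomes: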

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
      name: https # name is not in the original file
      nodePort: 32001 # nodePort is not in the original file
  type: NodePort # type is not in the original file
  selector:
    k8s-app: kubernetes-dashboard
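If the dashboard was already applied from the unmodified manifest, the same change can be made with a patch instead of editing the file. This is a sketch under my own assumptions: `valid_nodeport` and `nodeport_patch` are made-up helpers, the range check uses the default NodePort range of 30000-32767, and the patch is a JSON merge patch, which replaces the whole `ports` list.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the argument is a number inside the
# default NodePort range (30000-32767).
valid_nodeport() {
  case "$1" in (''|*[!0-9]*) return 1 ;; esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Hypothetical helper: print a JSON merge patch that switches the dashboard
# Service to type NodePort on the given port.
nodeport_patch() {
  valid_nodeport "$1" || { echo "invalid nodePort: $1" >&2; return 1; }
  printf '{"spec":{"type":"NodePort","ports":[{"name":"https","port":443,"targetPort":8443,"nodePort":%s}]}}\n' "$1"
}

# Usage on the master:
#   kubectl -n kubernetes-dashboard patch svc kubernetes-dashboard \
#     --type merge -p "$(nodeport_patch 32001)"
```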

Original file:

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard

---

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque

---

kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard

---

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]

---

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.7.0
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.8
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

Deploy:

kubectl apply -f [your local path]/recommended.yaml

Create dashboard-adminuser.yaml locally:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

kubectl apply -f [your file path]/dashboard-adminuser.yaml

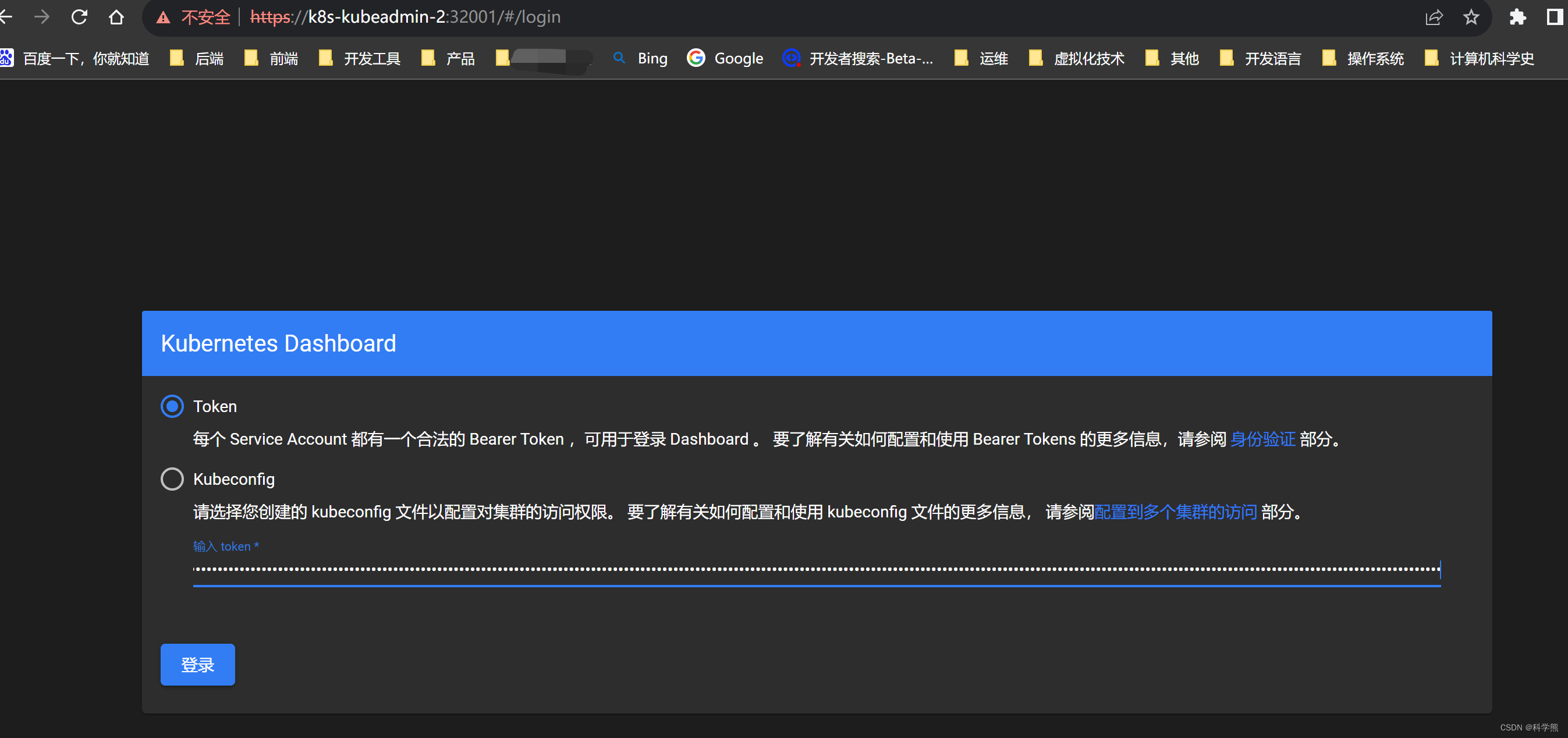

kubectl -n kubernetes-dashboard create token admin-user

Save the token it prints; you will need it to log in later.

List the pods in all namespaces:

[root@k8s-kubeadmin-1 yum.repos.d]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-5r52b 1/1 Running 0 4h35m

kube-flannel kube-flannel-ds-9jvk4 1/1 Running 0 4h35m

kube-flannel kube-flannel-ds-jbc85 1/1 Running 0 4h35m

kube-system coredns-66f779496c-9tqbt 1/1 Running 0 5h8m

kube-system coredns-66f779496c-wzvts 1/1 Running 0 5h8m

kube-system dashboard-metrics-scraper-5657497c4c-v2dn4 1/1 Running 0 3h27m

kube-system etcd-k8s-kubeadmin-1 1/1 Running 0 5h9m

kube-system kube-apiserver-k8s-kubeadmin-1 1/1 Running 0 5h9m

kube-system kube-controller-manager-k8s-kubeadmin-1 1/1 Running 0 5h9m

kube-system kube-proxy-bwksp 1/1 Running 0 4h45m

kube-system kube-proxy-gdd49 1/1 Running 0 5h8m

kube-system kube-proxy-svj87 1/1 Running 0 4h45m

kube-system kube-scheduler-k8s-kubeadmin-1 1/1 Running 0 5h9m

kube-system kubernetes-dashboard-76f4b5bc7d-gjm79 0/1 CrashLoopBackOff 30 (7m54s ago) 150m

kubernetes-dashboard dashboard-metrics-scraper-5657497c4c-mk9hk 1/1 Running 0 4h28m

kubernetes-dashboard kubernetes-dashboard-78f87ddfc-v6l57 1/1 Running 0 4h28m

List the Services in all namespaces; the dashboard Service is of type NodePort (exposed outside the cluster):

kubectl get svc --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 5h10m

kube-system kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 5h10m

kubernetes-dashboard dashboard-metrics-scraper ClusterIP 10.109.201.223 <none> 8000/TCP 160m

kubernetes-dashboard kubernetes-dashboard NodePort 10.105.61.238 <none> 443:32001/TCP 157m

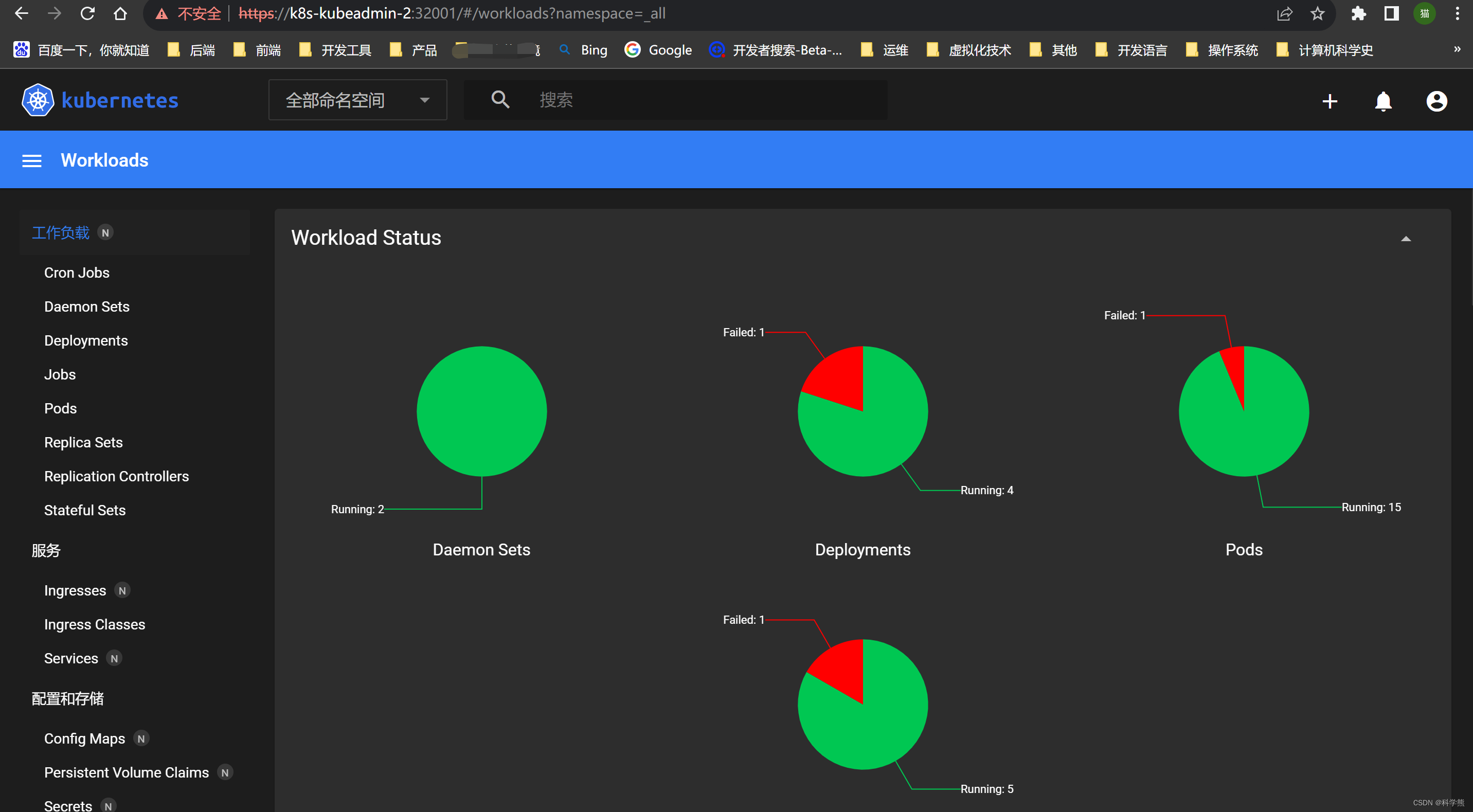

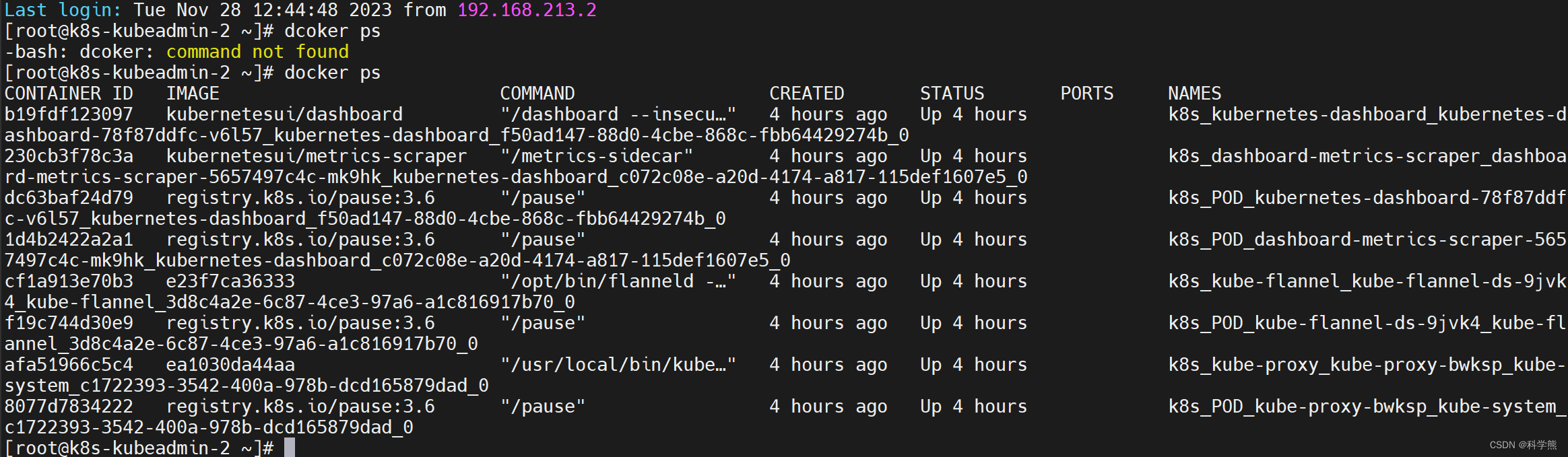

kubernetes-dashboard was scheduled onto the k8s-kubeadmin-2 node.

Access it at: https://k8s-kubeadmin-2:32001/