Original article: GPT function calling v2 - Zhihu

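OpenAI held its first developer conference, OpenAI DevDay, on November 6, 2023. It introduced many interesting new features and applications and shipped an update to the GPT API. API calls from version 1.0 onward differ considerably from the earlier 0.x versions, especially for function calling, one of GPT's most important features, so this article walks through how to implement function calling with the newly released API. Although the latest API documentation renames function calling to tool calling, the two differ little, so this article keeps using the term function calling.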

For the API before version 1.0, see my earlier article, which covers the basic principles, workflow, and simple applications of function calling:

间断的连续: GPT function calling

1. OpenAI API basics

Taking the chat API, the most commonly used OpenAI API, as an example, the following snippet shows how to hold a simple exchange with the new API:

    import json
    import os

    import loguru
    from openai import OpenAI

    # Load the API key and model name from a JSON configuration file
    CONFIG_FILE = "configs/config.json"
    API_KEY_TERM = "openai_api_key"
    MODEL_TERM = "openai_chat_model"

    try:
        with open(CONFIG_FILE) as f:
            configs = json.load(f)
        API_KEY = configs[API_KEY_TERM]
        MODEL = configs[MODEL_TERM]
    except FileNotFoundError:
        loguru.logger.error(f"Configuration file {CONFIG_FILE} not found")
        # Fall back to environment variables so the names below are always defined
        API_KEY = os.environ.get("OPENAI_API_KEY")
        MODEL = "gpt-3.5-turbo-1106"

    # Create a new OpenAI client object
    client = OpenAI(api_key=API_KEY)

    # Get the response from OpenAI with a system and a user prompt
    response = client.chat.completions.create(
        model=MODEL,
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "Introduce yourself."},
        ],
    )

    # Extract the response message
    print(response.choices[0].message.content)

The API key and chat model can be read from a JSON configuration file or from environment variables (which one is entirely a matter of personal habit). We then create an OpenAI client object and call chat.completions.create on it to send messages to OpenAI. Once the reply arrives, response.choices[0].message.content extracts its text. Running the script in a terminal prints the model's reply.

2. Function calling

2.1. Function calling recap

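Put simply, function calling is what lets GPT, or large language models in general, use external tools. In an API call, the user describes function declarations to gpt-3.5 or gpt-4, including each function's name and the parameters it requires, and the model decides on its own whether to output a JSON object that names a function to call and supplies its arguments. The model does not run anything itself; the user's code takes the generated JSON and uses it to call the function.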

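In other words, GPT cannot directly access external data sources or tools, but it can tell from context when an external resource is needed and can generate output in the format an API call requires (usually JSON). User code can then combine that output with the declared functions to carry out a task automatically, such as writing an email, searching the web, or looking up the current weather.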
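The division of labor described above can be sketched without calling the API at all. In the minimal example below, `model_output` is a hand-written stand-in for what the model would return, and `get_weather` is a hypothetical local function; neither comes from the OpenAI library.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical local tool; a real one would query a weather service."""
    return f"It is sunny in {city}."


# Map the names the model may emit to the Python callables that implement them.
available_tools = {"get_weather": get_weather}

# Hand-written stand-in for the model's output: a function name plus a
# JSON-encoded string of arguments. The model only produces this description.
model_output = {"name": "get_weather", "arguments": '{"city": "Beijing"}'}

# Our own code, not the model, parses the arguments and runs the function.
function_to_call = available_tools[model_output["name"]]
function_args = json.loads(model_output["arguments"])
result = function_to_call(**function_args)
print(result)
```

The point of the sketch is that the model's contribution ends at the name and the argument string; everything after `json.loads` is ordinary application code.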

2.2. Single function calling

A simple task can be handled with a single function call, for example listing the files in a folder, as follows.

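First we need a function that performs the file lookup. One implementation detail: the returned `files` must be converted to a string, because the content of the tool message sent back to GPT has to be text. Data structures such as lists, arrays, and dictionaries cannot be passed as they are.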

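    def list_files_in_directory(directory: str) -> str:
        """Return the directory listing as a string, since tool results must be text."""
        try:
            files = os.listdir(directory)
            return str(files) if files else "The directory is empty."
        except FileNotFoundError:
            # Return the error as text as well, so that the model can read it
            return f"Directory '{directory}' not found"

Next, describe the function's name, purpose, and required parameters in JSON format: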

    tools = [
        {
            "type": "function",
            "function": {
                "name": "list_files_in_directory",
                "description": "List all files in a directory",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "directory": {
                            "type": "string",
                            "description": "The name of directory to list files in",
                        },
                    },
                    "required": ["directory"],
                },
            },
        }
    ]

Note that if a function takes no parameters and returns nothing, the declaration can be written as follows:

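    tools = [
        {
            "type": "function",
            "function": {
                "name": "YOUR FUNCTION NAME",
                "description": "YOUR FUNCTION DESCRIPTION",
                "parameters": {"type": "object", "properties": {}},
            },
        },
    ]

A function call with no parameters and no return value can serve as a kind of soft conditional: it can be used to detect whether a particular intent has been triggered, such as ending the conversation or greeting.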
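As a rough illustration of this intent-detection idea, the sketch below declares a parameterless tool and checks whether a reply asks for it. The tool name `end_conversation`, the helper `intent_triggered`, and the two hand-built messages are all hypothetical; the messages only imitate the shape of `response.choices[0].message`.

```python
from types import SimpleNamespace

# Hypothetical parameterless tool used purely as an intent flag.
END_CONVERSATION_TOOL = {
    "type": "function",
    "function": {
        "name": "end_conversation",
        "description": "Call this when the user wants to end the conversation",
        "parameters": {"type": "object", "properties": {}},
    },
}


def intent_triggered(message, intent_name: str) -> bool:
    """Return True if the assistant message requests the tool named intent_name."""
    tool_calls = getattr(message, "tool_calls", None) or []
    return any(call.function.name == intent_name for call in tool_calls)


# Stand-ins shaped like response.choices[0].message, built by hand for the demo.
goodbye_call = SimpleNamespace(
    function=SimpleNamespace(name="end_conversation", arguments="{}")
)
goodbye_msg = SimpleNamespace(content=None, tool_calls=[goodbye_call])
chat_msg = SimpleNamespace(content="Hello! How can I help?", tool_calls=None)

print(intent_triggered(goodbye_msg, "end_conversation"))
print(intent_triggered(chat_msg, "end_conversation"))
```

In a real application, `END_CONVERSATION_TOOL` would be passed in the `tools` list and the check would run on the actual reply.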

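With the function implemented and its JSON declaration defined, GPT can generate the right arguments when it requests a call, and we can now invoke the GPT API to use the tool.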

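    messages = [
        {
            "role": "system",
            "content": "You are a friendly chatbot that can use external tools to offer reliable assistance to human beings.",
        },
        {"role": "user", "content": "List all the files in a directory in '../tools'."},
    ]

    # First request: the model decides whether a tool is needed
    response = client.chat.completions.create(
        model=MODEL,
        messages=messages,
        tools=tools,
    )

    # Keep the assistant message (with its tool calls) in the context
    messages.append(response.choices[0].message)

At this point the text content of the message GPT returns is None, while tool_calls contains the name and arguments of the function to call (matching the implementation written earlier).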

    ChatCompletion(id='chatcmpl-8cGeaG5CErdDUYDU2b5Pl4zZnKhXf',
        choices=[Choice(finish_reason='tool_calls', index=0,
            message=ChatCompletionMessage(content=None, role='assistant', function_call=None,
                tool_calls=[ChatCompletionMessageToolCall(id='call_k3GrVfDAt78erknnB8q1JNI3',
                    function=Function(arguments='{"directory":"../tools"}', name='list_files_in_directory'),
                    type='function')]),
            logprobs=None)],
        created=1704131172, model='gpt-3.5-turbo-1106', object='chat.completion',
        system_fingerprint='fp_772e8125bb',
        usage=CompletionUsage(completion_tokens=17, prompt_tokens=88, total_tokens=105))

GPT now needs the result of executing the function before it can produce the final response. In other words, we must run the function ourselves and send the actual result back to GPT so that it can generate the reply. Throughout this process, every intermediate result has to be appended to `messages` to build up the context; otherwise the API returns a Bad Request error.

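    # Map tool names to the local functions that implement them
    available_tools = {
        "list_files_in_directory": list_files_in_directory,
    }

    tool_calls = response.choices[0].message.tool_calls
    for tool_call in tool_calls:
        function_name = tool_call.function.name
        function_to_call = available_tools[function_name]
        # The arguments arrive as a JSON string and must be parsed first
        function_args = json.loads(tool_call.function.arguments)
        function_response = function_to_call(**function_args)
        # Send the result back, linked to the request through tool_call_id
        messages.append(
            {
                "tool_call_id": tool_call.id,
                "role": "tool",
                "name": function_name,
                "content": function_response,
            }
        )

Then send the updated messages to get the final result: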

    second_response = client.chat.completions.create(
        model=MODEL,
        messages=messages,
        tools=tools,
    )
    print(second_response.choices[0].message.content)

For the project directory shown below, the result is as follows:

[Figure: project directory structure]

[Figure: GPT identifies the files in the given directory]
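The context-building requirement in this section (every requested tool call needs its result appended before the next request) can be checked mechanically. The helper below is only a sketch: `unanswered_tool_call_ids` is a hypothetical name, the id `call_1` is made up, and it assumes every entry in the history is a plain dict in the JSON shape the API uses, whereas the code above appends the library's message object directly.

```python
def unanswered_tool_call_ids(messages: list[dict]) -> list[str]:
    """Return ids of tool calls that have no matching role="tool" message."""
    requested = []
    answered = set()
    for msg in messages:
        if msg["role"] == "assistant":
            for call in msg.get("tool_calls") or []:
                requested.append(call["id"])
        elif msg["role"] == "tool":
            answered.add(msg["tool_call_id"])
    return [call_id for call_id in requested if call_id not in answered]


# Hand-written history in the dict form of the messages used in this section.
history = [
    {"role": "user", "content": "List all the files in '../tools'."},
    {
        "role": "assistant",
        "content": None,
        "tool_calls": [
            {
                "id": "call_1",  # made-up id for the demo
                "type": "function",
                "function": {
                    "name": "list_files_in_directory",
                    "arguments": '{"directory": "../tools"}',
                },
            }
        ],
    },
]

missing_before = unanswered_tool_call_ids(history)
print(missing_before)

# Appending the tool result resolves the pending call.
history.append({"role": "tool", "tool_call_id": "call_1", "content": "['a.py']"})
missing_after = unanswered_tool_call_ids(history)
print(missing_after)
```

Running a check like this before the second request makes a forgotten `messages.append` visible locally instead of surfacing as a Bad Request error.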

2.3. Sequential function calling

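Some tasks cannot be completed with a single function call. For example, after the file lookup above we may want GPT to execute a particular file in the folder and obtain some result; if the file does not exist, GPT has to write it, save it to that directory, and then run it. This requires GPT to call several different functions in succession. A while loop can do this, stopping only when finish_reason is "stop". One possible implementation: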

    import subprocess  # needed by execute_python_file; os and json are imported above


    def list_files_in_directory(directory: str) -> str:
        try:
            files = os.listdir(directory)
            return str(files) if files else "The directory is empty."
        except FileNotFoundError:
            # Return the error as text so that the model can read it
            return f"Directory '{directory}' not found"


    def is_file_in_directory(directory, filename):
        file_path = os.path.join(directory, filename)
        return os.path.exists(file_path)


    def save_to_file(filename, text):
        with open(filename, "w") as f:
            f.write(text)
        return f"Content saved to {filename}"


    def execute_python_file(filename):
        try:
            result = subprocess.run(
                ["python", filename], capture_output=True, text=True, check=True
            )
            return result.stdout
        except subprocess.CalledProcessError as e:
            return f"Error: {e}"


    tools = [
        {
            "type": "function",
            "function": {
                "name": "list_files_in_directory",
                "description": "List all files in a directory",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "directory": {
                            "type": "string",
                            "description": "The name of directory to list files in",
                        },
                    },
                    "required": ["directory"],
                },
            },
        },
        {
            "type": "function",
            "function": {
                "name": "is_file_in_directory",
                "description": "Check if a file exists in a directory",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "directory": {
                            "type": "string",
                            "description": "The name of directory to check file in",
                        },
                        "filename": {
                            "type": "string",
                            "description": "The name of file to check",
                        },
                    },
                    "required": ["directory", "filename"],
                },
            },
        },
        {
            "type": "function",
            "function": {
                "name": "save_to_file",
                "description": "Save content to a file",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "filename": {
                            "type": "string",
                            "description": "The name of file to save content to",
                        },
                        "text": {
                            "type": "string",
                            "description": "The content to save to file",
                        },
                    },
                    "required": ["filename", "text"],
                },
            },
        },
        {
            "type": "function",
            "function": {
                "name": "execute_python_file",
                "description": "Execute python file and get the output",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "filename": {
                            "type": "string",
                            "description": "The name of file to execute",
                        },
                    },
                    "required": ["filename"],
                },
            },
        },
    ]

    available_tools = {
        "list_files_in_directory": list_files_in_directory,
        "is_file_in_directory": is_file_in_directory,
        "save_to_file": save_to_file,
        "execute_python_file": execute_python_file,
    }

    system_prompt = """
    You are a friendly chatbot that can use external tools to offer reliable assistance to human beings.
    """

    user_prompt = """
    Write a Python file called 'platform_info.py' to check hardware information on a device if 'platform_info.py' does not exist in the directory called '../tools'.
    Otherwise, get the result by executing the 'platform_info.py' and wrap the result in natural language.
    """

    messages = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]

    while True:
        response = client.chat.completions.create(
            model=MODEL,
            messages=messages,
            tools=tools,
            tool_choice="auto",
        )
        response_msg = response.choices[0].message
        messages.append(response_msg)
        # Show either the text reply or the tool calls the model requested
        print(response_msg.content if response_msg.content else response_msg.tool_calls)

        # Stop once the model produces a final answer
        finish_reason = response.choices[0].finish_reason
        if finish_reason == "stop":
            break

        # Otherwise run every requested tool and feed the results back
        tool_calls = response_msg.tool_calls
        if tool_calls:
            for tool_call in tool_calls:
                function_name = tool_call.function.name
                function_to_call = available_tools[function_name]
                function_args = json.loads(tool_call.function.arguments)
                function_response = function_to_call(**function_args)
                messages.append(
                    {
                        "tool_call_id": tool_call.id,
                        "role": "tool",
                        "name": function_name,
                        "content": str(function_response),
                    }
                )

Run against the project directory above, the program prints each tool call the model requests, followed by the final natural-language answer.
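The loop above stops only when finish_reason is "stop", so it has no upper bound on the number of rounds. One possible variation, sketched here under simplifying assumptions, caps the rounds and also stops when no tool is requested. `run_tool_loop` and `fake_model` are hypothetical names, and the replies are plain dicts scripted by hand rather than real API responses, so the sketch runs without network access.

```python
import json


def run_tool_loop(ask_model, available_tools, messages, max_rounds=5):
    """Call ask_model until it stops requesting tools or max_rounds is reached."""
    for _ in range(max_rounds):
        reply = ask_model(messages)
        messages.append(reply)
        tool_calls = reply.get("tool_calls") or []
        if reply.get("finish_reason") == "stop" or not tool_calls:
            return reply.get("content")
        for call in tool_calls:
            func = available_tools.get(call["name"])
            if func is None:
                # Report unknown tools as text instead of raising KeyError
                output = f"Error: unknown tool {call['name']}"
            else:
                output = func(**json.loads(call["arguments"]))
            messages.append(
                {
                    "role": "tool",
                    "tool_call_id": call["id"],
                    "name": call["name"],
                    "content": str(output),
                }
            )
    return None  # gave up: the round limit was reached


# Scripted replies standing in for the model: one tool request, then an answer.
scripted_replies = iter(
    [
        {
            "role": "assistant",
            "content": None,
            "finish_reason": "tool_calls",
            "tool_calls": [
                {"id": "call_1", "name": "add", "arguments": '{"a": 2, "b": 3}'}
            ],
        },
        {"role": "assistant", "content": "The sum is 5.", "finish_reason": "stop"},
    ]
)


def fake_model(messages):
    return next(scripted_replies)


history = [{"role": "user", "content": "What is 2 + 3?"}]
answer = run_tool_loop(fake_model, {"add": lambda a, b: a + b}, history)
print(answer)
```

With the real client, `ask_model` would wrap `client.chat.completions.create` and convert the response into this simplified dict shape; that adapter is left out here.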

2.4. Parallel function calling

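This is a highlight of this API update: letting GPT request several function calls in one go. For example, when a user wants the weather for Beijing, Shanghai, Guangzhou, and Shenzhen at the same time, the sequential function calling above would work, but every call invokes the same function with different arguments, so the calls can be issued together and handled in parallel to produce the response faster. OpenAI gives detailed code for this example on its website, so here is the link without further elaboration.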

https://platform.openai.com/docs/guides/function-calling
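When one assistant message carries several tool calls, the client is free to execute them concurrently before sending all the results back. The sketch below shows one way to do that with a thread pool. It is an assumption of this example rather than the approach in OpenAI's guide: `get_current_weather` is a hypothetical tool whose sleep imitates a slow request, and `tool_calls` is written by hand instead of coming from a real response.

```python
import json
import time
from concurrent.futures import ThreadPoolExecutor


def get_current_weather(city: str) -> str:
    """Hypothetical tool; the sleep stands in for a slow network request."""
    time.sleep(0.2)
    return json.dumps({"city": city, "forecast": "sunny"})


available_tools = {"get_current_weather": get_current_weather}

# Hand-written stand-ins for the tool calls returned in one assistant message.
tool_calls = [
    {
        "id": f"call_{i}",
        "name": "get_current_weather",
        "arguments": json.dumps({"city": city}),
    }
    for i, city in enumerate(["Beijing", "Shanghai", "Guangzhou", "Shenzhen"])
]


def run_tool_call(call: dict) -> dict:
    """Execute one tool call and wrap the result as a role="tool" message."""
    func = available_tools[call["name"]]
    output = func(**json.loads(call["arguments"]))
    return {
        "role": "tool",
        "tool_call_id": call["id"],
        "name": call["name"],
        "content": output,
    }


start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(tool_calls)) as pool:
    # map() preserves input order, so results line up with tool_calls.
    tool_messages = list(pool.map(run_tool_call, tool_calls))
elapsed = time.perf_counter() - start

for msg in tool_messages:
    print(msg["tool_call_id"], msg["content"])
print(f"{len(tool_messages)} calls finished in {elapsed:.2f}s")
```

Threads suit this case because the tools spend their time waiting on I/O; each resulting message still has to be appended to `messages` with its own `tool_call_id` before the follow-up request.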

2.5. JSON mode

Another interesting point in this API update is GPT's JSON mode, which offers a new way to get structured output from GPT. It is simple to use:

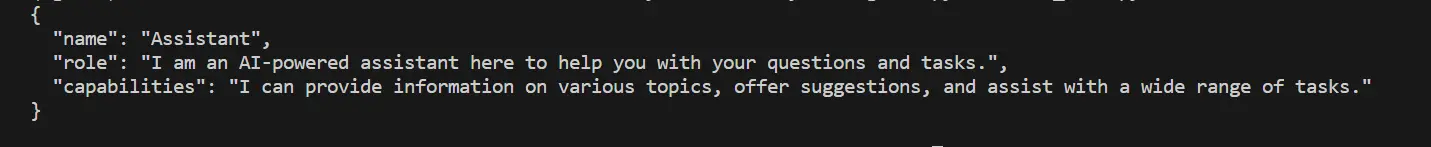

    response = client.chat.completions.create(
        model=MODEL,
        messages=[
            {
                "role": "system",
                "content": "You are a helpful assistant and give answer in json format.",
            },
            {"role": "user", "content": "Introduce yourself."},
        ],
        # Ask the model to return a JSON object
        response_format={"type": "json_object"},
        max_tokens=1024,
        timeout=10,
    )

The returned message content is then a JSON-formatted string.

Note that, at the time of writing, JSON mode is only available for two chat models: gpt-4-1106-preview and gpt-3.5-turbo-1106.
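A JSON-mode reply still arrives as a string, so the caller has to parse it, and a reply cut off by max_tokens (finish_reason "length") may contain incomplete JSON. The helper below is a minimal sketch of that handling; `parse_json_reply` is a hypothetical name and the sample strings are written by hand rather than taken from a real response.

```python
import json


def parse_json_reply(content, finish_reason):
    """Parse a JSON-mode reply, returning None when it is unusable."""
    if finish_reason == "length" or not content:
        # The reply was cut off by max_tokens (or is empty), so the JSON may be truncated.
        return None
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None


# Hand-written examples of a complete and a truncated reply.
complete = parse_json_reply('{"name": "Assistant", "role": "helper"}', "stop")
truncated = parse_json_reply('{"name": "Assist', "length")

print(complete)
print(truncated)
```

With the real client, the two arguments would be `response.choices[0].message.content` and `response.choices[0].finish_reason`.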

3. Conclusion

For more details on the update, see the official page:

https://platform.openai.com/docs/guides/text-generation/completions-api

It also covers finer-grained updates such as reproducible outputs, token management, and parameter tuning, whereas this article focuses on function calling, or tool calling.

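OpenAI's GPT function calling is powerful, but its closed-source nature may put off many developers and companies, who prefer tools they can fully understand and control. Open-source function calling built on open-source models has therefore been developing steadily. I think a good example is ChatGLM3, whose API includes function calling. Many researchers and developers are also building new open-source function calling tools, such as LLMCompiler.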

https://github.com/SqueezeAILab/LLMCompiler

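Combining function calling with other capabilities makes some very interesting ideas possible, such as MemGPT, which extends a language model's context window by imitating the memory hierarchy of a computer and relies on function calling to do so.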

MemGPT: Towards LLMs as Operating Systems: https://arxiv.org/abs/2310.08560

This also hints that an LLM combined with function calling, memory, and similar capabilities may well evolve into the core of a new kind of operating system.