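1 Project Background and Significance

This project is motivated by global public health concerns and by the development of face recognition technology. During outbreaks of infectious diseases such as COVID-19, wearing a mask is an important protective measure. However, existing face recognition systems have difficulty recognizing faces that are partly covered by a mask.

Mask recognition makes it possible to identify people wearing masks more accurately, which helps improve public safety and reduce the risk of crime and malicious attacks. Because traditional face recognition is noticeably less accurate on masked faces, a heterogeneous high-performance mask recognition project can improve the accuracy and reliability of face recognition in that setting. With masks now an everyday necessity, mask recognition can also support more convenient public services, such as self-service shopping and self-service ticketing, and so improve people's daily experience.

Applying this technology helps address public health and safety challenges and promotes the further development and application of face recognition.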

2 Environment Setup

2.1 Downloading the YOLOv5 Model

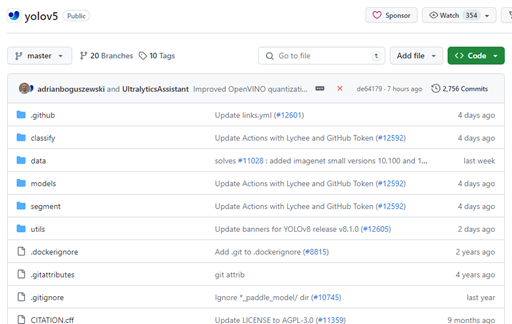

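Download the YOLOv5 code from GitHub. The home page of the YOLOv5 repository is shown in the figure; use the Code button in the upper right corner to download the full source. YOLOv5 model repository: GitHub link.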

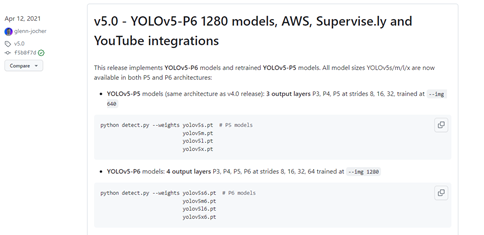

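Different versions can be downloaded from the Releases page. This project uses YOLOv5 v5.0 (yolov5-5.0); you are free to choose the latest version instead.

2.2 Installing Dependencies

With Python already installed, you can use conda to create a virtual environment and set up a new PyTorch environment.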

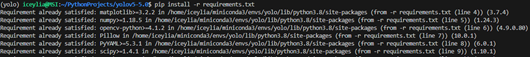

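The root directory of the downloaded YOLOv5 code contains a requirements.txt file that lists the packages YOLOv5 depends on. Install them in one step with pip install -r requirements.txt. As shown in the figure, the "already satisfied" messages appear because those dependencies were already installed.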
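After installation it can be useful to confirm that every listed package is actually present. The following is a minimal sketch, not part of the original project: the helper names `parse_requirement_names` and `find_missing` are hypothetical, the sample text is an illustrative excerpt rather than the real file, and `importlib.metadata` requires Python 3.8 or later.

```python
from importlib import metadata
import re


def parse_requirement_names(text):
    """Return the package names listed in requirements-style text."""
    names = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        # The name ends at the first version, extras, or marker character.
        names.append(re.split(r"[<>=!~;\[\s]", line, maxsplit=1)[0])
    return names


def find_missing(names):
    """Return the names that have no installed distribution."""
    missing = []
    for name in names:
        try:
            metadata.version(name)
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


# Illustrative excerpt only; the real file lists more packages.
sample = """
# base
matplotlib>=3.2.2
numpy>=1.18.5
opencv-python>=4.1.2
# thop  # optional
"""
print(parse_requirement_names(sample))
# prints ['matplotlib', 'numpy', 'opencv-python']
```

To check a real environment, read requirements.txt with `open(...).read()`, pass the text to `parse_requirement_names`, and pass the result to `find_missing`; an empty list means every listed package was found.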

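3 Dataset Processing

The initial dataset consists of JPG images and the corresponding TXT label files. The labels need to be converted into YOLO-format label files, and the dataset needs to be split. The code mainly follows a reference article on splitting object detection datasets.

3.1 Converting TXT to XML

Images without label files can be annotated with labelImg and saved as XML, in which case the TXT-to-XML step is unnecessary. This experiment had no XML labels, so the TXT labels are converted to XML first.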

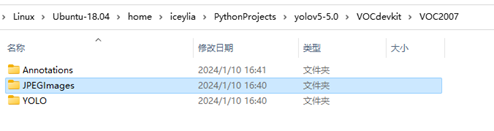

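The file structure is shown in the figure. Create VOCdevkit/VOC2007 under the project root with three folders: JPEGImages holds the images, YOLO holds the TXT label files to be converted, and Annotations is empty for now and will hold the generated XML files.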
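The folder layout described above can also be created from a script rather than by hand. This is a small sketch under the assumption that the folders are created relative to a root you pass in; the function name `create_voc_layout` is hypothetical, and the demonstration uses a temporary directory so it does not touch a real project.

```python
from pathlib import Path
import tempfile


def create_voc_layout(root):
    """Create VOCdevkit/VOC2007 with the three subfolders used here."""
    base = Path(root) / "VOCdevkit" / "VOC2007"
    for sub in ("JPEGImages", "YOLO", "Annotations"):
        (base / sub).mkdir(parents=True, exist_ok=True)
    return base


# Demonstration in a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    base = create_voc_layout(tmp)
    created = sorted(p.name for p in base.iterdir())
    print(created)
# prints ['Annotations', 'JPEGImages', 'YOLO']
```

In the real project, `create_voc_layout(".")` run from the project root would produce the same three folders; `exist_ok=True` makes the call safe to repeat.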

Create txttoxml.py in the root directory to convert the TXT labels to XML. The full code is as follows:

    from xml.dom.minidom import Document
    import os

    import cv2


    def add_text_element(builder, parent, tag, text):
        """Append <tag>text</tag> to parent."""
        element = builder.createElement(tag)
        element.appendChild(builder.createTextNode(str(text)))
        parent.appendChild(element)


    def makexml(picPath, txtPath, xmlPath):
        """Convert YOLO-format txt labels to VOC-format xml labels.

        picPath: folder containing the images
        txtPath: folder containing the txt label files
        xmlPath: folder where the xml files are saved
        """
        dic = {'0': "face", '1': "mask"}  # class id -> class name
        for name in os.listdir(txtPath):
            with open(txtPath + name) as txtFile:
                txtList = txtFile.readlines()
            img = cv2.imread(picPath + name[0:-4] + ".jpg")
            if img is None:  # no readable image for this label file
                continue
            Pheight, Pwidth, Pdepth = img.shape

            xmlBuilder = Document()
            annotation = xmlBuilder.createElement("annotation")  # root element
            xmlBuilder.appendChild(annotation)
            add_text_element(xmlBuilder, annotation, "folder", "driving_annotation_dataset")
            add_text_element(xmlBuilder, annotation, "filename", name[0:-4] + ".jpg")

            size = xmlBuilder.createElement("size")  # image width, height, depth
            add_text_element(xmlBuilder, size, "width", Pwidth)
            add_text_element(xmlBuilder, size, "height", Pheight)
            add_text_element(xmlBuilder, size, "depth", Pdepth)
            annotation.appendChild(size)

            for line in txtList:
                oneline = line.strip().split(" ")
                if len(oneline) < 5:  # skip blank or malformed lines
                    continue
                # YOLO stores normalized center x, center y, width, height.
                cx = float(oneline[1]) * Pwidth + 1
                cy = float(oneline[2]) * Pheight + 1
                half_w = float(oneline[3]) * 0.5 * Pwidth
                half_h = float(oneline[4]) * 0.5 * Pheight

                obj = xmlBuilder.createElement("object")  # one labeled object
                add_text_element(xmlBuilder, obj, "name", dic[oneline[0]])
                add_text_element(xmlBuilder, obj, "pose", "Unspecified")
                add_text_element(xmlBuilder, obj, "truncated", "0")
                add_text_element(xmlBuilder, obj, "difficult", "0")

                bndbox = xmlBuilder.createElement("bndbox")  # pixel corners
                add_text_element(xmlBuilder, bndbox, "xmin", int(cx - half_w))
                add_text_element(xmlBuilder, bndbox, "ymin", int(cy - half_h))
                add_text_element(xmlBuilder, bndbox, "xmax", int(cx + half_w))
                add_text_element(xmlBuilder, bndbox, "ymax", int(cy + half_h))
                obj.appendChild(bndbox)
                annotation.appendChild(obj)

            with open(xmlPath + name[0:-4] + ".xml", 'w', encoding='utf-8') as f:
                xmlBuilder.writexml(f, indent='\t', newl='\n', addindent='\t', encoding='utf-8')


    if __name__ == "__main__":
        picPath = "VOCdevkit/VOC2007/JPEGImages/"
        txtPath = "VOCdevkit/VOC2007/YOLO/"
        xmlPath = "VOCdevkit/VOC2007/Annotations/"
        makexml(picPath, txtPath, xmlPath)
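The coordinate arithmetic in the script is easier to follow on one concrete box. The sketch below isolates that calculation in a hypothetical helper, `yolo_to_voc_box`, and applies it to an illustrative 640×480 image; the numbers are made up for the example and are not taken from the dataset.

```python
def yolo_to_voc_box(cx, cy, w, h, img_w, img_h):
    """Convert a normalized YOLO box to VOC pixel corners.

    Mirrors the script's arithmetic, including its +1 pixel offset.
    """
    xmin = int((cx * img_w + 1) - w * 0.5 * img_w)
    ymin = int((cy * img_h + 1) - h * 0.5 * img_h)
    xmax = int((cx * img_w + 1) + w * 0.5 * img_w)
    ymax = int((cy * img_h + 1) + h * 0.5 * img_h)
    return xmin, ymin, xmax, ymax


# A box centered in a 640x480 image, a quarter as wide and half as tall.
box = yolo_to_voc_box(0.5, 0.5, 0.25, 0.5, 640, 480)
print(box)
# prints (241, 121, 401, 361)
```

The center lands at pixel (321, 241) rather than (320, 240) because of the +1 term, and the half-extents are 80 and 120 pixels.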

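3.2 Converting XML to YOLO

YOLO label files are also in TXT format, but using the original TXT files directly is likely to cause errors, so they are converted to XML first and then to YOLO format.

The dataset is split by file name. Files whose names start with test_00003 form the validation set (about 1,000 images), files whose names start with test_00004 form the test set (about 800 images), and the remaining files form the training set (about 6,000 images). To change the split, the file names can be matched with regular expressions instead.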
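The naming rule can be expressed with regular expressions, which makes it easier to change later. This is only a sketch: `assign_split` and the two pattern constants are hypothetical names, and the file stems in the demonstration are invented examples that merely follow the prefixes mentioned above.

```python
import re

# Prefix rules from the text; edit these patterns to change the split.
VAL_PATTERN = re.compile(r"^test_00003")
TEST_PATTERN = re.compile(r"^test_00004")


def assign_split(stem):
    """Return 'val', 'test', or 'train' for a file name without extension."""
    if VAL_PATTERN.match(stem):
        return "val"
    if TEST_PATTERN.match(stem):
        return "test"
    return "train"


# Invented stems, used only to exercise the three branches.
for stem in ("test_00003120", "test_00004007", "0_Parade_0001"):
    print(stem, "->", assign_split(stem))
```

Keeping the rule in one function means the split can be changed, for example to a different prefix or a numeric range, without touching the copy logic.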

    import os
    import xml.etree.ElementTree as ET
    from shutil import copyfile

    classes = ["face", "mask"]


    def clear_hidden_files(path):
        """Recursively delete hidden files whose names start with "._"."""
        for i in os.listdir(path):
            abspath = os.path.join(os.path.abspath(path), i)
            if os.path.isfile(abspath):
                if i.startswith("._"):
                    os.remove(abspath)
            else:
                clear_hidden_files(abspath)


    def convert(size, box):
        """Convert a VOC box (xmin, xmax, ymin, ymax) to normalized (x, y, w, h)."""
        dw = 1. / size[0]
        dh = 1. / size[1]
        x = (box[0] + box[1]) / 2.0
        y = (box[2] + box[3]) / 2.0
        w = box[1] - box[0]
        h = box[3] - box[2]
        return (x * dw, y * dh, w * dw, h * dh)


    def convert_annotation(image_id):
        """Write the YOLO txt label for one image from its VOC xml annotation."""
        root = ET.parse('VOCdevkit/VOC2007/Annotations/%s.xml' % image_id).getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        with open('VOCdevkit/VOC2007/YOLO/%s.txt' % image_id, 'w') as out_file:
            for obj in root.iter('object'):
                difficult = obj.find('difficult').text
                cls = obj.find('name').text
                if cls not in classes or int(difficult) == 1:
                    continue
                cls_id = classes.index(cls)
                xmlbox = obj.find('bndbox')
                b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                     float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
                bb = convert((w, h), b)
                out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


    def make_dir(path, clean=True):
        """Create path if it is missing and optionally clear hidden files in it."""
        if not os.path.isdir(path):
            os.mkdir(path)
        if clean:
            clear_hidden_files(path)
        return path


    wd = os.getcwd()
    data_base_dir = make_dir(os.path.join(wd, "VOCdevkit/"), clean=False)
    work_space_dir = make_dir(os.path.join(data_base_dir, "VOC2007/"), clean=False)
    annotation_dir = make_dir(os.path.join(work_space_dir, "Annotations/"))
    image_dir = make_dir(os.path.join(work_space_dir, "JPEGImages/"))
    yolo_labels_dir = make_dir(os.path.join(work_space_dir, "YOLO/"))
    yolov5_images_dir = make_dir(os.path.join(data_base_dir, "images/"))
    yolov5_labels_dir = make_dir(os.path.join(data_base_dir, "labels/"))
    yolov5_images_train_dir = make_dir(os.path.join(yolov5_images_dir, "train/"))
    yolov5_images_val_dir = make_dir(os.path.join(yolov5_images_dir, "val/"))
    yolov5_images_test_dir = make_dir(os.path.join(yolov5_images_dir, "test/"))
    yolov5_labels_train_dir = make_dir(os.path.join(yolov5_labels_dir, "train/"))
    yolov5_labels_val_dir = make_dir(os.path.join(yolov5_labels_dir, "val/"))
    yolov5_labels_test_dir = make_dir(os.path.join(yolov5_labels_dir, "test/"))

    # Text files listing the image paths of each split (overwritten on each run).
    train_file = open(os.path.join(wd, "yolov5_train.txt"), 'w')
    val_file = open(os.path.join(wd, "yolov5_val.txt"), 'w')
    test_file = open(os.path.join(wd, "yolov5_test.txt"), 'w')

    for img_name in os.listdir(image_dir):
        image_path = image_dir + img_name
        if not os.path.isfile(image_path):
            continue
        nameWithoutExtention, extention = os.path.splitext(img_name)
        annotation_path = os.path.join(annotation_dir, nameWithoutExtention + '.xml')
        if not os.path.exists(annotation_path):  # skip images without annotation
            continue
        label_name = nameWithoutExtention + '.txt'
        label_path = os.path.join(yolo_labels_dir, label_name)

        if nameWithoutExtention.startswith("test_00003"):  # validation set
            list_file, images_out, labels_out = val_file, yolov5_images_val_dir, yolov5_labels_val_dir
        elif nameWithoutExtention.startswith("test_00004"):  # test set
            list_file, images_out, labels_out = test_file, yolov5_images_test_dir, yolov5_labels_test_dir
        else:  # training set
            list_file, images_out, labels_out = train_file, yolov5_images_train_dir, yolov5_labels_train_dir

        list_file.write(image_path + '\n')
        convert_annotation(nameWithoutExtention)  # regenerate the YOLO label
        copyfile(image_path, images_out + img_name)
        copyfile(label_path, labels_out + label_name)

    train_file.close()
    val_file.close()
    test_file.close()
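The `convert` step is the inverse of the TXT-to-XML arithmetic, and a worked example shows what it produces. The helper name `voc_to_yolo_box` is hypothetical and simply restates `convert` with named arguments; the box and image size are illustrative values, not dataset values.

```python
def voc_to_yolo_box(img_w, img_h, xmin, xmax, ymin, ymax):
    """Convert VOC pixel corners to a normalized YOLO (x, y, w, h) box."""
    x = (xmin + xmax) / 2.0 / img_w  # normalized center x
    y = (ymin + ymax) / 2.0 / img_h  # normalized center y
    w = (xmax - xmin) / img_w        # normalized width
    h = (ymax - ymin) / img_h        # normalized height
    return x, y, w, h


# Corners (241, 121)-(401, 361) in a 640x480 image.
x, y, w, h = voc_to_yolo_box(640, 480, 241, 401, 121, 361)
print(round(x, 4), round(y, 4), w, h)
```

Here the width and height come back as 0.25 and 0.5, while the center comes back as roughly (0.5016, 0.5021), that is pixel (321, 241). As far as can be read from the two scripts, a label that goes through TXT to XML and back therefore has its center moved by about one pixel, because the first step adds 1 and this step does not subtract it; for most images that shift is small.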

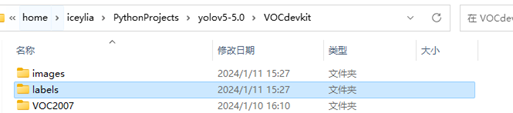

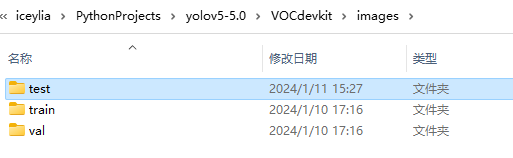

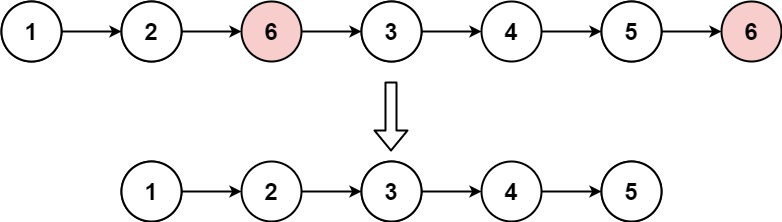

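The file structure after splitting is shown in the figures: images and labels folders are generated under VOCdevkit, holding the images and the YOLO label files respectively.

The structure under images is shown in the figure: the test, train, and val folders hold the images of each split. The labels folder is organized the same way.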
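Before training, it can help to confirm that each split has one label file per image. The following sketch assumes the images/ and labels/ layout described above with .jpg images and .txt labels; `count_split_files` is a hypothetical helper, and the demonstration builds a tiny fake dataset in a temporary directory.

```python
from pathlib import Path
import tempfile


def count_split_files(devkit_root):
    """Count images and labels per split and list stems missing a partner."""
    root = Path(devkit_root)
    report = {}
    for split in ("train", "val", "test"):
        images = {p.stem for p in (root / "images" / split).glob("*.jpg")}
        labels = {p.stem for p in (root / "labels" / split).glob("*.txt")}
        report[split] = {
            "images": len(images),
            "labels": len(labels),
            "unpaired": sorted(images ^ labels),  # present on one side only
        }
    return report


# Tiny fake dataset: train is complete, val has an image without a label.
with tempfile.TemporaryDirectory() as tmp:
    for kind in ("images", "labels"):
        for split in ("train", "val", "test"):
            (Path(tmp) / kind / split).mkdir(parents=True)
    (Path(tmp) / "images" / "train" / "a.jpg").touch()
    (Path(tmp) / "labels" / "train" / "a.txt").touch()
    (Path(tmp) / "images" / "val" / "b.jpg").touch()
    report = count_split_files(tmp)
    print(report["train"], report["val"]["unpaired"])
```

On the real data, `count_split_files("VOCdevkit")` should report empty `unpaired` lists and counts close to the split sizes given earlier.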

4 Model Training

4.1 Downloading the YOLOv5 Pretrained Model

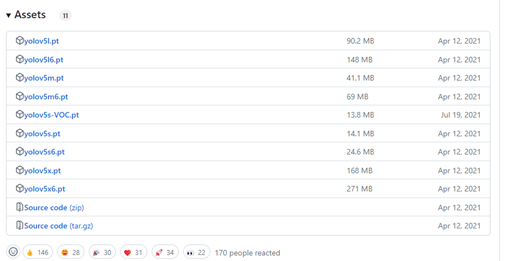

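The released pretrained models are also available on the Releases page of the YOLOv5 GitHub repository, as shown in the figure. Starting from a pretrained model speeds up training. The experiment uses the most basic model, yolov5s.pt; the more accurate pretrained models take longer to train, and the 5s model is sufficient here.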

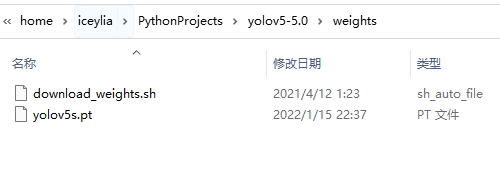

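Place the downloaded model in the weights directory, as shown in the figure.

4.2 Modifying Configuration Files

4.2.1 Modifying the Model Configuration File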

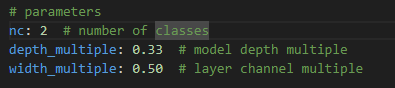

To leave the original file intact, copy yolov5s.yaml in the models directory, rename the copy mask.yaml, and set nc to 2, that is, change the number of classes to 2, as shown in the figure.
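The copy-and-edit step can also be scripted. This is a sketch only: `set_num_classes` is a hypothetical helper that rewrites the first top-level `nc:` line, and the sample text is an assumed excerpt of the model file, not its full contents.

```python
import re


def set_num_classes(yaml_text, nc):
    """Return yaml_text with the first top-level `nc:` value replaced."""
    new_text, count = re.subn(r"(?m)^nc:\s*\d+", f"nc: {nc}", yaml_text, count=1)
    if count == 0:
        raise ValueError("no top-level 'nc:' entry found")
    return new_text


# Assumed excerpt of the model config, for illustration.
sample = "# parameters\nnc: 80  # number of classes\ndepth_multiple: 0.33\n"
updated = set_num_classes(sample, 2)
print(updated.splitlines()[1])
# prints nc: 2  # number of classes
```

For the real file, one could read models/yolov5s.yaml with `pathlib.Path.read_text`, pass the text through `set_num_classes(text, 2)`, and write the result to models/mask.yaml, which leaves the original untouched.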

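4.2.2 Modifying the Dataset Configuration File

Next, modify the dataset configuration file: copy voc.yaml under data, also rename the copy mask.yaml, and update the dataset paths, the number of classes, and the class names, as shown in the figure.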
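Since the figure is not reproduced in text, the sketch below shows what such a dataset file might contain. The keys `train`, `val`, `nc`, and `names` are the ones YOLOv5 data files use; the two directory paths are assumptions based on the folders created in section 3.2, and `build_data_yaml` is a hypothetical helper.

```python
def build_data_yaml(train_dir, val_dir, class_names):
    """Return the text of a minimal YOLOv5 dataset config."""
    names = ", ".join(f"'{n}'" for n in class_names)
    return (
        f"train: {train_dir}\n"
        f"val: {val_dir}\n"
        f"nc: {len(class_names)}\n"
        f"names: [{names}]\n"
    )


# Assumed paths; adjust them to wherever the split images actually live.
text = build_data_yaml(
    "VOCdevkit/images/train/",
    "VOCdevkit/images/val/",
    ["face", "mask"],
)
print(text)
```

The order of the class names matters: it has to match the `classes` list in the conversion script, so that class id 0 is face and class id 1 is mask.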

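4.2.3 Modifying the Training Script Parameters

Finally, edit the argument defaults in train.py so that it loads the modified configuration files, and set any other options as needed. The training configuration is then complete.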

    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument('--weights', type=str, default='weights/yolov5s.pt', help='initial weights path')
        parser.add_argument('--cfg', type=str, default='models/mask.yaml', help='model.yaml path')
        parser.add_argument('--data', type=str, default='data/mask.yaml', help='data.yaml path')
        parser.add_argument('--hyp', type=str, default='data/hyp.scratch.yaml', help='hyperparameters path')
        parser.add_argument('--epochs', type=int, default=200)
        parser.add_argument('--batch-size', type=int, default=8, help='total batch size for all GPUs')
        parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='[train, test] image sizes')
        parser.add_argument('--rect', action='store_true', help='rectangular training')
        parser.add_argument('--resume', nargs='?', const=True, default=False, help='resume most recent training')
        parser.add_argument('--nosave', action='store_true', help='only save final checkpoint')
        parser.add_argument('--notest', action='store_true', help='only test final epoch')
        parser.add_argument('--noautoanchor', action='store_true', help='disable autoanchor check')
        parser.add_argument('--evolve', action='store_true', help='evolve hyperparameters')
        parser.add_argument('--bucket', type=str, default='', help='gsutil bucket')
        parser.add_argument('--cache-images', action='store_true', help='cache images for faster training')
        parser.add_argument('--image-weights', action='store_true', help='use weighted image selection for training')
        parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
        parser.add_argument('--multi-scale', action='store_true', help='vary img-size +/- 50%%')
        parser.add_argument('--single-cls', action='store_true', help='train multi-class data as single-class')
        parser.add_argument('--adam', action='store_true', help='use torch.optim.Adam() optimizer')
        parser.add_argument('--sync-bn', action='store_true', help='use SyncBatchNorm, only available in DDP mode')
        parser.add_argument('--local_rank', type=int, default=-1, help='DDP parameter, do not modify')
        parser.add_argument('--workers', type=int, default=8, help='maximum number of dataloader workers')
        parser.add_argument('--project', default='runs/train', help='save to project/name')
        parser.add_argument('--entity', default=None, help='W&B entity')
        parser.add_argument('--name', default='exp', help='save to project/name')
        parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
        parser.add_argument('--quad', action='store_true', help='quad dataloader')
        parser.add_argument('--linear-lr', action='store_true', help='linear LR')
        parser.add_argument('--label-smoothing', type=float, default=0.0, help='Label smoothing epsilon')
        parser.add_argument('--upload_dataset', action='store_true', help='Upload dataset as W&B artifact table')
        parser.add_argument('--bbox_interval', type=int, default=-1, help='Set bounding-box image logging interval for W&B')
        parser.add_argument('--save_period', type=int, default=-1, help='Log model after every "save_period" epoch')
        parser.add_argument('--artifact_alias', type=str, default="latest", help='version of dataset artifact to be used')
        opt = parser.parse_args()
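Because every option above is an ordinary command-line argument, the same settings can be passed when launching train.py instead of editing its defaults. The sketch below only assembles such a command; `build_train_command` is a hypothetical helper, the flags are the ones defined in the parser above, and the values are the ones used in this experiment.

```python
import sys


def build_train_command(weights, cfg, data, epochs, batch_size):
    """Assemble the argument list for launching train.py."""
    return [
        sys.executable, "train.py",
        "--weights", weights,
        "--cfg", cfg,
        "--data", data,
        "--epochs", str(epochs),
        "--batch-size", str(batch_size),
    ]


cmd = build_train_command(
    "weights/yolov5s.pt", "models/mask.yaml", "data/mask.yaml",
    epochs=80, batch_size=8,
)
print(" ".join(cmd[1:]))
# prints train.py --weights weights/yolov5s.pt --cfg models/mask.yaml --data data/mask.yaml --epochs 80 --batch-size 8
```

Passing `cmd` to `subprocess.run(cmd, check=True)` from the YOLOv5 root directory would start training. Either approach works; command-line arguments keep train.py identical to the upstream file.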

4.3 Training the Model

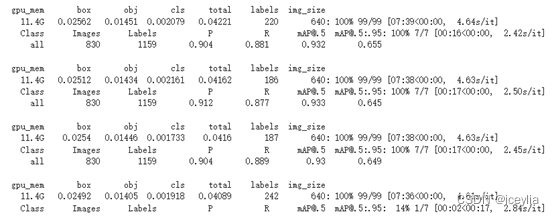

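With the parameters configured, run train.py to start training. YOLO training is slow: with a training set of about 6,000 images, limited cloud GPU resources, and training alternating between CPU and GPU, the experiment ran for only 80 epochs, yet still took more than 10 hours in total, spread over several sessions. Part of the training output is shown in the figure.

4.3.1 Monitoring Training

The values printed during training make it possible to follow progress as it happens.

Training set

- box: mean bounding-box loss

- obj: mean objectness loss

- cls: mean classification loss

- total: total loss, i.e. box + obj + cls

- labels: number of labeled objects in the current batch

- img_size: image size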

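Validation set

- Images: number of images evaluated

- Labels: number of ground-truth labels in the class

- P: precision of the predictions for the class

- R: recall

- mAP@.5: mean average precision at an IoU threshold of 0.5

- mAP@.5:.95: mean average precision averaged over IoU thresholds from 0.5 to 0.95 in steps of 0.05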
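The validation metrics above rest on two simple quantities: the IoU between a predicted box and a ground-truth box, and the precision and recall computed from matched detections. The sketch below illustrates both with made-up numbers; it is not YOLOv5's own evaluation code, and the function names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Two 10x10 boxes overlapping by half: intersection 50, union 150.
overlap = iou((0, 0, 10, 10), (5, 0, 15, 10))
print(round(overlap, 3))          # prints 0.333
print(overlap >= 0.5)             # prints False

# 8 correct detections, 2 false alarms, 4 missed objects.
p, r = precision_recall(tp=8, fp=2, fn=4)
print(round(p, 3), round(r, 3))   # prints 0.8 0.667
```

At an IoU threshold of 0.5, the prediction in the first example would not count as a match, which is why mAP@.5:.95, which also uses stricter thresholds, is normally lower than mAP@.5.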

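These metrics make it possible to follow training as it runs and to adjust the training parameters promptly.

YOLOv5 also supports visualizing training with TensorBoard. Because the experiment ran in the cloud, the metrics above were used to monitor training instead.

4.3.2 Adjusting Training Parameters

5 System Testing

Trained models are saved in the runs/train/exp/weights folder. The file best.pt holds the best-performing weights and is chosen as the final model.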
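When training is run more than once, YOLOv5 writes later runs to folders such as exp2 and exp3, so the newest best.pt may not be under exp. The sketch below picks the most recently modified one; `latest_best_weights` is a hypothetical helper, and the demonstration uses empty placeholder files in a temporary directory rather than real weights.

```python
import os
import tempfile
from pathlib import Path


def latest_best_weights(train_root="runs/train"):
    """Return the most recently modified exp*/weights/best.pt, or None."""
    candidates = list(Path(train_root).glob("exp*/weights/best.pt"))
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.stat().st_mtime)


# Placeholder runs: exp2 is given the later modification time.
with tempfile.TemporaryDirectory() as tmp:
    for i, run in enumerate(("exp", "exp2")):
        weights_dir = Path(tmp) / run / "weights"
        weights_dir.mkdir(parents=True)
        best = weights_dir / "best.pt"
        best.write_bytes(b"")
        os.utime(best, (1000 + i, 1000 + i))  # set access and modify times
    newest_run = latest_best_weights(tmp).parent.parent.name
    print(newest_run)
# prints exp2
```

Called with no arguments from the YOLOv5 root directory, the function looks under runs/train, which matches the `--project` default shown in the training arguments.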

YOLOv5 itself includes a testing module.
