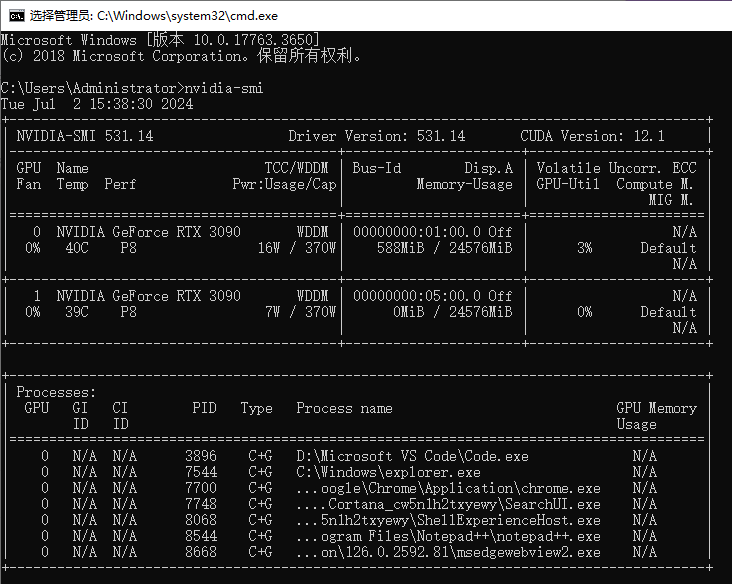

1. Check the local GPU configuration

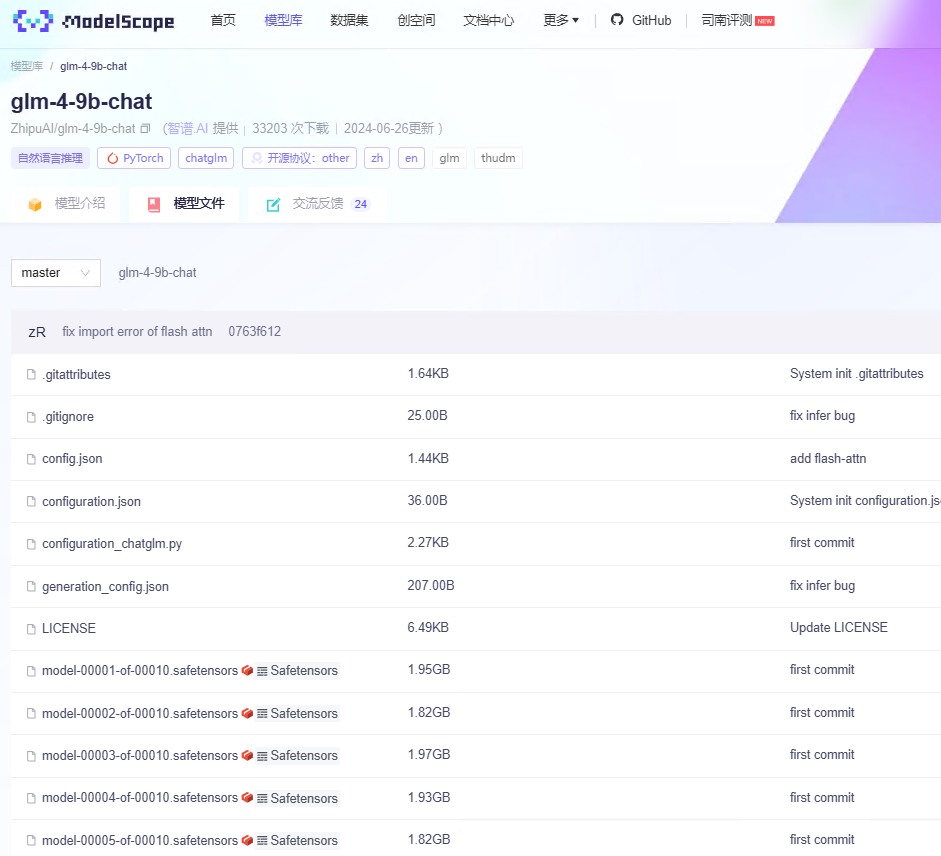
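If you prefer to check from Python, here is a minimal sketch. It assumes PyTorch is installed. It lists the CUDA GPUs PyTorch can see and gives a rough estimate of the memory needed for the bfloat16 weights, which is the dtype the official code loads. The parameter count of about 9 billion is an assumption inferred from the model name "glm-4-9b". The estimate covers weights only; activations and the KV cache need extra memory on top of it. The helper names `describe_gpus` and `bf16_weight_gib` are hypothetical.

```python
def bf16_weight_gib(num_params):
    """Approximate memory (GiB) for weights stored in bfloat16, i.e. 2 bytes per parameter."""
    return num_params * 2 / 1024**3


def describe_gpus():
    """Return (name, total_memory_GiB) for each CUDA GPU visible to PyTorch; [] if none."""
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    gpus = []
    for i in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(i)
        gpus.append((props.name, props.total_memory / 1024**3))
    return gpus


if __name__ == "__main__":
    # Assumption: roughly 9 billion parameters, inferred from the model name
    needed = bf16_weight_gib(9e9)
    print(f"bf16 weights alone: ~{needed:.1f} GiB")
    gpus = describe_gpus()
    if not gpus:
        print("No CUDA GPU visible to PyTorch")
    for name, total_gib in gpus:
        print(f"{name}: {total_gib:.1f} GiB total")
```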

2. Download the model

Users in mainland China can download the model from the ModelScope community:

Download page: https://modelscope.cn/models/ZhipuAI/glm-4-9b-chat/files
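You can also download the model with the ModelScope Python SDK instead of the web page. The sketch below assumes `pip install modelscope` has been run, and it takes the model ID from the URL above. `snapshot_download` is the SDK's download function. The helpers `download_model` and `looks_like_model_dir` are hypothetical, and the file patterns checked are a rough sanity test, not the model's official file list.

```python
from pathlib import Path

# Model ID taken from the ModelScope URL above
MODEL_ID = "ZhipuAI/glm-4-9b-chat"


def download_model(cache_dir=None):
    """Download the model snapshot via the ModelScope SDK and return its local directory."""
    from modelscope import snapshot_download  # imported lazily; needs the modelscope package
    return snapshot_download(MODEL_ID, cache_dir=cache_dir)


def looks_like_model_dir(path):
    """Rough check: a config.json plus at least one weight file (.safetensors or .bin)."""
    p = Path(path)
    has_config = (p / "config.json").is_file()
    has_weights = any(p.glob("*.safetensors")) or any(p.glob("*.bin"))
    return has_config and has_weights
```

Pass the directory returned by `download_model()` to `from_pretrained` in place of a hard-coded local path. The exact layout under `cache_dir` may vary between ModelScope versions, so using the returned path is safer than guessing it.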

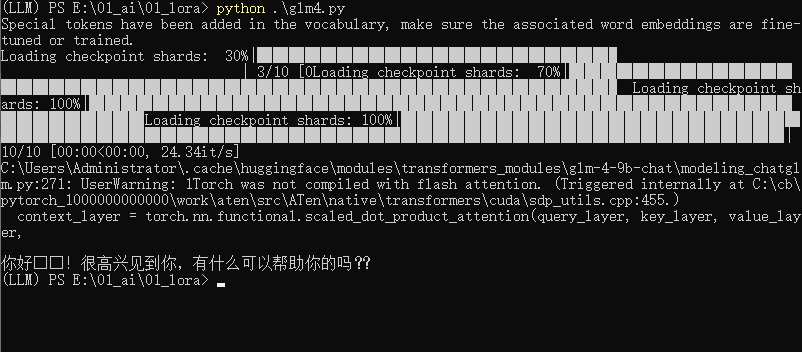

3. Run the official code

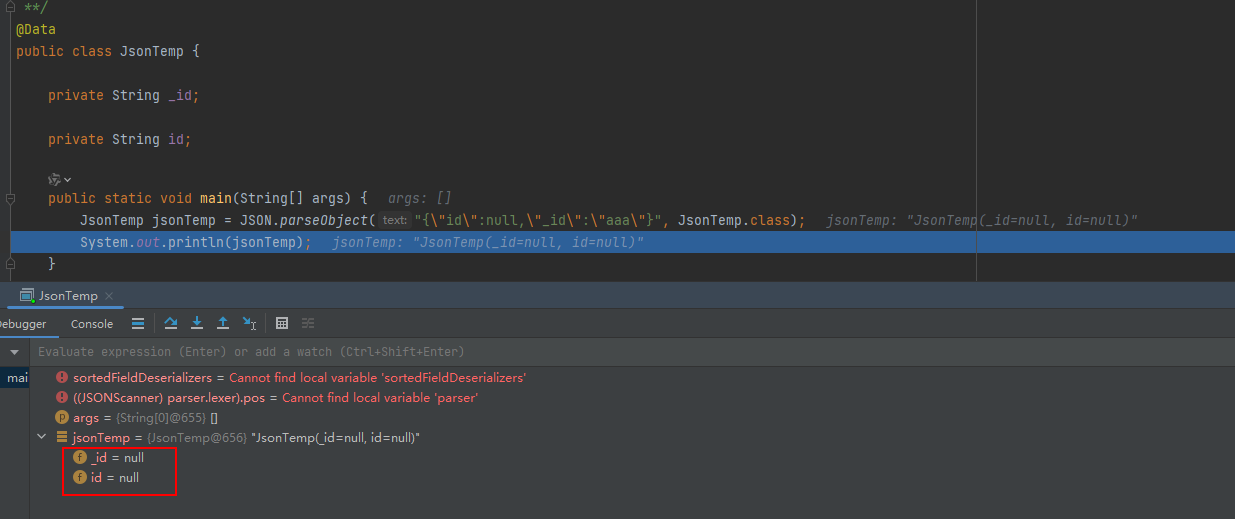

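    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    device = "cuda"
    # Local model directory; the raw string keeps the Windows backslashes literal
    model_path = r"E:\openai\ChatGLM4\glm-4-9b-chat"

    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

    query = "你好"  # "Hello"
    # Wrap the query in the model's chat template and tokenize it
    inputs = tokenizer.apply_chat_template(
        [{"role": "user", "content": query}],
        add_generation_prompt=True,
        tokenize=True,
        return_tensors="pt",
        return_dict=True,
    )
    inputs = inputs.to(device)

    # Load the weights in bfloat16, move them to the GPU, and switch to inference mode
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        torch_dtype=torch.bfloat16,
        low_cpu_mem_usage=True,
        trust_remote_code=True,
    ).to(device).eval()

    # max_length counts prompt + generated tokens; top_k=1 makes sampling effectively greedy
    gen_kwargs = {"max_length": 2500, "do_sample": True, "top_k": 1}
    with torch.no_grad():
        outputs = model.generate(**inputs, **gen_kwargs)
        # Drop the prompt tokens so only the model's reply is decoded
        outputs = outputs[:, inputs["input_ids"].shape[1]:]
        print(tokenizer.decode(outputs[0], skip_special_tokens=True))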
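The official code sends a single query. To hold a multi-turn conversation, you can resend the earlier turns as a message list on each call. Below is a minimal sketch that assumes `model` and `tokenizer` were loaded as in the official code. The function names `build_messages` and `chat` are hypothetical. Whether the GLM-4 chat template accepts a `system` message is also an assumption, so check it against the model's tokenizer files before relying on it. The sketch uses `max_new_tokens`, which limits only the reply length. The official code uses `max_length`, which also counts the prompt tokens.

```python
def build_messages(history, query, system=None):
    """Turn (user, assistant) turn pairs plus a new query into a chat-template message list."""
    messages = []
    if system is not None:
        messages.append({"role": "system", "content": system})
    for user_msg, assistant_msg in history:
        messages.append({"role": "user", "content": user_msg})
        messages.append({"role": "assistant", "content": assistant_msg})
    messages.append({"role": "user", "content": query})
    return messages


def chat(model, tokenizer, messages, device="cuda", max_new_tokens=512):
    """Generate one reply for the given messages (model/tokenizer loaded as in the official code)."""
    import torch

    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        tokenize=True,
        return_tensors="pt",
        return_dict=True,
    ).to(device)
    with torch.no_grad():
        # Greedy decoding; max_new_tokens bounds only the generated reply
        outputs = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    # Keep only the tokens generated after the prompt
    reply_ids = outputs[0, inputs["input_ids"].shape[1]:]
    return tokenizer.decode(reply_ids, skip_special_tokens=True)
```

For example, start with `history = []`, call `reply = chat(model, tokenizer, build_messages(history, query))`, then `history.append((query, reply))` before the next turn. The prompt grows with every turn, so long conversations use more GPU memory.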

The output is as follows:

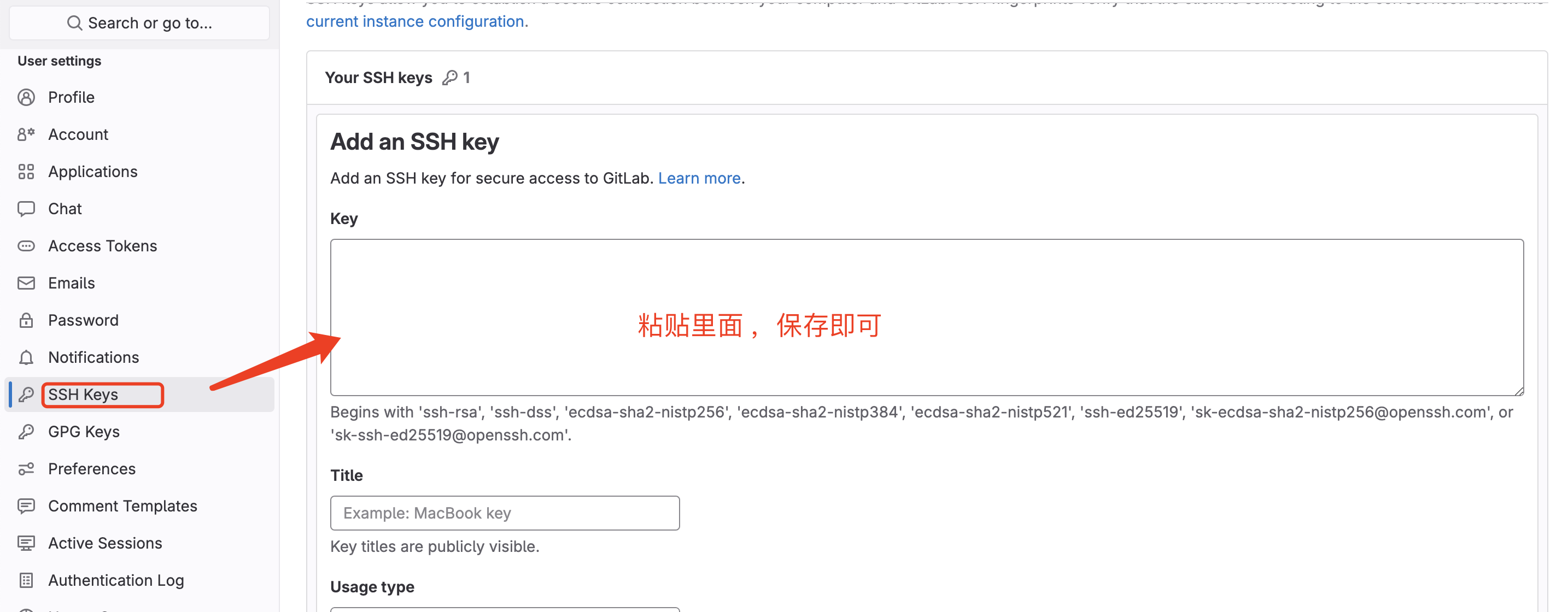

You will need to install the required Python environment along the way. Anaconda is recommended for managing it.
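After you create and activate the Anaconda environment, a quick check of which packages are installed can save a failed model load. This is a minimal sketch using the standard-library `importlib.metadata`. The package list is an assumption:

- `torch` and `transformers` come from the official code.
- `accelerate` is what transformers relies on for `low_cpu_mem_usage=True`.
- `tiktoken` is assumed to be needed by GLM-4's custom tokenizer.
- `modelscope` is needed only for SDK downloads.

Adjust the list to match the model's own requirements. `check_packages` is a hypothetical helper name.

```python
from importlib import metadata

# Assumed package list; adjust to the model's stated requirements
REQUIRED = ["torch", "transformers", "accelerate", "tiktoken", "modelscope"]


def check_packages(names=REQUIRED):
    """Map each package name to its installed version, or None if it is not installed."""
    found = {}
    for name in names:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    for name, version in check_packages().items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```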