GitHub: https://github.com/icey-zhang/SuperYOLO

Paper: https://www.sfu.ca/~zhenman/files/J12-TGRS2023-SuperYOLO.pdf

Environment:

PyTorch 2.5.1

Python 3.12 (Ubuntu 22.04)

CUDA 12.4

GPU RTX 4090D (24GB) * 1

CPU 18 vCPU AMD EPYC 9754 128-Core Processor

Memory 60GB

Because I am not used to the way SuperYOLO reads datasets, I use the original YOLOv5 approach here.

The dataset used here is VisDrone2019.
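
YOLOv5-style loading expects one label .txt file per image, with rows of the form "class x_center y_center width height" normalized to [0, 1], while VisDrone-DET ships comma-separated rows (bbox_left, bbox_top, bbox_width, bbox_height, score, object_category, truncation, occlusion). The conversion below is only a sketch under those assumptions: the function name visdrone_line_to_yolo is hypothetical and belongs to neither SuperYOLO nor YOLOv5, and dropping rows with score 0 or a category outside 1..10 is an assumption made so that the remaining ids match nc: 10.

```python
def visdrone_line_to_yolo(line, img_w, img_h):
    """Convert one VisDrone-DET annotation row to a YOLO label row.

    Returns None for rows that should be skipped.
    """
    # Some annotation rows end with a trailing comma, so drop empty fields.
    fields = [v for v in line.strip().split(",") if v != ""]
    left, top, box_w, box_h = (float(v) for v in fields[:4])
    score, category = int(fields[4]), int(fields[5])
    # Assumption: rows with score 0 (ignored) or a category outside 1..10
    # are dropped so that the remaining ids match nc: 10 in data.yaml.
    if score == 0 or not 1 <= category <= 10:
        return None
    cls = category - 1  # VisDrone ids 1..10 -> YOLO ids 0..9
    x_center = (left + box_w / 2) / img_w
    y_center = (top + box_h / 2) / img_h
    return f"{cls} {x_center:.6f} {y_center:.6f} {box_w / img_w:.6f} {box_h / img_h:.6f}"


# Hypothetical row: a 50x100 px box of category 4 in a 1000x1000 image.
print(visdrone_line_to_yolo("100,200,50,100,1,4,0,0", 1000, 1000))
```

In practice the image width and height would be read from each image file before its rows are converted.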

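Objects are split into three scales (small, medium, large) by bounding-box area, measured in pixels of the original image:

Small < 32×32 ≤ Medium < 96×96 ≤ Large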
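
As a quick illustration of the split above, here is a minimal sketch that assigns a single box to a scale bucket. The names SMALL_MAX_AREA, MEDIUM_MAX_AREA and scale_bucket are made up for this example; the thresholds are the 32×32 and 96×96 areas stated above, and boxes are assumed to be in xyxy pixel coordinates.

```python
SMALL_MAX_AREA = 32 * 32    # 1024 px^2
MEDIUM_MAX_AREA = 96 * 96   # 9216 px^2


def scale_bucket(x1, y1, x2, y2):
    """Return 'small', 'medium' or 'large' for an xyxy box in pixels."""
    area = (x2 - x1) * (y2 - y1)
    if area < SMALL_MAX_AREA:
        return "small"
    if area < MEDIUM_MAX_AREA:
        return "medium"
    return "large"


for box in [(0, 0, 31, 31), (0, 0, 32, 32), (10, 10, 106, 106)]:
    print(box, scale_bucket(*box))
```

Note that a box of exactly 32×32 pixels already counts as medium, and one of exactly 96×96 pixels as large.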

Example data.yaml:

    train: /root/autodl-tmp/VisDrone/VisDrone2019-DET-train/images  # train images (absolute path), 6471 images
    val: /root/autodl-tmp/VisDrone/VisDrone2019-DET-val/images  # val images (absolute path), 548 images
    test: /root/autodl-tmp/VisDrone/VisDrone2019-DET-test-dev/images  # test images (optional), 1610 images

    # Classes
    nc: 10
    names: ['pedestrian', 'people', 'bicycle', 'car', 'van', 'truck', 'tricycle', 'awning-tricycle', 'bus', 'motor']
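
Only image directories appear in data.yaml. With YOLOv5-style loading, the label files are, as far as I know, located by swapping the last /images/ path component for /labels/ and the file extension for .txt. The sketch below mirrors that convention under the assumption of POSIX paths; the file name example.jpg is made up for illustration.

```python
def img2label_paths(img_paths):
    """Map image paths to label paths: .../images/x.jpg -> .../labels/x.txt."""
    sa, sb = "/images/", "/labels/"
    # Replace only the last '/images/' component, then swap the extension.
    return [sb.join(p.rsplit(sa, 1)).rsplit(".", 1)[0] + ".txt" for p in img_paths]


paths = ["/root/autodl-tmp/VisDrone/VisDrone2019-DET-val/images/example.jpg"]
print(img2label_paths(paths))
```

So each split directory is expected to contain a labels folder next to its images folder.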

Corresponding changes:

utils/general.py:

    # Replaces the original check_dataset() function.
    # Needs `from zipfile import ZipFile` at the top of utils/general.py; the .zip branch
    # also assumes a download() helper with this signature, as in newer YOLOv5 releases.
    def check_dataset(data, autodownload=True):
        # Download (optional)
        extract_dir = ''
        if isinstance(data, (str, Path)) and str(data).endswith('.zip'):  # i.e. gs://bucket/dir/coco128.zip
            download(data, dir='../datasets', unzip=True, delete=False, curl=False, threads=1)
            data = next((Path('../datasets') / Path(data).stem).rglob('*.yaml'))
            extract_dir, autodownload = data.parent, False

        # Read yaml (optional)
        if isinstance(data, (str, Path)):
            with open(data, errors='ignore') as f:
                data = yaml.safe_load(f)  # dictionary

        # Parse yaml
        path = extract_dir or Path(data.get('path') or '')  # optional 'path' default to '.'
        for k in 'train', 'val', 'test':
            if data.get(k):  # prepend path
                data[k] = str(path / data[k]) if isinstance(data[k], str) else [str(path / x) for x in data[k]]

        assert 'nc' in data, "Dataset 'nc' key missing."
        if 'names' not in data:
            data['names'] = [f'class{i}' for i in range(data['nc'])]  # assign class names if missing
        train, val, test, s = (data.get(x) for x in ('train', 'val', 'test', 'download'))
        if val:
            val = [Path(x).resolve() for x in (val if isinstance(val, list) else [val])]  # val path
            if not all(x.exists() for x in val):
                print('\nWARNING: Dataset not found, nonexistent paths: %s' % [str(x) for x in val if not x.exists()])
                if s and autodownload:  # download script
                    root = path.parent if 'path' in data else '..'  # unzip directory i.e. '../'
                    if s.startswith('http') and s.endswith('.zip'):  # URL
                        f = Path(s).name  # filename
                        print(f'Downloading {s} to {f}...')
                        torch.hub.download_url_to_file(s, f)
                        Path(root).mkdir(parents=True, exist_ok=True)  # create root
                        ZipFile(f).extractall(path=root)  # unzip
                        Path(f).unlink()  # remove zip
                        r = None  # success
                    elif s.startswith('bash '):  # bash script
                        print(f'Running {s} ...')
                        r = os.system(s)
                    else:  # python script
                        r = exec(s, {'yaml': data})  # return None
                    print(f"Dataset autodownload {f'success, saved to {root}' if r in (0, None) else 'failure'}\n")
                else:
                    raise Exception('Dataset not found.')

        return data  # dictionary
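
One detail of the "Parse yaml" step is worth spelling out. Each train/val/test entry is joined onto the optional path key with pathlib's / operator, and joining an absolute path discards the left-hand side. That is why absolute paths in data.yaml pass through unchanged even when no path key is set. The sketch below isolates just that step; the helper name resolve_entry is hypothetical, and PurePosixPath is used instead of Path so that the result does not depend on the operating system.

```python
from pathlib import PurePosixPath


def resolve_entry(root, entry):
    """Mimic `path / data[k]` from check_dataset for a single string entry."""
    return str(PurePosixPath(root or "") / entry)


# Absolute entry, no 'path' key: returned unchanged.
print(resolve_entry("", "/root/autodl-tmp/VisDrone/VisDrone2019-DET-val/images"))
# Relative entry with a (made-up) dataset root: the root is prepended.
print(resolve_entry("../datasets/VisDrone", "VisDrone2019-DET-val/images"))
```

If relative entries are preferred, a path key can be added to data.yaml and the three entries shortened accordingly.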

Complete evaluation code for the single RGB branch (test.py):

    import argparse
    import json
    import os
    from pathlib import Path
    from threading import Thread

    import numpy as np
    import torch
    import yaml
    from tqdm import tqdm

    from models.experimental import attempt_load
    from utils.datasets import create_dataloader, create_dataloader_sr
    from utils.general import (coco80_to_coco91_class, check_dataset, check_file, check_img_size, check_requirements,
                               box_iou, non_max_suppression, weighted_boxes, scale_coords, xyxy2xywh, xywh2xyxy,
                               set_logging, increment_path, colorstr)
    from utils.metrics import ap_per_class, ConfusionMatrix
    from utils.plots import plot_images, output_to_target, plot_study_txt
    from utils.torch_utils import select_device, time_synchronized

    from torchvision import transforms
    from PIL import Image

    unloader = transforms.ToPILImage()


    def tensor_to_PIL(tensor):
        image = tensor.cpu().clone()
        image = image.squeeze(0)
        image = unloader(image)
        image.save('a.png')
        return image


    def process_batch(detections, labels, iouv):
        # detections: (N, 6) x1, y1, x2, y2, conf, cls; labels: (M, 5) cls, x1, y1, x2, y2
        correct = torch.zeros(detections.shape[0], iouv.shape[0], dtype=torch.bool, device=iouv.device)
        iou = box_iou(labels[:, 1:], detections[:, :4])
        x = torch.where((iou >= iouv[0]) & (labels[:, 0:1] == detections[:, 5]))  # IoU above threshold and classes match
        if x[0].shape[0]:
            matches = torch.cat((torch.stack(x, 1), iou[x[0], x[1]][:, None]), 1).cpu().numpy()  # [label, detection, iou]
            if x[0].shape[0] > 1:
                matches = matches[matches[:, 2].argsort()[::-1]]
                matches = matches[np.unique(matches[:, 1], return_index=True)[1]]
                matches = matches[np.unique(matches[:, 0], return_index=True)[1]]
            matches = torch.Tensor(matches).to(iouv.device)
            correct[matches[:, 1].long()] = matches[:, 2:3] >= iouv
        return correct


    def compute_area(boxes):
        # boxes: (N, 4) in xyxy format
        return (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])


    def test(data,
             weights=None,
             batch_size=32,
             imgsz=640,
             input_mode=None,
             conf_thres=0.001,
             iou_thres=0.6,  # for NMS
             save_json=False,
             single_cls=False,
             augment=False,
             verbose=False,
             model=None,
             dataloader=None,
             save_dir=Path(''),  # for saving images
             save_txt=False,  # for auto-labelling
             save_hybrid=False,  # for hybrid auto-labelling
             save_conf=False,  # save auto-label confidences
             plots=True,
             wandb_logger=None,
             compute_loss=None,
             is_coco=False):
        print(f"*******************Current weights: {weights}*******************")

        # Initialize/load model and set device
        training = model is not None
        if training:  # called by train.py
            device = next(model.parameters()).device  # get model device
        else:  # called directly
            set_logging()
            device = select_device(opt.device, batch_size=batch_size)

            # Directories
            save_dir = Path(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok))  # increment run
            (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True)  # make dir

            # Load model
            model = attempt_load(weights, map_location=device)  # load FP32 model
            print(model.yaml_file)
            gs = max(int(model.stride.max()), 32)  # grid size (max stride)
            imgsz = check_img_size(imgsz, s=gs)  # check img_size
            data = check_dataset(data)  # check (returns the parsed yaml as a dict)

        # Half precision is only used on CUDA
        half = device.type != 'cpu'
        if half:
            model.half()
        else:
            model.float()

        # Configure
        model.eval()
        # print(model)
        if isinstance(data, str):
            is_coco = data.endswith('coco.yaml')
            with open(data) as f:
                data = yaml.load(f, Loader=yaml.SafeLoader)
        check_dataset(data)  # check
        nc = 1 if single_cls else int(data['nc'])  # number of classes
        iouv = torch.linspace(0.5, 0.95, 10).to(device)  # iou vector for mAP@0.5:0.95 zjq
        niou = iouv.numel()
        stats_small, stats_medium, stats_large = [], [], []

        # Logging
        log_imgs = 0
        if wandb_logger and wandb_logger.wandb:
            log_imgs = min(wandb_logger.log_imgs, 100)

        if not training:
            task = opt.task if opt.task in ('train', 'val', 'test') else 'val'  # path to train/val/test images
            # Pick the loader that matches the dataset config
            if opt.data.endswith('vedai.yaml') or opt.data.endswith('SRvedai.yaml'):
                make_dataloader = create_dataloader_sr
            else:
                make_dataloader = create_dataloader
            dataloader, dataset = make_dataloader(data[task], imgsz, batch_size, gs, opt, augment=augment, cache=True,
                                                  rect=True, quad=False, prefix=colorstr(f'{task}: '))

        seen = 0
        confusion_matrix = ConfusionMatrix(nc=nc)
        names = {k: v for k, v in enumerate(model.names if hasattr(model, 'names') else model.module.names)}
        coco91class = coco80_to_coco91_class()
        s = ('%20s' + '%12s' * 6) % ('Class', 'Images', 'Labels', 'P', 'R', 'mAP@.5', 'mAP@.5:.95')
        p, r, f1, mp, mr, map50, map, t0, t1 = 0., 0., 0., 0., 0., 0., 0., 0., 0.
        loss = torch.zeros(3, device=device)
        jdict, stats, ap, ap_class, wandb_images = [], [], [], [], []
        small_thresh = 32 * 32  # areas below this are "small"
        medium_thresh = 96 * 96  # areas below this (and not small) are "medium"

        for batch_i, (img, ir, targets, paths, shapes) in enumerate(tqdm(dataloader, desc=s)):  # zjq
            # t1 = time_sync()
            img = img.to(device, non_blocking=True).float()
            img = img.half() if half else img.float()  # uint8 to fp16/32
            img /= 255.0  # 0 - 255 to 0.0 - 1.0
            ir = ir.to(device, non_blocking=True).float()
            # ir = ir.half() if half else ir.float()  # uint8 to fp16/32
            ir /= 255.0  # 0 - 255 to 0.0 - 1.0
            targets = targets.to(device)
            nb, _, height, width = img.shape  # batch size, channels, height, width

            with torch.no_grad():
                # Run model
                t = time_synchronized()
                try:
                    out, train_out = model(img, ir, input_mode=input_mode)  # zjq inference and training outputs
                except ValueError:  # the model returned three outputs instead of two
                    out, train_out, _ = model(img, ir, input_mode=input_mode)  # zjq inference and training outputs
                t0 += time_synchronized() - t

                # Compute loss
                if compute_loss:
                    loss += compute_loss([x.float() for x in train_out], targets)[1][:3]  # box, obj, cls

                # Run NMS
                targets[:, 2:] *= torch.Tensor([width, height, width, height]).to(device)  # to pixels
                lb = [targets[targets[:, 0] == i, 1:] for i in range(nb)] if save_hybrid else []  # for autolabelling
                t = time_synchronized()
                out = non_max_suppression(out, conf_thres=conf_thres, iou_thres=iou_thres, labels=lb, multi_label=True)
                # out = weighted_boxes(out,image_size=imgsz, conf_thres=conf_thres, iou_thres=iou_thres, labels=lb, multi_label=True)
                t1 += time_synchronized() - t

            # Statistics per image
            for si, pred in enumerate(out):
                labels = targets[targets[:, 0] == si, 1:]
                nl = len(labels)
                tcls = labels[:, 0].tolist() if nl else []  # target class
                # path = Path(paths[si])
                path, shape = Path(paths[si]), shapes[si][0]
                seen += 1

                if len(pred) == 0:
                    if nl:
                        stats.append((torch.zeros(0, niou, dtype=torch.bool), torch.Tensor(), torch.Tensor(), tcls))
                        # Calculate the xyxy format of the ground truth
                        tbox = xywh2xyxy(labels[:, 1:5])
                        scale_coords(img[si].shape[1:], tbox, shape, shapes[si][1])
                        gt_boxes = torch.cat((labels[:, 0:1], tbox), 1)
                    else:
                        stats.append((torch.zeros(0, niou, dtype=torch.bool), torch.Tensor(), torch.Tensor(), []))
                        gt_boxes = torch.empty((0, 5), device=img.device)

                    # Divide ground truth by scale
                    if gt_boxes.shape[0] > 0:
                        gt_areas = (gt_boxes[:, 3] - gt_boxes[:, 1]) * (gt_boxes[:, 4] - gt_boxes[:, 2])
                        small_gt_idx = gt_areas < small_thresh
                        medium_gt_idx = (gt_areas >= small_thresh) & (gt_areas < medium_thresh)
                        large_gt_idx = gt_areas >= medium_thresh
                        small_gts = gt_boxes[small_gt_idx]
                        medium_gts = gt_boxes[medium_gt_idx]
                        large_gts = gt_boxes[large_gt_idx]
                    else:
                        small_gts = medium_gts = large_gts = torch.empty((0, 5), device=img.device)

                    stats_small.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                        torch.Tensor(), torch.Tensor(),
                                        small_gts[:, 0].cpu() if small_gts.shape[0] > 0 else torch.Tensor()))
                    stats_medium.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                         torch.Tensor(), torch.Tensor(),
                                         medium_gts[:, 0].cpu() if medium_gts.shape[0] > 0 else torch.Tensor()))
                    stats_large.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                        torch.Tensor(), torch.Tensor(),
                                        large_gts[:, 0].cpu() if large_gts.shape[0] > 0 else torch.Tensor()))
                    continue

                # If there are prediction results, convert them to the original image scale
                if single_cls:
                    pred[:, 5] = 0

                # Predictions
                predn = pred.clone()
                scale_coords(img[si].shape[1:], predn[:, :4], shape, shapes[si][1])  # native-space pred

                if nl:
                    tbox = xywh2xyxy(labels[:, 1:5])
                    scale_coords(img[si].shape[1:], tbox, shape, shapes[si][1])
                    labelsn = torch.cat((labels[:, 0:1], tbox), 1)
                    correct = process_batch(predn, labelsn, iouv)
                    if plots:
                        confusion_matrix.process_batch(predn, labelsn)
                else:
                    correct = torch.zeros(pred.shape[0], niou, dtype=torch.bool)
                stats.append((correct.cpu(), pred[:, 4].cpu(), pred[:, 5].cpu(), tcls))

                # Calculate the statistical information of scale grouping.
                # Predictions and ground truth are bucketed separately, then matched within each bucket.
                if nl:
                    gt_boxes = torch.cat((labels[:, 0:1], tbox), 1)
                else:
                    gt_boxes = torch.empty((0, 5), device=img.device)

                if predn.shape[0] > 0:
                    pred_areas = compute_area(predn[:, :4])
                else:
                    pred_areas = torch.tensor([], device=img.device)
                if gt_boxes.shape[0] > 0:
                    gt_areas = compute_area(gt_boxes[:, 1:5])
                else:
                    gt_areas = torch.tensor([], device=img.device)

                if pred_areas.numel() > 0:
                    small_pred_idx = pred_areas < small_thresh
                    medium_pred_idx = (pred_areas >= small_thresh) & (pred_areas < medium_thresh)
                    large_pred_idx = pred_areas >= medium_thresh
                    small_preds = predn[small_pred_idx]
                    medium_preds = predn[medium_pred_idx]
                    large_preds = predn[large_pred_idx]
                else:
                    small_preds = medium_preds = large_preds = torch.empty((0, 6), device=img.device)

                if gt_boxes.shape[0] > 0:
                    small_gt_idx = gt_areas < small_thresh
                    medium_gt_idx = (gt_areas >= small_thresh) & (gt_areas < medium_thresh)
                    large_gt_idx = gt_areas >= medium_thresh
                    small_gts = gt_boxes[small_gt_idx]
                    medium_gts = gt_boxes[medium_gt_idx]
                    large_gts = gt_boxes[large_gt_idx]
                else:
                    small_gts = medium_gts = large_gts = torch.empty((0, 5), device=img.device)

                # Small
                if small_preds.shape[0] == 0 and small_gts.shape[0] == 0:
                    stats_small.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                        torch.Tensor(), torch.Tensor(), torch.Tensor()))
                else:
                    if small_preds.shape[0] > 0 and small_gts.shape[0] > 0:
                        correct_small = process_batch(small_preds, small_gts, iouv)
                    else:
                        correct_small = torch.zeros(small_preds.shape[0], iouv.shape[0], dtype=torch.bool,
                                                    device=iouv.device)
                    stats_small.append((correct_small.cpu(),
                                        small_preds[:, 4].cpu() if small_preds.shape[0] > 0 else torch.Tensor(),
                                        small_preds[:, 5].cpu() if small_preds.shape[0] > 0 else torch.Tensor(),
                                        small_gts[:, 0].cpu() if small_gts.shape[0] > 0 else torch.Tensor()))

                # Medium
                if medium_preds.shape[0] == 0 and medium_gts.shape[0] == 0:
                    stats_medium.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                         torch.Tensor(), torch.Tensor(), torch.Tensor()))
                else:
                    if medium_preds.shape[0] > 0 and medium_gts.shape[0] > 0:
                        correct_medium = process_batch(medium_preds, medium_gts, iouv)
                    else:
                        correct_medium = torch.zeros(medium_preds.shape[0], iouv.shape[0], dtype=torch.bool,
                                                     device=iouv.device)
                    stats_medium.append((correct_medium.cpu(),
                                         medium_preds[:, 4].cpu() if medium_preds.shape[0] > 0 else torch.Tensor(),
                                         medium_preds[:, 5].cpu() if medium_preds.shape[0] > 0 else torch.Tensor(),
                                         medium_gts[:, 0].cpu() if medium_gts.shape[0] > 0 else torch.Tensor()))

                # Large
                if large_preds.shape[0] == 0 and large_gts.shape[0] == 0:
                    stats_large.append((torch.zeros(0, iouv.shape[0], dtype=torch.bool),
                                        torch.Tensor(), torch.Tensor(), torch.Tensor()))
                else:
                    if large_preds.shape[0] > 0 and large_gts.shape[0] > 0:
                        correct_large = process_batch(large_preds, large_gts, iouv)
                    else:
                        correct_large = torch.zeros(large_preds.shape[0], iouv.shape[0], dtype=torch.bool,
                                                    device=iouv.device)
                    stats_large.append((correct_large.cpu(),
                                        large_preds[:, 4].cpu() if large_preds.shape[0] > 0 else torch.Tensor(),
                                        large_preds[:, 5].cpu() if large_preds.shape[0] > 0 else torch.Tensor(),
                                        large_gts[:, 0].cpu() if large_gts.shape[0] > 0 else torch.Tensor()))
                # end of scale grouping for this image

                # Append to text file
                if save_txt:
                    gn = torch.tensor(shapes[si][0])[[1, 0, 1, 0]]  # normalization gain whwh
                    for *xyxy, conf, cls in predn.tolist():
                        xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist()  # normalized xywh
                        line = (cls, *xywh, conf) if save_conf else (cls, *xywh)  # label format
                        with open(save_dir / 'labels' / (path.stem + '.txt'), 'a') as f:
                            f.write(('%g ' * len(line)).rstrip() % line + '\n')

                # W&B logging - Media Panel Plots
                if len(wandb_images) < log_imgs and wandb_logger.current_epoch > 0:  # Check for test operation
                    if wandb_logger.current_epoch % wandb_logger.bbox_interval == 0:
                        box_data = [{"position": {"minX": xyxy[0], "minY": xyxy[1], "maxX": xyxy[2], "maxY": xyxy[3]},
                                     "class_id": int(cls),
                                     "box_caption": "%s %.3f" % (names[cls], conf),
                                     "scores": {"class_score": conf},
                                     "domain": "pixel"} for *xyxy, conf, cls in pred.tolist()]
                        boxes = {"predictions": {"box_data": box_data, "class_labels": names}}  # inference-space
                        wandb_images.append(wandb_logger.wandb.Image(img[si], boxes=boxes, caption=path.name))
                wandb_logger.log_training_progress(predn, path, names) if wandb_logger and wandb_logger.wandb_run else None

                # Append to pycocotools JSON dictionary
                if save_json:
                    # [{"image_id": 42, "category_id": 18, "bbox": [258.15, 41.29, 348.26, 243.78], "score": 0.236}, ...
                    image_id = int(path.stem) if path.stem.isnumeric() else path.stem
                    box = xyxy2xywh(predn[:, :4])  # xywh
                    box[:, :2] -= box[:, 2:] / 2  # xy center to top-left corner
                    for p, b in zip(pred.tolist(), box.tolist()):
                        jdict.append({'image_id': image_id,
                                      'category_id': coco91class[int(p[5])] if is_coco else int(p[5]),
                                      'bbox': [round(x, 3) for x in b],
                                      'score': round(p[4], 5)})

            # Plot images
            if plots:  # and batch_i < 3:  # zjq
                f = save_dir / f'test_batch{batch_i}_labels.png'  # labels
                # f = '/home/data/zhangjiaqing/dataset/VEDAI/train_label/'+paths[0].split('/')[-1].replace('_co','_label')  # zjq
                if input_mode == 'IR':
                    Thread(target=plot_images, args=(ir, targets, paths, f, names), daemon=True).start()
                else:
                    Thread(target=plot_images, args=(img, targets, paths, f, names), daemon=True).start()
                f = save_dir / f'test_batch{batch_i}_pred.png'  # predictions
                if input_mode == 'IR':
                    Thread(target=plot_images, args=(ir, output_to_target(out), paths, f, names), daemon=True).start()
                else:
                    Thread(target=plot_images, args=(img, output_to_target(out), paths, f, names), daemon=True).start()

        # Compute statistics
        stats = [np.concatenate(x, 0) for x in zip(*stats)]  # to numpy
        if len(stats) and stats[0].any():
            p, r, ap, f1, ap_class = ap_per_class(*stats, plot=plots, save_dir=save_dir, names=names)
            ap50, ap = ap[:, 0], ap.mean(1)  # AP@0.5, AP@0.5:0.95
            mp, mr, map50, map = p.mean(), r.mean(), ap50.mean(), ap.mean()
            nt = np.bincount(stats[3].astype(np.int64), minlength=nc)  # number of targets per class
        else:
            nt = torch.zeros(1)

        # Calculate s-m-l mAP (column 0 of the AP matrix, i.e. AP at IoU 0.5)
        if len(stats_small) and np.concatenate(list(zip(*stats_small))[0]).size > 0:
            stats_small_agg = [np.concatenate(x, 0) for x in zip(*stats_small)]
            p_small, r_small, ap_small, f1_small, ap_class_small = ap_per_class(*stats_small_agg, plot=False, names=names)
            mAP_small = ap_small[:, 0].mean()
        else:
            mAP_small = 0.0

        if len(stats_medium) and np.concatenate(list(zip(*stats_medium))[0]).size > 0:
            stats_medium_agg = [np.concatenate(x, 0) for x in zip(*stats_medium)]
            p_medium, r_medium, ap_medium, f1_medium, ap_class_medium = ap_per_class(*stats_medium_agg, plot=False, names=names)
            mAP_medium = ap_medium[:, 0].mean()
        else:
            mAP_medium = 0.0

        if len(stats_large) and np.concatenate(list(zip(*stats_large))[0]).size > 0:
            stats_large_agg = [np.concatenate(x, 0) for x in zip(*stats_large)]
            p_large, r_large, ap_large, f1_large, ap_class_large = ap_per_class(*stats_large_agg, plot=False, names=names)
            mAP_large = ap_large[:, 0].mean()
        else:
            mAP_large = 0.0

        # Print results
        pf = '%20s' + '%12i' * 2 + '%12.4g' * 4  # print format
        print(pf % ('all', seen, nt.sum(), mp, mr, map50, map))
        # with open("trying.txt", 'a+') as f:
        #     f.write((pf % ('all', seen, nt.sum(), mp, mr, map50, map)) + '\n')  # append metrics, val_loss

        # Print results per class
        if (verbose or (nc < 50 and not training)) and nc > 1 and len(stats):
            for i, c in enumerate(ap_class):
                print(pf % (names[c], seen, nt[c], p[i], r[i], ap50[i], ap[i]))

        # Print s-m-l scale mAP
        print("\nScale-based mAP results:")
        print(f" Small objects mAP@.5: {mAP_small:.4f}")
        print(f" Medium objects mAP@.5: {mAP_medium:.4f}")
        print(f" Large objects mAP@.5: {mAP_large:.4f}")

        # Print speeds
        t = tuple(x / seen * 1E3 for x in (t0, t1, t0 + t1)) + (imgsz, imgsz, batch_size)  # tuple
        if not training:
            print('Speed: %.3f/%.3f/%.3f ms inference/NMS/total per %gx%g image at batch-size %g' % t)
            # t[2] is already inference + NMS per image; summing t[0] + t[1] + t[2] would count the time twice
            fps = 1000 / t[2]
            print(f'fps = {fps:.2f}')

        # Plots
        if plots:
            confusion_matrix.plot(save_dir=save_dir, names=list(names.values()))
            if wandb_logger and wandb_logger.wandb:
                val_batches = [wandb_logger.wandb.Image(str(f), caption=f.name) for f in sorted(save_dir.glob('test*.jpg'))]
                wandb_logger.log({"Validation": val_batches})
        if wandb_images:
            wandb_logger.log({"Bounding Box Debugger/Images": wandb_images})

        # Save JSON
        if save_json and len(jdict):
            w = Path(weights[0] if isinstance(weights, list) else weights).stem if weights is not None else ''  # weights
            anno_json = '../coco/annotations/instances_val2017.json'  # annotations json
            pred_json = str(save_dir / f"{w}_predictions.json")  # predictions json
            print('\nEvaluating pycocotools mAP... saving %s...' % pred_json)
            with open(pred_json, 'w') as f:
                json.dump(jdict, f)

            try:  # https://github.com/cocodataset/cocoapi/blob/master/PythonAPI/pycocoEvalDemo.ipynb
                from pycocotools.coco import COCO
                from pycocotools.cocoeval import COCOeval

                anno = COCO(anno_json)  # init annotations api
                pred = anno.loadRes(pred_json)  # init predictions api
                eval = COCOeval(anno, pred, 'bbox')
                if is_coco:
                    eval.params.imgIds = [int(Path(x).stem) for x in dataloader.dataset.img_files]  # image IDs to evaluate
                eval.evaluate()
                eval.accumulate()
                eval.summarize()
                map, map50 = eval.stats[:2]  # update results (mAP@0.5:0.95, mAP@0.5)
            except Exception as e:
                print(f'pycocotools unable to run: {e}')

        # Return results
        model.float()  # for training
        if not training:
            s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else ''
            print(f"Results saved to {save_dir}{s}")
        maps = np.zeros(nc) + map
        for i, c in enumerate(ap_class):
            maps[c] = ap[i]
        return (mp, mr, map50, map, *(loss.cpu() / len(dataloader)).tolist()), maps, t


    if __name__ == '__main__':
        parser = argparse.ArgumentParser(prog='test.py')
        parser.add_argument('--weights', nargs='+', type=str, default='/root/SuperYOLO-main/runs/train/exp20/weights/best.pt', help='model.pt path(s)')
        parser.add_argument('--data', type=str, default='/root/SuperYOLO-main/visDrone-Copy1.yaml', help='*.data path')
        parser.add_argument('--batch-size', type=int, default=8, help='size of each image batch')
        parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')
        parser.add_argument('--input_mode', type=str, default='RGB')  # RGB IR RGB+IR RGB+IR+fusion
        parser.add_argument('--conf-thres', type=float, default=0.001, help='object confidence threshold')
        parser.add_argument('--iou-thres', type=float, default=0.6, help='IOU threshold for NMS')
        parser.add_argument('--task', default='val', help='train, val, test, speed or study')
        parser.add_argument('--device', default='0', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
        parser.add_argument('--single-cls', action='store_true', help='treat as single-class dataset')
        parser.add_argument('--augment', action='store_true', help='augmented inference')
        parser.add_argument('--verbose', action='store_true', help='report mAP by class')
        parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')
        parser.add_argument('--save-hybrid', action='store_true', help='save label+prediction hybrid results to *.txt')
        parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')
        parser.add_argument('--save-json', action='store_true', help='save a cocoapi-compatible JSON results file')
        parser.add_argument('--project', default='runs/test', help='save to project/name')
        parser.add_argument('--name', default='exp', help='save to project/name')
        parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
        opt = parser.parse_args()
        opt.save_json |= opt.data.endswith('coco.yaml')
        opt.data = check_file(opt.data)  # check file
        print(opt)
        check_requirements()

        if opt.task in ('train', 'val', 'test'):  # run normally
            test(opt.data,
                 opt.weights,
                 opt.batch_size,
                 opt.img_size,
                 opt.input_mode,
                 opt.conf_thres,
                 opt.iou_thres,
                 opt.save_json,
                 opt.single_cls,
                 opt.augment,
                 opt.verbose,
                 save_txt=opt.save_txt | opt.save_hybrid,
                 save_hybrid=opt.save_hybrid,
                 save_conf=opt.save_conf,
                 )

        elif opt.task == 'speed':  # speed benchmarks
            for w in opt.weights:
                # input_mode must be passed explicitly, otherwise 0.25 lands in the input_mode slot
                test(opt.data, w, opt.batch_size, opt.img_size, opt.input_mode, 0.25, 0.45, save_json=False, plots=False)

        elif opt.task == 'study':  # run over a range of settings and save/plot
            # python test.py --task study --data coco.yaml --iou 0.7 --weights yolov5s.pt yolov5m.pt yolov5l.pt yolov5x.pt
            x = list(range(256, 1536 + 128, 128))  # x axis (image sizes)
            for w in opt.weights:
                f = f'study_{Path(opt.data).stem}_{Path(w).stem}.txt'  # filename to save to
                y = []  # y axis
                for i in x:  # img-size
                    print(f'\nRunning {f} point {i}...')
                    r, _, t = test(opt.data, w, opt.batch_size, i, opt.input_mode, opt.conf_thres, opt.iou_thres,
                                   opt.save_json, plots=False)
                    y.append(r + t)  # results and times
                np.savetxt(f, y, fmt='%10.4g')  # save
            os.system('zip -r study.zip study_*.txt')
            plot_study_txt(x=x)  # plot

Ramblings:

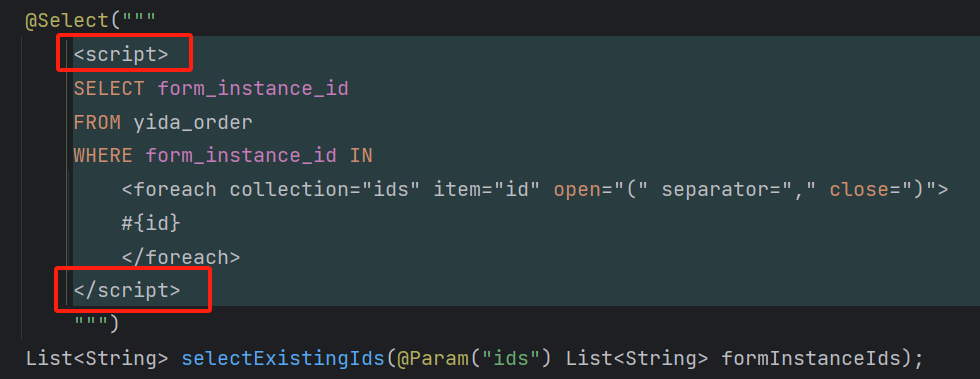

Since SuperYOLO is a model built on top of a YOLO baseline, you will probably run into all kinds of strange problems the first time you use it.

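A fair share of them come from the repository not having been updated for a long time, and fixes for many of the resulting errors can be found in the issues of the official YOLOv5 GitHub repository.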

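As for the rest, the one I would call a "quit at the door" problem is the odd way the dataset is loaded. My personal advice: if, like me, you only need the RGB branch, the least painful route is to change the code in datasets.py back to the classic YOLOv5 form, which sidesteps a lot of weird problems.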

That said, in terms of results SuperYOLO is still quite good, even without the "RGB+IR" dual-branch structure (results on the VisDrone2019 dataset):

![image 2](https://img2024.cnblogs.com/blog/3619440/202503/3619440-20250331132221464-811514626.png)