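1. Set the hostname on each physical machine in the cluster (the /etc/hostname file)

# View the current hostname

cat /etc/hostname

# Change it with a command (run on every machine with that machine's own name)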

hostnamectl set-hostname k8s-master

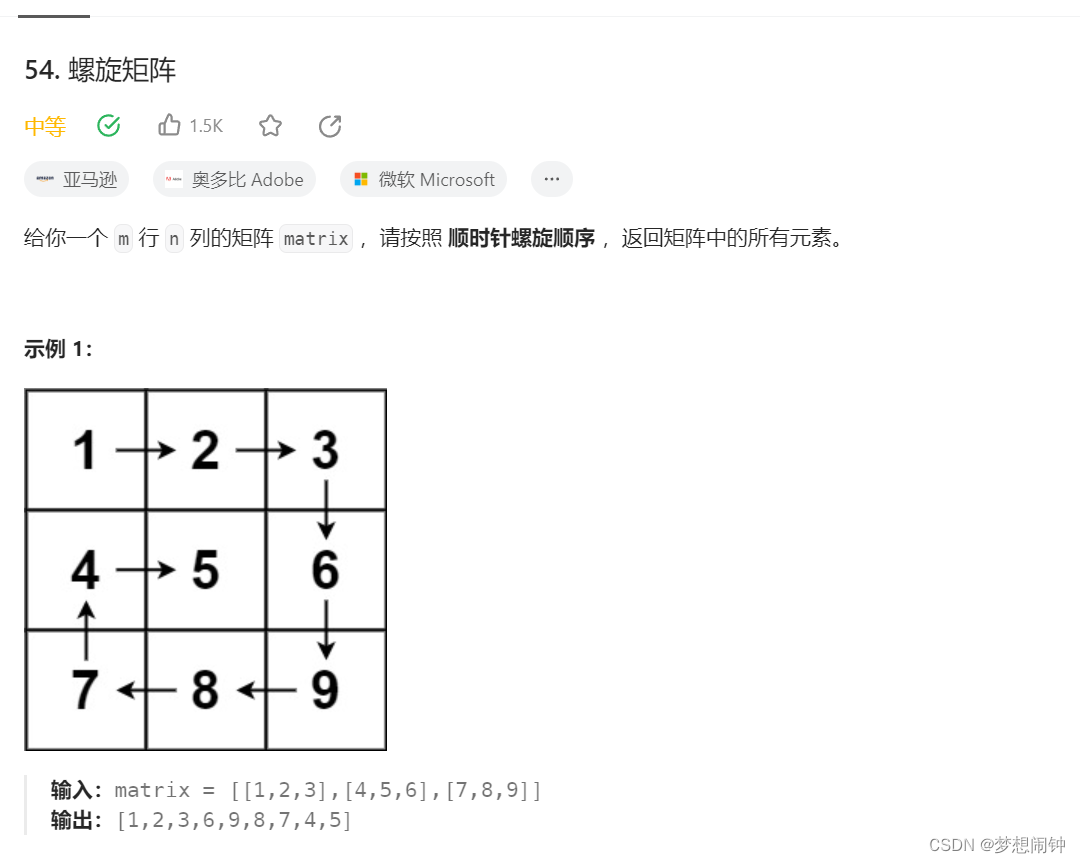

2. Map hostnames to IP addresses

# View

cat /etc/hosts

# Edit

vi /etc/hosts
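The entries added in this step could look like the following. This is a minimal sketch: the pairing of the addresses 192.168.149.200/205/210 (taken from step 12) with k8s-master, k8s-node1 and k8s-node2 is an assumption, and `add_host_entry` is a hypothetical helper. It writes to a scratch file rather than /etc/hosts so it can be tried safely.

```bash
#!/usr/bin/env bash
# Append "IP hostname" to a hosts file only if that hostname is not mapped yet.
# add_host_entry is a hypothetical helper, not part of any tool.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # -w matches the hostname as a whole word; -q keeps grep silent
  if ! grep -qw -- "$name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Scratch file; point HOSTS_FILE at /etc/hosts (as root) to apply for real.
HOSTS_FILE="$(mktemp)"
printf '127.0.0.1 localhost\n' > "$HOSTS_FILE"

# Assumed IP-to-hostname mapping
add_host_entry "$HOSTS_FILE" 192.168.149.200 k8s-master
add_host_entry "$HOSTS_FILE" 192.168.149.205 k8s-node1
add_host_entry "$HOSTS_FILE" 192.168.149.210 k8s-node2
add_host_entry "$HOSTS_FILE" 192.168.149.200 k8s-master   # repeated call adds nothing

cat "$HOSTS_FILE"
```

The same entries need to be present on every node so that each machine can resolve the others by name.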

3. Configure IP forwarding and bridge traffic filtering

# Edit the file and add the three settings below

vi /etc/sysctl.conf

net.ipv4.ip_forward=1

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

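# Apply the settings and print them

sysctl -p

# If sysctl -p reports an error, load the br_netfilter module, then run sysctl -p again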

modprobe br_netfilter
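Editing the file by hand works, but the same three settings can also be written idempotently. The sketch below assumes GNU sed (as shipped with CentOS) and uses a hypothetical helper, `set_sysctl_kv`, on a scratch file instead of /etc/sysctl.conf.

```bash
#!/usr/bin/env bash
# Set key=value in a sysctl-style file: rewrite the line if the key exists,
# append it otherwise. set_sysctl_kv is a hypothetical helper.
set_sysctl_kv() {
  local file="$1" key="$2" value="$3"
  touch "$file"
  if grep -q "^[[:space:]]*${key}[[:space:]]*=" "$file"; then
    sed -i "s|^[[:space:]]*${key}[[:space:]]*=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Scratch file that starts with forwarding switched off
CONF="$(mktemp)"
printf 'net.ipv4.ip_forward=0\n' > "$CONF"

set_sysctl_kv "$CONF" net.ipv4.ip_forward 1
set_sysctl_kv "$CONF" net.bridge.bridge-nf-call-ip6tables 1
set_sysctl_kv "$CONF" net.bridge.bridge-nf-call-iptables 1

cat "$CONF"
# To apply for real (as root), use /etc/sysctl.conf as the file and then run:
#   modprobe br_netfilter && sysctl -p
```

The two net.bridge.* keys only exist once br_netfilter is loaded, which is why `sysctl -p` fails without the module.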

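4. Disable the firewall

# Stop the firewall

systemctl stop firewalld

# Prevent the firewall from starting at boot

systemctl disable firewalld

# Check the firewall service status

systemctl status firewalld

# Check the firewall state with firewall-cmd

firewall-cmd --state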

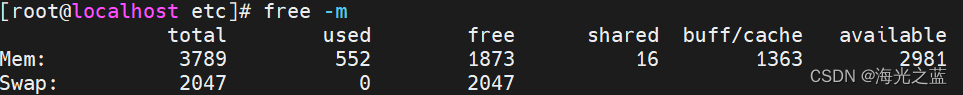

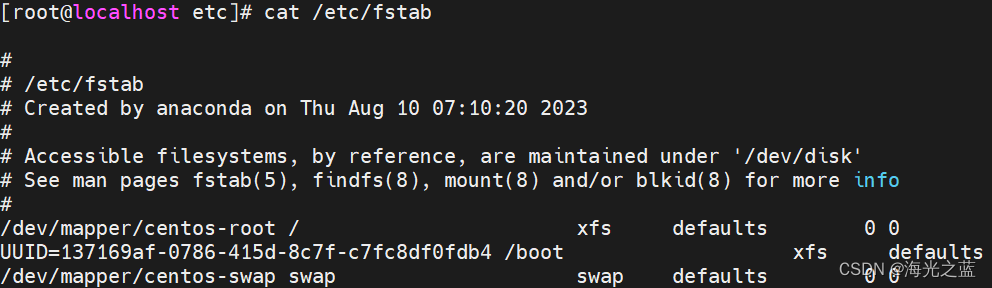

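5. Disable swap

# Check swap usage

free -m

# View the swap entry in fstab

cat /etc/fstab

# Comment out the swap entry in fstab so that swap stays off after a reboot

vi /etc/fstab

# Turn swap off immediately on the running system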

swapoff -a
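Commenting out the swap entry can also be scripted. The sketch below assumes GNU sed; `comment_out_swap` is a hypothetical helper and the two fstab lines are illustrative sample data, not taken from a real machine.

```bash
#!/usr/bin/env bash
# Comment out every active swap entry in an fstab-style file (GNU sed assumed).
# comment_out_swap is a hypothetical helper.
comment_out_swap() {
  local file="$1"
  # Target uncommented lines that contain the field "swap" between whitespace
  sed -i -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$file"
}

# Illustrative sample fstab in a scratch file
FSTAB="$(mktemp)"
cat > "$FSTAB" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

comment_out_swap "$FSTAB"
cat "$FSTAB"
# For real use (as root): comment_out_swap /etc/fstab && swapoff -a
```

Lines that are already commented are skipped, so running the helper again leaves the file unchanged.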

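6. Set up time synchronization

# Check the current time

date

# Install the ntpdate tool

yum -y install ntpdate

# Edit the crontab

crontab -e

# Crontab entry: synchronize at minute 0 of every hour

0 */1 * * * ntpdate ntp.aliyun.com

# Verify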

crontab -l
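In the entry above, `*/1` in the hour field matches every hour, so the job runs at minute 0 of each hour. When the entry has to be added on several nodes, a non-interactive approach avoids duplicates. This is a sketch only: `merge_cron_entry` is a hypothetical helper that filters crontab text passed on stdin.

```bash
#!/usr/bin/env bash
# Read an existing crontab on stdin and print it with the given entry appended,
# unless an identical line is already there. merge_cron_entry is hypothetical.
merge_cron_entry() {
  local entry="$1" current
  current="$(cat)"
  if [ -n "$current" ]; then
    printf '%s\n' "$current"
  fi
  # -F: fixed string, -x: whole-line match
  if ! printf '%s\n' "$current" | grep -Fxq -- "$entry"; then
    printf '%s\n' "$entry"
  fi
}

ENTRY='0 */1 * * * ntpdate ntp.aliyun.com'

# Demonstration on text only; for the real crontab the assumed usage is:
#   crontab -l 2>/dev/null | merge_cron_entry "$ENTRY" | crontab -
once="$(printf '' | merge_cron_entry "$ENTRY")"
twice="$(printf '%s\n' "$once" | merge_cron_entry "$ENTRY")"
printf '%s\n' "$twice"
```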

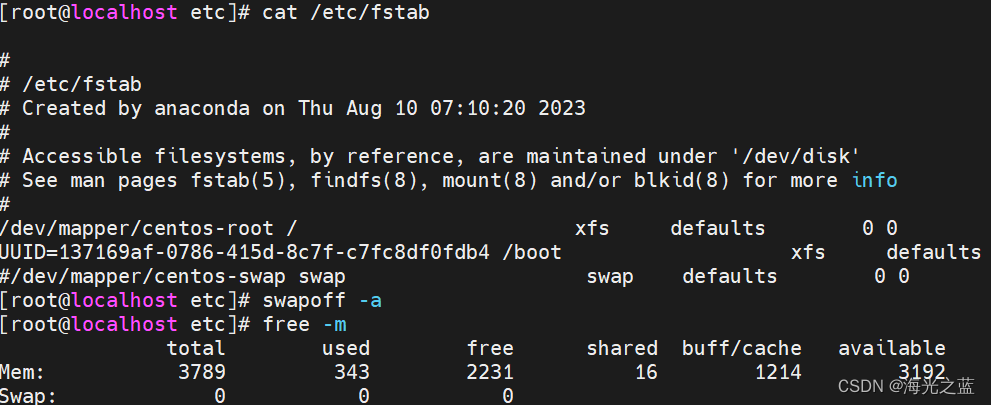

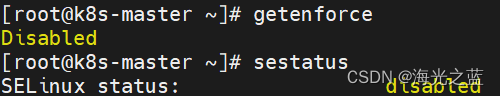

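7. Disable SELinux

# Check the current mode

getenforce

# Check the detailed status

sestatus

# Edit the configuration file and set the following value

vi /etc/selinux/config

SELINUX=disabled

After rebooting the system: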

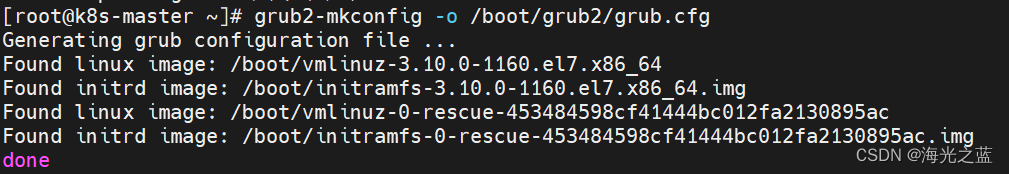
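The edit to /etc/selinux/config can be made without opening an editor. A minimal sketch, assuming GNU sed; `set_selinux_mode` is a hypothetical helper and the sample file content is illustrative.

```bash
#!/usr/bin/env bash
# Rewrite the SELINUX= line of an SELinux config file (GNU sed assumed).
# set_selinux_mode is a hypothetical helper.
set_selinux_mode() {
  local file="$1" mode="$2"
  # "^SELINUX=" does not match the SELINUXTYPE= line
  sed -i -E "s/^SELINUX=.*/SELINUX=${mode}/" "$file"
}

# Illustrative sample in a scratch file
CFG="$(mktemp)"
cat > "$CFG" <<'EOF'
# This file controls the state of SELinux on the system.
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

set_selinux_mode "$CFG" disabled
grep '^SELINUX' "$CFG"
# For real use (as root): set_selinux_mode /etc/selinux/config disabled
# "setenforce 0" switches to permissive mode until the next reboot.
```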

8. Enable cgroups: edit /etc/default/grub to turn on cgroup memory accounting by setting two parameters:

vi /etc/default/grub

GRUB_CMDLINE_LINUX_DEFAULT="cgroup_enable=memory swapaccount=1"

GRUB_CMDLINE_LINUX="cgroup_enable=memory swapaccount=1"

# Regenerate the GRUB configuration (the new kernel parameters take effect after a reboot)

grub2-mkconfig -o /boot/grub2/grub.cfg

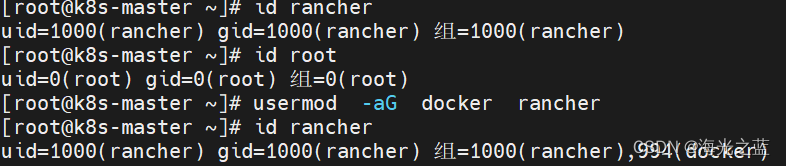
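Setting GRUB_CMDLINE_LINUX as shown replaces whatever kernel arguments the line held before. If the existing arguments should be kept, the new ones can be appended instead. This is a sketch under assumptions: GNU sed, a hypothetical helper `append_kernel_args`, and an illustrative sample file.

```bash
#!/usr/bin/env bash
# Append kernel arguments to GRUB_CMDLINE_LINUX, keeping what is already there.
# append_kernel_args is a hypothetical helper.
append_kernel_args() {
  local file="$1" args="$2"
  # Nothing to do if the arguments are already on the line
  if grep -q "^GRUB_CMDLINE_LINUX=\".*${args}.*\"" "$file"; then
    return 0
  fi
  sed -i -E "s|^GRUB_CMDLINE_LINUX=\"(.*)\"|GRUB_CMDLINE_LINUX=\"\1 ${args}\"|" "$file"
}

# Illustrative sample in a scratch file
GRUB="$(mktemp)"
cat > "$GRUB" <<'EOF'
GRUB_TIMEOUT=5
GRUB_CMDLINE_LINUX="crashkernel=auto rhgb quiet"
EOF

append_kernel_args "$GRUB" "cgroup_enable=memory swapaccount=1"
append_kernel_args "$GRUB" "cgroup_enable=memory swapaccount=1"   # second call is a no-op
cat "$GRUB"
# For real use (as root): run it on /etc/default/grub, then
#   grub2-mkconfig -o /boot/grub2/grub.cfg
```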

9. Add the rancher user

# Create the user

useradd -m rancher

# Add the user to the docker group

usermod -aG docker rancher

# Set the user's password

passwd rancher

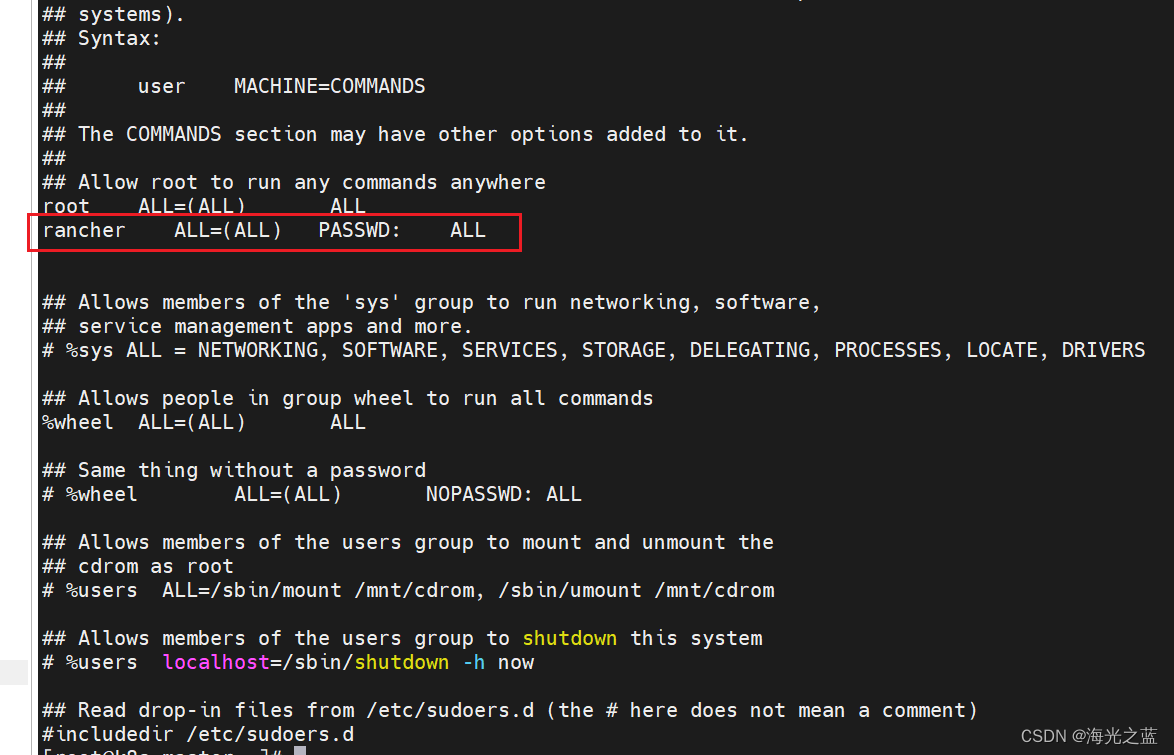

# If the rancher user needs sudo privileges, edit /etc/sudoers with visudo

visudo

Choose one of the following four lines as needed:

youuser ALL=(ALL) ALL

%youuser ALL=(ALL) ALL

youuser ALL=(ALL) NOPASSWD: ALL

%youuser ALL=(ALL) NOPASSWD: ALL

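Line 1: allows the user youuser to run sudo commands (password required).

Line 2: allows members of the group youuser to run sudo commands (password required).

Line 3: allows the user youuser to run sudo commands without entering a password.

Line 4: allows members of the group youuser to run sudo commands without entering a password.

Reference: "Granting sudo privileges to a regular user on Linux"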
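The four variants differ only in the `%` prefix (group instead of user) and the `NOPASSWD:` tag. The sketch below generates them for the rancher user; `sudoers_rule` is a hypothetical helper, and the drop-in file name in the comments is an assumption.

```bash
#!/usr/bin/env bash
# Print a sudoers rule. kind is "user" or "group"; nopasswd is "yes" or "no".
# sudoers_rule is a hypothetical helper.
sudoers_rule() {
  local name="$1" kind="$2" nopasswd="$3" subject tag=""
  if [ "$kind" = "group" ]; then subject="%${name}"; else subject="${name}"; fi
  if [ "$nopasswd" = "yes" ]; then tag="NOPASSWD: "; fi
  printf '%s ALL=(ALL) %sALL\n' "$subject" "$tag"
}

sudoers_rule rancher user no     # line 1
sudoers_rule rancher group no    # line 2
sudoers_rule rancher user yes    # line 3
sudoers_rule rancher group yes   # line 4

# One cautious way to install a rule (as root; assumes /etc/sudoers includes
# /etc/sudoers.d, and the file name "rancher" is an assumption):
#   sudoers_rule rancher user yes > /etc/sudoers.d/rancher
#   visudo -cf /etc/sudoers.d/rancher   # syntax check
```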

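10. Configure SSH (switch to the rancher user first)

# Switch to the rancher user

su rancher

# Generate an SSH key pair on the master

ssh-keygen

# Copy the public key to every node, including the master itself

cd ~/.ssh/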

ssh-copy-id rancher@k8s-master

ssh-copy-id rancher@k8s-node1

ssh-copy-id rancher@k8s-node2

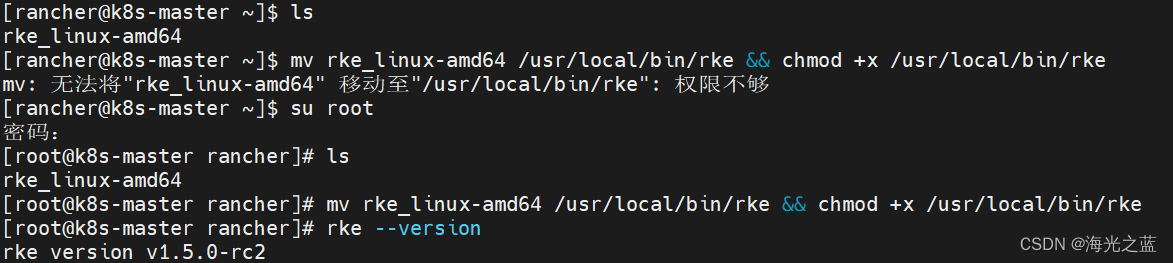
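With more nodes, the three ssh-copy-id calls can be written as a loop. A minimal sketch: the node list repeats the hostnames used above, `copy_key_to_nodes` is a hypothetical helper, and by default it only prints the commands (dry run) so nothing is contacted.

```bash
#!/usr/bin/env bash
NODES="k8s-master k8s-node1 k8s-node2"
SSH_USER="rancher"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands (default in this sketch)

# Copy the current user's public key to every node in NODES.
# copy_key_to_nodes is a hypothetical helper.
copy_key_to_nodes() {
  local node
  for node in $NODES; do
    if [ "$DRY_RUN" = "1" ]; then
      echo "ssh-copy-id ${SSH_USER}@${node}"
    else
      ssh-copy-id "${SSH_USER}@${node}"
    fi
  done
}

copy_key_to_nodes
# Run with DRY_RUN=0 as the rancher user to copy the key for real.
```

RKE connects to every node over SSH with this key, so a password-free login from the master to each node is worth checking before moving on.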

11. Download the RKE binary

https://github.com/rancher/rke/releases

After downloading, upload it to the master.

Switch to the root user and run:

su root

mv rke_linux-amd64 /usr/local/bin/rke && chmod +x /usr/local/bin/rke

rke --version

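12. As the rancher user, generate the configuration file that RKE uses to install the k8s cluster

# In the rancher user's home directory (/home/rancher), create the cluster deployment file cluster.yml

rke config --name cluster.yml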

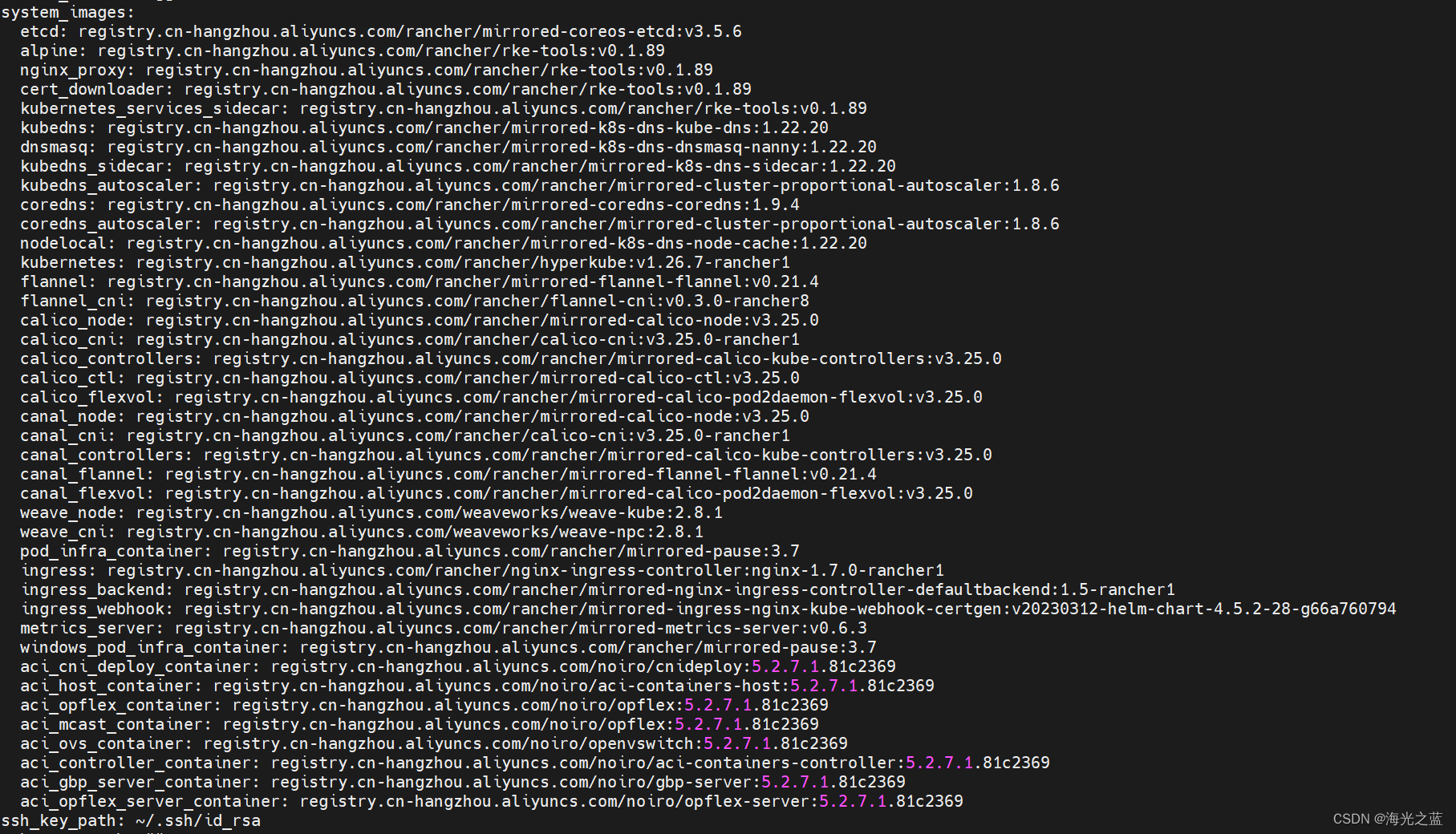

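# By default the generated file pulls images from Docker Hub; replace them with images from the Alibaba Cloud registry

The default registry configuration is as follows:

Official configuration reference

Generating the cluster.yml file with the command: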

[+] Cluster Level SSH Private Key Path [~/.ssh/id_rsa]:  (cluster private key path: ~/.ssh/id_rsa)
[+] Number of Hosts [1]: 3  (number of nodes in the cluster: 3)

[+] SSH Address of host (1) [none]: 192.168.149.200  (IP address of the first node)
[+] SSH Port of host (1) [22]: 22  (SSH port of the first node)
[+] SSH Private Key Path of host (192.168.149.200) [none]: ~/.ssh/id_rsa  (private key path for the first node)
[+] SSH User of host (192.168.149.200) [ubuntu]: rancher  (remote user name: rancher)
[+] Is host (192.168.149.200) a Control Plane host (y/n)? [y]: y  (control plane node: y)
[+] Is host (192.168.149.200) a Worker host (y/n)? [n]: n  (worker node: n)
[+] Is host (192.168.149.200) an etcd host (y/n)? [n]: n  (etcd node: n)
[+] Override Hostname of host (192.168.149.200) [none]:  (do not override the hostname: press Enter)
[+] Internal IP of host (192.168.149.200) [none]:  (internal LAN address: unchanged, press Enter)
[+] Docker socket path on host (192.168.149.200) [/var/run/docker.sock]: /var/run/docker.sock  (path to docker.sock on the host)

[+] SSH Address of host (2) [none]: 192.168.149.205  (IP address of the second node)
[+] SSH Port of host (2) [22]: 22  (SSH port of the second node)
[+] SSH Private Key Path of host (192.168.149.205) [none]: ~/.ssh/id_rsa  (private key path for the second node)
[+] SSH User of host (192.168.149.205) [ubuntu]: rancher  (remote user name for the second node: rancher)
[+] Is host (192.168.149.205) a Control Plane host (y/n)? [y]: n  (control plane node: n)
[+] Is host (192.168.149.205) a Worker host (y/n)? [n]: y  (worker node: y)
[+] Is host (192.168.149.205) an etcd host (y/n)? [n]: n  (etcd node: n)
[+] Override Hostname of host (192.168.149.205) [none]:  (do not override the hostname: press Enter)
[+] Internal IP of host (192.168.149.205) [none]:  (internal LAN address: unchanged, press Enter)
[+] Docker socket path on host (192.168.149.205) [/var/run/docker.sock]: /var/run/docker.sock  (path to docker.sock on the host)

[+] SSH Address of host (3) [none]: 192.168.149.210  (IP address of the third node)
[+] SSH Port of host (3) [22]: 22  (SSH port of the third node)
[+] SSH Private Key Path of host (192.168.149.210) [none]: ~/.ssh/id_rsa  (private key path for the third node)
[+] SSH User of host (192.168.149.210) [ubuntu]: rancher  (remote user name for the third node: rancher)
[+] Is host (192.168.149.210) a Control Plane host (y/n)? [y]: n  (control plane node: n)
[+] Is host (192.168.149.210) a Worker host (y/n)? [n]: n  (worker node: n)
[+] Is host (192.168.149.210) an etcd host (y/n)? [n]: y  (etcd node: y)
[+] Override Hostname of host (192.168.149.210) [none]:  (do not override the hostname: press Enter)
[+] Internal IP of host (192.168.149.210) [none]:  (internal LAN address: unchanged, press Enter)
[+] Docker socket path on host (192.168.149.210) [/var/run/docker.sock]: /var/run/docker.sock  (path to docker.sock on the host)

[+] Network Plugin Type (flannel, calico, weave, canal, aci) [canal]: calico  (network plugin: your choice; calico is used here)
[+] Authentication Strategy [x509]:  (authentication strategy: x509)
[+] Authorization Mode (rbac, none) [rbac]: rbac  (authorization mode: rbac)
[+] Kubernetes Docker image [rancher/hyperkube:v1.25.9-rancher2]: rancher/hyperkube:v1.25.9-rancher2  (Docker image used by the k8s cluster)
[+] Cluster domain [cluster.local]: sbcinfo.com  (cluster domain: the default is also fine)
[+] Service Cluster IP Range [10.43.0.0/16]:  (service cluster IP range: the default is fine)
[+] Enable PodSecurityPolicy [n]:  (enable the pod security policy: n)
[+] Cluster Network CIDR [10.42.0.0/16]:  (pod IP range: the default is fine)
[+] Cluster DNS Service IP [10.43.0.10]:  (cluster DNS service IP: the default is fine)
[+] Add addon manifest URLs or YAML files [no]:  (addon manifest URLs or YAML files: press Enter or answer no)

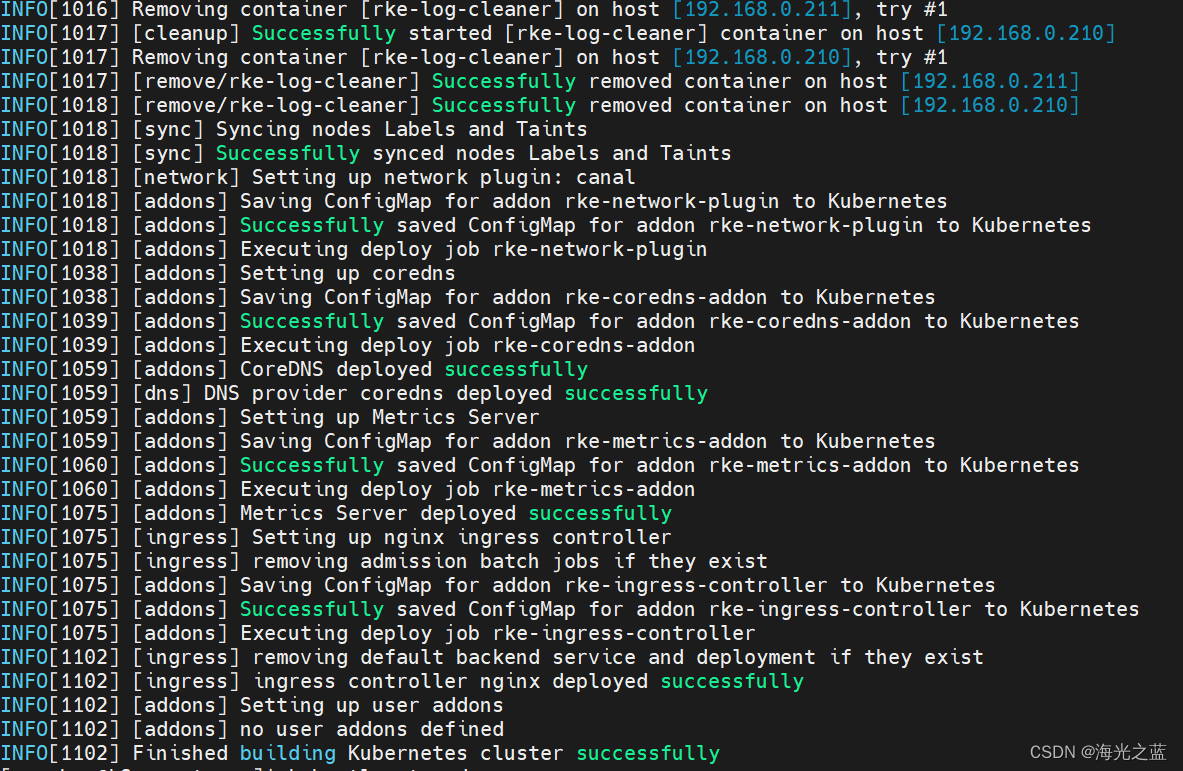
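The answers given at the prompts end up as keys in cluster.yml. The sketch below writes a trimmed-down file with only the node roles, the network plugin and the authorization mode, on the assumption that RKE fills in defaults for keys that are left out. It is not the full file that `rke config` generates, and it is written to a scratch directory.

```bash
#!/usr/bin/env bash
# Write a trimmed-down cluster.yml that mirrors the prompt answers above.
OUT_DIR="$(mktemp -d)"
cat > "$OUT_DIR/cluster.yml" <<'EOF'
# Sketch only: keys not listed here are assumed to take RKE defaults
nodes:
- address: 192.168.149.200
  port: "22"
  user: rancher
  role:
  - controlplane
  ssh_key_path: ~/.ssh/id_rsa
- address: 192.168.149.205
  port: "22"
  user: rancher
  role:
  - worker
  ssh_key_path: ~/.ssh/id_rsa
- address: 192.168.149.210
  port: "22"
  user: rancher
  role:
  - etcd
  ssh_key_path: ~/.ssh/id_rsa
network:
  plugin: calico
authorization:
  mode: rbac
EOF

# Show how many nodes the file declares
grep -c 'address:' "$OUT_DIR/cluster.yml"
```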

13、开始安装集群,使用rancher用户

# 开始执行部署前先确保个机器上的docker全部未运行,docker ps 和docker ps -a都有删除掉,清理所有不是从阿里云下载的镜像,确保集群部署使用镜像和环境的干净,然后执行下面的部署命令,观察日志直到成功

rke up

成功部署截图:

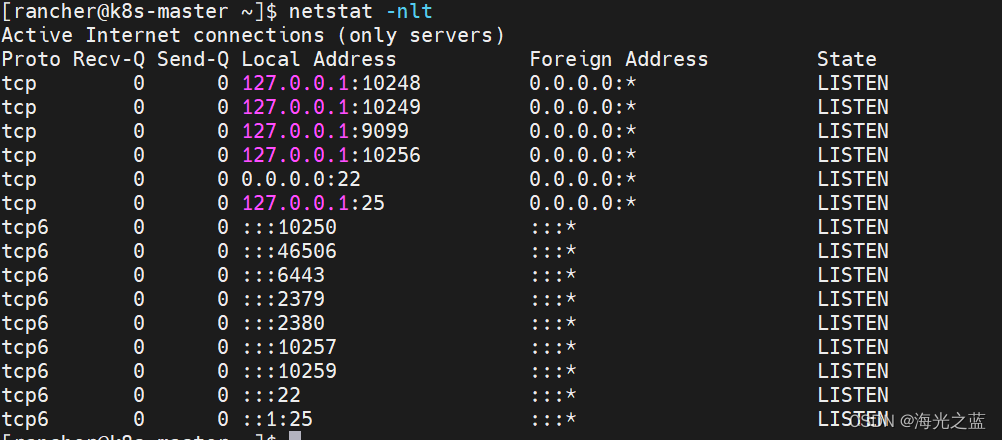

master成功后的端口监听情况

查看监听端口命令netstat,如果没有则需使用root用户安装

yum install net-tools

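Common options

-a (all) show all sockets; listening sockets are not shown by default

-t (tcp) show only TCP sockets

-u (udp) show only UDP sockets

-n show numeric addresses and ports instead of resolving names

-l list only sockets in the LISTEN state

-p show the name of the program that owns each socket

-r show routing information (the routing table)

-e show extended information such as the UID

-s show statistics per protocol

-c run netstat repeatedly at a fixed interval

Reference: "The netstat command on CentOS explained"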
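These options are usually combined, for example `netstat -tlnp` for listening TCP sockets with numeric ports and program names. The sketch below checks whether a given port appears in LISTEN state; `port_is_listening` is a hypothetical helper, the sample text is illustrative rather than captured from a real node, and 6443 is used because it is the default kube-apiserver port.

```bash
#!/usr/bin/env bash
# Succeed if the given TCP port is in LISTEN state in `netstat -tln` output
# read from stdin. port_is_listening is a hypothetical helper.
port_is_listening() {
  local port="$1"
  # Field 4 is the local address (addr:port), field 6 is the state
  awk -v p="$port" '$6 == "LISTEN" && $4 ~ (":" p "$") { found = 1 } END { exit !found }'
}

# Illustrative sample, not output captured from a real node
SAMPLE='Proto Recv-Q Send-Q Local Address           Foreign Address         State
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN
tcp6       0      0 :::6443                 :::*                    LISTEN'

if printf '%s\n' "$SAMPLE" | port_is_listening 6443; then
  echo "port 6443 is listening"
fi
# On a real node: netstat -tln | port_is_listening 6443
```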

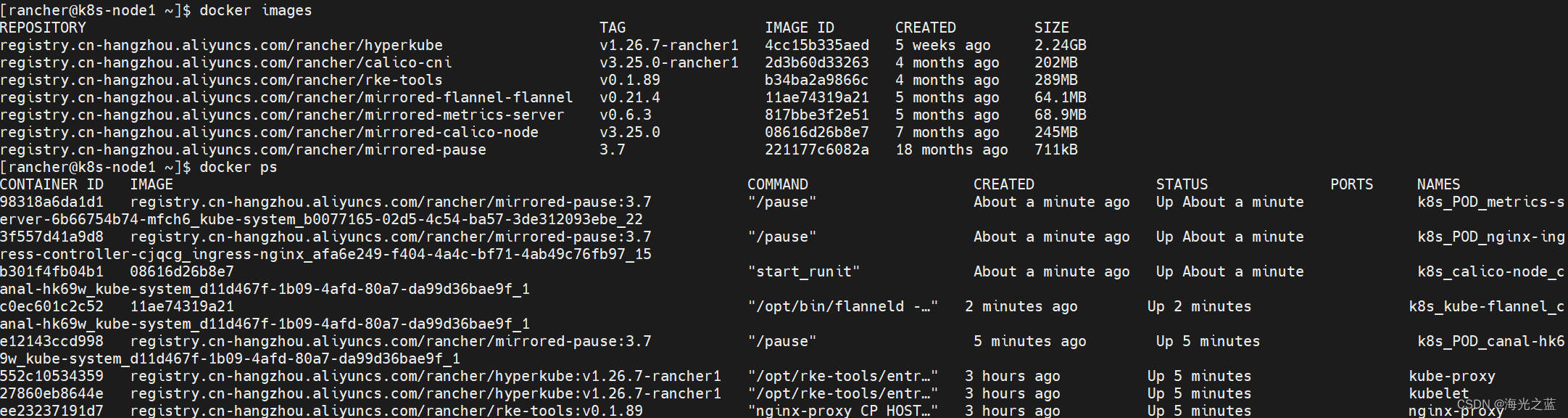

Screenshot of the images and running containers on a worker node

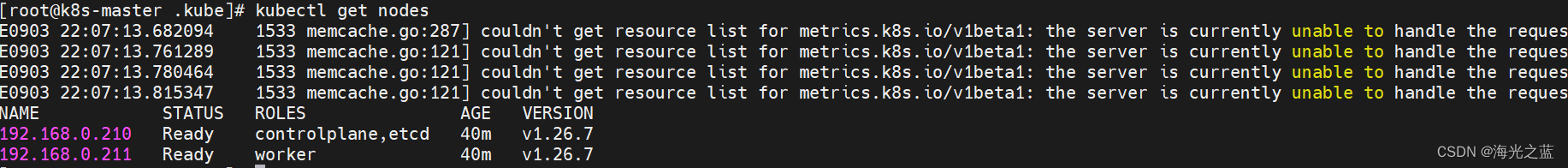

14. Install the kubectl client

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Install

yum install -y kubectl

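# kubectl's default configuration file is ~/.kube/config (here in the root user's home directory; config is a file, not a directory)

# After the RKE installation finishes, copy kube_config_cluster.yml and rename it to config

# As the root user

mkdir -p /root/.kube

cd /root/.kube

cp /home/rancher/kube_config_cluster.yml config

# Then run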

kubectl get nodes
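The kubeconfig produced by RKE holds administrator credentials for the cluster, so it is worth restricting its permissions while copying it. A minimal sketch: `install_kubeconfig` is a hypothetical helper, and the demonstration uses scratch paths and placeholder file content instead of the real files.

```bash
#!/usr/bin/env bash
# Copy a kubeconfig to <home>/.kube/config with owner-only permissions.
# install_kubeconfig is a hypothetical helper.
install_kubeconfig() {
  local src="$1" home_dir="$2"
  mkdir -p "$home_dir/.kube"
  # install copies the file and sets its mode in one step
  install -m 600 "$src" "$home_dir/.kube/config"
}

# Demonstration with scratch paths and placeholder content
SRC="$(mktemp)"
FAKE_HOME="$(mktemp -d)"
printf 'apiVersion: v1\nkind: Config\n' > "$SRC"

install_kubeconfig "$SRC" "$FAKE_HOME"
ls -l "$FAKE_HOME/.kube/config"
# For real use (as root):
#   install_kubeconfig /home/rancher/kube_config_cluster.yml /root
```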

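RKE high-availability k8s cluster installation and implementation manual

Deploying a k8s cluster with RKE on CentOS 7, and installing highly available Rancher with a Helm chart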

A tested, working cluster.yml

# If you intended to deploy Kubernetes in an air-gapped environment,

# please consult the documentation on how to configure custom RKE images.

nodes:
- address: 192.168.0.210
  port: "22"
  internal_address: ""
  role:
  - controlplane
  - etcd
  hostname_override: ""
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []
- address: 192.168.0.211
  port: "22"
  internal_address: ""
  role:
  - worker
  hostname_override: ""
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []
- address: 192.168.0.212
  port: "22"
  internal_address: ""
  role:
  - worker
  hostname_override: ""
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []

services:
  etcd:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []
    external_urls: []
    ca_cert: ""
    cert: ""
    key: ""
    path: ""
    uid: 0
    gid: 0
    snapshot: null
    retention: ""
    creation: ""
    backup_config: null
  kube-api:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []
    service_cluster_ip_range: 10.43.0.0/16
    service_node_port_range: ""
    pod_security_policy: false
    pod_security_configuration: ""
    always_pull_images: false
    secrets_encryption_config: null
    audit_log: null
    admission_configuration: null
    event_rate_limit: null
  kube-controller:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []
    cluster_cidr: 10.42.0.0/16
    service_cluster_ip_range: 10.43.0.0/16
  scheduler:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []
  kubelet:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []
    cluster_domain: cluster.local
    infra_container_image: ""
    cluster_dns_server: 10.43.0.10
    fail_swap_on: false
    generate_serving_certificate: false
  kubeproxy:
    image: ""
    extra_args: {}
    extra_args_array: {}
    extra_binds: []
    extra_env: []
    win_extra_args: {}
    win_extra_args_array: {}
    win_extra_binds: []
    win_extra_env: []

network:
  plugin: canal
  options: {}
  mtu: 0
  node_selector: {}
  update_strategy: null
  tolerations: []

authentication:
  strategy: x509
  sans: []
  webhook: null

addons: ""

addons_include: []

system_images:
  etcd: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-coreos-etcd:v3.5.6
  alpine: registry.cn-hangzhou.aliyuncs.com/rancher/rke-tools:v0.1.89
  nginx_proxy: registry.cn-hangzhou.aliyuncs.com/rancher/rke-tools:v0.1.89
  cert_downloader: registry.cn-hangzhou.aliyuncs.com/rancher/rke-tools:v0.1.89
  kubernetes_services_sidecar: registry.cn-hangzhou.aliyuncs.com/rancher/rke-tools:v0.1.89
  kubedns: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-k8s-dns-kube-dns:1.22.20
  dnsmasq: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-k8s-dns-dnsmasq-nanny:1.22.20
  kubedns_sidecar: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-k8s-dns-sidecar:1.22.20
  kubedns_autoscaler: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-cluster-proportional-autoscaler:1.8.6
  coredns: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-coredns-coredns:1.9.4
  coredns_autoscaler: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-cluster-proportional-autoscaler:1.8.6
  nodelocal: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-k8s-dns-node-cache:1.22.20
  kubernetes: registry.cn-hangzhou.aliyuncs.com/rancher/hyperkube:v1.26.7-rancher1
  flannel: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-flannel-flannel:v0.21.4
  flannel_cni: registry.cn-hangzhou.aliyuncs.com/rancher/flannel-cni:v0.3.0-rancher8
  calico_node: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-node:v3.25.0
  calico_cni: registry.cn-hangzhou.aliyuncs.com/rancher/calico-cni:v3.25.0-rancher1
  calico_controllers: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-kube-controllers:v3.25.0
  calico_ctl: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-ctl:v3.25.0
  calico_flexvol: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-pod2daemon-flexvol:v3.25.0
  canal_node: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-node:v3.25.0
  canal_cni: registry.cn-hangzhou.aliyuncs.com/rancher/calico-cni:v3.25.0-rancher1
  canal_controllers: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-kube-controllers:v3.25.0
  canal_flannel: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-flannel-flannel:v0.21.4
  canal_flexvol: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-calico-pod2daemon-flexvol:v3.25.0
  weave_node: registry.cn-hangzhou.aliyuncs.com/weaveworks/weave-kube:2.8.1
  weave_cni: registry.cn-hangzhou.aliyuncs.com/weaveworks/weave-npc:2.8.1
  pod_infra_container: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-pause:3.7
  ingress: registry.cn-hangzhou.aliyuncs.com/rancher/nginx-ingress-controller:nginx-1.7.0-rancher1
  ingress_backend: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-nginx-ingress-controller-defaultbackend:1.5-rancher1
  ingress_webhook: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-ingress-nginx-kube-webhook-certgen:v20230312-helm-chart-4.5.2-28-g66a760794
  metrics_server: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-metrics-server:v0.6.3
  windows_pod_infra_container: registry.cn-hangzhou.aliyuncs.com/rancher/mirrored-pause:3.7
  aci_cni_deploy_container: registry.cn-hangzhou.aliyuncs.com/noiro/cnideploy:5.2.7.1.81c2369
  aci_host_container: registry.cn-hangzhou.aliyuncs.com/noiro/aci-containers-host:5.2.7.1.81c2369
  aci_opflex_container: registry.cn-hangzhou.aliyuncs.com/noiro/opflex:5.2.7.1.81c2369
  aci_mcast_container: registry.cn-hangzhou.aliyuncs.com/noiro/opflex:5.2.7.1.81c2369
  aci_ovs_container: registry.cn-hangzhou.aliyuncs.com/noiro/openvswitch:5.2.7.1.81c2369
  aci_controller_container: registry.cn-hangzhou.aliyuncs.com/noiro/aci-containers-controller:5.2.7.1.81c2369
  aci_gbp_server_container: registry.cn-hangzhou.aliyuncs.com/noiro/gbp-server:5.2.7.1.81c2369
  aci_opflex_server_container: registry.cn-hangzhou.aliyuncs.com/noiro/opflex-server:5.2.7.1.81c2369

ssh_key_path: ~/.ssh/id_rsa

ssh_cert_path: ""

ssh_agent_auth: false

authorization:
  mode: rbac
  options: {}

ignore_docker_version: null

enable_cri_dockerd: null

kubernetes_version: ""

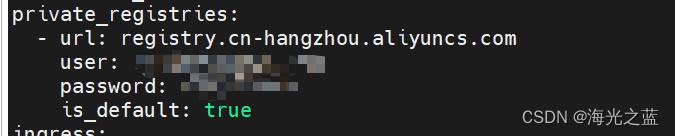

private_registries:
- url: registry.cn-hangzhou.aliyuncs.com
  user: abc
  password: abc
  is_default: true

ingress:
  provider: ""
  options: {}
  node_selector: {}
  extra_args: {}
  dns_policy: ""
  extra_envs: []
  extra_volumes: []
  extra_volume_mounts: []
  update_strategy: null
  http_port: 0
  https_port: 0
  network_mode: ""
  tolerations: []
  default_backend: null
  default_http_backend_priority_class_name: ""
  nginx_ingress_controller_priority_class_name: ""
  default_ingress_class: null

cluster_name: ""

cloud_provider:
  name: ""

prefix_path: ""

win_prefix_path: ""

addon_job_timeout: 0

bastion_host:
  address: ""
  port: ""
  user: ""
  ssh_key: ""
  ssh_key_path: ""
  ssh_cert: ""
  ssh_cert_path: ""
  ignore_proxy_env_vars: false

monitoring:
  provider: ""
  options: {}
  node_selector: {}
  update_strategy: null
  replicas: null
  tolerations: []
  metrics_server_priority_class_name: ""

restore:
  restore: false
  snapshot_name: ""

rotate_encryption_key: false

dns: null
