Introduction

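Activation functions add nonlinearity to a neural network, which is what strengthens its representational power. Classical treatments list several properties for them: bounded, non-constant, monotonically increasing, and continuously differentiable. Not every popular activation satisfies all of these; ReLU, for example, is unbounded and not differentiable at 0. The usual choices are sigmoid and tanh, but both have well-known drawbacks (they saturate, so gradients vanish for large |x|), and many people simply reach for ReLU without a second thought. So today we will study and implement a few other activation functions.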
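To make the properties listed above concrete, here is a small sketch that probes a few activations numerically on a grid. The helper name `summarize`, the grid range, and the step count are illustrative assumptions; a finite grid can only suggest boundedness or monotonicity, it cannot prove them.

```python
import torch
import torch.nn.functional as F


def summarize(fn, lo=-10.0, hi=10.0, steps=2001):
    """Probe an activation on an evenly spaced grid over [lo, hi] (hypothetical helper)."""
    x = torch.linspace(lo, hi, steps, dtype=torch.float64)
    y = fn(x)
    diffs = y[1:] - y[:-1]  # consecutive differences along the grid
    return {
        "min": y.min().item(),
        "max": y.max().item(),
        # True only if the sampled values never decrease
        "monotonic": bool((diffs >= 0).all()),
    }


activations = {
    "sigmoid": torch.sigmoid,
    "tanh": torch.tanh,
    "relu": F.relu,
    "silu": F.silu,
}

for name, fn in activations.items():
    print(name, summarize(fn))
```

On this grid, sigmoid and tanh stay inside a fixed range, ReLU keeps growing with the input, and SiLU dips below zero before rising again, so it is not monotonic.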

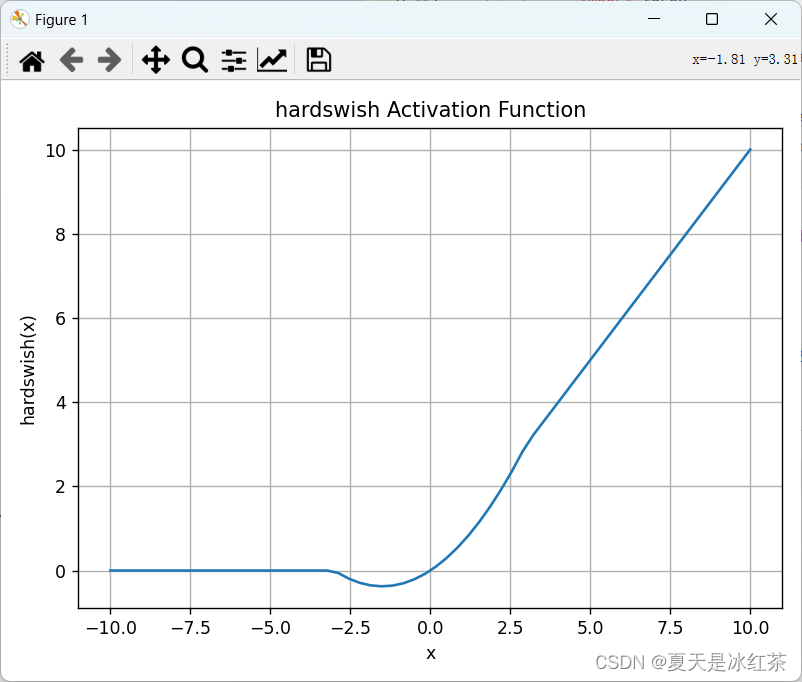

The plots for the activation functions below can all be produced by modifying this script:

    import torch
    import torch.nn.functional as F
    import matplotlib.pyplot as plt

    # Sample the input range and evaluate the activation
    x = torch.linspace(-10, 10, 60)
    y = F.silu(x)  # swap in another activation here

    plt.plot(x.numpy(), y.numpy())
    plt.title('SiLU Activation Function')
    plt.xlabel('x')
    plt.ylabel('silu(x)')
    plt.grid()
    plt.tight_layout()
    plt.show()

SiLU

    import torch
    import torch.nn as nn


    class SiLU(nn.Module):
        # SiLU: x * sigmoid(x)
        @staticmethod
        def forward(x):
            return x * torch.sigmoid(x)


    if __name__ == "__main__":
        x = torch.randn(2)

        m = nn.SiLU()
        output = m(x)
        print("Official implementation:", output)

        n = SiLU()
        output = n(x)
        print("Custom:", output)

Output (the input is random, so the values differ between runs):

    Official implementation: tensor([ 0.2838, -0.2578])
    Custom: tensor([ 0.2838, -0.2578])

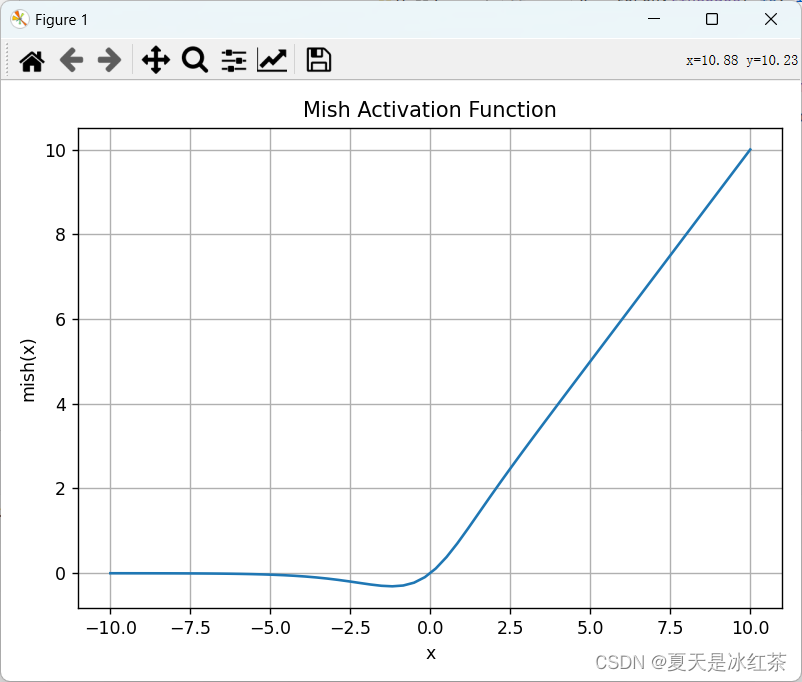
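Since SiLU is x * sigmoid(x), the product rule gives the derivative sigmoid(x) * (1 + x * (1 - sigmoid(x))). The sketch below compares that closed form with what autograd computes; the function name `silu_grad_analytic` and the grid are assumptions chosen for illustration.

```python
import torch
import torch.nn.functional as F


def silu_grad_analytic(x):
    """Closed-form derivative of x * sigmoid(x) (hypothetical helper)."""
    s = torch.sigmoid(x)
    return s * (1 + x * (1 - s))


# float64 keeps rounding error small enough for a tight comparison
x = torch.linspace(-6, 6, 25, dtype=torch.float64, requires_grad=True)

# Summing makes the output a scalar, so x.grad holds d silu(x_i) / d x_i
F.silu(x).sum().backward()

max_err = (x.grad - silu_grad_analytic(x.detach())).abs().max().item()
print(f"max |autograd - analytic| = {max_err:.2e}")
```

The derivative is negative for sufficiently negative inputs, which is another way to see that SiLU is not monotonic.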

Mish

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Mish(nn.Module):
        # Mish: x * tanh(softplus(x))
        @staticmethod
        def forward(x):
            return x * F.softplus(x).tanh()


    if __name__ == "__main__":
        x = torch.randn(2)

        m = nn.Mish()
        output = m(x)
        print("Official implementation:", output)

        n = Mish()
        output = n(x)
        print("Custom:", output)

Output (the input is random, so the values differ between runs):

    Official implementation: tensor([2.8559, 0.2204])
    Custom: tensor([2.8559, 0.2204])
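Comparing two random samples by eye is a fairly weak check. As a rough sketch, the same formula can be compared against `nn.Mish` over a whole grid with `torch.allclose`; the name `mish_manual`, the grid range, and the tolerance are assumptions picked for this example.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def mish_manual(x):
    """Mish written out as x * tanh(softplus(x)) (hypothetical helper)."""
    # softplus(x) = log(1 + exp(x)); F.softplus falls back to x itself for large inputs
    return x * torch.tanh(F.softplus(x))


x = torch.linspace(-20, 20, 401)
same = torch.allclose(mish_manual(x), nn.Mish()(x), atol=1e-6)
print("manual formula matches nn.Mish on the grid:", same)

# Tail behaviour: close to the identity on the right, close to zero on the left
print("mish(20)  =", mish_manual(torch.tensor(20.0)).item())
print("mish(-20) =", mish_manual(torch.tensor(-20.0)).item())
```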

Hard-SiLU (Hardswish)

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Hardswish(nn.Module):
        # Hard-SiLU activation https://arxiv.org/abs/1905.02244
        @staticmethod
        def forward(x):
            return x * F.hardtanh(x + 3, 0.0, 6.0) / 6.0


    if __name__ == "__main__":
        x = torch.randn(2)

        m = nn.Hardswish()
        output = m(x)
        print("Official implementation:", output)

        n = Hardswish()
        output = n(x)
        print("Custom:", output)

Output (the input is random, so the values differ between runs):

    Official implementation: tensor([-0.1857, -0.0061])
    Custom: tensor([-0.1857, -0.0061])
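The clamped expression above can also be read piecewise: 0 for x <= -3, x for x >= 3, and x * (x + 3) / 6 in between. The sketch below writes that out, checks it against `F.hardswish`, and measures how far the piecewise approximation drifts from SiLU on a grid. The name `hardswish_piecewise` and the grid range are assumptions made for illustration.

```python
import torch
import torch.nn.functional as F


def hardswish_piecewise(x):
    """Hardswish written as an explicit three-branch function (hypothetical helper)."""
    inner = x * (x + 3) / 6  # quadratic branch used for -3 < x < 3
    return torch.where(
        x <= -3,
        torch.zeros_like(x),                # flat at zero on the left
        torch.where(x >= 3, x, inner),      # identity on the right
    )


x = torch.linspace(-8, 8, 1601)

matches = torch.allclose(hardswish_piecewise(x), F.hardswish(x), atol=1e-6)
print("piecewise form matches F.hardswish:", matches)

# Largest absolute difference between Hardswish and SiLU on this grid
max_gap = (F.hardswish(x) - F.silu(x)).abs().max().item()
print(f"max |hardswish - silu| on [-8, 8] = {max_gap:.4f}")
```

The appeal of the hard variant is that it avoids evaluating an exponential, at the cost of a small deviation from SiLU near the two breakpoints.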