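Preface

This article consists mainly of source code with comments. It shows how each step, from the model output to the loss computation, is actually implemented.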

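Workflow overview

I. Selecting valid anchors

Taking a 640x640 input as an example, the model ends up with 8400 anchors. Each anchor has its own detection output (4+n, where n is the number of classes) and segmentation output (32), but not every anchor takes part in the loss computation.

An anchor becomes a valid anchor and takes part in the loss computation if it meets the following conditions:

(1) the anchor coordinates lie inside a gt_bbox;

(2) for that gt_bbox, the anchor's combined score ranks in the top 10, where the combined score fuses the IoU with the predicted class_score;

(3) if an anchor satisfies the first two conditions for several gt_bboxes, only the gt_bbox with the highest IoU is kept (this is what select_highest_overlaps in section 3.2 does).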
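The figure of 8400 can be checked from the three feature maps listed in the raw model output later in this article (80x80, 40x40 and 20x20). The sketch below assumes strides of 8, 16 and 32, which is inferred from those sizes rather than stated in the text.

```python
# Assumed strides, inferred from the 80x80 / 40x40 / 20x20 feature maps.
strides = (8, 16, 32)
imgsz = 640

# One anchor point per feature-map cell.
grid_sizes = [imgsz // s for s in strides]
num_anchors = sum(g * g for g in grid_sizes)
print(grid_sizes, num_anchors)
```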
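The three conditions can be sketched in plain Python for a handful of anchors. This is only an illustration under stated assumptions: `assign`, its arguments and the toy numbers are hypothetical, the exponents 0.5 and 6 are the alpha and beta values quoted in section 3.1, and conflicts are resolved by IoU because that is what `select_highest_overlaps` compares. The real code works on batched tensors.

```python
def assign(anchor_points, gt_boxes, cls_scores, ious, topk=10, alpha=0.5, beta=6.0):
    """Return {anchor_index: gt_index} for the anchors kept as positives.

    cls_scores[g][a] and ious[g][a] are the class score and IoU of anchor a
    with respect to gt box g (hypothetical, precomputed inputs).
    """
    candidates = {}  # anchor index -> gt indices that selected this anchor
    for g, (x1, y1, x2, y2) in enumerate(gt_boxes):
        # (1) anchor point strictly inside the gt box
        inside = [a for a, (x, y) in enumerate(anchor_points)
                  if x1 < x < x2 and y1 < y < y2]
        # (2) top-k by the combined score
        metric = {a: cls_scores[g][a] ** alpha * ious[g][a] ** beta for a in inside}
        best = sorted(inside, key=lambda a: metric[a], reverse=True)[:topk]
        for a in best:
            candidates.setdefault(a, []).append(g)
    # (3) an anchor claimed by several gt boxes keeps the one with the highest IoU
    return {a: max(gs, key=lambda g: ious[g][a]) for a, gs in candidates.items()}


anchors = [(1, 1), (2, 2), (5, 5)]
gts = [(0, 0, 3, 3), (1.5, 1.5, 6, 6)]
scores = [[0.9, 0.8, 0.1], [0.1, 0.7, 0.9]]
overlaps = [[0.8, 0.6, 0.0], [0.0, 0.7, 0.9]]
result = assign(anchors, gts, scores, overlaps, topk=2)
print(result)
```

With `topk=2`, anchor 1 is selected by both boxes and ends up with the second one, since its IoU there (0.7) is higher than with the first (0.6).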

II. Loss computation

The loss has four parts in total. Let the cross-entropy loss be denoted $f(x,y)$.

(1) $L_{\text{box}}$

$$l_i = w_i(1-\text{IoU}_i)$$

$$L_{\text{box}} = \sum_i l_i / \sum_i w_i$$

Here $w_i$ is again a score that fuses the IoU with the classification score. It is used as a weight, and dividing by $\sum_i w_i$ acts like a weighted mean to give the final loss.
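A small numeric illustration of this weighted mean, with made-up IoU values and weights for three positive anchors. The `max(..., 1.0)` guard mirrors the `target_scores_sum = max(target_scores.sum(), 1)` line in section 4.

```python
# Made-up values for three positive anchors.
ious = [0.9, 0.6, 0.3]
weights = [0.8, 0.5, 0.1]

# Per-anchor terms l_i = w_i * (1 - IoU_i).
per_anchor = [w * (1.0 - iou) for w, iou in zip(weights, ious)]

# Normalise by the weight sum, guarded so a tiny sum does not blow up the loss.
weight_sum = max(sum(weights), 1.0)
l_box = sum(per_anchor) / weight_sum
print(l_box)
```

Anchors with a low weight contribute little even when their IoU is poor, which is the point of weighting by the fused score.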

(2) $L_{\text{seg}}$

$$l_i = f(\text{mask}_i, \text{gt\_mask}_i) / \text{area}$$

$$L_{\text{seg}} = \text{mean}(l)$$

The mask is obtained the same way as in predict, and the cross-entropy loss is then computed against the label. area is the area of the corresponding gt_bbox (computed from normalized coordinates in the code of section 6).

(3) $L_{\text{cls}}$

$$L_{\text{cls}} = \text{sum}(f(\text{cls}, \text{gt\_cls})) / \sum_i w_i$$

This is a cross-entropy loss, averaged in a way similar to $L_{\text{box}}$.

(4) $L_{\text{dfl}}$

$L_{\text{dfl}}$ is also used to make the detection box converge. For this we go back to the output of the DFL module, a Tensor(b,4,8400), whose values are the distances from the anchor coordinate to the top-left and bottom-right corners of the box. The gt_bbox is converted into the same form and the loss is computed on it.

$$l = f(x, \text{floor}(y))\times(\text{ceil}(y)-y) + f(x, \text{ceil}(y))\times(y-\text{floor}(y))$$

In the formula above, $x$ corresponds to one of the 4 coordinate values of an anchor and $y$ is its gt value. A simple example makes this loss easier to understand. Take $y=3.7$:

$$l = f(x,3)\times 0.3 + f(x,4)\times 0.7$$

For each coordinate value the model outputs 16 probabilities that sum to 1. These are weighted by 0~15 and summed to give the final coordinate value. This means that when $y=3.7$, the ideal case is a probability of 0.3 for 3 and 0.7 for 4, so that $0.3\times 3+0.7\times 4=3.7$. The loss uses the two weights and two cross-entropy terms to pull the classification result towards 3 and 4 to different degrees.
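The y = 3.7 example can be checked numerically. The sketch below is plain Python with hypothetical helper names. It takes the cross entropy directly from a probability vector (the real code starts from logits) and uses floor(y) + 1 as the right bin, as `_df_loss` in section 5 does.

```python
import math


def cross_entropy(probs, target_bin):
    """Cross entropy of a probability vector against one integer bin."""
    return -math.log(probs[target_bin])


def dfl(probs, y):
    """Distribution focal loss for one coordinate with a fractional target y."""
    left = math.floor(y)
    right = left + 1
    return (cross_entropy(probs, left) * (right - y)
            + cross_entropy(probs, right) * (y - left))


# Ideal distribution for y = 3.7: probability 0.3 on bin 3 and 0.7 on bin 4.
ideal = [0.0] * 16
ideal[3], ideal[4] = 0.3, 0.7
decoded = sum(i * p for i, p in enumerate(ideal))

# The same two bins with the probabilities swapped decode to 3.3 instead.
swapped = [0.0] * 16
swapped[3], swapped[4] = 0.7, 0.3

print(decoded, dfl(ideal, 3.7), dfl(swapped, 3.7))
```

The ideal distribution decodes to 3.7 and gives a lower loss than the swapped one, which is the behaviour the two weighted cross-entropy terms are meant to produce.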

Code and details

0. Raw model output

    preds: (tuple:3)
        0 (feats): (list:3)
            0: (Tensor:(b,64+cls_n,80,80))
            1: (Tensor:(b,64+cls_n,40,40))
            2: (Tensor:(b,64+cls_n,20,20))
        1 (pred_masks): (Tensor:(b,32,8400))
        2 (proto): (Tensor:(b,32,160,160))

    cls_n=1

1. Getting the raw labels

    """
    gt_labels: (Tensor:(b,8,1))
    gt_bboxes: (Tensor:(b,8,4))
    mask_gt: (Tensor:(b,8,1))
    The 8 here is the maximum number of objects per image (max_num_obj). A uniform count makes
    the later matrix operations easier. Images with fewer objects are padded with all-zero
    coordinates, and mask_gt is the 0/1 matrix that records whether an entry is a real object.
    """
    batch_idx = batch['batch_idx'].view(-1, 1)
    targets = torch.cat((batch_idx, batch['cls'].view(-1, 1), batch['bboxes']), 1)
    targets = self.preprocess(targets.to(self.device), batch_size, scale_tensor=imgsz[[1, 0, 1, 0]])
    gt_labels, gt_bboxes = targets.split((1, 4), 2)  # cls, xyxy
    mask_gt = gt_bboxes.sum(2, keepdim=True).gt_(0)

2. Processing and converting the raw model output

    """Merge feats and pred_masks and permute their dimensions:
    pred_scores: (Tensor:(b,8400,1))
    pred_distri: (Tensor:(b,8400,64))
    pred_masks: (Tensor:(b,8400,32))
    """

    """Convert pred_distri into the box output pred_bboxes: (Tensor:(b,8400,4))"""
    pred_bboxes = self.bbox_decode(anchor_points, pred_distri)

    def bbox_decode(self, anchor_points, pred_dist):
        """Decode predicted object bounding box coordinates from anchor points and distribution."""
        if self.use_dfl:
            b, a, c = pred_dist.shape  # batch, anchors, channels
            """
            self.proj = torch.arange(m.reg_max, dtype=torch.float, device=device), i.e. the vector 0~15.
            This means the decoded values of pred_dist lie between 0 and 15.
            From the dist2bbox call below, a box can extend up to 15 cells on each side of its anchor,
            so the 20x20 output can cover an object spanning the whole image and the 40x40 output
            can cover up to 30 of its 40 cells.
            The coordinates computed here are still in the feature-map coordinate system. They have
            not yet been scaled by the stride into the 640x640 coordinate system.
            """
            pred_dist = pred_dist.view(b, a, 4, c // 4).softmax(3).matmul(self.proj.type(pred_dist.dtype))
        return dist2bbox(pred_dist, anchor_points, xywh=False)

    def dist2bbox(distance, anchor_points, xywh=True, dim=-1):
        """Transform distance(ltrb) to box(xywh or xyxy)."""
        lt, rb = distance.chunk(2, dim)
        x1y1 = anchor_points - lt
        x2y2 = anchor_points + rb
        if xywh:
            c_xy = (x1y1 + x2y2) / 2
            wh = x2y2 - x1y1
            return torch.cat((c_xy, wh), dim)  # xywh bbox
        return torch.cat((x1y1, x2y2), dim)  # xyxy bbox
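To make the decode step concrete, here is a plain-Python sketch for a single anchor. The helper names and input values are hypothetical. It mirrors the softmax, the weighted sum with 0~15 and the `dist2bbox` arithmetic, without any batching.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_distance(logits):
    """Weighted sum of the bin indices 0..len-1 with their softmax probabilities."""
    return sum(i * p for i, p in enumerate(softmax(logits)))


def decode_box(anchor_xy, logits_ltrb):
    """Turn four 16-bin logit vectors (left, top, right, bottom) into an xyxy box."""
    left, top, right, bottom = (expected_distance(side) for side in logits_ltrb)
    ax, ay = anchor_xy
    return (ax - left, ay - top, ax + right, ay + bottom)


def peaked(k, bins=16):
    """Logits that put almost all probability mass on bin k."""
    return [50.0 if i == k else 0.0 for i in range(bins)]


# Anchor at (10.5, 10.5) in feature-map units, distances close to 2, 3, 4 and 5.
box = decode_box((10.5, 10.5), [peaked(2), peaked(3), peaked(4), peaked(5)])
print(box)
```

Since the result is in feature-map units, multiplying it by the stride gives the box in input-image pixels, which is what the assigner call in section 3 does with `pred_bboxes.detach() * stride_tensor`.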

3. Getting the labels and outputs used to compute the loss

    _, target_bboxes, target_scores, fg_mask, target_gt_idx = self.assigner(
        pred_scores.detach().sigmoid(), (pred_bboxes.detach() * stride_tensor).type(gt_bboxes.dtype),
        anchor_points * stride_tensor, gt_labels, gt_bboxes, mask_gt)

    @torch.no_grad()
    def forward(self, pd_scores, pd_bboxes, anc_points, gt_labels, gt_bboxes, mask_gt):
        """
        Args:
            pd_scores (Tensor): shape(bs, num_total_anchors, num_classes), sigmoid(pred_scores)
            pd_bboxes (Tensor): shape(bs, num_total_anchors, 4), coordinates scaled to the 640x640 input
            anc_points (Tensor): shape(num_total_anchors, 2), coordinates scaled to the 640x640 input
            gt_labels (Tensor): shape(bs, n_max_boxes, 1)
            gt_bboxes (Tensor): shape(bs, n_max_boxes, 4)
            mask_gt (Tensor): shape(bs, n_max_boxes, 1)
        Returns:
            target_labels (Tensor): shape(bs, num_total_anchors)
            target_bboxes (Tensor): shape(bs, num_total_anchors, 4)
            target_scores (Tensor): shape(bs, num_total_anchors, num_classes)
            fg_mask (Tensor): shape(bs, num_total_anchors)
            target_gt_idx (Tensor): shape(bs, num_total_anchors)
        """

        """
        mask_pos: (Tensor:(b,8,8400)), 0/1 matrix, 1 for the top-10 scoring anchors of each object
        align_metric: (Tensor:(b,8,8400)), metric fusing iou and classification score
        overlaps: (Tensor:(b,8,8400)), iou
        """
        mask_pos, align_metric, overlaps = self.get_pos_mask(pd_scores, pd_bboxes, gt_labels, gt_bboxes, anc_points,
                                                             mask_gt)

        """
        mask_pos: (Tensor:(b,8,8400)), result after removing duplicates
        fg_mask: (Tensor:(b,8400)), fg_mask=mask_pos.sum(-2), whether the anchor is matched to a gt
        target_gt_idx: (Tensor:(b,8400)), target_gt_idx=mask_pos.argmax(-2), index of the object matched by the anchor
        """
        target_gt_idx, fg_mask, mask_pos = select_highest_overlaps(mask_pos, overlaps, self.n_max_boxes)

        # Assigned target
        """
        target_labels: (Tensor:(b,8400))
        target_bboxes: (Tensor:(b,8400,4))
        target_scores: (Tensor:(b,8400,cls_n)), one-hot label
        """
        target_labels, target_bboxes, target_scores = self.get_targets(gt_labels, gt_bboxes, target_gt_idx, fg_mask)

        # Normalize
        align_metric *= mask_pos
        pos_align_metrics = align_metric.amax(axis=-1, keepdim=True)  # b, max_num_obj
        pos_overlaps = (overlaps * mask_pos).amax(axis=-1, keepdim=True)  # b, max_num_obj
        norm_align_metric = (align_metric * pos_overlaps / (pos_align_metrics + self.eps)).amax(-2).unsqueeze(-1)  # b, a, 1
        target_scores = target_scores * norm_align_metric

        return target_labels, target_bboxes, target_scores, fg_mask.bool(), target_gt_idx
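The `# Normalize` step at the end of `forward` is easier to follow for a single gt box. The sketch below uses plain Python lists and made-up numbers. `normalize_align_metric` and `EPS` are hypothetical names, with `EPS` standing in for `self.eps`.

```python
EPS = 1e-9  # assumed small constant, standing in for self.eps


def normalize_align_metric(align_metric, overlaps, mask_pos):
    """Rescale one gt's metrics so its best positive anchor gets that gt's best IoU."""
    metric = [m * p for m, p in zip(align_metric, mask_pos)]        # align_metric *= mask_pos
    pos_align = max(metric)                                         # best metric among positives
    pos_overlap = max(o * p for o, p in zip(overlaps, mask_pos))    # best IoU among positives
    return [m * pos_overlap / (pos_align + EPS) for m in metric]


# Three anchors for one gt box; the third is not a positive (mask 0).
norm = normalize_align_metric([0.2, 0.1, 0.05], [0.8, 0.6, 0.9], [1, 1, 0])
print(norm)
```

The best positive anchor ends up with a weight equal to the best IoU among the positives (0.8 here), and the others are scaled proportionally. In the real code a further `amax` over the gt dimension turns this into one value per anchor, which multiplies the one-hot `target_scores`.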

3.1 get_pos_mask

    def get_pos_mask(self, pd_scores, pd_bboxes, gt_labels, gt_bboxes, anc_points, mask_gt):
        """
        mask_in_gts: (Tensor:(b,8,8400))
        0/1 matrix, 1 if the anchor coordinate lies inside a given gt_bbox.
        (mask_in_gts*mask_gt) is referred to here as the candidate anchors.
        """
        mask_in_gts = select_candidates_in_gts(anc_points, gt_bboxes)

        """
        align_metric: (Tensor:(b,8,8400)), metric fusing iou and classification score
        overlaps: (Tensor:(b,8,8400)), iou
        The metrics are computed only for candidate anchors; all other positions are 0.
        """
        align_metric, overlaps = self.get_box_metrics(pd_scores, pd_bboxes, gt_labels, gt_bboxes, mask_in_gts * mask_gt)

        """
        mask_pos: (b,8,8400)
        0/1 matrix, 1 for the top-10 scoring candidate anchors
        """
        mask_topk = self.select_topk_candidates(align_metric, topk_mask=mask_gt.expand(-1, -1, self.topk).bool())
        mask_pos = mask_topk * mask_in_gts * mask_gt

        return mask_pos, align_metric, overlaps

(1) Picking the anchors that lie inside a gt_bbox

    def select_candidates_in_gts(xy_centers, gt_bboxes, eps=1e-9):
        n_anchors = xy_centers.shape[0]
        bs, n_boxes, _ = gt_bboxes.shape
        lt, rb = gt_bboxes.view(-1, 1, 4).chunk(2, 2)  # left-top, right-bottom
        bbox_deltas = torch.cat((xy_centers[None] - lt, rb - xy_centers[None]), dim=2).view(bs, n_boxes, n_anchors, -1)
        return bbox_deltas.amin(3).gt_(eps)

(2) Computing the box metrics

    def get_box_metrics(self, pd_scores, pd_bboxes, gt_labels, gt_bboxes, mask_gt):
        """Compute alignment metric given predicted and ground truth bounding boxes."""
        na = pd_bboxes.shape[-2]
        mask_gt = mask_gt.bool()  # b, max_num_obj, h*w
        overlaps = torch.zeros([self.bs, self.n_max_boxes, na], dtype=pd_bboxes.dtype, device=pd_bboxes.device)
        bbox_scores = torch.zeros([self.bs, self.n_max_boxes, na], dtype=pd_scores.dtype, device=pd_scores.device)

        ind = torch.zeros([2, self.bs, self.n_max_boxes], dtype=torch.long)  # 2, b, max_num_obj
        ind[0] = torch.arange(end=self.bs).view(-1, 1).expand(-1, self.n_max_boxes)  # b, max_num_obj
        ind[1] = gt_labels.squeeze(-1)  # b, max_num_obj
        # Get the scores of each grid for each gt cls
        """
        bbox_scores: (Tensor:(b,8,8400))
        Records the cls_score of the correct class for each candidate anchor.
        """
        bbox_scores[mask_gt] = pd_scores[ind[0], :, ind[1]][mask_gt]  # b, max_num_obj, h*w

        # (b, max_num_obj, 1, 4), (b, 1, h*w, 4)
        pd_boxes = pd_bboxes.unsqueeze(1).expand(-1, self.n_max_boxes, -1, -1)[mask_gt]
        gt_boxes = gt_bboxes.unsqueeze(2).expand(-1, -1, na, -1)[mask_gt]
        overlaps[mask_gt] = bbox_iou(gt_boxes, pd_boxes, xywh=False, CIoU=True).squeeze(-1).clamp_(0)

        """alpha=0.5, beta=6"""
        align_metric = bbox_scores.pow(self.alpha) * overlaps.pow(self.beta)
        return align_metric, overlaps
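With alpha=0.5 and beta=6 the metric leans heavily on the IoU term. A quick comparison with made-up numbers shows the effect. The function name is hypothetical; the exponents are the values quoted in the code above.

```python
def align_metric(cls_score, iou, alpha=0.5, beta=6.0):
    """Combined score: cls_score^alpha * iou^beta."""
    return cls_score ** alpha * iou ** beta


# Anchor A: confident class score but mediocre box.
a = align_metric(cls_score=0.9, iou=0.5)
# Anchor B: weak class score but better box.
b = align_metric(cls_score=0.3, iou=0.7)
print(a, b)
```

Anchor B ranks higher even though its class score is three times lower, because the sixth power punishes a low IoU much harder than the square root punishes a low score.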

(3) Selecting the Top-10 scoring anchors

    def select_topk_candidates(self, metrics, largest=True, topk_mask=None):
        """
        Select the top-k candidates based on the given metrics.

        Args:
            metrics (Tensor): A tensor of shape (b, max_num_obj, h*w), where b is the batch size,
                              max_num_obj is the maximum number of objects, and h*w represents the
                              total number of anchor points.
            largest (bool): If True, select the largest values; otherwise, select the smallest values.
            topk_mask (Tensor): An optional boolean tensor of shape (b, max_num_obj, topk), where
                                topk is the number of top candidates to consider. If not provided,
                                the top-k values are automatically computed based on the given metrics.

        Returns:
            (Tensor): A tensor of shape (b, max_num_obj, h*w) containing the selected top-k candidates.
        """

        # (b, max_num_obj, topk)
        topk_metrics, topk_idxs = torch.topk(metrics, self.topk, dim=-1, largest=largest)
        if topk_mask is None:
            topk_mask = (topk_metrics.max(-1, keepdim=True)[0] > self.eps).expand_as(topk_idxs)
        # (b, max_num_obj, topk)
        topk_idxs.masked_fill_(~topk_mask, 0)

        # (b, max_num_obj, topk, h*w) -> (b, max_num_obj, h*w)
        """count_tensor: (b,8,8400)"""
        count_tensor = torch.zeros(metrics.shape, dtype=torch.int8, device=topk_idxs.device)
        """ones: (b,8,1)"""
        ones = torch.ones_like(topk_idxs[:, :, :1], dtype=torch.int8, device=topk_idxs.device)
        for k in range(self.topk):
            # Expand topk_idxs for each value of k and add 1 at the specified positions
            count_tensor.scatter_add_(-1, topk_idxs[:, :, k:k + 1], ones)
        # filter invalid bboxes
        """The invalid boxes removed here are the fake (padded) objects marked by mask_gt."""
        count_tensor.masked_fill_(count_tensor > 1, 0)

        return count_tensor.to(metrics.dtype)
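The counting trick that removes padded objects is easy to miss in tensor form. Below is a plain-Python sketch for one gt row, with a hypothetical function name and toy values, assuming topk is at least 2 (the article uses 10).

```python
def topk_mask_row(metrics, topk, is_real_gt):
    """Return a 0/1 list marking the top-k anchors of one gt row."""
    order = sorted(range(len(metrics)), key=lambda a: metrics[a], reverse=True)[:topk]
    if not is_real_gt:
        # Mirrors topk_idxs.masked_fill_(~topk_mask, 0): every index becomes 0.
        order = [0] * topk
    count = [0] * len(metrics)
    for a in order:
        count[a] += 1  # mirrors scatter_add_ with ones
    # Mirrors count_tensor.masked_fill_(count_tensor > 1, 0).
    return [0 if c > 1 else c for c in count]


real = topk_mask_row([0.1, 0.9, 0.3, 0.7], topk=2, is_real_gt=True)
padded = topk_mask_row([0.0, 0.0, 0.0, 0.0], topk=2, is_real_gt=False)
print(real, padded)
```

For a real object each selected anchor is counted exactly once. For a padded object all k indices collapse onto anchor 0, its count exceeds 1, and the whole row is zeroed.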

3.2 select_highest_overlaps

When an anchor matches several objects, the object with the highest IoU (overlaps) keeps its 1 and the others are set to zero.

    def select_highest_overlaps(mask_pos, overlaps, n_max_boxes):
        """
        If an anchor box is assigned to multiple gts, the one with the highest IoU will be selected.

        Args:
            mask_pos (Tensor): shape(b, n_max_boxes, h*w)
            overlaps (Tensor): shape(b, n_max_boxes, h*w)
        Returns:
            target_gt_idx (Tensor): shape(b, h*w)
            fg_mask (Tensor): shape(b, h*w)
            mask_pos (Tensor): shape(b, n_max_boxes, h*w)
        """
        # (b, n_max_boxes, h*w) -> (b, h*w)
        fg_mask = mask_pos.sum(-2)
        if fg_mask.max() > 1:  # one anchor is assigned to multiple gt_bboxes
            mask_multi_gts = (fg_mask.unsqueeze(1) > 1).expand(-1, n_max_boxes, -1)  # (b, n_max_boxes, h*w)
            max_overlaps_idx = overlaps.argmax(1)  # (b, h*w)

            is_max_overlaps = torch.zeros(mask_pos.shape, dtype=mask_pos.dtype, device=mask_pos.device)
            is_max_overlaps.scatter_(1, max_overlaps_idx.unsqueeze(1), 1)

            mask_pos = torch.where(mask_multi_gts, is_max_overlaps, mask_pos).float()  # (b, n_max_boxes, h*w)
            fg_mask = mask_pos.sum(-2)
        # Find each grid serve which gt(index)
        target_gt_idx = mask_pos.argmax(-2)  # (b, h*w)
        return target_gt_idx, fg_mask, mask_pos

3.3 get_targets

    def get_targets(self, gt_labels, gt_bboxes, target_gt_idx, fg_mask):
        """
        Compute target labels, target bounding boxes, and target scores for the positive anchor points.

        Args:
            gt_labels (Tensor): Ground truth labels of shape (b, max_num_obj, 1), where b is the
                                batch size and max_num_obj is the maximum number of objects.
            gt_bboxes (Tensor): Ground truth bounding boxes of shape (b, max_num_obj, 4).
            target_gt_idx (Tensor): Indices of the assigned ground truth objects for positive
                                    anchor points, with shape (b, h*w), where h*w is the total
                                    number of anchor points.
            fg_mask (Tensor): A boolean tensor of shape (b, h*w) indicating the positive
                              (foreground) anchor points.

        Returns:
            (Tuple[Tensor, Tensor, Tensor]): A tuple containing the following tensors:
                - target_labels (Tensor): Shape (b, h*w), containing the target labels for
                                          positive anchor points.
                - target_bboxes (Tensor): Shape (b, h*w, 4), containing the target bounding boxes
                                          for positive anchor points.
                - target_scores (Tensor): Shape (b, h*w, num_classes), containing the target scores
                                          for positive anchor points, where num_classes is the number
                                          of object classes.
        """

        # Assigned target labels, (b, 1)
        batch_ind = torch.arange(end=self.bs, dtype=torch.int64, device=gt_labels.device)[..., None]
        target_gt_idx = target_gt_idx + batch_ind * self.n_max_boxes  # (b, h*w)
        target_labels = gt_labels.long().flatten()[target_gt_idx]  # (b, h*w)

        # Assigned target boxes, (b, max_num_obj, 4) -> (b, h*w)
        target_bboxes = gt_bboxes.view(-1, 4)[target_gt_idx]

        # Assigned target scores
        target_labels.clamp_(0)

        # 10x faster than F.one_hot()
        target_scores = torch.zeros((target_labels.shape[0], target_labels.shape[1], self.num_classes),
                                    dtype=torch.int64,
                                    device=target_labels.device)  # (b, h*w, 80)
        target_scores.scatter_(2, target_labels.unsqueeze(-1), 1)

        fg_scores_mask = fg_mask[:, :, None].repeat(1, 1, self.num_classes)  # (b, h*w, 80)
        target_scores = torch.where(fg_scores_mask > 0, target_scores, 0)

        return target_labels, target_bboxes, target_scores

4. class loss

    target_scores_sum = max(target_scores.sum(), 1)

    # cls loss
    loss[2] = self.bce(pred_scores, target_scores.to(dtype)).sum() / target_scores_sum  # BCE

    self.bce = nn.BCEWithLogitsLoss(reduction='none')
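What `BCEWithLogitsLoss(reduction='none')` computes per element can be written out in plain Python. This is a sketch with hypothetical names and made-up logits. The targets are soft scores rather than hard 0/1 labels, because `target_scores` has been multiplied by the normalized alignment metric in section 3.

```python
import math


def bce_with_logits(logit, target):
    """Binary cross entropy on a raw logit, in the numerically stable form."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def bce_naive(logit, target):
    """Same quantity via an explicit sigmoid, for comparison only."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


# Made-up logits and soft targets for three anchors of a single-class model.
logits = [2.0, -3.0, 0.5]
targets = [0.8, 0.0, 0.3]

target_scores_sum = max(sum(targets), 1.0)
loss_cls = sum(bce_with_logits(x, t) for x, t in zip(logits, targets)) / target_scores_sum
print(loss_cls)
```

Background anchors (target 0) still contribute to the numerator, while the denominator only counts the soft scores of the positives.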

5. bbox loss

    # target_bboxes must stay at image scale here because the mask loss in section 6 reuses it,
    # so it is divided by the stride only inside the call (an extra in-place
    # `target_bboxes /= stride_tensor` before this call would scale it twice).
    loss[0], loss[3] = self.bbox_loss(pred_distri, pred_bboxes, anchor_points, target_bboxes / stride_tensor,
                                      target_scores, target_scores_sum, fg_mask)

    def forward(self, pred_dist, pred_bboxes, anchor_points, target_bboxes, target_scores, target_scores_sum, fg_mask):
        """IoU loss."""
        weight = target_scores.sum(-1)[fg_mask].unsqueeze(-1)
        iou = bbox_iou(pred_bboxes[fg_mask], target_bboxes[fg_mask], xywh=False, CIoU=True)
        loss_iou = ((1.0 - iou) * weight).sum() / target_scores_sum

        # DFL loss
        if self.use_dfl:
            target_ltrb = bbox2dist(anchor_points, target_bboxes, self.reg_max)
            loss_dfl = self._df_loss(pred_dist[fg_mask].view(-1, self.reg_max + 1), target_ltrb[fg_mask]) * weight
            loss_dfl = loss_dfl.sum() / target_scores_sum
        else:
            loss_dfl = torch.tensor(0.0).to(pred_dist.device)

        return loss_iou, loss_dfl

    @staticmethod
    def _df_loss(pred_dist, target):
        """Return sum of left and right DFL losses."""
        # Distribution Focal Loss (DFL) proposed in Generalized Focal Loss https://ieeexplore.ieee.org/document/9792391
        tl = target.long()  # target left
        tr = tl + 1  # target right
        wl = tr - target  # weight left
        wr = 1 - wl  # weight right
        return (F.cross_entropy(pred_dist, tl.view(-1), reduction='none').view(tl.shape) * wl +
                F.cross_entropy(pred_dist, tr.view(-1), reduction='none').view(tl.shape) * wr).mean(-1, keepdim=True)

6. mask loss

    """Downsample to 160x160"""
    masks = batch['masks'].to(self.device).float()
    if tuple(masks.shape[-2:]) != (mask_h, mask_w):  # downsample
        masks = F.interpolate(masks[None], (mask_h, mask_w), mode='nearest')[0]

    for i in range(batch_size):
        if fg_mask[i].sum():
            mask_idx = target_gt_idx[i][fg_mask[i]]
            if self.overlap:
                """Get the gt_mask of each valid anchor (n,160,160)"""
                gt_mask = torch.where(masks[[i]] == (mask_idx + 1).view(-1, 1, 1), 1.0, 0.0)
            else:
                gt_mask = masks[batch_idx.view(-1) == i][mask_idx]
            xyxyn = target_bboxes[i][fg_mask[i]] / imgsz[[1, 0, 1, 0]]
            marea = xyxy2xywh(xyxyn)[:, 2:].prod(1)
            mxyxy = xyxyn * torch.tensor([mask_w, mask_h, mask_w, mask_h], device=self.device)
            loss[1] += self.single_mask_loss(gt_mask, pred_masks[i][fg_mask[i]], proto[i], mxyxy, marea)  # seg
        else:
            loss[1] += (proto * 0).sum() + (pred_masks * 0).sum()  # inf sums may lead to nan loss

    def single_mask_loss(self, gt_mask, pred, proto, xyxy, area):
        """Mask loss for one image."""
        pred_mask = (pred @ proto.view(self.nm, -1)).view(-1, *proto.shape[1:])  # (n,32) @ (32,160*160) -> (n,160,160)
        """loss: (n,160,160)"""
        loss = F.binary_cross_entropy_with_logits(pred_mask, gt_mask, reduction='none')
        """The loss of each anchor is averaged, divided by the area of its box, then averaged again."""
        return (crop_mask(loss, xyxy).mean(dim=(1, 2)) / area).mean()

7. Combining the losses

    """
    box=7.5, cls=0.5, dfl=1.5
    """
    loss[0] *= self.hyp.box  # box gain
    loss[1] *= self.hyp.box / batch_size  # seg gain
    loss[2] *= self.hyp.cls  # cls gain
    loss[3] *= self.hyp.dfl  # dfl gain

    return loss.sum() * batch_size, loss.detach()

8. IoU details

    def bbox_iou(box1, box2, xywh=True, GIoU=False, DIoU=False, CIoU=False, eps=1e-7):
        """
        Calculate Intersection over Union (IoU) of box1(1, 4) to box2(n, 4).

        Args:
            box1 (torch.Tensor): A tensor representing a single bounding box with shape (1, 4).
            box2 (torch.Tensor): A tensor representing n bounding boxes with shape (n, 4).
            xywh (bool, optional): If True, input boxes are in (x, y, w, h) format. If False, input boxes are in
                                   (x1, y1, x2, y2) format. Defaults to True.
            GIoU (bool, optional): If True, calculate Generalized IoU. Defaults to False.
            DIoU (bool, optional): If True, calculate Distance IoU. Defaults to False.
            CIoU (bool, optional): If True, calculate Complete IoU. Defaults to False.
            eps (float, optional): A small value to avoid division by zero. Defaults to 1e-7.

        Returns:
            (torch.Tensor): IoU, GIoU, DIoU, or CIoU values depending on the specified flags.
        """

        # Get the coordinates of bounding boxes
        if xywh:  # transform from xywh to xyxy
            (x1, y1, w1, h1), (x2, y2, w2, h2) = box1.chunk(4, -1), box2.chunk(4, -1)
            w1_, h1_, w2_, h2_ = w1 / 2, h1 / 2, w2 / 2, h2 / 2
            b1_x1, b1_x2, b1_y1, b1_y2 = x1 - w1_, x1 + w1_, y1 - h1_, y1 + h1_
            b2_x1, b2_x2, b2_y1, b2_y2 = x2 - w2_, x2 + w2_, y2 - h2_, y2 + h2_
        else:  # x1, y1, x2, y2 = box1
            b1_x1, b1_y1, b1_x2, b1_y2 = box1.chunk(4, -1)
            b2_x1, b2_y1, b2_x2, b2_y2 = box2.chunk(4, -1)
            w1, h1 = b1_x2 - b1_x1, b1_y2 - b1_y1 + eps
            w2, h2 = b2_x2 - b2_x1, b2_y2 - b2_y1 + eps

        # Intersection area
        inter = (b1_x2.minimum(b2_x2) - b1_x1.maximum(b2_x1)).clamp_(0) * \
                (b1_y2.minimum(b2_y2) - b1_y1.maximum(b2_y1)).clamp_(0)

        # Union Area
        union = w1 * h1 + w2 * h2 - inter + eps

        # IoU
        iou = inter / union
        if CIoU or DIoU or GIoU:
            cw = b1_x2.maximum(b2_x2) - b1_x1.minimum(b2_x1)  # convex (smallest enclosing box) width
            ch = b1_y2.maximum(b2_y2) - b1_y1.minimum(b2_y1)  # convex height
            if CIoU or DIoU:  # Distance or Complete IoU https://arxiv.org/abs/1911.08287v1
                c2 = cw ** 2 + ch ** 2 + eps  # convex diagonal squared
                rho2 = ((b2_x1 + b2_x2 - b1_x1 - b1_x2) ** 2 + (b2_y1 + b2_y2 - b1_y1 - b1_y2) ** 2) / 4  # center dist ** 2
                if CIoU:  # https://github.com/Zzh-tju/DIoU-SSD-pytorch/blob/master/utils/box/box_utils.py#L47
                    v = (4 / math.pi ** 2) * (torch.atan(w2 / h2) - torch.atan(w1 / h1)).pow(2)
                    with torch.no_grad():
                        alpha = v / (v - iou + (1 + eps))
                    return iou - (rho2 / c2 + v * alpha)  # CIoU
                return iou - rho2 / c2  # DIoU
            c_area = cw * ch + eps  # convex area
            return iou - (c_area - union) / c_area  # GIoU https://arxiv.org/pdf/1902.09630.pdf
        return iou  # IoU

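Although the docstring says this function computes the IoU of one box box1(1,4) against several boxes box2(n,4), it can in fact also compute the IoU between several boxes box1(n,4) and several boxes box2(n,4) pair by pair, as long as n is the same.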

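(1) IoU

$$\text{IoU}=\frac{S_I}{S_1+S_2-S_I}$$

Here $S_1$ and $S_2$ are the areas of the two boxes and $S_I$ is the area of their intersection.

(2) GIoU

$$\text{GIoU}=\text{IoU}-\frac{S_C-S_U}{S_C}$$

where $S_U=S_1+S_2-S_I$ is the union area and $S_C$ is the area of the smallest box enclosing both boxes, matching `(c_area - union) / c_area` in the code.

When the two boxes do not intersect, GIoU can additionally measure how far apart they are: the closer they are, the larger the GIoU.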

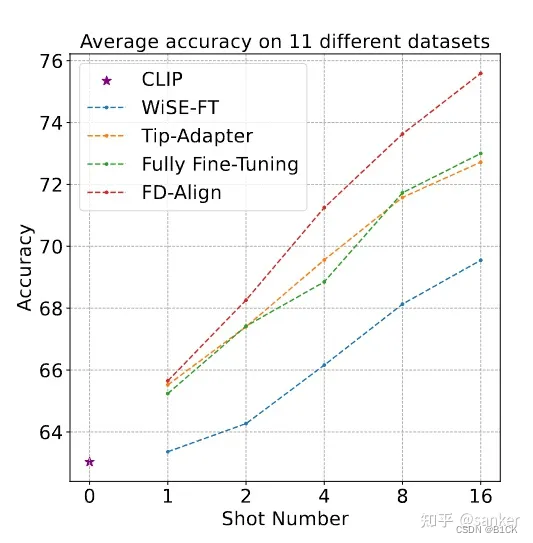

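(3) DIoU

$$\text{DIoU}=\text{IoU}-\frac{d^2}{c^2}$$

where $d$ is the distance between the two box centers (`rho2` in the code is $d^2$) and $c$ is the diagonal of the smallest enclosing box (`c2` is $c^2$).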

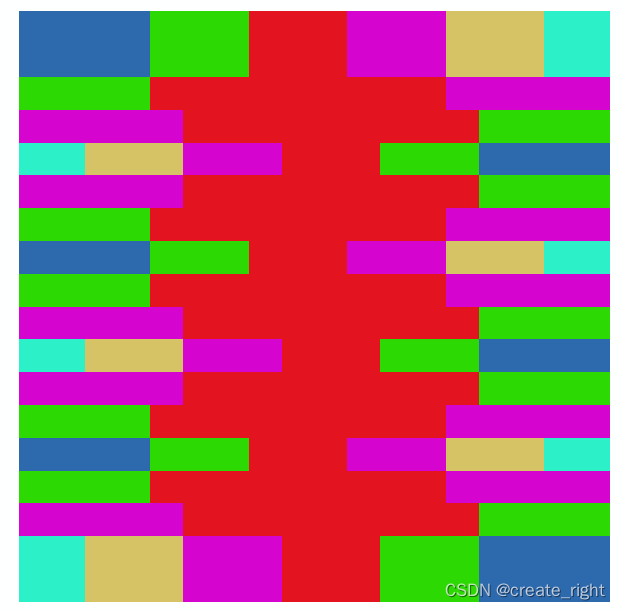

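In the cases shown in the figure above, GIoU degrades to IoU, but DIoU can still tell them apart. The green box is the target box and the red box is the predicted box.

The first row is GIoU and the second row is DIoU. The black box is the anchor, the green box is the target box, and the blue and red boxes are predicted boxes.

GIoU usually enlarges the predicted box so that it overlaps the target box, whereas DIoU directly minimizes the distance between the centers and converges faster.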

(4) CIoU

$$\text{CIoU}=\text{DIoU}-\frac{v^2}{1-\text{IoU}+v}$$

$$v=\left(\frac{\arctan(w_2/h_2)}{\pi/2}-\frac{\arctan(w_1/h_1)}{\pi/2}\right)^2$$

When two candidate boxes with the same area but different aspect ratios lie inside box2 with the same center distance, DIoU cannot tell them apart, while CIoU can further optimize the aspect ratio of the predicted box.
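The three claims made in this section (GIoU separates disjoint boxes by distance, DIoU separates boxes nested at different positions, CIoU separates nested boxes with different aspect ratios) can be checked with a scalar re-implementation. This is a plain-Python sketch for one pair of xyxy boxes that follows the arithmetic of `bbox_iou` above. The function name and the test boxes are made up.

```python
import math


def iou_family(box1, box2, eps=1e-7):
    """Return IoU, GIoU, DIoU and CIoU for two boxes in (x1, y1, x2, y2) format."""
    a_x1, a_y1, a_x2, a_y2 = box1
    b_x1, b_y1, b_x2, b_y2 = box2
    w1, h1 = a_x2 - a_x1, a_y2 - a_y1 + eps
    w2, h2 = b_x2 - b_x1, b_y2 - b_y1 + eps

    inter = (max(0.0, min(a_x2, b_x2) - max(a_x1, b_x1))
             * max(0.0, min(a_y2, b_y2) - max(a_y1, b_y1)))
    union = w1 * h1 + w2 * h2 - inter + eps
    iou = inter / union

    cw = max(a_x2, b_x2) - min(a_x1, b_x1)  # enclosing box width
    ch = max(a_y2, b_y2) - min(a_y1, b_y1)  # enclosing box height
    c_area = cw * ch + eps
    giou = iou - (c_area - union) / c_area

    c2 = cw ** 2 + ch ** 2 + eps            # enclosing diagonal squared
    rho2 = ((b_x1 + b_x2 - a_x1 - a_x2) ** 2 + (b_y1 + b_y2 - a_y1 - a_y2) ** 2) / 4
    diou = iou - rho2 / c2

    v = (4 / math.pi ** 2) * (math.atan(w2 / h2) - math.atan(w1 / h1)) ** 2
    alpha = v / (v - iou + (1 + eps))
    ciou = diou - v * alpha
    return {"iou": iou, "giou": giou, "diou": diou, "ciou": ciou}


# Disjoint boxes: IoU is 0 for both, GIoU still prefers the nearer one.
near = iou_family((0, 0, 1, 1), (1.5, 0, 2.5, 1))
far = iou_family((0, 0, 1, 1), (3, 0, 4, 1))

# Same-size box nested at the center vs. in a corner: GIoU ties, DIoU does not.
centered = iou_family((0, 0, 4, 4), (1, 1, 3, 3))
corner = iou_family((0, 0, 4, 4), (0, 0, 2, 2))

# Same area and center, different aspect ratio: DIoU ties, CIoU does not.
square = iou_family((0, 0, 4, 4), (1, 1, 3, 3))
wide = iou_family((0, 0, 4, 4), (0, 1.5, 4, 2.5))

print(near["giou"], far["giou"], centered["diou"], corner["diou"], square["ciou"], wide["ciou"])
```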