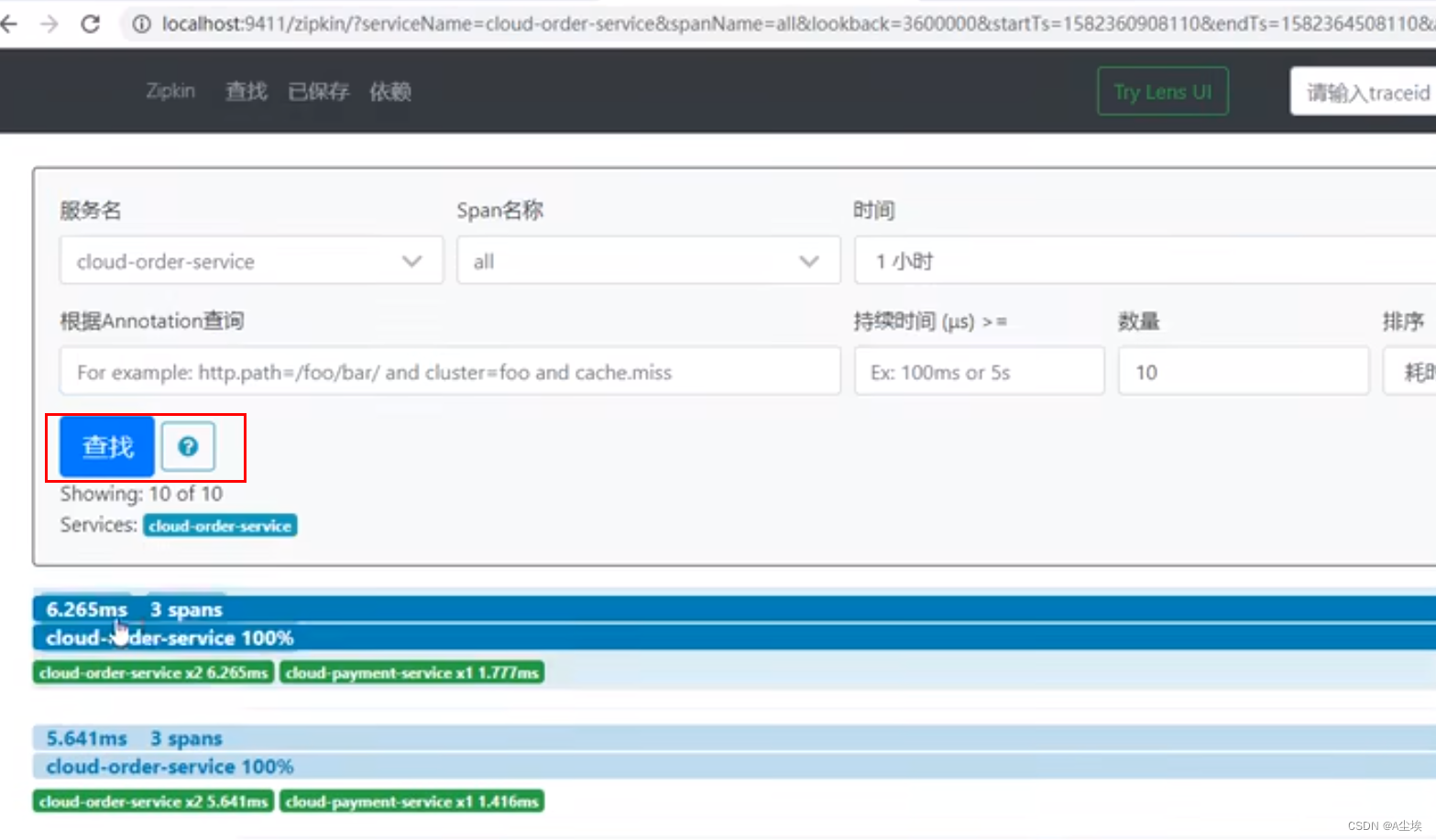

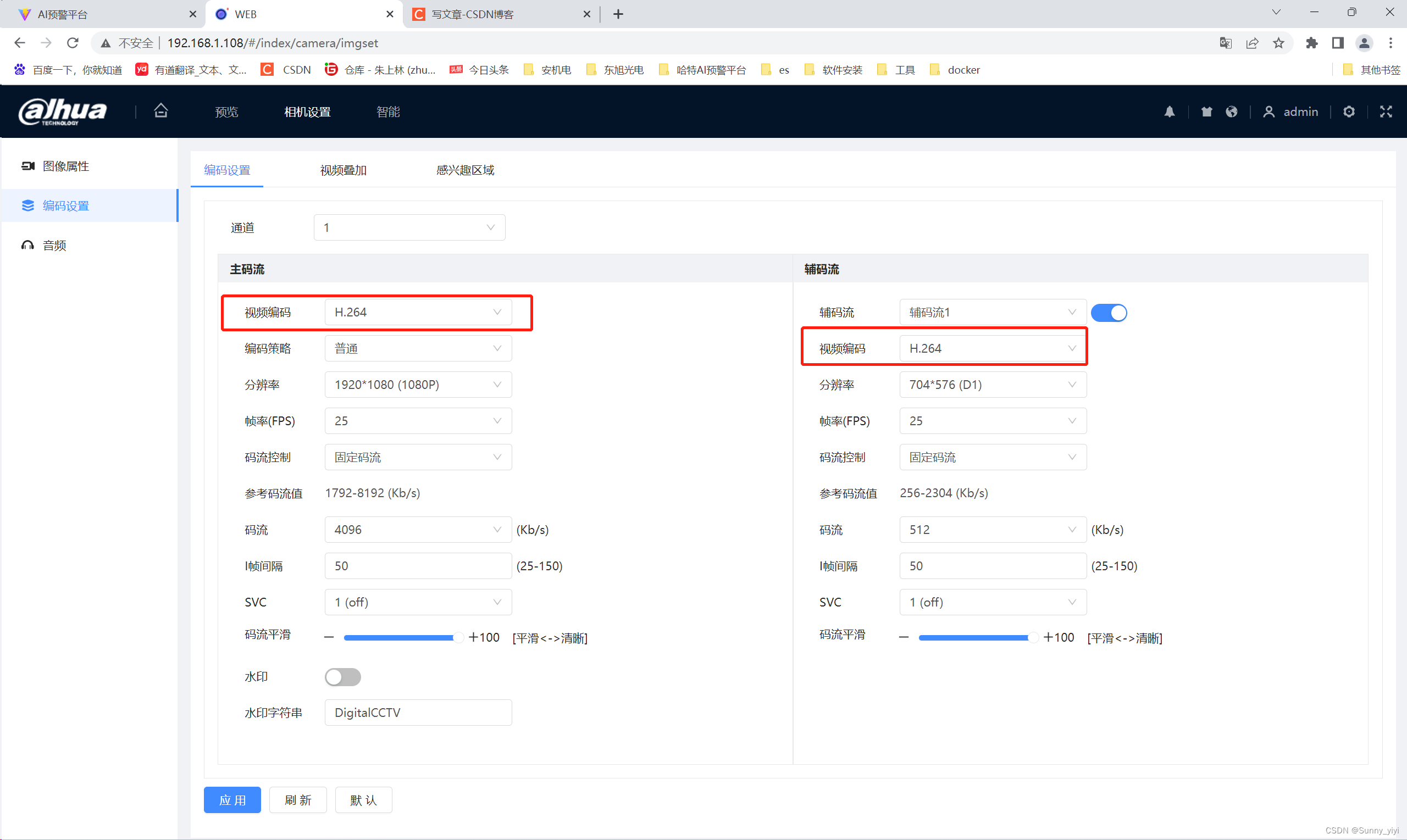

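Here is the result first. The main video stream in the center comes from the Dahua camera (H.264 video encoding); the Hikvision camera uses H.265 video encoding.

Front-end code for attaching the video stream: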

    <!-- Video stream -->
    <div id="col2">
      <div class="cell" style="flex: 7; background: none">
        <div class="cell-box" style="position: relative">
          <video autoplay muted id="video" class="video" />
          <div class="cell div-faces">
            <div class="cell-box">
              <!-- Face recognition -->
              <div class="faces-wrapper">
                <div v-for="i in 5" :key="i" class="face-wrapper">
                  <div class="face-arrow"></div>
                  <div
                    class="face-image"
                    :style="{
                      background: faceImages[i - 1]
                        ? `url(data:image/jpeg;base64,${faceImages[i - 1]}) 0 0 / 100% 100% no-repeat`
                        : ''
                    }"
                  ></div>
                </div>
              </div>
            </div>
          </div>
        </div>
      </div>
    </div>

    api.post('screen2/init').then((attach) => {
      const { streamerIp, streamerPort, cameraIp, cameraPort, cameraAdmin, cameraPsw } = attach
      webRtcServer = new WebRtcStreamer('video', `${location.protocol}//${streamerIp}:${streamerPort}`)
      webRtcServer.connect(`rtsp://${cameraAdmin}:${cameraPsw}@${cameraIp}:${cameraPort}`)
    })
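
The RTSP URL passed to `connect` embeds the camera credentials directly, so a password containing `@` or `:` would produce a malformed URL. As a minimal sketch, assuming the back end wanted to hand the front end a ready-made URL, the credentials could be percent-encoded first. The class `RtspUrls`, its method names, and port 554 are hypothetical and not part of the original project.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper: builds an rtsp:// URL with percent-encoded credentials.
final class RtspUrls {
    private RtspUrls() {}

    // Percent-encode every byte that is not an RFC 3986 "unreserved" character.
    static String encodeUserInfo(String raw) {
        StringBuilder out = new StringBuilder();
        for (byte b : raw.getBytes(StandardCharsets.UTF_8)) {
            int c = b & 0xff;
            boolean unreserved = (c >= 'a' && c <= 'z') || (c >= 'A' && c <= 'Z')
                    || (c >= '0' && c <= '9')
                    || c == '-' || c == '.' || c == '_' || c == '~';
            if (unreserved) {
                out.append((char) c);
            } else {
                out.append('%').append(String.format("%02X", c));
            }
        }
        return out.toString();
    }

    // Same shape as the URL assembled in the front-end snippet: rtsp://user:password@host:port
    static String build(String user, String password, String host, int port) {
        return "rtsp://" + encodeUserInfo(user) + ":" + encodeUserInfo(password)
                + "@" + host + ":" + port;
    }

    public static void main(String[] args) {
        // prints rtsp://admin:p%40ss%3Aword@192.168.1.108:554
        System.out.println(build("admin", "p@ss:word", "192.168.1.108", 554));
    }
}
```

A password such as `admin123456` passes through unchanged, so the helper only matters when the credentials contain reserved characters.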

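On the back end, the deployment needs webrtc-streamer.exe running. It takes the camera's RTSP video stream and serves it over WebRTC, which is what lets the web page play the stream.

How are the camera snapshots and the alert-data endpoint shown below the main video stream implemented?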

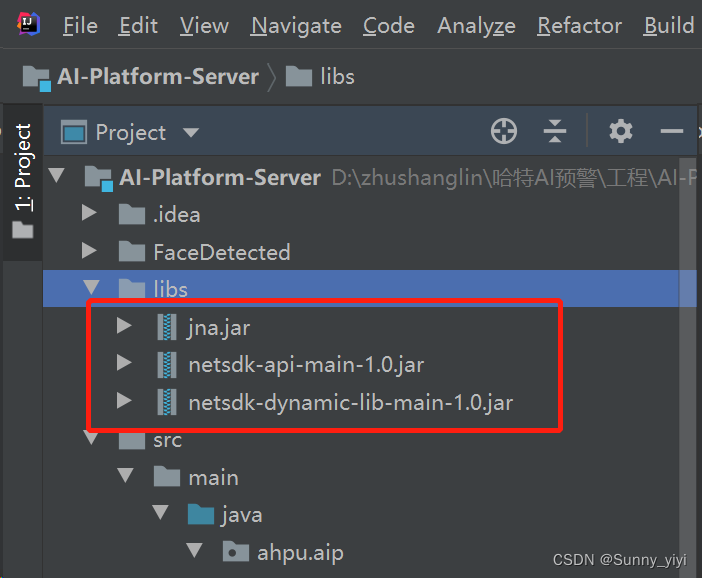

1. Load the Dahua camera SDK into the project (SDK download).

Add the dependencies to the Maven pom.xml so that the project depends on the jars above:

    <!-- External dependencies -->
    <dependency>
        <!-- groupId and artifactId are not known here; any values will do -->
        <groupId>com.dahua.netsdk</groupId>
        <artifactId>netsdk-api-main</artifactId>
        <!-- Dependency scope: must be system -->
        <scope>system</scope>
        <version>1.0-SNAPSHOT</version>
        <!-- Location of the jar -->
        <systemPath>${project.basedir}/libs/netsdk-api-main-1.0.jar</systemPath>
    </dependency>
    <dependency>
        <!-- groupId and artifactId are not known here; any values will do -->
        <groupId>com.dahua.netsdk</groupId>
        <artifactId>netsdk-dynamic</artifactId>
        <!-- Dependency scope: must be system -->
        <scope>system</scope>
        <version>1.0-SNAPSHOT</version>
        <!-- Location of the jar -->
        <systemPath>${project.basedir}/libs/netsdk-dynamic-lib-main-1.0.jar</systemPath>
    </dependency>
    <dependency>
        <!-- groupId and artifactId are not known here; any values will do -->
        <groupId>com.dahua.netsdk</groupId>
        <artifactId>netsdk-jna</artifactId>
        <!-- Dependency scope: must be system -->
        <scope>system</scope>
        <version>1.0-SNAPSHOT</version>
        <!-- Location of the jar -->
        <systemPath>${project.basedir}/libs/jna.jar</systemPath>
    </dependency>

Then write a class, InitDahua, that initializes the Dahua SDK, logs in to the camera, and subscribes to its events:

    package ahpu.aip.controller.dahua;

    import com.netsdk.lib.NetSDKLib;
    import com.netsdk.lib.ToolKits;
    import com.sun.jna.Pointer;
    import com.sun.jna.ptr.IntByReference;
    import org.springframework.beans.factory.DisposableBean;
    import org.springframework.boot.ApplicationArguments;
    import org.springframework.boot.ApplicationRunner;
    import org.springframework.stereotype.Component;

    @Component
    public class InitDahua implements ApplicationRunner {

        @Override
        public void run(ApplicationArguments args) throws Exception {
            // NetSDK library initialization
            boolean bInit = false;
            NetSDKLib netsdkApi = NetSDKLib.NETSDK_INSTANCE;
            // Smart-event subscription handle
            NetSDKLib.LLong attachHandle = new NetSDKLib.LLong(0);

            // Device-disconnect callback: registered through CLIENT_Init;
            // the SDK calls it when a device goes offline
            class DisConnect implements NetSDKLib.fDisConnect {
                public void invoke(NetSDKLib.LLong m_hLoginHandle, String pchDVRIP, int nDVRPort, Pointer dwUser) {
                    System.out.printf("Device[%s] Port[%d] DisConnect!\n", pchDVRIP, nDVRPort);
                }
            }

            // Network-restored callback, called after a successful reconnect.
            // It is registered through CLIENT_SetAutoReconnect; the SDK calls it
            // when a disconnected device reconnects. It is defined here but not registered.
            class HaveReConnect implements NetSDKLib.fHaveReConnect {
                public void invoke(NetSDKLib.LLong m_hLoginHandle, String pchDVRIP, int nDVRPort, Pointer dwUser) {
                    System.out.printf("ReConnect Device[%s] Port[%d]\n", pchDVRIP, nDVRPort);
                }
            }

            // Login parameters
            String m_strIp = "192.168.1.108";
            int m_nPort = 37777;
            String m_strUser = "admin";
            String m_strPassword = "admin123456";

            // Device information, filled in by CLIENT_LoginEx2
            NetSDKLib.NET_DEVICEINFO_Ex m_stDeviceInfo = new NetSDKLib.NET_DEVICEINFO_Ex();
            NetSDKLib.LLong m_hLoginHandle = new NetSDKLib.LLong(0);  // login handle
            NetSDKLib.LLong m_hAttachHandle = new NetSDKLib.LLong(0); // smart-event subscription handle

            // Initialize
            bInit = netsdkApi.CLIENT_Init(new DisConnect(), null);
            if (!bInit) {
                System.out.println("Initialize SDK failed");
                return; // nothing else can work without the SDK
            } else {
                System.out.println("Initialize SDK Success");
            }

            // Log in
            int nSpecCap = NetSDKLib.EM_LOGIN_SPAC_CAP_TYPE.EM_LOGIN_SPEC_CAP_TCP; // = 0
            IntByReference nError = new IntByReference(0);
            m_hLoginHandle = netsdkApi.CLIENT_LoginEx2(m_strIp, m_nPort, m_strUser, m_strPassword,
                    nSpecCap, null, m_stDeviceInfo, nError);
            if (m_hLoginHandle.longValue() == 0) {
                // The original format string had no placeholder for the error code
                System.err.printf("Login Device[%s] Port[%d] Failed. Error: %s\n",
                        m_strIp, m_nPort, ToolKits.getErrorCode());
                return; // cannot subscribe without a login handle
            } else {
                System.out.println("Login Success [ " + m_strIp + " ]");
            }

            // Subscribe
            int bNeedPicture = 1; // whether pictures are needed
            m_hAttachHandle = netsdkApi.CLIENT_RealLoadPictureEx(m_hLoginHandle, 0, NetSDKLib.EVENT_IVS_ALL,
                    bNeedPicture, new AnalyzerDataCB(), null, null);
            if (m_hAttachHandle.longValue() == 0) {
                System.err.println("CLIENT_RealLoadPictureEx Failed, Error:" + ToolKits.getErrorCode());
            } else {
                System.out.println("Subscribed successfully");
            }
        }
    }

The callback class. The actual recognition results are obtained inside the callback:

    package ahpu.aip.controller.dahua;

    import ahpu.aip.AiPlatformServerApplication;
    import ahpu.aip.util.RedisUtils;
    import ahpu.aip.util.StringUtils;
    import cn.hutool.core.codec.Base64;
    import cn.hutool.core.collection.CollUtil;
    import cn.hutool.core.util.ObjUtil;
    import cn.hutool.core.util.StrUtil;
    import com.alibaba.fastjson2.JSON;
    import com.alibaba.fastjson2.JSONArray;
    import com.netsdk.lib.NetSDKLib;
    import com.netsdk.lib.ToolKits;
    import com.sun.jna.Pointer;

    import javax.imageio.ImageIO;
    import java.awt.image.BufferedImage;
    import java.io.ByteArrayInputStream;
    import java.io.File;
    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.Collections;
    import java.util.HashMap;
    import java.util.List;

    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.stereotype.Component;
    import org.springframework.util.CollectionUtils;

    @Component
    public class AnalyzerDataCB implements NetSDKLib.fAnalyzerDataCallBack {

        private static final Logger log = LoggerFactory.getLogger(AnalyzerDataCB.class);

        public static HashMap<String, Object> temMap;

        private int bGlobalScenePic;                          // whether a panoramic picture exists (BOOL: 0 or 1)
        private NetSDKLib.NET_PIC_INFO stuGlobalScenePicInfo; // panoramic picture info
        private NetSDKLib.NET_PIC_INFO stPicInfo;             // face picture
        private NetSDKLib.NET_FACE_DATA stuFaceData;          // face data
        private int nCandidateNumEx;                          // number of candidates matched for the current face
        private NetSDKLib.CANDIDATE_INFOEX[] stuCandidatesEx; // extended info on the matched candidates

        // Panoramic picture, face picture, comparison picture
        private BufferedImage globalBufferedImage = null;
        private BufferedImage personBufferedImage = null;
        private BufferedImage candidateBufferedImage = null;

        String[] faceSexStr = {"Unknown", "Male", "Female"};

        // Cache of comparison pictures, used when several pictures are displayed
        private ArrayList<BufferedImage> arrayListBuffer = new ArrayList<BufferedImage>();

        @Override
        public int invoke(NetSDKLib.LLong lAnalyzerHandle, int dwAlarmType,
                          Pointer pAlarmInfo, Pointer pBuffer, int dwBufSize,
                          Pointer dwUser, int nSequence, Pointer reserved) {
            // Read the event details
            getObjectInfo(dwAlarmType, pAlarmInfo);

            /*if(dwAlarmType == NetSDKLib.EVENT_IVS_FACERECOGNITION) { // target recognition
                // Save the pictures
                savePicture(pBuffer, dwBufSize, bGlobalScenePic, stuGlobalScenePicInfo, stPicInfo, nCandidateNumEx, stuCandidatesEx);
                // When refreshing the UI, post the target recognition event for handling
                EventQueue eventQueue = Toolkit.getDefaultToolkit().getSystemEventQueue();
                if (eventQueue != null) {
                    eventQueue.postEvent(new FaceRecognitionEvent(this,
                            globalBufferedImage,
                            personBufferedImage,
                            stuFaceData,
                            arrayListBuffer,
                            nCandidateNumEx,
                            stuCandidatesEx));
                }
            } else*/ if (dwAlarmType == NetSDKLib.EVENT_IVS_FACEDETECT) { // face detection
                // Save the picture
                savePicture(pBuffer, dwBufSize, stPicInfo);
            }
            return 0;
        }

        /**
         * Reads the event details.
         * @param dwAlarmType event type
         * @param pAlarmInfo  pointer to the event info
         */
        public void getObjectInfo(int dwAlarmType, Pointer pAlarmInfo) {
            if (pAlarmInfo == null) {
                return;
            }
            switch (dwAlarmType) {
                case NetSDKLib.EVENT_IVS_FACERECOGNITION: ///< target recognition event
                {
                    NetSDKLib.DEV_EVENT_FACERECOGNITION_INFO msg = new NetSDKLib.DEV_EVENT_FACERECOGNITION_INFO();
                    ToolKits.GetPointerData(pAlarmInfo, msg);
                    bGlobalScenePic = msg.bGlobalScenePic;
                    stuGlobalScenePicInfo = msg.stuGlobalScenePicInfo;
                    stuFaceData = msg.stuFaceData;
                    stPicInfo = msg.stuObject.stPicInfo;
                    nCandidateNumEx = msg.nRetCandidatesExNum;
                    stuCandidatesEx = msg.stuCandidatesEx;
                    break;
                }
                case NetSDKLib.EVENT_IVS_FACEDETECT: ///< face detection
                {
                    NetSDKLib.DEV_EVENT_FACEDETECT_INFO msg = new NetSDKLib.DEV_EVENT_FACEDETECT_INFO();
                    ToolKits.GetPointerData(pAlarmInfo, msg);
                    stPicInfo = msg.stuObject.stPicInfo; // the detected face
                    // System.out.println("sex:" + faceSexStr[msg.emSex]);
                    log.info("Mask state (0 = unknown, 1 = not recognized, 2 = no mask, 3 = wearing a mask): " + msg.emMask);
                    log.info("Time: " + msg.UTC);
                    RedisUtils.set("mask", msg.emMask == 3 ? "Wearing mask" : "No mask");
                    RedisUtils.set("time", msg.UTC + "");
                    break;
                }
                default:
                    break;
            }
        }

        /**
         * Saves the pictures of a target recognition event.
         * @param pBuffer   snapshot picture data
         * @param dwBufSize snapshot picture size
         */
        /* public void savePicture(Pointer pBuffer, int dwBufSize,
                                   int bGlobalScenePic, NetSDKLib.NET_PIC_INFO stuGlobalScenePicInfo,
                                   NetSDKLib.NET_PIC_INFO stPicInfo,
                                   int nCandidateNum, NetSDKLib.CANDIDATE_INFOEX[] stuCandidatesEx) {
            File path = new File("./FaceRegonition/");
            if (!path.exists()) {
                path.mkdir();
            }
            if (pBuffer == null || dwBufSize <= 0) {
                return;
            }
            /// Save the panoramic picture ///
            if(bGlobalScenePic == 1 && stuGlobalScenePicInfo != null) {
                String strGlobalPicPathName = path + "\\" + System.currentTimeMillis() + "Global.jpg";
                byte[] bufferGlobal = pBuffer.getByteArray(stuGlobalScenePicInfo.dwOffSet, stuGlobalScenePicInfo.dwFileLenth);
                ByteArrayInputStream byteArrInputGlobal = new ByteArrayInputStream(bufferGlobal);
                try {
                    globalBufferedImage = ImageIO.read(byteArrInputGlobal);
                    if(globalBufferedImage == null) {
                        return;
                    }
                    ImageIO.write(globalBufferedImage, "jpg", new File(strGlobalPicPathName));
                } catch (IOException e2) {
                    e2.printStackTrace();
                }
            }
            /// Save the face picture ///
            if(stPicInfo != null) {
                String strPersonPicPathName = path + "\\" + System.currentTimeMillis() + "Person.jpg";
                byte[] bufferPerson = pBuffer.getByteArray(stPicInfo.dwOffSet, stPicInfo.dwFileLenth);
                ByteArrayInputStream byteArrInputPerson = new ByteArrayInputStream(bufferPerson);
                try {
                    personBufferedImage = ImageIO.read(byteArrInputPerson);
                    if(personBufferedImage == null) {
                        return;
                    }
                    ImageIO.write(personBufferedImage, "jpg", new File(strPersonPicPathName));
                } catch (IOException e2) {
                    e2.printStackTrace();
                }
            }
            /// Save the comparison pictures ///
            arrayListBuffer.clear();
            if(nCandidateNum > 0 && stuCandidatesEx != null) {
                for(int i = 0; i < nCandidateNum; i++) {
                    String strCandidatePicPathName = path + "\\" + System.currentTimeMillis() + "Candidate.jpg";
                    // Several comparison pictures
                    for(int j = 0; j < stuCandidatesEx[i].stPersonInfo.wFacePicNum; j++) {
                        byte[] bufferCandidate = pBuffer.getByteArray(stuCandidatesEx[i].stPersonInfo.szFacePicInfo[j].dwOffSet, stuCandidatesEx[i].stPersonInfo.szFacePicInfo[j].dwFileLenth);
                        ByteArrayInputStream byteArrInputCandidate = new ByteArrayInputStream(bufferCandidate);
                        try {
                            candidateBufferedImage = ImageIO.read(byteArrInputCandidate);
                            if(candidateBufferedImage == null) {
                                return;
                            }
                            ImageIO.write(candidateBufferedImage, "jpg", new File(strCandidatePicPathName));
                        } catch (IOException e2) {
                            e2.printStackTrace();
                        }
                        arrayListBuffer.add(candidateBufferedImage);
                    }
                }
            }
        }*/

        /**
         * Saves the picture of a face detection event.
         * @param pBuffer   snapshot picture data
         * @param dwBufSize snapshot picture size
         */
        public void savePicture(Pointer pBuffer, int dwBufSize, NetSDKLib.NET_PIC_INFO stPicInfo) {
            File path = new File("./FaceDetected/");
            if (!path.exists()) {
                path.mkdir();
            }
            if (pBuffer == null || dwBufSize <= 0) {
                return;
            }

            /// Save the panoramic picture ///
            /* String strGlobalPicPathName = path + "\\" + System.currentTimeMillis() + "Global.jpg";
            byte[] bufferGlobal = pBuffer.getByteArray(0, dwBufSize);
            ByteArrayInputStream byteArrInputGlobal = new ByteArrayInputStream(bufferGlobal);
            try {
                globalBufferedImage = ImageIO.read(byteArrInputGlobal);
                if(globalBufferedImage == null) {
                    return;
                }
                ImageIO.write(globalBufferedImage, "jpg", new File(strGlobalPicPathName));
            } catch (IOException e2) {
                e2.printStackTrace();
            }*/

            /// Save the face picture ///
            if (stPicInfo != null) {
                // new File(parent, child) avoids the hard-coded Windows "\\" separator
                File personFile = new File(path, System.currentTimeMillis() + "Person.jpg");
                byte[] bufferPerson = pBuffer.getByteArray(stPicInfo.dwOffSet, stPicInfo.dwFileLenth);
                ByteArrayInputStream byteArrInputPerson = new ByteArrayInputStream(bufferPerson);
                try {
                    personBufferedImage = ImageIO.read(byteArrInputPerson);
                    if (personBufferedImage == null) {
                        return;
                    }
                    ImageIO.write(personBufferedImage, "jpg", personFile);

                    // Store the picture, Base64-encoded, in Redis
                    String base64 = Base64.encode(personFile);
                    log.info("Base64 picture: data:image/jpeg;base64," + base64);
                    RedisUtils.set("img", "data:image/jpeg;base64," + base64);

                    String listStr = (String) RedisUtils.get("dahuaList");
                    List<HashMap> list = JSONArray.parseArray(listStr, HashMap.class);

                    HashMap<String, String> tmpResult = new HashMap<String, String>();
                    tmpResult.put("img", (String) RedisUtils.get("img"));
                    tmpResult.put("time", (String) RedisUtils.get("time"));
                    tmpResult.put("mask", (String) RedisUtils.get("mask"));

                    if (CollectionUtils.isEmpty(list)) {
                        list = new ArrayList<>();
                    }
                    list.add(tmpResult);

                    // Keep only the five most recent captures
                    if (list.size() > 5) {
                        RedisUtils.set("dahuaList", JSON.toJSONString(list.subList(list.size() - 5, list.size())));
                    } else {
                        RedisUtils.set("dahuaList", JSON.toJSONString(list));
                    }
                } catch (IOException e2) {
                    e2.printStackTrace();
                }
            }
        }
    }

The face recognition event class:

    package ahpu.aip.controller.dahua;

    import com.netsdk.lib.NetSDKLib;

    import java.awt.*;
    import java.awt.image.BufferedImage;
    import java.nio.charset.StandardCharsets;
    import java.util.ArrayList;

    public class FaceRecognitionEvent extends AWTEvent {

        private static final long serialVersionUID = 1L;
        public static final int EVENT_ID = AWTEvent.RESERVED_ID_MAX + 1;

        private BufferedImage globalImage = null;
        private BufferedImage personImage = null;
        private NetSDKLib.NET_FACE_DATA stuFaceData;
        private ArrayList<BufferedImage> arrayList = null;
        private int nCandidateNum;
        // Per candidate: face-library id, library name, person name, similarity
        private ArrayList<String[]> candidateList;

        public FaceRecognitionEvent(Object target,
                                    BufferedImage globalImage,
                                    BufferedImage personImage,
                                    NetSDKLib.NET_FACE_DATA stuFaceData,
                                    ArrayList<BufferedImage> arrayList,
                                    int nCandidateNum,
                                    NetSDKLib.CANDIDATE_INFOEX[] stuCandidatesEx) {
            super(target, EVENT_ID);
            this.globalImage = globalImage;
            this.personImage = personImage;
            this.stuFaceData = stuFaceData;
            this.arrayList = arrayList;
            this.nCandidateNum = nCandidateNum;
            this.candidateList = new ArrayList<String[]>();

            for (int i = 0; i < nCandidateNum; i++) {
                // A fresh array per candidate: the original reused one static array,
                // so every list entry ended up pointing at the last candidate's values
                String[] candidate = new String[4];
                candidate[0] = new String(stuCandidatesEx[i].stPersonInfo.szGroupID, StandardCharsets.UTF_8).trim();
                candidate[1] = new String(stuCandidatesEx[i].stPersonInfo.szGroupName, StandardCharsets.UTF_8).trim();
                candidate[2] = new String(stuCandidatesEx[i].stPersonInfo.szPersonName, StandardCharsets.UTF_8).trim();
                candidate[3] = String.valueOf(0xff & stuCandidatesEx[i].bySimilarity);
                this.candidateList.add(candidate);
            }
        }
    }

The controller class that exposes the results:

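    import ahpu.aip.util.RedisUtils;
    import com.alibaba.fastjson2.JSONArray;
    import io.swagger.annotations.ApiOperation;
    import org.springframework.validation.annotation.Validated;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RestController;

    import java.util.HashMap;
    import java.util.List;

    // R is the project's own response wrapper; its import is not shown in the original
    @RestController
    @Validated
    @RequestMapping("dahua/")
    public class DahuaController {

        @ApiOperation(value = "Dahua faces", tags = "Dahua faces")
        @GetMapping("getFaceList")
        public R face() {
            // The callback keeps the latest captures in Redis under "dahuaList"
            String dahuaList = (String) RedisUtils.get("dahuaList");
            List<HashMap> list = JSONArray.parseArray(dahuaList, HashMap.class);
            return R.succ().attach(list);
        }
    }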
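
The callback above appends each capture to the `dahuaList` entry in Redis and trims it to the five most recent items, and this endpoint simply returns that list. The trimming logic on its own can be sketched in plain Java, without Redis or the SDK, roughly as follows. `CaptureHistory` and `maskLabel` are hypothetical names used only for illustration. Unlike the original callback, which labels every state other than 3 as not wearing a mask, this sketch reports states 0 and 1 as "Unknown"; that is a deliberate deviation rather than the author's behavior.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, in-memory stand-in for the "keep the latest five captures" logic.
final class CaptureHistory {
    private final int capacity;
    private final List<Map<String, String>> entries = new ArrayList<>();

    CaptureHistory(int capacity) {
        if (capacity <= 0) {
            throw new IllegalArgumentException("capacity must be positive");
        }
        this.capacity = capacity;
    }

    // Mask states as logged by the callback: 0 unknown, 1 not recognized, 2 no mask, 3 wearing a mask.
    static String maskLabel(int emMask) {
        switch (emMask) {
            case 3:  return "Wearing mask";
            case 2:  return "No mask";
            default: return "Unknown";
        }
    }

    // Appends one capture and drops the oldest entries beyond the capacity.
    // synchronized because SDK callbacks may arrive on a different thread than readers.
    synchronized void add(String img, String time, int emMask) {
        Map<String, String> entry = new LinkedHashMap<>();
        entry.put("img", img);
        entry.put("time", time);
        entry.put("mask", maskLabel(emMask));
        entries.add(entry);
        while (entries.size() > capacity) {
            entries.remove(0);
        }
    }

    // Returns a copy so callers cannot modify the internal list.
    synchronized List<Map<String, String>> snapshot() {
        return new ArrayList<>(entries);
    }

    public static void main(String[] args) {
        CaptureHistory history = new CaptureHistory(5);
        for (int i = 0; i < 7; i++) {
            history.add("img" + i, "t" + i, 3);
        }
        // Only the five newest captures remain, oldest first
        System.out.println(history.snapshot());
    }
}
```

In the original code the same read, append, trim, and write-back sequence runs against Redis on every callback, so two callbacks arriving close together could overwrite each other's update; the `synchronized` methods here show one way to serialize that sequence within a single process.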