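2 Paddle3D: training the CenterPoint model on LiDAR point clouds (with a link to the data in KITTI format)

2.0 Dataset: converting Baidu's open-source DAIR-V2X roadside data to KITTI format

2.0.1 Converting the pcd files in DAIR-V2X-I\velodyne to bin format

Reference source: converting LiDAR point cloud data from .pcd to .bin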

    def pcd2bin():
        import os
        import numpy as np
        import open3d as o3d
        from tqdm import tqdm

        pcdPath = r'E:\DAIR-V2X-I\velodyne'
        binPath = r'E:\DAIR-V2X-I\kitti\training\velodyne'
        files = os.listdir(pcdPath)
        files = [f for f in files if f[-4:] == '.pcd']
        for ic in tqdm(range(len(files)), desc='progress '):
            f = files[ic]
            pcdname = os.path.join(pcdPath, f)
            binname = os.path.join(binPath, f[:-4] + '.bin')
            # Read the PCD file
            # pcd = o3d.io.read_point_cloud("./data/002140.ply")
            pcd = o3d.io.read_point_cloud(pcdname)
            points = np.asarray(pcd.points)
            # print(type(points))
            # print(points.shape)
            # Append an all-zero column (placeholder for the 4th, intensity, column)
            point0 = np.zeros((points.shape[0], 1))
            points = np.column_stack((points, point0))
            # print(points.shape)
            # View the point cloud
            # o3d.visualization.draw_geometries([pcd])
            # Save the PCD data as BIN with .tofile.
            # In theory o3d.io.write_point_cloud could also do this, but when run
            # it raised no error and did not save a file either.
            # Cast to float32 first: np.asarray(pcd.points) is float64, while the
            # readers below load the file with dtype=np.float32.
            points.astype(np.float32).tofile(binname)
            # o3d.io.write_point_cloud(os.path.join(binPath, f[:-4] + '.bin'), pcd)
            # if ic == 1:
            #     break

Visualizing a bin file

    def visBinData():
        """
        View point cloud bin files as an interactive visualization.
        :return:
        """
        import os
        import numpy as np
        import mayavi.mlab
        from tqdm import tqdm

        binPath = r'D:\lidar3D\data\Lidar0\bin'
        # binPath = r'./data'
        files = os.listdir(binPath)
        files = [f for f in files if f[-4:] == '.bin']
        for ic in tqdm(range(len(files)), desc='progress '):
            f = files[ic]
            binname = os.path.join(binPath, f)
            pointcloud = np.fromfile(binname, dtype=np.float32, count=-1).reshape([-1, 4])
            x = pointcloud[:, 0]
            y = pointcloud[:, 1]
            z = pointcloud[:, 2]
            r = pointcloud[:, 3]
            d = np.sqrt(x ** 2 + y ** 2)  # Map distance from sensor
            degr = np.degrees(np.arctan(z / d))
            vals = 'height'
            if vals == "height":
                col = z
            else:
                col = d
            fig = mayavi.mlab.figure(bgcolor=(0, 0, 0), size=(640, 500))
            mayavi.mlab.points3d(x, y, z,
                                 col,  # Values used for color
                                 mode="point",
                                 colormap='spectral',  # 'bone', 'copper', 'gnuplot'
                                 # color=(0, 1, 0),  # Use a fixed (r, g, b) instead
                                 figure=fig,
                                 )
            mayavi.mlab.show()
            break  # only the first file is shown

    def visBinData():
        import os
        import numpy as np
        import open3d as o3d

        # Replace with the path to your bin files
        bin_file_path = r'E:\DAIR-V2X-I\kitti_s\training\velodyne'
        files = os.listdir(bin_file_path)
        for f in files[:]:
            # Read the bin file
            bin_file = os.path.join(bin_file_path, f)
            print(bin_file)
            points_np = np.fromfile(bin_file, dtype=np.float32)
            print(points_np.shape)
            points_np = points_np.reshape(-1, 4)
            print(points_np.shape)
            # Create an Open3D point cloud object
            pcd = o3d.geometry.PointCloud()
            pcd.points = o3d.utility.Vector3dVector(points_np[:, :3])
            # Visualize the point cloud
            o3d.visualization.draw_geometries([pcd])

Printing the data in a bin file

    def readbinfiles():
        import numpy as np

        print('\n' + '*' * 10 + 'myData' + '*' * 10)
        path = r'D:\lidar3D\data\mydatas1\kitti_my\training\velodyne\000003.bin'
        # b1 = np.fromfile(path, dtype=np.float)
        b2 = np.fromfile(path, dtype=np.float32, count=-1).reshape([-1, 4])
        print(type(b2))
        print(b2.shape)
        print(b2)
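The readers above all assume that a .bin file is a flat sequence of float32 values, four per point (x, y, z, intensity). The following is a minimal sketch of that layout as a write/read round trip; the helper names `write_kitti_bin` and `read_kitti_bin` are made up for illustration and are not part of any library.

```python
import os
import tempfile

import numpy as np


def write_kitti_bin(points_xyzi, path):
    """Write an (N, 4) array as flat float32 values: x, y, z, intensity."""
    arr = np.asarray(points_xyzi, dtype=np.float32)
    if arr.ndim != 2 or arr.shape[1] != 4:
        raise ValueError("expected an (N, 4) array")
    arr.tofile(path)


def read_kitti_bin(path):
    """Read a flat float32 file back into an (N, 4) array."""
    return np.fromfile(path, dtype=np.float32).reshape(-1, 4)


if __name__ == "__main__":
    # float64 input on purpose: the cast to float32 happens in the writer
    pts = np.array([[1.0, 2.0, 3.0, 0.0], [4.0, 5.0, 6.0, 0.5]])
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "000000.bin")
        write_kitti_bin(pts, path)
        back = read_kitti_bin(path)
        print(back.shape, back.dtype, os.path.getsize(path))
```

Each point takes 16 bytes, so a quick sanity check on a converted file is that its size is a multiple of 16. A file written as float64 is twice as large and, read as float32, yields meaningless coordinates.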

2.0.2 Prepare all the data under training first

————————training

______________calib

______________image_2

______________Label_2

______________velodyne

【1】velodyne

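This folder holds all the point cloud files in .bin format.

【2】image_2

This folder holds the images that correspond to the point cloud files in velodyne. Copy the images with the same names from the original dataset. The original images are .jpg files, while the KITTI loader appears to have the .png extension hard-coded. To avoid trouble, simply rename the images to .png first (this changes only the extension, not the image encoding).

    def jpg2png():
        import os

        path = r'E:\DAIR-V2X-I\example71\training\image_2'
        files = os.listdir(path)
        for f in files:
            fsrc = os.path.join(path, f)
            fdes = os.path.join(path, f[:-4] + '.png')
            os.rename(fsrc, fdes)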

【3】calib

There are two options. The first ignores the calibration in the DAIR-V2X data and simply copies calib/000000.txt from KITTI.

    if calibFlag:
        '''
        copy kitti-mini/training/calib/000000.txt content to my calib
        '''
        calibPath = r'E:\DAIR-V2X-I\training\calib'
        binPath = r'E:\DAIR-V2X-I\training\velodyne'
        kitti_miniCalibPath = r'D:\lidar3D\data\kitti_mini\training\calib/000000.txt'
        with open(kitti_miniCalibPath, "r") as calib_file:
            content = calib_file.read()
        for f in os.listdir(binPath):
            with open(os.path.join(calibPath, f[:-4] + ".txt"), "w") as wf:
                wf.write(content)

The second option extracts the calibration from single-infrastructure-side\calib and writes it to calib/xxxxxx.txt. Because DAIR-V2X has only one camera, its projection matrix is written to P0; R0_rect and Tr_velo_to_cam are also meant to be read from there. Since Tr_velo_to_cam is fixed, it is hard-coded in the script.

    if calibFlag1:
        # Needs `import os` and `import json`; mykittiCalibPath (the output
        # calib folder) must be defined earlier in the script.
        V2XVelodynePath = r'E:\DAIR-V2X-I\training\velodyne'
        files = os.listdir(V2XVelodynePath)
        files = [f[:-4] + '.txt' for f in files]
        for f in files:
            content = []
            cameintriPath = r'E:\DAIR-V2X-I\single-infrastructure-side\calib\camera_intrinsic'
            with open(os.path.join(cameintriPath, f[:-4] + '.json'), "r") as rf1:
                data = json.load(rf1)
            p0 = 'P0:'
            for d in data["P"]:
                p0 += ' {}'.format(d)
            R0_rect = 'R0_rect:'
            for r in data["R"]:
                R0_rect += ' {}'.format(r)
            content.append(p0 + '\n')
            content.append('P1: 1 0 0 0 0 1 0 0 0 0 1 0\n')
            content.append('P2: 1 0 0 0 0 1 0 0 0 0 1 0\n')
            content.append('P3: 1 0 0 0 0 1 0 0 0 0 1 0\n')
            # Note: the R0_rect string built above is not used; the identity is written.
            content.append('R0_rect: 1 0 0 0 1 0 0 0 1\n')
            # vir2camePath = os.path.join(V2XCalibPath, cliblist[1])
            # with open(os.path.join(vir2camePath, f[:-4] + '.json'), "r") as rf1:
            #     data = json.load(rf1)
            # R = data["rotation"]
            # t = data["translation"]
            Tr_velo_to_cam = 'Tr_velo_to_cam: -0.032055018882740139 -0.9974518923884874 0.020551248965447915 -2.190444561668236 -0.2240930139414797 0.002986041494130043 -0.8756800120708629 5.6360862566491909 0.9737455440255373 -0.041678350017788 -0.2023375046787095 1.4163664770754852\n'
            content.append(Tr_velo_to_cam)
            content.append('Tr_imu_to_velo: 1 0 0 0 0 1 0 0 0 0 1 0')
            fcalibname = os.path.join(mykittiCalibPath, f)
            # content[-1] = content[-1].replace('\n', '')
            with open(fcalibname, 'w', encoding='utf-8') as wf:
                wf.writelines(content)
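The calib file written here is plain text with one `name: v0 v1 ...` line per matrix. As a hedged sketch of that layout only, the helper below assembles the same seven lines from Python lists. The function names are hypothetical, and the identity defaults simply mirror the values hard-coded in the script, not a recommendation.

```python
def format_calib_line(name, values):
    """Return 'name: v0 v1 ...' without a trailing newline."""
    return name + ": " + " ".join(repr(float(v)) for v in values)


def build_kitti_calib(p0, tr_velo_to_cam, r0_rect=None):
    """Assemble the text of a KITTI-style calib file.

    p0 and tr_velo_to_cam are flat lists of 12 values (3x4, row-major);
    r0_rect is a flat list of 9 values (3x3) and defaults to the identity.
    """
    identity_3x4 = [1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0]
    identity_3x3 = [1, 0, 0, 0, 1, 0, 0, 0, 1]
    if len(p0) != 12 or len(tr_velo_to_cam) != 12:
        raise ValueError("P0 and Tr_velo_to_cam need 12 values each")
    if r0_rect is None:
        r0_rect = identity_3x3
    if len(r0_rect) != 9:
        raise ValueError("R0_rect needs 9 values")
    lines = [
        format_calib_line("P0", p0),
        format_calib_line("P1", identity_3x4),
        format_calib_line("P2", identity_3x4),
        format_calib_line("P3", identity_3x4),
        format_calib_line("R0_rect", r0_rect),
        format_calib_line("Tr_velo_to_cam", tr_velo_to_cam),
        format_calib_line("Tr_imu_to_velo", identity_3x4),
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder numbers, not a real calibration
    print(build_kitti_calib(list(range(12)), list(range(12))))
```

Passing the 9 values of `data["R"]` as `r0_rect` would write the rectification matrix read from the JSON instead of the identity.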

【4】Label_2

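Method: see "The process for regenerating the label_2 files" under 【4】 Problem record 3 in section 2.1.3.

The point cloud label files single-infrastructure-side\label\virtuallidar*.json are converted to txt files. Training uses 3 classes here, so only these types are kept: (["Car", "Cyclist", "Pedestrian"]) (['Cyclist', 'Car', 'Truck']).

Each label (object) is denoted temp. temp[0:16] has 16 columns in total, with the following meanings:

class [0] + truncated [1] + occluded [2] + observation angle [3] + image box left, top, right, bottom [4:8] + height, width, length [8:11] + xyz in camera coordinates [11:14] + heading angle [14] + confidence [15]

Note: only the test dataset has the final confidence column.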
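To make the column layout concrete, here is a small sketch that splits one label line into named fields. The function name and the dictionary keys are chosen for illustration, and the sample line uses made-up values.

```python
def parse_kitti_label_line(line):
    """Split one label_2 line into named fields (15 columns, or 16 with a score)."""
    parts = line.split()
    if len(parts) not in (15, 16):
        raise ValueError(f"expected 15 or 16 columns, got {len(parts)}")
    values = [float(v) for v in parts[1:]]
    return {
        "type": parts[0],               # column 0
        "truncated": values[0],         # column 1
        "occluded": int(values[1]),     # column 2
        "alpha": values[2],             # column 3
        "bbox": values[3:7],            # columns 4:8, left/top/right/bottom
        "dimensions": values[7:10],     # columns 8:11, height/width/length
        "location": values[10:13],      # columns 11:14, x/y/z in camera coordinates
        "rotation_y": values[13],       # column 14
        "score": values[14] if len(values) == 15 else None,  # column 15, optional
    }


if __name__ == "__main__":
    sample = "Car 0 0 0 0 0 0 0 1.52 1.80 4.30 -2.19 5.63 31.41 -1.57"
    print(parse_kitti_label_line(sample))
```

Running such a parser over a few generated files is a cheap way to catch lines with a missing column or a missing line break before starting training.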

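The json-to-txt code follows. Because only point cloud data is used for training, image-related values are replaced with 0.

The code below takes values directly from the json files: dimensions from the LiDAR coordinate system, position from the camera coordinate system.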

    if label_2Flag:
        # Needs os, json and numpy (np); judgefolderExit_mkdir and str2float are
        # helper functions defined elsewhere in the script.
        # 1 Create the label_2 folder
        label_2Path = r'E:\DAIR-V2X-I\kitti_s\training\label_22_kitti3clsNewXYZ'
        judgefolderExit_mkdir(label_2Path)
        V2XVelodynePath = r'E:\DAIR-V2X-I\kitti_s\training\velodyne'
        files = os.listdir(V2XVelodynePath)
        files = [f[:-4] + '.txt' for f in files]
        V2XLabelPathcamera = r'E:\DAIR-V2X-I\single-infrastructure-side\label\camera'
        V2XLabelPathlidar = r'E:\DAIR-V2X-I\single-infrastructure-side\label\virtuallidar'
        # 2 Generate the corresponding .txt label files
        labelsList = {}
        labelsListnew = {}
        for f in files[:]:
            # print(f)
            with open(os.path.join(V2XLabelPathcamera, f[:-4] + '.json'), "r") as rf1:
                dataca = json.load(rf1)
            with open(os.path.join(V2XLabelPathlidar, f[:-4] + '.json'), "r") as rf1:
                datali = json.load(rf1)
            label_list = []
            if len(dataca) != len(datali):
                print('Error: len(dataca) != len(datali)')
                continue
            for oi in range(len(dataca)):
                obj_ca = dataca[oi]
                obj_li = datali[oi]
                label_name = obj_ca["type"]
                label_nameli = obj_li["type"]
                if label_name != label_nameli:
                    print('Error: label_name != label_nameli')
                    continue
                # Count the original labels
                if label_name in labelsList.keys():
                    labelsList[label_name] += 1
                else:
                    labelsList[label_name] = 1
                # Update the label type (Car, Cyclist, ...)
                if label_name == 'Trafficcone' or label_name == 'ScooterRider' or label_name == 'Barrowlist':
                    continue
                elif label_name == 'Motorcyclist':
                    label_name = 'Cyclist'
                elif label_name == 'Van':
                    label_name = 'Car'
                elif label_name == 'Bus':
                    label_name = 'Car'
                elif label_name == 'Truck':
                    label_name = 'Car'
                if label_name in labelsListnew.keys():
                    labelsListnew[label_name] += 1
                else:
                    labelsListnew[label_name] = 1
                scale = obj_li["3d_dimensions"]  # dimensions from the LiDAR frame
                pos = obj_ca["3d_location"]  # position from the camera frame
                rot = obj_li["rotation"]
                alpha = obj_ca["alpha"]  # observation angle [3]
                cabox = obj_ca["2d_box"]  # image box left, top, right, bottom [4:8]
                occluded_state = obj_ca["occluded_state"]  # occluded [2]
                truncated_state = obj_ca["truncated_state"]  # truncated [1]
                tempFlag = True  # label_list.append(temp)
                if tempFlag == True:
                    # 2.1 Append the object type to temp
                    # temp[0:16], 16 columns in total:
                    # class[0] + truncated[1] + occluded[2] + observation angle[3] +
                    # image box left/top/right/bottom[4:8] + height/width/length[8:11] +
                    # xyz in camera coordinates[11:14] + heading angle[14] + confidence[15]
                    # Note: only the test dataset has the final confidence column
                    temp = label_name + ' ' + truncated_state + ' ' + occluded_state + ' 0 ' + \
                           cabox["xmin"] + ' ' + cabox["ymin"] + ' ' + cabox["xmax"] + ' ' + cabox["ymax"] + ' '
                    temp += (scale['h'].split('.')[0] + "." + scale['h'].split('.')[1][:2] + ' ')
                    temp += (scale['w'].split('.')[0] + "." + scale['w'].split('.')[1][:2] + ' ')
                    temp += (scale['l'].split('.')[0] + "." + scale['l'].split('.')[1][:2] + ' ')
                    # 2.2.1 pos_xyz
                    # temp += (pos['x'].split('.')[0] + "." + pos['x'].split('.')[1][:2] + ' ')
                    # temp += (pos['y'].split('.')[0] + "." + pos['y'].split('.')[1][:2] + ' ')
                    # temp += (pos['z'].split('.')[0] + "." + pos['z'].split('.')[1][:2] + ' ')
                    # 2.2 Always use the lidar_to_cam transformation matrix.
                    # The matrices map the point from lidar to cam and then to rect;
                    # the result (pos_xyz) is appended to temp.
                    # trans_Mat: Tr_velo_to_cam from calib
                    trans_Mat = np.array([[-3.205501888274e-02, -9.974518923885e-01, 2.055124896545e-02, -2.190444561668e+00],
                                          [-2.240930139415e-01, 2.986041494130e-03, -8.756800120709e-01, 5.636086256649e+00],
                                          [9.737455440255e-01, -4.167835001779e-02, -2.023375046787e-01, 1.416366477075e+00],
                                          [0, 0, 0, 1]])
                    # R0_rect: identity matrix
                    rect_Mat = np.array([[1, 0, 0, 0],
                                         [0, 1, 0, 0],
                                         [0, 0, 1, 0],
                                         [0, 0, 0, 1]])
                    ptx = str2float(pos["x"])
                    pty = str2float(pos["y"])
                    # print('pos["z"], scale["l"], type(scale["l"])\n', pos["z"], scale["l"], type(scale["l"]))
                    ptz = str2float(pos["z"]) - (0.5 * str2float(scale["l"]))
                    # Centre point treated as a point in the LiDAR frame
                    pt_in_lidar = np.array([[ptx], [pty], [ptz], [1.]])
                    pt_in_camera = np.matmul(trans_Mat, pt_in_lidar)
                    pt_in_rect = np.matmul(rect_Mat, pt_in_camera)
                    temp += str(pt_in_rect[0, 0]).split('.')[0] + "." + str(pt_in_rect[0, 0]).split('.')[1][0:2]
                    temp += " "
                    temp += str(pt_in_rect[1, 0]).split('.')[0] + "." + str(pt_in_rect[1, 0]).split('.')[1][0:2]
                    temp += " "
                    temp += str(pt_in_rect[2, 0]).split('.')[0] + "." + str(pt_in_rect[2, 0]).split('.')[1][0:2]
                    temp += " "
                    # 2.3 Append the heading angle (first wrap rot into [-pi, pi])
                    rot = - str2float(rot) - (np.pi / 2)
                    if rot > np.pi:
                        rot = rot - 2 * np.pi
                    elif rot < -np.pi:
                        rot = rot + 2 * np.pi
                    temp += str(rot).split('.')[0] + "." + str(rot).split('.')[1][0:2] + "\n"
                    label_list.append(temp)
            # Guard against frames in which every object was skipped
            if label_list:
                label_list[-1] = label_list[-1].replace('\n', '')
            # print(label_list)
            with open(os.path.join(label_2Path, f[:-4] + '.txt'), "w") as wf:
                wf.writelines(label_list)
        print('labels Statics = ', labelsList)
        print('labels Statics = ', labelsListnew)

The code below recomputes the position, always using the lidar_to_cam transformation matrix. ["Car", "Cyclist", "Pedestrian"]

    if label_2Flag:
        # Needs os, json and numpy (np); judgefolderExit_mkdir and str2float are
        # helper functions defined elsewhere in the script.
        # 1 Create the label_2 folder
        label_2Path = r'E:\DAIR-V2X-I\kitti\training\Label_2'
        judgefolderExit_mkdir(label_2Path)
        V2XVelodynePath = r'E:\DAIR-V2X-I\kitti\training\velodyne'
        files = os.listdir(V2XVelodynePath)
        files = [f[:-4] + '.txt' for f in files]
        V2XLabelPathcamera = r'E:\DAIR-V2X-I\single-infrastructure-side\label\camera'
        V2XLabelPathlidar = r'E:\DAIR-V2X-I\single-infrastructure-side\label\virtuallidar'
        # 2 Generate the corresponding .txt label files
        labelsList = {}
        labelsListnew = {}
        for f in files[:]:
            # print(f)
            with open(os.path.join(V2XLabelPathcamera, f[:-4] + '.json'), "r") as rf1:
                dataca = json.load(rf1)
            with open(os.path.join(V2XLabelPathlidar, f[:-4] + '.json'), "r") as rf1:
                datali = json.load(rf1)
            label_list = []
            if len(dataca) != len(datali):
                print('Error: len(dataca) != len(datali)')
                continue
            for oi in range(len(dataca)):
                obj_ca = dataca[oi]
                obj_li = datali[oi]
                label_name = obj_ca["type"]
                label_nameli = obj_li["type"]
                if label_name != label_nameli:
                    print('Error: label_name != label_nameli')
                    continue
                # Count the original labels
                if label_name in labelsList.keys():
                    labelsList[label_name] += 1
                else:
                    labelsList[label_name] = 1
                # Update the label type (Car, Cyclist, ...)
                if label_name == 'Trafficcone' or label_name == 'ScooterRider' or label_name == 'Barrowlist':
                    continue
                elif label_name == 'Motorcyclist':
                    label_name = 'Cyclist'
                elif label_name == 'Van':
                    label_name = 'Car'
                elif label_name == 'Bus':
                    label_name = 'Car'
                elif label_name == 'Truck':
                    label_name = 'Car'
                if label_name in labelsListnew.keys():
                    labelsListnew[label_name] += 1
                else:
                    labelsListnew[label_name] = 1
                scale = obj_li["3d_dimensions"]  # dimensions from the LiDAR frame
                pos = obj_ca["3d_location"]  # position from the camera frame
                rot = obj_li["rotation"]
                # With tempFlag = False this run only collects the class statistics;
                # set it to True (and enable the write below) to generate labels.
                tempFlag = False  # label_list.append(temp)
                if tempFlag == True:
                    # 2.1 Append the object type to temp
                    # temp[0:16], 16 columns in total:
                    # class[0] + truncated[1] + occluded[2] + observation angle[3] +
                    # image box left/top/right/bottom[4:8] + height/width/length[8:11] +
                    # xyz in camera coordinates[11:14] + heading angle[14] + confidence[15]
                    # Note: only the test dataset has the final confidence column
                    temp = label_name + ' 0 0 0 0 0 0 0 '
                    temp += (scale['h'].split('.')[0] + "." + scale['h'].split('.')[1][:2] + ' ')
                    temp += (scale['w'].split('.')[0] + "." + scale['w'].split('.')[1][:2] + ' ')
                    temp += (scale['l'].split('.')[0] + "." + scale['l'].split('.')[1][:2] + ' ')
                    # 2.2.1 pos_xyz
                    # temp += (pos['x'].split('.')[0] + "." + pos['x'].split('.')[1][:2] + ' ')
                    # temp += (pos['y'].split('.')[0] + "." + pos['y'].split('.')[1][:2] + ' ')
                    # temp += (pos['z'].split('.')[0] + "." + pos['z'].split('.')[1][:2] + ' ')
                    # 2.2 Always use the lidar_to_cam transformation matrix.
                    # The matrices map the point from lidar to cam and then to rect;
                    # the result (pos_xyz) is appended to temp.
                    trans_Mat = np.array([[6.927964000000e-03, -9.999722000000e-01, -2.757829000000e-03, -2.457729000000e-02],
                                          [-1.162982000000e-03, 2.749836000000e-03, -9.999955000000e-01, -6.127237000000e-02],
                                          [9.999753000000e-01, 6.931141000000e-03, -1.143899000000e-03, -3.321029000000e-01],
                                          [0, 0, 0, 1]])
                    rect_Mat = np.array([[9.999128000000e-01, 1.009263000000e-02, -8.511932000000e-03, 0],
                                         [-1.012729000000e-02, 9.999406000000e-01, -4.037671000000e-03, 0],
                                         [8.470675000000e-03, 4.123522000000e-03, 9.999556000000e-01, 0],
                                         [0, 0, 0, 1]])
                    ptx = str2float(pos["x"])
                    pty = str2float(pos["y"])
                    # print('pos["z"], scale["l"], type(scale["l"])\n', pos["z"], scale["l"], type(scale["l"]))
                    ptz = str2float(pos["z"]) - (0.5 * str2float(scale["l"]))
                    # Centre point treated as a point in the LiDAR frame
                    pt_in_lidar = np.array([[ptx], [pty], [ptz], [1.]])
                    pt_in_camera = np.matmul(trans_Mat, pt_in_lidar)
                    pt_in_rect = np.matmul(rect_Mat, pt_in_camera)
                    temp += str(pt_in_rect[0, 0]).split('.')[0] + "." + str(pt_in_rect[0, 0]).split('.')[1][0:2]
                    temp += " "
                    temp += str(pt_in_rect[1, 0]).split('.')[0] + "." + str(pt_in_rect[1, 0]).split('.')[1][0:2]
                    temp += " "
                    temp += str(pt_in_rect[2, 0]).split('.')[0] + "." + str(pt_in_rect[2, 0]).split('.')[1][0:2]
                    temp += " "
                    # 2.3 Append the heading angle (first wrap rot into [-pi, pi])
                    rot = - str2float(rot) - (np.pi / 2)
                    if rot > np.pi:
                        rot = rot - 2 * np.pi
                    elif rot < -np.pi:
                        rot = rot + 2 * np.pi
                    temp += str(rot).split('.')[0] + "." + str(rot).split('.')[1][0:2] + "\n"
                    label_list.append(temp)
            # label_list[-1] = label_list[-1].replace('\n', '')
            # with open(os.path.join(label_2Path, f[:-4] + '.txt'), "w") as wf:
            #     wf.writelines(label_list)
        print('labels Statics = ', labelsList)
        print('labels Statics = ', labelsListnew)
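The three numeric steps in the scripts above (LiDAR to rectified camera coordinates, wrapping the heading angle, two-decimal formatting) can be isolated into small functions. This is a sketch under stated assumptions rather than the author's code: the function names are hypothetical, `fmt2` rounds where the scripts truncate by string slicing, and `wrap_to_pi` uses a modulo so that it also handles angles more than one turn out of range.

```python
import math

import numpy as np


def wrap_to_pi(angle):
    """Wrap an angle in radians into the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def lidar_to_rect(point_xyz, tr_velo_to_cam, r0_rect=None):
    """Map a LiDAR-frame point into the rectified camera frame.

    tr_velo_to_cam: 12 values (3x4, row-major); r0_rect: 9 values or None
    for the identity.
    """
    tr = np.vstack([np.asarray(tr_velo_to_cam, dtype=float).reshape(3, 4),
                    [0.0, 0.0, 0.0, 1.0]])
    rect = np.eye(4)
    if r0_rect is not None:
        rect[:3, :3] = np.asarray(r0_rect, dtype=float).reshape(3, 3)
    pt = np.append(np.asarray(point_xyz, dtype=float), 1.0)  # homogeneous point
    return (rect @ tr @ pt)[:3]


def fmt2(value):
    """Two-decimal formatting; also safe for values that print as 1e-05."""
    return f"{value:.2f}"


if __name__ == "__main__":
    # Idealised axis swap only (x forward -> z, y left -> -x, z up -> -y),
    # not a real calibration
    axis_swap = [0, -1, 0, 0,
                 0, 0, -1, 0,
                 1, 0, 0, 0]
    xyz = lidar_to_rect([10.0, 2.0, 1.0], axis_swap)
    yaw = wrap_to_pi(-0.3 - math.pi / 2)
    print(" ".join(fmt2(v) for v in xyz), fmt2(yaw))
```

String slicing such as `str(x).split('.')[1][0:2]` fails with an IndexError when `str(x)` is in scientific notation (for example `1e-05`), which is one reason to prefer a format specifier.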

2.0.3 The data in kitti\testing mirrors the data in training

Simply move part of the data over to serve as the test data.

————————testing

______________calib

______________image_2

______________Label_2

______________velodyne

2.0.4 Writing the txt files in kitti/ImageSets

Note: in the code below, val.txt and test.txt list the same files.

    def filesPath2txt():
        import os, random

        # Raw strings, so the backslashes are not read as escape sequences
        pathtrain = r'E:\DAIR-V2X-I\kitti_s/training/velodyne/'
        pathtest = r'E:\DAIR-V2X-I\kitti_s/testing/velodyne/'
        txtPath = r'E:\DAIR-V2X-I\kitti_s/ImageSets/'
        txt_path_train = os.path.join(txtPath, 'train.txt')
        txt_path_val = os.path.join(txtPath, 'val.txt')
        # txt_path_trainval = os.path.join(txtPath, 'trainval.txt')
        txt_path_test = os.path.join(txtPath, 'test.txt')
        # mkdirImageSets(txt_path_train, txt_path_val, txt_path_trainval, txt_path_test)
        files = os.listdir(pathtrain)
        filesList = [f[:-4] + '\n' for f in files]
        filestr = filesList
        filestr[-1] = filestr[-1].replace('\n', '')
        with open(txt_path_train, 'w', encoding='utf-8') as wf:
            wf.writelines(filestr)
        files = os.listdir(pathtest)
        filesList = [f[:-4] + '\n' for f in files]
        filesval = filesList  # random.sample(filesList, 700)
        filesval[-1] = filesval[-1].replace('\n', '')
        with open(txt_path_val, 'w', encoding='utf-8') as wf:
            wf.writelines(filesval)
        # filestest = random.sample(filesList, 400)
        # filestest[-1] = filestest[-1].replace('\n', '')
        with open(txt_path_test, 'w', encoding='utf-8') as wf:
            wf.writelines(filesval)
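A variant of the same idea, as a sketch only: collect the frame ids in sorted order, keep only .bin files, and optionally hold out a reproducible random subset. The function names, `val_ratio` and `seed` are assumptions introduced for illustration; the script above instead lists the testing folder for val.txt and test.txt.

```python
import os
import random
import tempfile


def list_frame_ids(velodyne_dir):
    """Return the sorted frame ids (file names without .bin) in a folder."""
    return sorted(os.path.splitext(f)[0]
                  for f in os.listdir(velodyne_dir) if f.endswith(".bin"))


def split_ids(ids, val_ratio=0.2, seed=0):
    """Shuffle reproducibly and return (train_ids, val_ids), each sorted."""
    ids = list(ids)
    random.Random(seed).shuffle(ids)
    n_val = int(len(ids) * val_ratio)
    return sorted(ids[n_val:]), sorted(ids[:n_val])


def write_split(ids, path):
    """Write one id per line with no trailing newline (an empty list is fine)."""
    with open(path, "w", encoding="utf-8") as wf:
        wf.write("\n".join(ids))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for name in ("000002.bin", "000000.bin", "000001.bin", "notes.txt"):
            open(os.path.join(tmp, name), "w").close()
        train_ids, val_ids = split_ids(list_frame_ids(tmp), val_ratio=0.34)
        write_split(train_ids, os.path.join(tmp, "train.txt"))
        print(train_ids, val_ids)
```

Joining with `"\n"` avoids the `filestr[-1]` fix-up, which raises an IndexError when a folder is empty.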

2.1 Training with Paddle3D

cd ./Paddle3D

2.1.1 Data

【1】Data

The data is stored under Paddle3D/datasets with the following structure:

datasets/

datasets/KITTI/

————datasets/KITTI/ImageSets

————datasets/KITTI/testing

————datasets/KITTI/training

【2】Data preprocessing

Run the following command to preprocess the data:

python tools/create_det_gt_database.py --dataset_name kitti --dataset_root ./datasets/KITTI --save_dir ./datasets/KITTI

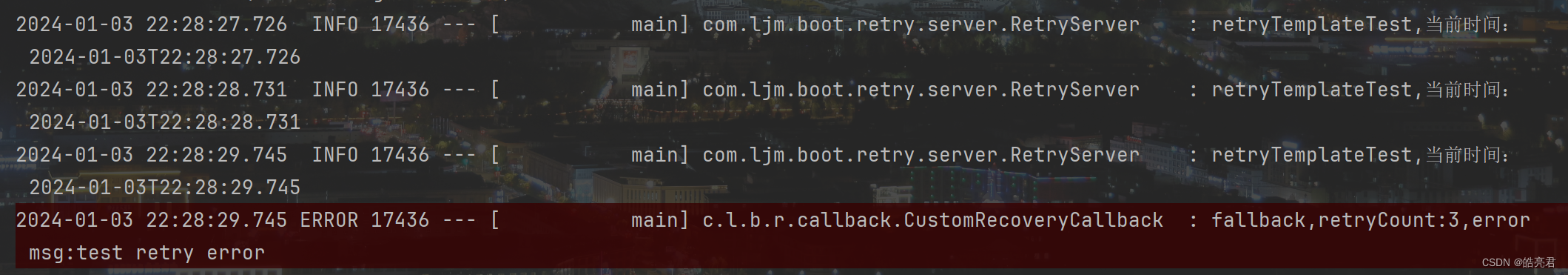

The command prints the following. When it finishes, the directory datasets/KITTI/kitti_train_gt_database has been created.

root/anaconda3/envs/pip_paddle_env/lib/python3.8/site-packages/setuptools/sandbox.py:13: DeprecationWarning: pkg_resources is deprecated as an API. See https://setuptools.pypa.io/en/latest/pkg_resources.html
  import pkg_resources

root/anaconda3/envs/pip_paddle_env/lib/python3.8/site-packages/pkg_resources/__init__.py:2871: DeprecationWarning: Deprecated call to `pkg_resources.declare_namespace('mpl_toolkits')`.

Implementing implicit namespace packages (as specified in PEP 420) is preferred to `pkg_resources.declare_namespace`. See https://setuptools.pypa.io/en/latest/references/keywords.html#keyword-namespace-packages
  declare_namespace(pkg)

root/anaconda3/envs/pip_paddle_env/lib/python3.8/site-packages/pkg_resources/__init__.py:2871: DeprecationWarning: Deprecated call to `pkg_resources.declare_namespace('google')`.

Implementing implicit namespace packages (as specified in PEP 420) is preferred to `pkg_resources.declare_namespace`. See https://setuptools.pypa.io/en/latest/references/keywords.html#keyword-namespace-packages
  declare_namespace(pkg)

ortools not installed, install it by "pip install ortools==9.1.9490" if you run BEVLaneDet model

2023-12-26 17:45:46,823 - INFO - Begin to generate a database for the KITTI dataset.

2023-12-26 17:46:06,774 - INFO - [##################################################] 100.00%

2023-12-26 17:46:07,012 - INFO - The database generation has been done.

2.1.2 Model configuration file

To avoid modifying the original model configuration file, first make a copy named centerpoint_pillars_016voxel_kitti_my.yml:

cp ./configs/centerpoint/centerpoint_pillars_016voxel_kitti.yml ./configs/centerpoint/centerpoint_pillars_016voxel_kitti_my.yml

Check the relevant settings in the file:

    train_dataset:
      type: KittiPCDataset
      dataset_root: datasets/KITTI
      ... ...
      class_names: ["Car", "Cyclist", "Pedestrian"]

2.1.3 Training procedure and debugging

【1】Train with the following command

    # python -m paddle.distributed.launch --gpus 0,1,2,3,4,5,6,7 tools/train.py --config configs/centerpoint/centerpoint_pillars_016voxel_kitti.yml --save_dir ./output_kitti --num_workers 4 --save_interval 5
    python tools/train.py --config configs/centerpoint/centerpoint_pillars_016voxel_kitti_my.yml --save_dir ./output_kitti --save_interval 5 > 112.log

Parameters

-m: launches the distributed training job via python -m paddle.distributed.launch.

Reference: https://www.paddlepaddle.org.cn/documentation/docs/zh/api/paddle/distributed/launch_cn.html

> 112.log: saves the printed output to 112.log

【2】Problem record 1

【2-1】SystemError: (Fatal) Blocking queue is killed because the data reader raises an exception.

【2-2】KeyError: 'DataLoader worker(1) caught KeyError with message:\nTraceback (most recent call last):\n File "/home/… …"… … self.sampler_per_class[cls_name].sampling(num_samples)\n KeyError: 'Car'\n'

Solution to 【2-1】 and 【2-2】

Reference 1: SystemError: (Fatal) Blocking queue is killed because the data reader raises an exception. I could not find the relevant location in the source code.

Reference 2: SystemError: (Fatal) Blocking queue is killed (baidu.com)

Following the method in Reference 2, set num_workers to 0 for training, as follows:

python tools/train.py --config configs/centerpoint/centerpoint_pillars_016voxel_kitti_my.yml --save_dir ./output_kitti --num_workers 0 --save_interval 5

With num_workers set to 0, training reports the following error:

/Paddle3D/paddle3d/transforms/sampling.py", line 172, in sampling
    sampling_annos = self.sampler_per_class[cls_name].sampling(num_samples)

KeyError: 'Car'

Printing self.sampler_per_class at line 172 of /Paddle3D/paddle3d/transforms/sampling.py with the following code gives {}.

print('self.sampler_per_class', self.sampler_per_class)

》》》》》》self.sampler_per_class {}

The cause appeared to be that class_balanced_sampling was set to False in the configuration file. Change class_balanced_sampling to True, as follows:

    train_dataset:
      type: KittiPCDataset
      dataset_root: datasets/KITTI
      ... ...
      mode: train
      class_balanced_sampling: True
      class_names: ["Car", "Cyclist", "Pedestrian"]

【3】Problem record 2

【3-1】ZeroDivisionError: float division by zero

The detailed error includes the following:

File "/home/mec/hulijuan/Paddle3D/paddle3d/datasets/kitti/kitti_det.py", line 89, in <listcomp>
    sampling_ratios = [balanced_frac / frac for frac in fracs]

ZeroDivisionError: float division by zero

Adding print('kitti_det.py', cls_dist) showed that my dataset contains no "Pedestrian" objects, while class_names includes that class. The fix is to add data that contains the "Pedestrian" class.
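A check of this kind can be run before training. The sketch below counts the class names in a label folder and reports any entry of class_names that never occurs; the function names are hypothetical and the demo uses a temporary folder with made-up label lines.

```python
import os
import tempfile
from collections import Counter


def count_label_classes(label_dir):
    """Count how often each class name (first column) occurs in the .txt labels."""
    counts = Counter()
    for name in os.listdir(label_dir):
        if not name.endswith(".txt"):
            continue
        with open(os.path.join(label_dir, name), "r", encoding="utf-8") as rf:
            for line in rf:
                parts = line.split()
                if parts:
                    counts[parts[0]] += 1
    return counts


def missing_classes(label_dir, class_names):
    """Return the configured class names that have no object in label_dir."""
    counts = count_label_classes(label_dir)
    return [c for c in class_names if counts[c] == 0]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        with open(os.path.join(tmp, "000000.txt"), "w", encoding="utf-8") as wf:
            wf.write("Car 0 0 0 0 0 0 0 1.5 1.8 4.3 1.0 1.0 20.0 0.1\n")
            wf.write("Cyclist 0 0 0 0 0 0 0 1.7 0.6 1.8 2.0 1.0 15.0 0.2")
        print(missing_classes(tmp, ["Car", "Cyclist", "Pedestrian"]))
```

An empty result means every configured class has at least one object, so none of the class fractions in the division above would be zero.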

【4】Problem record 3

【4-1】The same error as in 【2】 Problem record 1 (SystemError: … KeyError: …) appeared again, so the earlier fix probably did not address the root cause.

Start with the KeyError.

Add print statements to __init__() of class SamplingDatabase(TransformABC) in paddle3d/transforms/sampling.py, as follows:

    def __init__(self,
                 min_num_points_in_box_per_class: Dict[str, int],
                 max_num_samples_per_class: Dict[str, int],
                 database_anno_path: str,
                 database_root: str,
                 class_names: List[str],
                 ignored_difficulty: List[int] = None):
        self.min_num_points_in_box_per_class = min_num_points_in_box_per_class
        self.max_num_samples_per_class = max_num_samples_per_class
        self.database_anno_path = database_anno_path
        with open(database_anno_path, "rb") as f:
            database_anno = pickle.load(f)
        print('sampling.py__line58~~~~~~~~~~~~~~~~~~~~58database_anno: ', database_anno)
        if not osp.exists(database_root):
            raise ValueError(
                f"Database root path {database_root} does not exist!!!")
        self.database_root = database_root
        self.class_names = class_names
        self.database_anno = self._filter_min_num_points_in_box(database_anno)
        self.ignored_difficulty = ignored_difficulty
        if ignored_difficulty is not None:
            self.database_anno = self._filter_ignored_difficulty(
                self.database_anno)
        self.sampler_per_class = dict()
        print('sampling.py__line70~~~~~~~~~~~~~~~70database_anno: ', self.database_anno)
        for cls_name, annos in self.database_anno.items():
            self.sampler_per_class[cls_name] = Sampler(cls_name, annos)

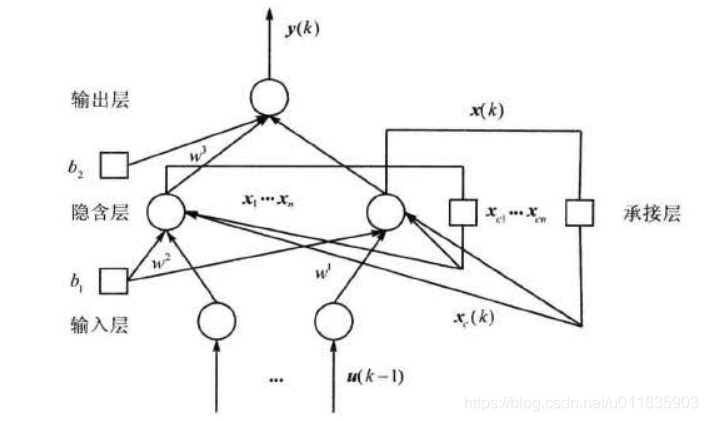

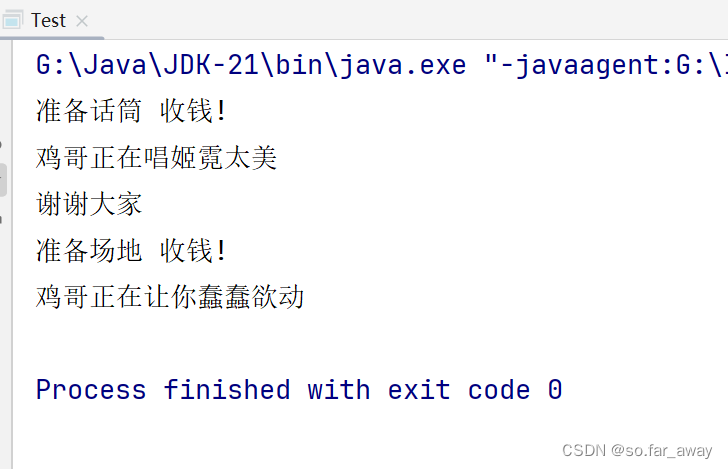

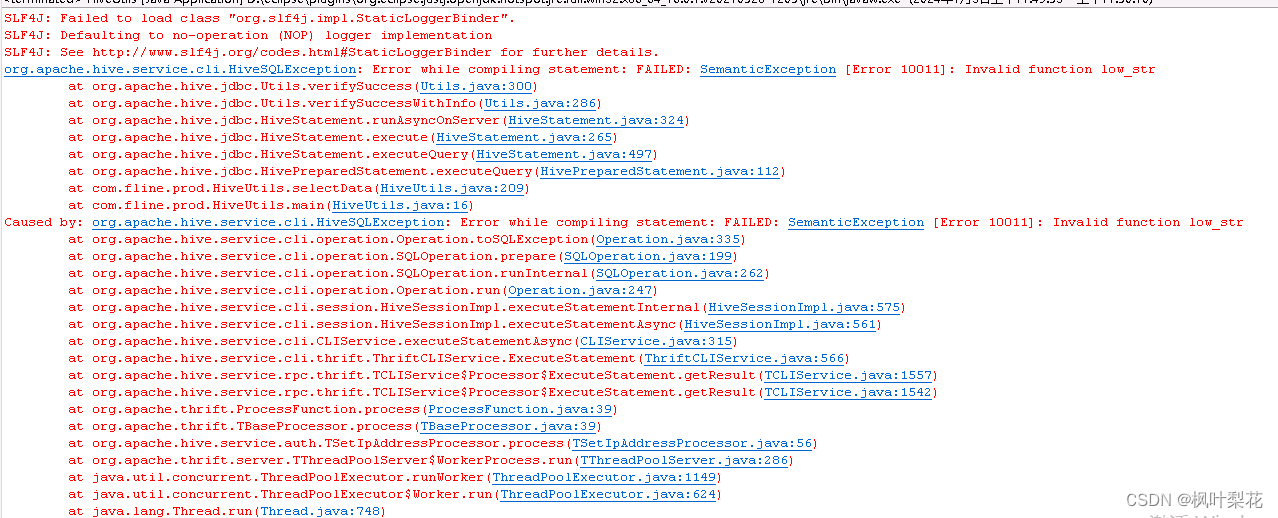

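The prints show that the output at sampling.py line 70 is an empty dictionary, while part of the output at sampling.py line 58 is as follows:

The figure above shows that num_points_in_box is 0. As a result, database_anno becomes an empty dictionary after the following line runs: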

self.database_anno = self._filter_min_num_points_in_box(database_anno)

The problem most likely lies in how the label files were generated for the point cloud files: the method was wrong.
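One way to test this suspicion directly is to count how many points of a frame fall inside a labelled box. The sketch below is not Paddle3D's implementation. It assumes that the box is given in the LiDAR frame, that `center` is the geometric centre of the box, that the dimensions are ordered length, width, height, and that `yaw` is a rotation about the z axis; the function name is hypothetical.

```python
import numpy as np


def count_points_in_box(points, center, dims_lwh, yaw):
    """Count the points inside a yaw-rotated 3D box (all in the LiDAR frame).

    points: (N, 3) or (N, 4) array; center: (x, y, z) of the box centre;
    dims_lwh: (length, width, height); yaw: rotation about z in radians.
    """
    pts = np.asarray(points, dtype=float)[:, :3] - np.asarray(center, dtype=float)
    c, s = np.cos(yaw), np.sin(yaw)
    # Rotate by -yaw about z so the box becomes axis-aligned
    local_x = c * pts[:, 0] + s * pts[:, 1]
    local_y = -s * pts[:, 0] + c * pts[:, 1]
    half_l, half_w, half_h = np.asarray(dims_lwh, dtype=float) / 2.0
    inside = ((np.abs(local_x) <= half_l)
              & (np.abs(local_y) <= half_w)
              & (np.abs(pts[:, 2]) <= half_h))
    return int(inside.sum())


if __name__ == "__main__":
    cloud = np.array([[0.0, 0.0, 0.0, 0.0],
                      [1.9, 0.0, 0.0, 0.0],
                      [0.0, 1.9, 0.0, 0.0],
                      [10.0, 10.0, 10.0, 0.0]])
    print(count_points_in_box(cloud, (0, 0, 0), (4.0, 2.0, 2.0), 0.0))
```

If boxes that clearly contain an object in the visualization report zero points, then the label position, the calibration, or the data type of the .bin file does not match the point cloud.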

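The process for regenerating the label_2 files is as follows:

1 Create the environment

conda create -n pcd2bin_env python=3.8

2 Activate the environment

conda activate pcd2bin_env

3 Install pypcd

3.1 Reference: https://blog.csdn.net/weixin_44450684/article/details/92812746

The steps are: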

git clone https://github.com/dimatura/pypcd

cd pypcd

git fetch origin pull/9/head:python3

git checkout python3

python3 setup.py install --user

python3

from pypcd import pypcd

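pc = pypcd.PointCloud.from_path('pointcloud.pcd')

The process for regenerating the label_2 files with the open-source program is as follows:

Source code (Baidu Netdisk link):

Program to run:

2.2 Dataset link, KITTI format (DAIR-V2X-I, 7058 frames) (valid long-term)

Size: 22G

Link: https://pan.baidu.com/s/1gG_S6Vtx4iWAfAVAfOxWWw

Extraction code: p48l

The data itself is fine, but the XYZ values in label_2 need to be regenerated with the label_2 method described above. This requires downloading the dataset's label archive, single-infrastructure-side-json.zip.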

2.3 Model

2.3.1 Model evaluation

2.3.2 Model testing

2.3.3 Exporting the model