前言

VioManager类里还有VioManagerHelper.cpp,VioManagerOptions.h这两个文件,也包含了一些函数,这次接着看这个 。

整体分析

void VioManager::initialize_with_gt(Eigen::Matrix<double, 17, 1> imustate)

给一个状态,然后初始化IMU的状态

bool VioManager::try_to_initialize(const ov_core::CameraData &message)

尝试初始化状态

void VioManager::retriangulate_active_tracks(const ov_core::CameraData &message)

此函数将对当前帧中的所有特征重新进行三角测量。

对于系统当前正在跟踪的所有特征,重新对它们进行三角测量。

这对于需要当前点云(例如闭环)的下游应用程序非常有用。

这将尝试对所有点进行三角测量,而不仅仅是更新中使用的点。

cv::Mat VioManager::get_historical_viz_image()

获取我们拥有的轨迹的清晰可视化图像。

std::vectorEigen::Vector3d VioManager::get_features_SLAM()

返回全局框架中的 3d SLAM 特征。

std::vectorEigen::Vector3d VioManager::get_features_ARUCO()

返回全局框架中的 3d ARUCO 特征。

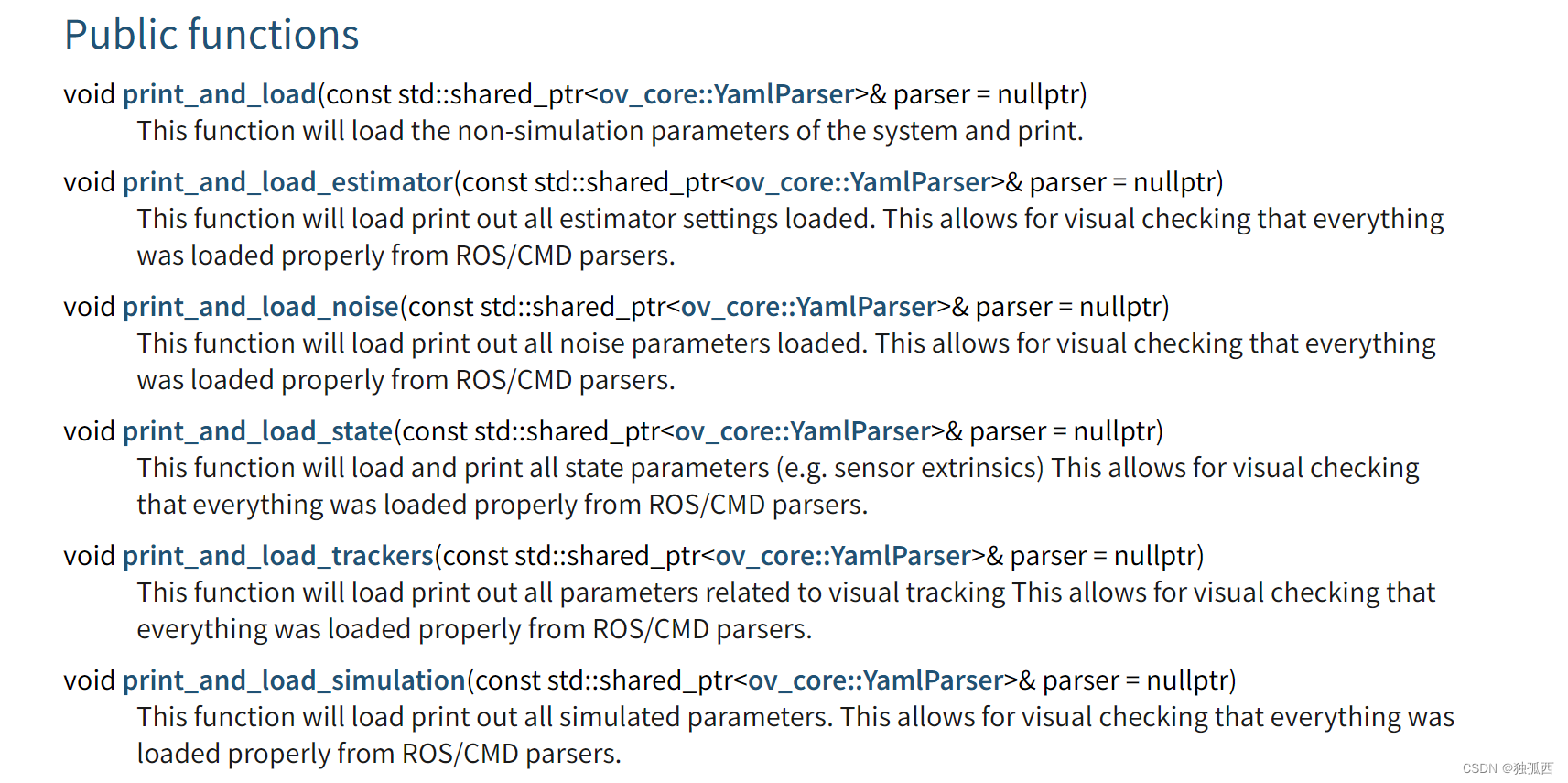

VioManagerOptions.h的主要函数如下:

它分为几个不同的部分:估计器、跟踪器和模拟。如

源码注释

/** OpenVINS: An Open Platform for Visual-Inertial Research* Copyright (C) 2018-2023 Patrick Geneva* Copyright (C) 2018-2023 Guoquan Huang* Copyright (C) 2018-2023 OpenVINS Contributors* Copyright (C) 2018-2019 Kevin Eckenhoff** This program is free software: you can redistribute it and/or modify* it under the terms of the GNU General Public License as published by* the Free Software Foundation, either version 3 of the License, or* (at your option) any later version.** This program is distributed in the hope that it will be useful,* but WITHOUT ANY WARRANTY; without even the implied warranty of* MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the* GNU General Public License for more details.** You should have received a copy of the GNU General Public License* along with this program. If not, see <https://www.gnu.org/licenses/>.*/#include "VioManager.h"#include "feat/Feature.h"

#include "feat/FeatureDatabase.h"

#include "feat/FeatureInitializer.h"

#include "types/LandmarkRepresentation.h"

#include "utils/print.h"#include "init/InertialInitializer.h"#include "state/Propagator.h"

#include "state/State.h"

#include "state/StateHelper.h"using namespace ov_core;

using namespace ov_type;

using namespace ov_msckf;void VioManager::initialize_with_gt(Eigen::Matrix<double, 17, 1> imustate) {//给一个状态,然后初始化IMU的状态// Initialize the system//初始化系统state->_imu->set_value(imustate.block(1, 0, 16, 1));state->_imu->set_fej(imustate.block(1, 0, 16, 1));// Fix the global yaw and position gauge freedoms// TODO: Why does this break out simulation consistency metrics?// 修复全局偏航和位置仪自由度// TODO:为什么要打破模拟一致性指标?std::vector<std::shared_ptr<ov_type::Type>> order = {state->_imu};Eigen::MatrixXd Cov = std::pow(0.02, 2) * Eigen::MatrixXd::Identity(state->_imu->size(), state->_imu->size());Cov.block(0, 0, 3, 3) = std::pow(0.017, 2) * Eigen::Matrix3d::Identity(); // qCov.block(3, 3, 3, 3) = std::pow(0.05, 2) * Eigen::Matrix3d::Identity(); // pCov.block(6, 6, 3, 3) = std::pow(0.01, 2) * Eigen::Matrix3d::Identity(); // v (static)StateHelper::set_initial_covariance(state, Cov, order);// Set the state time//设置状态时间state->_timestamp = imustate(0, 0);startup_time = imustate(0, 0);is_initialized_vio = true;// Cleanup any features older then the initialization time//清除所有比初始化时间早的特征trackFEATS->get_feature_database()->cleanup_measurements(state->_timestamp);if (trackARUCO != nullptr) {trackARUCO->get_feature_database()->cleanup_measurements(state->_timestamp);}// Print what we init'ed with//打印信息PRINT_DEBUG(GREEN "[INIT]: INITIALIZED FROM GROUNDTRUTH FILE!!!!!\n" RESET);PRINT_DEBUG(GREEN "[INIT]: orientation = %.4f, %.4f, %.4f, %.4f\n" RESET, state->_imu->quat()(0), state->_imu->quat()(1),state->_imu->quat()(2), state->_imu->quat()(3));PRINT_DEBUG(GREEN "[INIT]: bias gyro = %.4f, %.4f, %.4f\n" RESET, state->_imu->bias_g()(0), state->_imu->bias_g()(1),state->_imu->bias_g()(2));PRINT_DEBUG(GREEN "[INIT]: velocity = %.4f, %.4f, %.4f\n" RESET, state->_imu->vel()(0), state->_imu->vel()(1), state->_imu->vel()(2));PRINT_DEBUG(GREEN "[INIT]: bias accel = %.4f, %.4f, %.4f\n" RESET, state->_imu->bias_a()(0), state->_imu->bias_a()(1),state->_imu->bias_a()(2));PRINT_DEBUG(GREEN "[INIT]: position = %.4f, %.4f, %.4f\n" RESET, state->_imu->pos()(0), state->_imu->pos()(1), state->_imu->pos()(2));

}bool VioManager::try_to_initialize(const ov_core::CameraData &message) {//尝试初始化状态

//这应该调用我们的初始化程序并尝试初始化状态。

//将来我们应该从这里调用结构运动代码。

//该功能还可以用于在发生故障后重新初始化系统。// Directly return if the initialization thread is running// Note that we lock on the queue since we could have finished an update// And are using this queue to propagate the state forward. We should wait in this case// 如果初始化线程正在运行则直接返回// 请注意,我们锁定队列,因为我们本来可以完成更新// 并使用该队列向前传播状态。在这种情况下我们应该等待if (thread_init_running) {std::lock_guard<std::mutex> lck(camera_queue_init_mtx);camera_queue_init.push_back(message.timestamp);return false;}// If the thread was a success, then return success!//如果线程成功,返回成功if (thread_init_success) {return true;}// Run the initialization in a second thread so it can go as slow as it desires//在第二个线程运行初始化,从而可以随心所欲地慢下来thread_init_running = true;std::thread thread([&] {// Returns from our initializer//从我们的初始化器返回double timestamp;Eigen::MatrixXd covariance;std::vector<std::shared_ptr<ov_type::Type>> order;auto init_rT1 = boost::posix_time::microsec_clock::local_time();// Try to initialize the system// We will wait for a jerk if we do not have the zero velocity update enabled// Otherwise we can initialize right away as the zero velocity will handle the stationary case// 尝试初始化系统// 如果我们没有启用零速度更新,我们将等待一个急动// 否则我们可以立即初始化,因为零速度将处理静止情况bool wait_for_jerk = (updaterZUPT == nullptr);bool success = initializer->initialize(timestamp, covariance, order, state->_imu, wait_for_jerk);// If we have initialized successfully we will set the covariance and state elements as needed// TODO: set the clones and SLAM features here so we can start updating right away...// 如果初始化成功,我们将根据需要设置协方差和状态元素// TODO:在此处设置克隆和 SLAM 特征,以便我们可以立即开始更新...if (success) {// Set our covariance (state should already be set in the initializer)// 设置我们的协方差(状态应该已经在初始化器中设置)StateHelper::set_initial_covariance(state, covariance, order);// Set the state time//设置状态时间state->_timestamp = timestamp;startup_time = timestamp;// Cleanup any features older than the initialization time// Also increase the number of features to the desired amount during estimation// NOTE: we will split the total number of features over all cameras uniformly// 清理所有早于初始化时间的特征// 在估计期间还将特征数量增加到所需的数量// 注意:我们将统一划分所有相机的特征总数trackFEATS->get_feature_database()->cleanup_measurements(state->_timestamp);trackFEATS->set_num_features(std::floor((double)params.num_pts / (double)params.state_options.num_cameras));if (trackARUCO != nullptr) {trackARUCO->get_feature_database()->cleanup_measurements(state->_timestamp);}// If we are moving then don't do zero velocity update4// 如果我们正在移动,则不要进行零速度更新if (state->_imu->vel().norm() > params.zupt_max_velocity) {has_moved_since_zupt = true;}// Else we are good to go, print out our stats// 否则我们就可以开始了,打印出我们的统计数据auto init_rT2 = boost::posix_time::microsec_clock::local_time();PRINT_INFO(GREEN "[init]: successful initialization in %.4f seconds\n" RESET, (init_rT2 - init_rT1).total_microseconds() * 1e-6);PRINT_INFO(GREEN "[init]: orientation = %.4f, %.4f, %.4f, %.4f\n" RESET, state->_imu->quat()(0), state->_imu->quat()(1),state->_imu->quat()(2), state->_imu->quat()(3));PRINT_INFO(GREEN "[init]: bias gyro = %.4f, %.4f, %.4f\n" RESET, state->_imu->bias_g()(0), state->_imu->bias_g()(1),state->_imu->bias_g()(2));PRINT_INFO(GREEN "[init]: velocity = %.4f, %.4f, %.4f\n" RESET, state->_imu->vel()(0), state->_imu->vel()(1), state->_imu->vel()(2));PRINT_INFO(GREEN "[init]: bias accel = %.4f, %.4f, %.4f\n" RESET, state->_imu->bias_a()(0), state->_imu->bias_a()(1),state->_imu->bias_a()(2));PRINT_INFO(GREEN "[init]: position = %.4f, %.4f, %.4f\n" RESET, state->_imu->pos()(0), state->_imu->pos()(1), state->_imu->pos()(2));// Remove any camera times that are order then the initialized time// This can happen if the initialization has taken a while to perform// 删除所有顺序为初始化时间的相机时间// 如果初始化需要一段时间才能执行,则可能会发生这种情况std::lock_guard<std::mutex> lck(camera_queue_init_mtx);std::vector<double> camera_timestamps_to_init;for (size_t i = 0; i < camera_queue_init.size(); i++) {if (camera_queue_init.at(i) > timestamp) {camera_timestamps_to_init.push_back(camera_queue_init.at(i));}}// Now we have initialized we will propagate the state to the current timestep// In general this should be ok as long as the initialization didn't take too long to perform// Propagating over multiple seconds will become an issue if the initial biases are bad// 现在我们已经初始化了,我们将把状态传播到当前时间步// 一般来说,只要初始化执行时间不长就应该没问题// 如果初始偏差不好,那么在多秒内传播将成为一个问题size_t clone_rate = (size_t)((double)camera_timestamps_to_init.size() / (double)params.state_options.max_clone_size) + 1;for (size_t i = 0; i < camera_timestamps_to_init.size(); i += clone_rate) {propagator->propagate_and_clone(state, camera_timestamps_to_init.at(i));StateHelper::marginalize_old_clone(state);}PRINT_DEBUG(YELLOW "[init]: moved the state forward %.2f seconds\n" RESET, state->_timestamp - timestamp);thread_init_success = true;camera_queue_init.clear();} else {auto init_rT2 = boost::posix_time::microsec_clock::local_time();PRINT_DEBUG(YELLOW "[init]: failed initialization in %.4f seconds\n" RESET, (init_rT2 - init_rT1).total_microseconds() * 1e-6);thread_init_success = false;std::lock_guard<std::mutex> lck(camera_queue_init_mtx);camera_queue_init.clear();}// Finally, mark that the thread has finished running//最后,标记线程已经运行完毕thread_init_running = false;});// If we are single threaded, then run single threaded// Otherwise detach this thread so it runs in the background!// 如果我们是单线程,则运行单线程// 否则分离该线程,使其在后台运行!if (!params.use_multi_threading_subs) {thread.join();} else {thread.detach();}return false;

}void VioManager::retriangulate_active_tracks(const ov_core::CameraData &message) {//此函数将对当前帧中的所有特征重新进行三角测量。//对于系统当前正在跟踪的所有特征,重新对它们进行三角测量。//这对于需要当前点云(例如闭环)的下游应用程序非常有用。//这将尝试对所有点进行三角测量,而不仅仅是更新中使用的点。// Start timing//开始计时boost::posix_time::ptime retri_rT1, retri_rT2, retri_rT3;retri_rT1 = boost::posix_time::microsec_clock::local_time();// Clear old active track data//清楚以前活跃的跟踪数据assert(state->_clones_IMU.find(message.timestamp) != state->_clones_IMU.end());active_tracks_time = message.timestamp;active_image = cv::Mat();trackFEATS->display_active(active_image, 255, 255, 255, 255, 255, 255, " ");if (!active_image.empty()) {active_image = active_image(cv::Rect(0, 0, message.images.at(0).cols, message.images.at(0).rows));}active_tracks_posinG.clear();active_tracks_uvd.clear();// Current active tracks in our frontend// TODO: should probably assert here that these are at the message time...// 我们前端中当前活跃的跟踪// TODO:可能应该在这里断言这些是在消息时间...auto last_obs = trackFEATS->get_last_obs();auto last_ids = trackFEATS->get_last_ids();// New set of linear systems that only contain the latest track info// 一套新的线性系统,仅包含最新的跟踪信息std::map<size_t, Eigen::Matrix3d> active_feat_linsys_A_new;std::map<size_t, Eigen::Vector3d> active_feat_linsys_b_new;std::map<size_t, int> active_feat_linsys_count_new;std::unordered_map<size_t, Eigen::Vector3d> active_tracks_posinG_new;// Append our new observations for each camera//加入每个相机的新观测std::map<size_t, cv::Point2f> feat_uvs_in_cam0;for (auto const &cam_id : message.sensor_ids) {// IMU historical clone//IMU历史的克隆Eigen::Matrix3d R_GtoI = state->_clones_IMU.at(active_tracks_time)->Rot();Eigen::Vector3d p_IinG = state->_clones_IMU.at(active_tracks_time)->pos();// Calibration for this cam_id//对当前cam_id的标定Eigen::Matrix3d R_ItoC = state->_calib_IMUtoCAM.at(cam_id)->Rot();Eigen::Vector3d p_IinC = state->_calib_IMUtoCAM.at(cam_id)->pos();// Convert current CAMERA position relative to global//将当前相机坐标转变为全局坐标Eigen::Matrix3d R_GtoCi = R_ItoC * R_GtoI;Eigen::Vector3d p_CiinG = p_IinG - R_GtoCi.transpose() * p_IinC;// Loop through each measurement//每个测量循环assert(last_obs.find(cam_id) != last_obs.end());assert(last_ids.find(cam_id) != last_ids.end());for (size_t i = 0; i < last_obs.at(cam_id).size(); i++) {// Record this feature uv if is seen from cam0//如果能从cam0看到则记录这个特征size_t featid = last_ids.at(cam_id).at(i);cv::Point2f pt_d = last_obs.at(cam_id).at(i).pt;if (cam_id == 0) {feat_uvs_in_cam0[featid] = pt_d;}// Skip this feature if it is a SLAM feature (the state estimate takes priority)//如果是一个SLAM特征则跳过(状态估算优先)if (state->_features_SLAM.find(featid) != state->_features_SLAM.end()) {continue;}// Get the UV coordinate normal//获取法线UV坐标cv::Point2f pt_n = state->_cam_intrinsics_cameras.at(cam_id)->undistort_cv(pt_d);Eigen::Matrix<double, 3, 1> b_i;b_i << pt_n.x, pt_n.y, 1;b_i = R_GtoCi.transpose() * b_i;b_i = b_i / b_i.norm();Eigen::Matrix3d Bperp = skew_x(b_i);// Append to our linear system//加入线性系统Eigen::Matrix3d Ai = Bperp.transpose() * Bperp;Eigen::Vector3d bi = Ai * p_CiinG;if (active_feat_linsys_A.find(featid) == active_feat_linsys_A.end()) {active_feat_linsys_A_new.insert({featid, Ai});active_feat_linsys_b_new.insert({featid, bi});active_feat_linsys_count_new.insert({featid, 1});} else {active_feat_linsys_A_new[featid] = Ai + active_feat_linsys_A[featid];active_feat_linsys_b_new[featid] = bi + active_feat_linsys_b[featid];active_feat_linsys_count_new[featid] = 1 + active_feat_linsys_count[featid];}// For this feature, recover its 3d position if we have enough observations!//对这个特征,如果我们有足够观测则恢复3d坐标if (active_feat_linsys_count_new.at(featid) > 3) {// Recover feature estimate//恢复特征估计Eigen::Matrix3d A = active_feat_linsys_A_new[featid];Eigen::Vector3d b = active_feat_linsys_b_new[featid];Eigen::MatrixXd p_FinG = A.colPivHouseholderQr().solve(b);Eigen::MatrixXd p_FinCi = R_GtoCi * (p_FinG - p_CiinG);// Check A and p_FinCi//检查A和p_FinCiEigen::JacobiSVD<Eigen::Matrix3d> svd(A);Eigen::MatrixXd singularValues;singularValues.resize(svd.singularValues().rows(), 1);singularValues = svd.singularValues();double condA = singularValues(0, 0) / singularValues(singularValues.rows() - 1, 0);// If we have a bad condition number, or it is too close// Then set the flag for bad (i.e. set z-axis to nan)// 如果我们的条件数不好,或者太接近// 然后设置 bad 标志(即将 z 轴设置为 nan)if (std::abs(condA) <= params.featinit_options.max_cond_number && p_FinCi(2, 0) >= params.featinit_options.min_dist &&p_FinCi(2, 0) <= params.featinit_options.max_dist && !std::isnan(p_FinCi.norm())) {active_tracks_posinG_new[featid] = p_FinG;}}}}size_t total_triangulated = active_tracks_posinG.size();// Update active set of linear systems//更新线性系统活跃集active_feat_linsys_A = active_feat_linsys_A_new;active_feat_linsys_b = active_feat_linsys_b_new;active_feat_linsys_count = active_feat_linsys_count_new;active_tracks_posinG = active_tracks_posinG_new;retri_rT2 = boost::posix_time::microsec_clock::local_time();// Return if no features//没有特征则返回if (active_tracks_posinG.empty() && state->_features_SLAM.empty())return;// Append our SLAM features we have//如果有加入SLAM特征for (const auto &feat : state->_features_SLAM) {Eigen::Vector3d p_FinG = feat.second->get_xyz(false);if (LandmarkRepresentation::is_relative_representation(feat.second->_feat_representation)) {// Assert that we have an anchor pose for this feature//确保当前特征有一个锚点位姿assert(feat.second->_anchor_cam_id != -1);// Get calibration for our anchor camera//从我们的锚点相机得到标定Eigen::Matrix3d R_ItoC = state->_calib_IMUtoCAM.at(feat.second->_anchor_cam_id)->Rot();Eigen::Vector3d p_IinC = state->_calib_IMUtoCAM.at(feat.second->_anchor_cam_id)->pos();// Anchor pose orientation and position//锚点位姿旋转和位置Eigen::Matrix3d R_GtoI = state->_clones_IMU.at(feat.second->_anchor_clone_timestamp)->Rot();Eigen::Vector3d p_IinG = state->_clones_IMU.at(feat.second->_anchor_clone_timestamp)->pos();// Feature in the global frame//全局坐标系中的特征p_FinG = R_GtoI.transpose() * R_ItoC.transpose() * (feat.second->get_xyz(false) - p_IinC) + p_IinG;}active_tracks_posinG[feat.second->_featid] = p_FinG;}// Calibration of the first camera (cam0)//第一个相机的标定std::shared_ptr<Vec> distortion = state->_cam_intrinsics.at(0);std::shared_ptr<PoseJPL> calibration = state->_calib_IMUtoCAM.at(0);Eigen::Matrix<double, 3, 3> R_ItoC = calibration->Rot();Eigen::Matrix<double, 3, 1> p_IinC = calibration->pos();// Get current IMU clone state//得到当前IMU的克隆状态std::shared_ptr<PoseJPL> clone_Ii = state->_clones_IMU.at(active_tracks_time);Eigen::Matrix3d R_GtoIi = clone_Ii->Rot();Eigen::Vector3d p_IiinG = clone_Ii->pos();// 4. Next we can update our variable with the global position// We also will project the features into the current frame// 4. 接下来我们可以用全局位置更新我们的变量// 我们还将把特征投影到当前帧中for (const auto &feat : active_tracks_posinG) {// For now skip features not seen from current frame// TODO: should we publish other features not tracked in cam0??// 现在跳过当前帧中未看到的特征// TODO: 我们应该发布 cam0 中未跟踪的其他功能吗?if (feat_uvs_in_cam0.find(feat.first) == feat_uvs_in_cam0.end())continue;// Calculate the depth of the feature in the current frame// Project SLAM feature and non-cam0 features into the current frame of reference// 计算当前帧中特征的深度// 将 SLAM 特征和非 cam0 特征投影到当前参考系中Eigen::Vector3d p_FinIi = R_GtoIi * (feat.second - p_IiinG);Eigen::Vector3d p_FinCi = R_ItoC * p_FinIi + p_IinC;double depth = p_FinCi(2);Eigen::Vector2d uv_dist;if (feat_uvs_in_cam0.find(feat.first) != feat_uvs_in_cam0.end()) {uv_dist << (double)feat_uvs_in_cam0.at(feat.first).x, (double)feat_uvs_in_cam0.at(feat.first).y;} else {Eigen::Vector2d uv_norm;uv_norm << p_FinCi(0) / depth, p_FinCi(1) / depth;uv_dist = state->_cam_intrinsics_cameras.at(0)->distort_d(uv_norm);}// Skip if not valid (i.e. negative depth, or outside of image)// 如果无效则跳过(即负深度或图像外部)if (depth < 0.1) {continue;}// Skip if not valid (i.e. negative depth, or outside of image)// 如果无效则跳过(即负深度或图像外部)int width = state->_cam_intrinsics_cameras.at(0)->w();int height = state->_cam_intrinsics_cameras.at(0)->h();if (uv_dist(0) < 0 || (int)uv_dist(0) >= width || uv_dist(1) < 0 || (int)uv_dist(1) >= height) {// PRINT_DEBUG("feat %zu -> depth = %.2f | u_d = %.2f | v_d = %.2f\n",(*it2)->featid,depth,uv_dist(0),uv_dist(1));continue;}// Finally construct the uv and depth//最后构建uv和深度Eigen::Vector3d uvd;uvd << uv_dist, depth;active_tracks_uvd.insert({feat.first, uvd});}retri_rT3 = boost::posix_time::microsec_clock::local_time();// Timing informationPRINT_ALL(CYAN "[RETRI-TIME]: %.4f seconds for triangulation (%zu tri of %zu active)\n" RESET,(retri_rT2 - retri_rT1).total_microseconds() * 1e-6, total_triangulated, active_feat_linsys_A.size());PRINT_ALL(CYAN "[RETRI-TIME]: %.4f seconds for re-projection into current\n" RESET, (retri_rT3 - retri_rT2).total_microseconds() * 1e-6);PRINT_ALL(CYAN "[RETRI-TIME]: %.4f seconds total\n" RESET, (retri_rT3 - retri_rT1).total_microseconds() * 1e-6);

}cv::Mat VioManager::get_historical_viz_image() {//获取我们拥有的轨迹的清晰可视化图像。// Return if not ready yet//没准备好返回if (state == nullptr || trackFEATS == nullptr)return cv::Mat();// Build an id-list of what features we should highlight (i.e. SLAM)// 构建一个我们应该突出显示的功能的 ID 列表(即 SLAM)std::vector<size_t> highlighted_ids;for (const auto &feat : state->_features_SLAM) {highlighted_ids.push_back(feat.first);}// Text we will overlay if needed// 如果需要,我们将覆盖文本std::string overlay = (did_zupt_update) ? "zvupt" : "";overlay = (!is_initialized_vio) ? "init" : overlay;// Get the current active tracks// 获取当前活动跟踪cv::Mat img_history;trackFEATS->display_history(img_history, 255, 255, 0, 255, 255, 255, highlighted_ids, overlay);if (trackARUCO != nullptr) {trackARUCO->display_history(img_history, 0, 255, 255, 255, 255, 255, highlighted_ids, overlay);// trackARUCO->display_active(img_history, 0, 255, 255, 255, 255, 255, overlay);}// Finally return the image//最后返回图像return img_history;

}std::vector<Eigen::Vector3d> VioManager::get_features_SLAM() {//返回全局框架中的 3d SLAM 特征。std::vector<Eigen::Vector3d> slam_feats;for (auto &f : state->_features_SLAM) {if ((int)f.first <= 4 * state->_options.max_aruco_features)continue;if (ov_type::LandmarkRepresentation::is_relative_representation(f.second->_feat_representation)) {// Assert that we have an anchor pose for this feature// 确保我们有这个特征的锚点姿态assert(f.second->_anchor_cam_id != -1);// Get calibration for our anchor camera// 获取锚点相机的校准Eigen::Matrix<double, 3, 3> R_ItoC = state->_calib_IMUtoCAM.at(f.second->_anchor_cam_id)->Rot();Eigen::Matrix<double, 3, 1> p_IinC = state->_calib_IMUtoCAM.at(f.second->_anchor_cam_id)->pos();// Anchor pose orientation and position// 锚点姿态方向和位置Eigen::Matrix<double, 3, 3> R_GtoI = state->_clones_IMU.at(f.second->_anchor_clone_timestamp)->Rot();Eigen::Matrix<double, 3, 1> p_IinG = state->_clones_IMU.at(f.second->_anchor_clone_timestamp)->pos();// Feature in the global frame//全局坐标系中的位置slam_feats.push_back(R_GtoI.transpose() * R_ItoC.transpose() * (f.second->get_xyz(false) - p_IinC) + p_IinG);} else {slam_feats.push_back(f.second->get_xyz(false));}}return slam_feats;

}std::vector<Eigen::Vector3d> VioManager::get_features_ARUCO() {//返回全局框架中的 3d ARUCO 特征。std::vector<Eigen::Vector3d> aruco_feats;for (auto &f : state->_features_SLAM) {if ((int)f.first > 4 * state->_options.max_aruco_features)continue;if (ov_type::LandmarkRepresentation::is_relative_representation(f.second->_feat_representation)) {// Assert that we have an anchor pose for this featureassert(f.second->_anchor_cam_id != -1);// Get calibration for our anchor cameraEigen::Matrix<double, 3, 3> R_ItoC = state->_calib_IMUtoCAM.at(f.second->_anchor_cam_id)->Rot();Eigen::Matrix<double, 3, 1> p_IinC = state->_calib_IMUtoCAM.at(f.second->_anchor_cam_id)->pos();// Anchor pose orientation and positionEigen::Matrix<double, 3, 3> R_GtoI = state->_clones_IMU.at(f.second->_anchor_clone_timestamp)->Rot();Eigen::Matrix<double, 3, 1> p_IinG = state->_clones_IMU.at(f.second->_anchor_clone_timestamp)->pos();// Feature in the global framearuco_feats.push_back(R_GtoI.transpose() * R_ItoC.transpose() * (f.second->get_xyz(false) - p_IinC) + p_IinG);} else {aruco_feats.push_back(f.second->get_xyz(false));}}return aruco_feats;

}