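Python: Spider Crawler Engineering, from Beginner to Advanced (series):

- Python: Spider Crawler Engineering, from Beginner to Advanced (1): Creating a Scrapy Project

- Python: Spider Crawler Engineering, from Beginner to Advanced (2): Managing Scrapy Projects with Spider Admin Pro

This article works through a small hands-on example: creating a Scrapy project that is structured as a properly engineered spider project.

It assumes the reader already has basic Python programming knowledge.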

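Contents

- 1. Environment setup

- 1.1 Create a virtual environment

- 1.2 Install Scrapy

- 1.3 Create the spider project

- 2. Spider example: scraping wallpapers

- 2.1 Analyze the target site

- 2.2 Generate the spider file

- 2.3 Write the spider code

- 2.4 Run the spider

- 3. Summary

- 4. References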

1. Environment setup

Make sure Python 3 is installed:

$ python3 --version

Python 3.10.6

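1.1 Create a virtual environment

A virtual environment keeps this project's dependencies isolated from those of other projects, which avoids version conflicts between them.

# Create the project directory
$ mkdir scrapy-project

# Enter the project directory
$ cd scrapy-project

# Create a Python 3 virtual environment named venv
$ python3 -m venv venv

# A directory named venv now exists in the project directory
$ ls
venv

# Activate the virtual environment; once active, the prompt is prefixed with the marker (venv)
$ source venv/bin/activate
(venv)$

1.2 Install Scrapy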

Scrapy is itself a Python library, so it can be installed easily with pip.

$ pip install Scrapy

# Check the Scrapy version

$ scrapy version

Scrapy 2.9.0
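As a quick sanity check after these two steps, a short script can confirm that the interpreter is running inside the virtual environment and that Scrapy can be found from it. This is a minimal sketch and not part of the original walkthrough; the function name environment_report is made up for illustration, and it relies only on the standard library.

```python
import importlib.util
import sys


def environment_report() -> dict:
    """Summarise whether we are in a venv and whether Scrapy can be found."""
    return {
        # In a venv, sys.prefix points at the venv and sys.base_prefix at the base install.
        "in_virtualenv": sys.prefix != sys.base_prefix,
        # find_spec returns None when a top-level package is not installed.
        "scrapy_installed": importlib.util.find_spec("scrapy") is not None,
    }


if __name__ == "__main__":
    print(environment_report())
```

If either value is False, the virtual environment is probably not activated, or pip installed Scrapy into a different interpreter.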

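1.3 Create the spider project

Create a spider project named web_spiders in the current directory.

Note that the final . on the command line must not be omitted: it is the project_dir argument and tells Scrapy to create the project in the current directory.

# Command format: scrapy startproject <project_name> [project_dir]
# Note: the project name may contain only digits, letters, and underscores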

$ scrapy startproject web_spiders .
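The naming rule noted above can be checked before running the command. The sketch below is one reading of that rule, not Scrapy's own code: it additionally assumes that the name must not start with a digit, since the project name becomes a Python package name, and the helper name is_valid_project_name is hypothetical.

```python
import re

# Assumption: letters, digits and underscores only, and no leading digit,
# because the project name is used as a Python package name.
_PROJECT_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def is_valid_project_name(name: str) -> bool:
    """Return True if the name fits the rule sketched above."""
    return _PROJECT_NAME.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ("web_spiders", "web-spiders", "2023_spiders"):
        print(candidate, is_valid_project_name(candidate))
```

Scrapy performs its own validation when startproject runs, so a helper like this is only useful for catching an unsuitable name early, for example in a setup script.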

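Project structure:

$ tree -I venv
.
├── scrapy.cfg
└── web_spiders
    ├── __init__.py
    ├── items.py
    ├── middlewares.py
    ├── pipelines.py
    ├── settings.py
    └── spiders
        └── __init__.py

We do not need to worry yet about what each of these files and directories is for; we will come to them step by step as the need arises.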

2、爬虫示例-爬取壁纸

程序目的:爬取壁纸数据

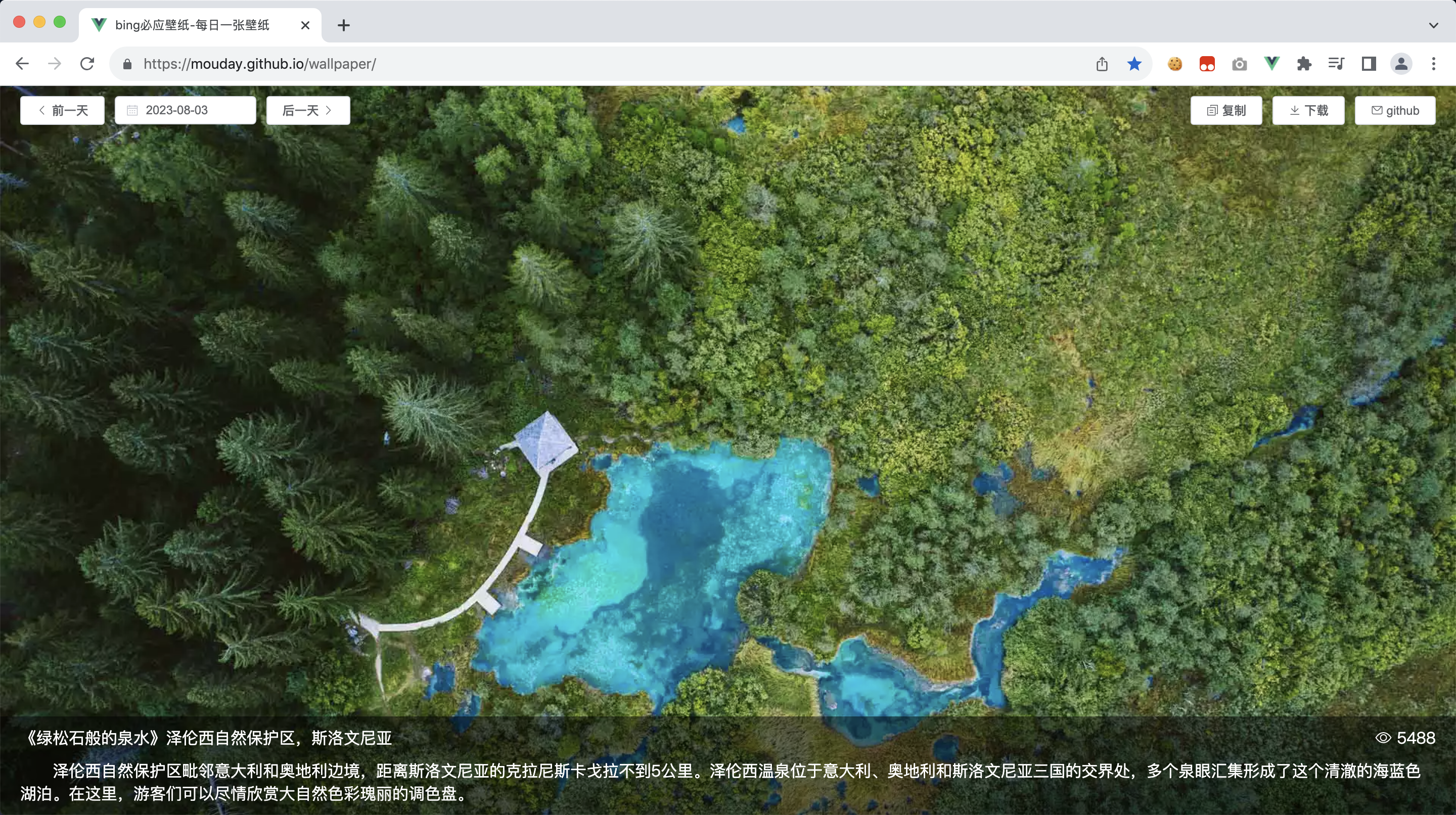

目标网站:https://mouday.github.io/wallpaper/

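2.1 Analyze the target site

A little analysis of the site is enough to find the data endpoint:

https://mouday.github.io/wallpaper-database/2023/08/03.json

The returned data has the following structure: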

{
  "date": "2023-08-03",
  "headline": "绿松石般的泉水",
  "title": "泽伦西自然保护区,斯洛文尼亚",
  "description": "泽伦西温泉位于意大利、奥地利和斯洛文尼亚三国的交界处,多个泉眼汇集形成了这个清澈的海蓝色湖泊。在这里,游客们可以尽情欣赏大自然色彩瑰丽的调色盘。",
  "image_url": "https://cn.bing.com/th?id=OHR.ZelenciSprings_ZH-CN8022746409_1920x1080.webp",
  "main_text": "泽伦西自然保护区毗邻意大利和奥地利边境,距离斯洛文尼亚的克拉尼斯卡戈拉不到5公里。"
}
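The endpoint path appears to encode the date as year/month/day. Assuming other days follow the same pattern (only 2023-08-03 is shown here), the URL for a given date could be built as follows; wallpaper_url and BASE_URL are illustrative names, not something the site or Scrapy defines.

```python
from datetime import date

# Prefix taken from the endpoint shown above.
BASE_URL = "https://mouday.github.io/wallpaper-database"


def wallpaper_url(day: date) -> str:
    """Build the JSON endpoint URL for one day, e.g. .../2023/08/03.json."""
    # Assumption: every day follows the zero-padded YYYY/MM/DD.json layout.
    return f"{BASE_URL}/{day:%Y/%m/%d}.json"


if __name__ == "__main__":
    print(wallpaper_url(date(2023, 8, 3)))
```

A list of such URLs could later serve as the spider's start URLs if more than one day is needed.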

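2.2 Generate the spider file

Scrapy's command-line tool can also generate a spider file quickly.

# Command format: scrapy genspider <spider_name> <domain>

$ scrapy genspider wallpaper mouday.github.io

A spider file named wallpaper.py now exists in the spiders directory: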

$ tree -I venv

.

├── scrapy.cfg

└── web_spiders
    ├── __init__.py
    ├── items.py
    ├── middlewares.py
    ├── pipelines.py
    ├── settings.py
    └── spiders
        ├── __init__.py
        └── wallpaper.py  # the newly generated spider file

Contents of the generated wallpaper.py:

import scrapy


class WallpaperSpider(scrapy.Spider):
    name = "wallpaper"
    allowed_domains = ["mouday.github.io"]
    start_urls = ["https://mouday.github.io"]

    def parse(self, response):
        pass

2.3 Write the spider code

Modify wallpaper.py as follows to add our own logic:

import scrapy
from scrapy.http import Response


class WallpaperSpider(scrapy.Spider):
    # Spider name
    name = "wallpaper"

    # Domain of the crawl target
    allowed_domains = ["mouday.github.io"]

    # Replace the start URL with the address we actually need
    # start_urls = ["https://mouday.github.io"]
    start_urls = ["https://mouday.github.io/wallpaper-database/2023/08/03.json"]

    # Add a type annotation so the IDE can help as we write code
    # def parse(self, response):
    def parse(self, response: Response, **kwargs):
        # Do nothing except print the fetched text
        print(response.text)

2.4 Run the spider

# Command format: scrapy crawl <spider_name>

$ scrapy crawl wallpaper

2023-08-03 22:57:34 [scrapy.utils.log] INFO: Scrapy 2.9.0 started (bot: web_spiders)

2023-08-03 22:57:34 [scrapy.utils.log] INFO: Versions: lxml 4.9.3.0, libxml2 2.9.4, cssselect 1.2.0, parsel 1.8.1, w3lib 2.1.2, Twisted 22.10.0, Python 3.10.6 (main, Aug 13 2022, 09:17:23) [Clang 10.0.1 (clang-1001.0.46.4)], pyOpenSSL 23.2.0 (OpenSSL 3.1.2 1 Aug 2023), cryptography 41.0.3, Platform macOS-10.14.4-x86_64-i386-64bit

2023-08-03 22:57:34 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'web_spiders','FEED_EXPORT_ENCODING': 'utf-8','NEWSPIDER_MODULE': 'web_spiders.spiders','REQUEST_FINGERPRINTER_IMPLEMENTATION': '2.7','ROBOTSTXT_OBEY': True,'SPIDER_MODULES': ['web_spiders.spiders'],'TWISTED_REACTOR': 'twisted.internet.asyncioreactor.AsyncioSelectorReactor'}

2023-08-03 22:57:34 [asyncio] DEBUG: Using selector: KqueueSelector

2023-08-03 22:57:34 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.asyncioreactor.AsyncioSelectorReactor

2023-08-03 22:57:34 [scrapy.utils.log] DEBUG: Using asyncio event loop: asyncio.unix_events._UnixSelectorEventLoop

2023-08-03 22:57:34 [scrapy.extensions.telnet] INFO: Telnet Password: 5083c2db86c14a1f

2023-08-03 22:57:34 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats','scrapy.extensions.telnet.TelnetConsole','scrapy.extensions.memusage.MemoryUsage','scrapy.extensions.logstats.LogStats']

2023-08-03 22:57:34 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.robotstxt.RobotsTxtMiddleware','scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware','scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware','scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware','scrapy.downloadermiddlewares.useragent.UserAgentMiddleware','scrapy.downloadermiddlewares.retry.RetryMiddleware','scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware','scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware','scrapy.downloadermiddlewares.redirect.RedirectMiddleware','scrapy.downloadermiddlewares.cookies.CookiesMiddleware','scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware','scrapy.downloadermiddlewares.stats.DownloaderStats']

2023-08-03 22:57:34 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware','scrapy.spidermiddlewares.offsite.OffsiteMiddleware','scrapy.spidermiddlewares.referer.RefererMiddleware','scrapy.spidermiddlewares.urllength.UrlLengthMiddleware','scrapy.spidermiddlewares.depth.DepthMiddleware']

2023-08-03 22:57:34 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2023-08-03 22:57:34 [scrapy.core.engine] INFO: Spider opened

2023-08-03 22:57:34 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2023-08-03 22:57:34 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2023-08-03 22:57:36 [scrapy.core.engine] DEBUG: Crawled (404) <GET https://mouday.github.io/robots.txt> (referer: None)

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 5 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 10 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 11 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 14 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 17 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 19 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 20 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 22 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 23 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 25 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 26 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 28 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 29 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 30 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 31 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 32 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 33 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 34 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 35 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 39 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 44 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 45 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 46 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 66 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 71 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 76 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 77 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 81 without any user agent to enforce it on.

2023-08-03 22:57:36 [protego] DEBUG: Rule at line 85 without any user agent to enforce it on.

2023-08-03 22:57:36 [scrapy.core.engine] DEBUG: Crawled (200) <GET https://mouday.github.io/wallpaper-database/2023/08/03.json> (referer: None)

{"date": "2023-08-03","headline": "绿松石般的泉水","title": "泽伦西自然保护区,斯洛文尼亚","description": "泽伦西温泉位于意大利、奥地利和斯洛文尼亚三国的交界处,多个泉眼汇集形成了这个清澈的海蓝色湖泊。在这里,游客们可以尽情欣赏大自然色彩瑰丽的调色盘。","image_url": "https://cn.bing.com/th?id=OHR.ZelenciSprings_ZH-CN8022746409_1920x1080.webp","main_text": "泽伦西自然保护区毗邻意大利和奥地利边境,距离斯洛文尼亚的克拉尼斯卡戈拉不到5公里。"

}

2023-08-03 22:57:36 [scrapy.core.engine] INFO: Closing spider (finished)

2023-08-03 22:57:36 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 476,'downloader/request_count': 2,'downloader/request_method_count/GET': 2,'downloader/response_bytes': 7201,'downloader/response_count': 2,'downloader/response_status_count/200': 1,'downloader/response_status_count/404': 1,'elapsed_time_seconds': 2.092338,'finish_reason': 'finished','finish_time': datetime.datetime(2023, 8, 3, 14, 57, 36, 733301),'httpcompression/response_bytes': 9972,'httpcompression/response_count': 2,'log_count/DEBUG': 34,'log_count/INFO': 10,'memusage/max': 61906944,'memusage/startup': 61906944,'response_received_count': 2,'robotstxt/request_count': 1,'robotstxt/response_count': 1,'robotstxt/response_status_count/404': 1,'scheduler/dequeued': 1,'scheduler/dequeued/memory': 1,'scheduler/enqueued': 1,'scheduler/enqueued/memory': 1,'start_time': datetime.datetime(2023, 8, 3, 14, 57, 34, 640963)}

2023-08-03 22:57:36 [scrapy.core.engine] INFO: Spider closed (finished)

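Running the spider produces a lot of log output, which we can ignore for now. The important part is that the data we wanted has been fetched: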

{"date": "2023-08-03","headline": "绿松石般的泉水","title": "泽伦西自然保护区,斯洛文尼亚","description": "泽伦西温泉位于意大利、奥地利和斯洛文尼亚三国的交界处,多个泉眼汇集形成了这个清澈的海蓝色湖泊。在这里,游客们可以尽情欣赏大自然色彩瑰丽的调色盘。","image_url": "https://cn.bing.com/th?id=OHR.ZelenciSprings_ZH-CN8022746409_1920x1080.webp","main_text": "泽伦西自然保护区毗邻意大利和奥地利边境,距离斯洛文尼亚的克拉尼斯卡戈拉不到5公里。"

}
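The spider above only prints the raw response text. A natural next step is to parse that text as JSON and pick out the fields of interest. The helper below is a sketch under that assumption: extract_wallpaper is a hypothetical name, the three fields are only one possible selection, and it uses the standard json module so that it can be tried without Scrapy.

```python
import json


def extract_wallpaper(text: str) -> dict:
    """Parse the JSON body and keep a few fields from the sample response."""
    data = json.loads(text)
    # .get() returns None for a missing key instead of raising KeyError.
    return {
        "date": data.get("date"),
        "title": data.get("title"),
        "image_url": data.get("image_url"),
    }


if __name__ == "__main__":
    sample = (
        '{"date": "2023-08-03", '
        '"title": "泽伦西自然保护区,斯洛文尼亚", '
        '"image_url": "https://cn.bing.com/th?id=OHR.ZelenciSprings_ZH-CN8022746409_1920x1080.webp"}'
    )
    print(extract_wallpaper(sample))
```

Inside the spider, parse could then yield extract_wallpaper(response.text) instead of printing it, so that Scrapy treats the resulting dict as a scraped item.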

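3. Summary

In the steps above we wrote only two lines of code ourselves, and that was enough to fetch the data.

Along the way we also met several commands. With the command-line tool that Scrapy provides, we can:

- Create a spider project:

scrapy startproject web_spiders .

- Generate a spider file:

scrapy genspider wallpaper mouday.github.io

- Run the spider:

scrapy crawl wallpaper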

4. References

- Scrapy installation guide: https://docs.scrapy.org/en/latest/intro/install.html

- Scrapy command-line tool documentation: https://docs.scrapy.org/en/latest/topics/commands.html