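A local knowledge base addresses the problem of combining local resources with AI, and lays the groundwork for later applications that manage existing assets. For how to build one, see parts (2), (3), and (4) of the series "Building a Self-Hosted Knowledge Base with LangChain and Tongyi Qianwen".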

This post records two things:

1 Pitfalls encountered during setup

2 Switching the vector database to ES7

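1 Pitfalls encountered during setup

1) Installing the bce-embedding-base_v1 model

The model has to be fetched with git clone, but because it is large, git lfs must be installed first to handle the large files. Clone only after that.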

sudo apt-get install git-lfs

Once git lfs is installed, change into the directory where the model should live and run the clone:

git clone https://www.modelscope.cn/maidalun/bce-embedding-base_v1.git
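If the repository is cloned without git lfs in place, the large files typically arrive as small text stubs (Git LFS pointer files) instead of real weights. The sketch below is one way to check for that; the helper name `find_lfs_pointers` is made up for illustration, and it relies only on the fact that a pointer file begins with the line `version https://git-lfs.github.com/spec/v1`.

```python
from pathlib import Path

# Every Git LFS pointer file starts with this line.
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def find_lfs_pointers(model_dir):
    """Return files under model_dir that are still LFS pointer stubs."""
    stubs = []
    for path in sorted(Path(model_dir).rglob("*")):
        # Skip git's own metadata and anything that is not a regular file.
        if ".git" in path.parts or not path.is_file():
            continue
        with path.open("rb") as fh:
            if fh.read(len(LFS_POINTER_PREFIX)) == LFS_POINTER_PREFIX:
                stubs.append(path)
    return stubs
```

Calling `find_lfs_pointers("bce-embedding-base_v1")` from the clone's parent directory should return an empty list when the weights were downloaded properly. If it lists files, running `git lfs pull` inside the repository is the usual remedy.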

2) Missing NLTK corpora

The error reports that two resources are missing: punkt and averaged_perceptron_tagger.

The fix is to download them offline; see "NLTK: Installing punkt Offline".
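After copying the offline downloads into place, a quick check can confirm that the two resources sit where NLTK will look for them. This is a minimal sketch using only the standard library. It assumes the conventional layout (`tokenizers/punkt` and `taggers/averaged_perceptron_tagger`, either unpacked or as `.zip`) and checks only the `NLTK_DATA` environment variable and `~/nltk_data`, while NLTK itself searches a few more system directories. The helper names are hypothetical.

```python
import os
from pathlib import Path

# Resource name -> sub-folder of nltk_data it conventionally lives in.
REQUIRED = {
    "punkt": "tokenizers",
    "averaged_perceptron_tagger": "taggers",
}


def candidate_dirs():
    """Directories to inspect: NLTK_DATA entries (if set) plus ~/nltk_data."""
    env = os.environ.get("NLTK_DATA", "")
    dirs = [Path(p) for p in env.split(os.pathsep) if p]
    dirs.append(Path.home() / "nltk_data")
    return dirs


def missing_resources(dirs):
    """Return the required resource names not found in any of dirs."""
    missing = []
    for name, category in REQUIRED.items():
        found = any(
            (d / category / name).is_dir()
            or (d / category / f"{name}.zip").is_file()
            for d in dirs
        )
        if not found:
            missing.append(name)
    return missing
```

`missing_resources(candidate_dirs())` returns an empty list once both resources are in one of the checked directories.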

2 Switching the vector database to ES7

The original posts use Chroma, but I already had ES7 installed, so I used ES7 as the vector database instead.
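Before pointing LangChain at the cluster, it can help to confirm that Elasticsearch is reachable and really is a 7.x release. The sketch below assumes an unauthenticated node at `http://localhost:9200` (the URL used in this post) and reads the `version.number` field that the root endpoint returns. The function names are made up for illustration.

```python
import json
from urllib.request import urlopen


def fetch_cluster_info(url="http://localhost:9200", timeout=5):
    """GET the root endpoint, which returns cluster metadata as JSON."""
    with urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def major_version(info):
    """Extract the major version number from the root-endpoint payload."""
    return int(info["version"]["number"].split(".")[0])


# Usage (requires a running node):
#   info = fetch_cluster_info()
#   print(info["version"]["number"], major_version(info) == 7)
```

A cluster with security enabled would need credentials added to the request, which this sketch does not handle.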

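The original posts contain two pieces of code, one that imports the local knowledge base and one that queries it. My modified versions follow.

Importing the local knowledge base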

    import time

    from langchain.text_splitter import RecursiveCharacterTextSplitter
    from langchain_community.document_loaders import UnstructuredFileLoader
    from langchain_community.embeddings.huggingface import HuggingFaceEmbeddings
    from langchain_community.vectorstores import ElasticVectorSearch

    time_list = []
    t = time.time()

    # loader = UnstructuredFileLoader("/home/cfets/AI/textwarefare/test.txt")
    loader = UnstructuredFileLoader("/home/cfets/AI/textwarefare/cmtest.txt")
    data = loader.load()

    # Split the text into chunks
    text_splitter = RecursiveCharacterTextSplitter(chunk_size=50, chunk_overlap=0)
    split_docs = text_splitter.split_documents(data)
    print(split_docs)

    # Load the local embedding model
    model_name = r"/home/cfets/AI/model/bce-embedding-base_v1"
    model_kwargs = {'device': 'cpu'}
    encode_kwargs = {'normalize_embeddings': False}
    embeddings = HuggingFaceEmbeddings(
        model_name=model_name,
        model_kwargs=model_kwargs,
        encode_kwargs=encode_kwargs,
    )

    # Build the local vector store
    # db = Chroma.from_documents(split_docs, embeddings, persist_directory="./chroma/bu_test_bec")
    # Use ES instead
    db = ElasticVectorSearch.from_documents(
        split_docs,
        embeddings,
        elasticsearch_url="http://localhost:9200",
        index_name="elastic-ai-test",
    )

    # Persist (only needed for Chroma)
    # db.persist()
    print(db.client.info())

    # Print the elapsed time
    time_list.append(time.time() - t)
    print(time.time() - t)
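To get a feel for what `chunk_size=50, chunk_overlap=0` means, here is a deliberately simplified fixed-width splitter. It is not how `RecursiveCharacterTextSplitter` works internally (that class prefers to break on separators such as newlines and spaces). The function `split_fixed` is a hypothetical helper that only illustrates the two parameters.

```python
def split_fixed(text, chunk_size=50, chunk_overlap=0):
    """Cut text into windows of chunk_size characters.

    Consecutive windows share chunk_overlap characters.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]
```

With the settings used in this post, a 120-character text yields chunks of 50, 50, and 20 characters. A chunk size this small keeps each indexed document short, at the cost of splitting sentences across chunks.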

Querying the local knowledge base

    import dashscope
    from dashscope import Generation
    from dashscope.api_entities.dashscope_response import Role
    from http import HTTPStatus
    from langchain_community.vectorstores import Chroma
    from langchain_community.vectorstores import ElasticVectorSearch
    from langchain_community.embeddings.huggingface import HuggingFaceEmbeddings

    dashscope.api_key = "XXXXXX"  # replace with your own API key


    def conversation_mutual():
        messages = []

        # Load the embedding model
        model_name = r"/home/cfets/AI/model/bce-embedding-base_v1"
        model_kwargs = {'device': 'cpu'}
        encode_kwargs = {'normalize_embeddings': False}
        embeddings = HuggingFaceEmbeddings(
            model_name=model_name,
            model_kwargs=model_kwargs,
            encode_kwargs=encode_kwargs,
        )

        # Use the local Chroma store
        # db = Chroma(persist_directory="./chroma/bu_cmtest_bec", embedding_function=embeddings)
        # Use Elasticsearch
        my_index = "es_akshare-api"
        db = ElasticVectorSearch(
            embedding=embeddings,
            elasticsearch_url="http://localhost:9200",
            index_name=my_index,
        )

        while True:
            message = input('user:')

            # Retrieve the most similar chunks from the local knowledge base
            similarDocs = db.similarity_search(message, k=5)
            summary_prompt = "".join([doc.page_content for doc in similarDocs])
            print(summary_prompt)

            # The prompt (in Chinese) asks the model whether the retrieved text is
            # relevant to the question; if not, to say briefly that it cannot answer
            # from the given context; otherwise, to answer based on the retrieved text.
            send_message = f"下面的信息({summary_prompt})是否有这个问题({message})有关" \
                           f",如果你觉得无关请告诉我无法根据提供的上下文回答'{message}'这个问题,简要回答即可" \
                           f",否则请根据{summary_prompt}对{message}的问题进行回答"

            messages.append({'role': Role.USER, 'content': message})
            messages.append({'role': Role.USER, 'content': send_message})  # answer from the local knowledge base

            whole_message = ''
            # Switch to the Tongyi (Qwen) model
            responses = Generation.call(
                Generation.Models.qwen_plus,
                messages=messages,
                result_format='message',
                stream=True,
                incremental_output=True,
            )
            print('system:', end='')
            for response in responses:
                if response.status_code == HTTPStatus.OK:
                    whole_message += response.output.choices[0]['message']['content']
                    print(response.output.choices[0]['message']['content'], end='')
                else:
                    print('Request id: %s, Status code: %s, error code: %s, error message: %s' % (
                        response.request_id, response.status_code,
                        response.code, response.message))
            print()
            messages.append({'role': 'assistant', 'content': whole_message})
            print('\n')


    if __name__ == '__main__':
        conversation_mutual()

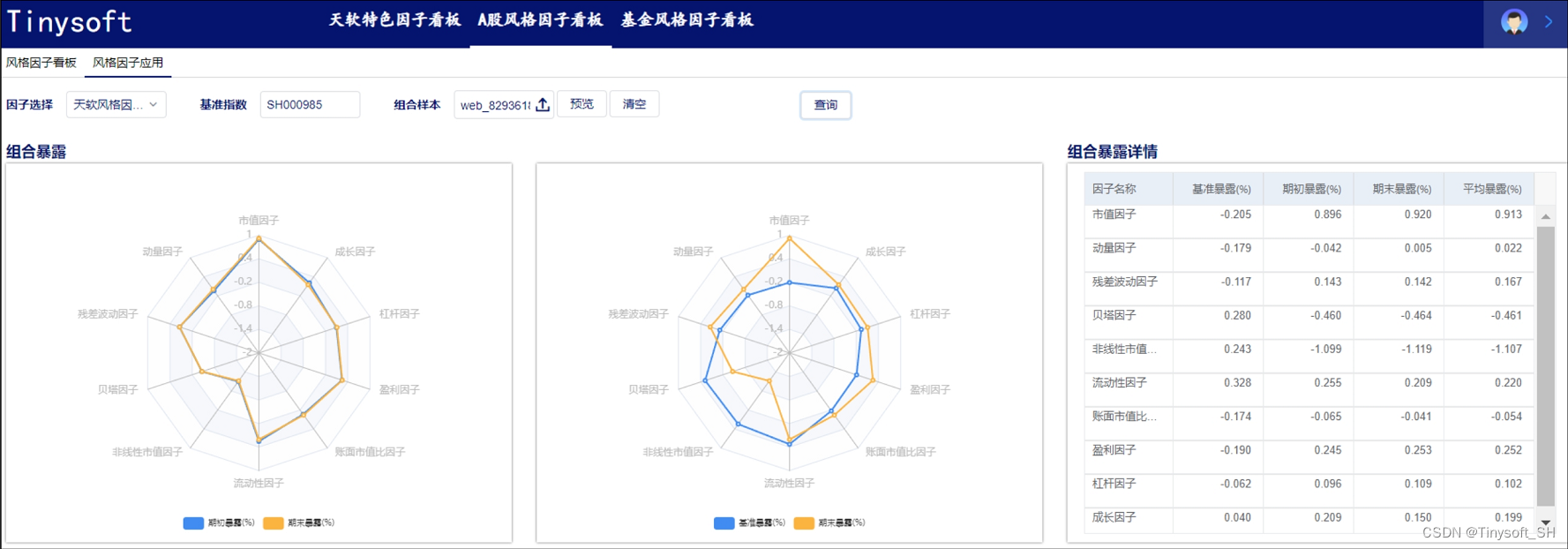

Results

Importing the local knowledge base

Querying the local knowledge base