1. Introduction

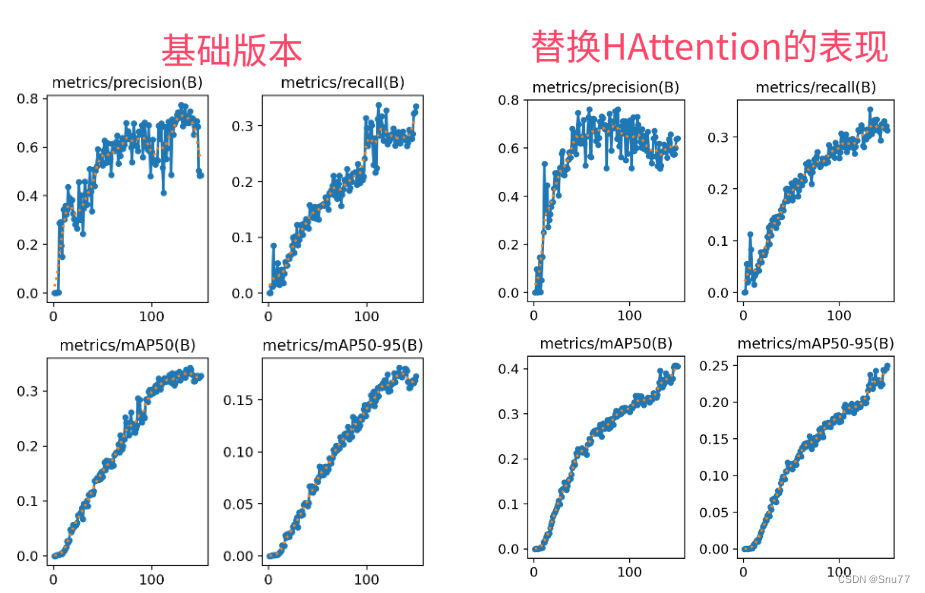

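This article presents an improvement I designed myself: a new super-resolution detection head, built by combining the HAT attention mechanism (an attention mechanism from image super-resolution) with the YOLOv8 detection head, with some components removed. The Hybrid Attention Transformer (HAT) is designed to improve single-image super-resolution reconstruction by fusing channel attention with self-attention. Channel attention identifies which channels matter most, while self-attention models the relationships between positions within the image. Using both mechanisms, HAT integrates global pixel information effectively. The article covers both how to add the module and how it works, and the content is my own original work.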
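To make the two kinds of attention concrete, here is a toy sketch in plain Python. It is only an illustration under simplifying assumptions: the function names `channel_attention` and `self_attention` are hypothetical, and the learned layers that a real implementation uses (the convolutions inside channel attention, the query/key/value projections inside self-attention) are left out.

```python
import math


def channel_attention(channels):
    """Reweight each channel by a gate computed from its global average.

    channels: list of 2D lists (one h x w grid per channel).
    Toy version: the learned layers between pooling and the sigmoid are omitted.
    """
    gated = []
    for grid in channels:
        values = [v for row in grid for v in row]
        mean = sum(values) / len(values)          # squeeze: global average pooling
        gate = 1.0 / (1.0 + math.exp(-mean))      # sigmoid gate in (0, 1)
        gated.append([[v * gate for v in row] for row in grid])
    return gated


def self_attention(tokens):
    """Mix position vectors with softmax-normalized dot-product weights.

    tokens: list of feature vectors, one per spatial position.
    Toy version: no learned query/key/value projections and no scaling.
    """
    mixed = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) for key in tokens]
        peak = max(scores)                         # subtract the max for numerical stability
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        mixed.append([sum(w * tok[d] for w, tok in zip(weights, tokens))
                      for d in range(len(query))])
    return mixed


if __name__ == "__main__":
    print(channel_attention([[[0.0, 0.0]], [[2.0, 2.0]]]))
    print(self_attention([[1.0, 0.0], [0.0, 1.0]]))
```

The first function decides how much each channel counts as a whole; the second lets every position borrow information from every other position.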

You are welcome to subscribe to my column and learn YOLO together!

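Contents

1. Introduction

2. Basic Principles

3. Core Code

4. How to Add HATHead

4.1 Modification 1

4.2 Modification 2

4.3 Modification 3

4.4 Modification 4

4.5 Modification 5

4.6 Modification 6

4.7 Modification 7

4.8 Modification 8

4.9 Modification 9

4.10 Modification 10

5. YAML File for Object Detection

5.1 YAML file

5.2 Run log

6. Summary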

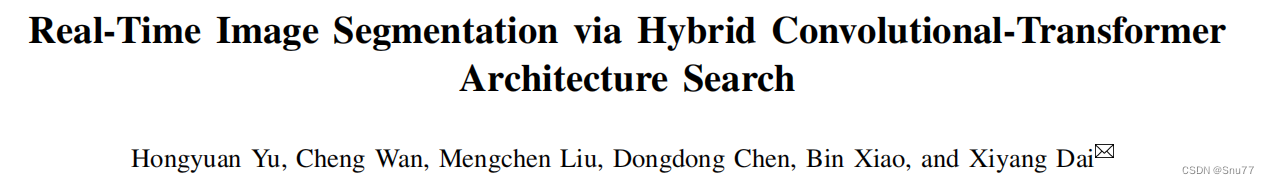

2. Basic Principles

Official paper: Activating More Pixels in Image Super-Resolution Transformer

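The figure shows the structure of two neural network modules: a Self-Attention Module and a Convolution Module. The two modules are designed as candidates in a search for the best combination, so that features can be extracted while staying memory-efficient.

The self-attention module consists of:

- A 1x1 convolution layer that reduces the feature dimension (c1->c2), lowering the computational load of self-attention.

- A multi-head self-attention (MHSA) layer that captures long-range dependencies between features.

- Another 1x1 convolution layer that restores the feature dimension (c2->c1).

- A batch normalization (BN) layer that normalizes activations during training.

- An addition that sums the module's output with its original input, forming a residual connection.

- A ReLU activation function.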
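The self-attention module listed above can be sketched in PyTorch roughly as follows. This is a minimal illustration, not the paper's code: the class name `SelfAttentionModule`, the use of `nn.MultiheadAttention` as the MHSA layer, and the default of four heads are assumptions made for the example.

```python
import torch
import torch.nn as nn


class SelfAttentionModule(nn.Module):
    """Hypothetical sketch: 1x1 conv (c1->c2) -> MHSA -> 1x1 conv (c2->c1) -> BN -> add -> ReLU."""

    def __init__(self, c1: int, c2: int, num_heads: int = 4):
        super().__init__()
        self.reduce = nn.Conv2d(c1, c2, kernel_size=1)   # fewer channels make attention cheaper
        self.mhsa = nn.MultiheadAttention(c2, num_heads, batch_first=True)
        self.expand = nn.Conv2d(c2, c1, kernel_size=1)   # restore the original channel count
        self.bn = nn.BatchNorm2d(c1)
        self.act = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        b, _, h, w = x.shape
        y = self.reduce(x)                               # (b, c2, h, w)
        tokens = y.flatten(2).transpose(1, 2)            # (b, h*w, c2): one token per position
        tokens, _ = self.mhsa(tokens, tokens, tokens)    # self-attention over all positions
        y = tokens.transpose(1, 2).reshape(b, -1, h, w)  # back to (b, c2, h, w)
        y = self.bn(self.expand(y))
        return self.act(x + y)                           # residual connection, then ReLU


if __name__ == "__main__":
    module = SelfAttentionModule(c1=64, c2=16)
    out = module(torch.randn(2, 64, 8, 8))
    print(out.shape)
```

The output has the same shape as the input, so the module can be dropped into a network without changing the surrounding layers.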

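The convolution module consists of:

- A downsampling step that lowers the spatial resolution through 0.5x downsampling followed by a 3x3 convolution, reducing computation.

- A 1x1 convolution layer for feature transformation.

- An upsampling step that restores the spatial resolution through 2x upsampling.

- A batch normalization (BN) layer.

- An addition that sums the upsampled output with the input from before downsampling, forming a skip connection.

- A ReLU activation function.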
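The convolution module listed above can be sketched in the same way. Again this is only an illustration under assumptions: the class name `ConvModule` is hypothetical, and bilinear interpolation is my choice for the 0.5x and 2x resampling steps, which the description does not specify.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ConvModule(nn.Module):
    """Hypothetical sketch: 0.5x downsample -> 3x3 conv -> 1x1 conv -> 2x upsample -> BN -> add -> ReLU."""

    def __init__(self, channels: int):
        super().__init__()
        self.conv3 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=1)
        self.bn = nn.BatchNorm2d(channels)
        self.act = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        h, w = x.shape[-2:]
        # Work at half resolution so both convolutions touch four times fewer positions.
        y = F.interpolate(x, scale_factor=0.5, mode="bilinear", align_corners=False)
        y = self.conv1(self.conv3(y))
        # Resize to the exact input size so the skip connection also works for odd sizes.
        y = F.interpolate(y, size=(h, w), mode="bilinear", align_corners=False)
        y = self.bn(y)
        return self.act(x + y)                           # skip connection, then ReLU


if __name__ == "__main__":
    module = ConvModule(channels=64)
    out = module(torch.randn(2, 64, 8, 8))
    print(out.shape)
```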

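Summary:

The modules in the figure aim to find an efficient structure for high-resolution feature extraction. The self-attention module captures broader context, while the convolution module focuses on preserving local information and reducing computational complexity. Combining the two through architecture search is intended to find a network structure that extracts features efficiently at low computational cost.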
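The cost argument can be checked with a rough operation count. The sketch below is a back-of-the-envelope estimate under simplifying assumptions: the helper names `conv_macs` and `attention_macs` are hypothetical, the channel counts are example values, and the attention count covers only the two matrix products (projections, softmax, and biases are ignored).

```python
def conv_macs(h, w, c_in, c_out, k):
    """Multiply-accumulates of a k x k convolution producing an h x w output."""
    return h * w * c_in * c_out * k * k


def attention_macs(h, w, c):
    """Multiply-accumulates of the two attention matrix products over h*w tokens.

    Counts only Q @ K^T and attention @ V; projections and softmax are ignored.
    """
    n = h * w
    return 2 * n * n * c


if __name__ == "__main__":
    h = w = 64
    c1, c2 = 64, 16  # hypothetical channel counts before and after the 1x1 reduction

    full_res = conv_macs(h, w, c1, c1, k=1)
    half_res = conv_macs(h // 2, w // 2, c1, c1, k=1)
    print("1x1 conv, full vs half resolution:", full_res / half_res)

    wide = attention_macs(h, w, c1)
    narrow = attention_macs(h, w, c2)
    print("attention products, c1 vs c2 channels:", wide / narrow)
```

Halving the resolution cuts the cost of the 1x1 convolution by a factor of four, and in this simplified count the attention cost grows linearly with the channel count, which is why the self-attention module reduces channels first.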

3. Core Code

See Section 4 for how to use the core code.

import math

import torch
import torch.nn as nn
from basicsr.archs.arch_util import to_2tuple, trunc_normal_
from einops import rearrange
from ultralytics.utils.tal import dist2bbox, make_anchors

__all__ = ['HATHead']


def drop_path(x, drop_prob: float = 0., training: bool = False):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

    From: https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/layers/drop.py
    """
    if drop_prob == 0. or not training:
        return x
    keep_prob = 1 - drop_prob
    shape = (x.shape[0],) + (1,) * (x.ndim - 1)  # work with diff dim tensors, not just 2D ConvNets
    random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)
    random_tensor.floor_()  # binarize
    output = x.div(keep_prob) * random_tensor
    return output


class DropPath(nn.Module):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

    From: https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/layers/drop.py
    """

    def __init__(self, drop_prob=None):
        super(DropPath, self).__init__()
        self.drop_prob = drop_prob

    def forward(self, x):
        return drop_path(x, self.drop_prob, self.training)


class ChannelAttention(nn.Module):
    """Channel attention used in RCAN.

    Args:
        num_feat (int): Channel number of intermediate features.
        squeeze_factor (int): Channel squeeze factor. Default: 16.
    """

    def __init__(self, num_feat, squeeze_factor=16):
        super(ChannelAttention, self).__init__()
        self.attention = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(num_feat, num_feat // squeeze_factor, 1, padding=0),
            nn.ReLU(inplace=True),
            nn.Conv2d(num_feat // squeeze_factor, num_feat, 1, padding=0),
            nn.Sigmoid())

    def forward(self, x):
        y = self.attention(x)
        return x * y


class CAB(nn.Module):

    def __init__(self, num_feat, compress_ratio=3, squeeze_factor=30):
        super(CAB, self).__init__()
        self.cab = nn.Sequential(
            nn.Conv2d(num_feat, num_feat // compress_ratio, 3, 1, 1),
            nn.GELU(),
            nn.Conv2d(num_feat // compress_ratio, num_feat, 3, 1, 1),
            ChannelAttention(num_feat, squeeze_factor))

    def forward(self, x):
        return self.cab(x)


class Mlp(nn.Module):

    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x


def window_partition(x, window_size):
    """
    Args:
        x: (b, h, w, c)
        window_size (int): window size

    Returns:
        windows: (num_windows*b, window_size, window_size, c)
    """
    b, h, w, c = x.shape
    x = x.view(b, h // window_size, window_size, w // window_size, window_size, c)
    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, c)
    return windows


def window_reverse(windows, window_size, h, w):
    """
    Args:
        windows: (num_windows*b, window_size, window_size, c)
        window_size (int): Window size
        h (int): Height of image
        w (int): Width of image

    Returns:
        x: (b, h, w, c)
    """
    b = int(windows.shape[0] / (h * w / window_size / window_size))
    x = windows.view(b, h // window_size, w // window_size, window_size, window_size, -1)
    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(b, h, w, -1)
    return x


class WindowAttention(nn.Module):
    r""" Window based multi-head self attention (W-MSA) module with relative position bias.
    It supports both of shifted and non-shifted window.

    Args:
        dim (int): Number of input channels.
        window_size (tuple[int]): The height and width of the window.
        num_heads (int): Number of attention heads.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set
        attn_drop (float, optional): Dropout ratio of attention weight. Default: 0.0
        proj_drop (float, optional): Dropout ratio of output. Default: 0.0
    """

    def __init__(self, dim, window_size, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        self.dim = dim
        self.window_size = window_size  # Wh, Ww
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim ** -0.5

        # define a parameter table of relative position bias
        self.relative_position_bias_table = nn.Parameter(
            torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        trunc_normal_(self.relative_position_bias_table, std=.02)
        self.softmax = nn.Softmax(dim=-1)

    def forward(self, x, rpi, mask=None):
        """
        Args:
            x: input features with shape of (num_windows*b, n, c)
            mask: (0/-inf) mask with shape of (num_windows, Wh*Ww, Wh*Ww) or None
        """
        b_, n, c = x.shape
        qkv = self.qkv(x).reshape(b_, n, 3, self.num_heads, c // self.num_heads).permute(2, 0, 3, 1, 4)
        q, k, v = qkv[0], qkv[1], qkv[2]  # make torchscript happy (cannot use tensor as tuple)

        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_table[rpi.view(-1)].view(
            self.window_size[0] * self.window_size[1], self.window_size[0] * self.window_size[1], -1)  # Wh*Ww,Wh*Ww,nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, Wh*Ww, Wh*Ww
        attn = attn + relative_position_bias.unsqueeze(0)

        if mask is not None:
            nw = mask.shape[0]
            attn = attn.view(b_ // nw, nw, self.num_heads, n, n) + mask.unsqueeze(1).unsqueeze(0)
            attn = attn.view(-1, self.num_heads, n, n)
            attn = self.softmax(attn)
        else:
            attn = self.softmax(attn)

        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(b_, n, c)
        x = self.proj(x)
        x = self.proj_drop(x)
        return x


class HAB(nn.Module):
    r""" Hybrid Attention Block.

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        num_heads (int): Number of attention heads.
        window_size (int): Window size.
        shift_size (int): Shift size for SW-MSA.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float, optional): Stochastic depth rate. Default: 0.0
        act_layer (nn.Module, optional): Activation layer. Default: nn.GELU
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 num_heads,
                 window_size=7,
                 shift_size=0,
                 compress_ratio=3,
                 squeeze_factor=30,
                 conv_scale=0.01,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 act_layer=nn.GELU,
                 norm_layer=nn.LayerNorm):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.num_heads = num_heads
        self.window_size = window_size
        self.shift_size = shift_size
        self.mlp_ratio = mlp_ratio
        if min(self.input_resolution) <= self.window_size:
            # if window size is larger than input resolution, we don't partition windows
            self.shift_size = 0
            self.window_size = min(self.input_resolution)
        assert 0 <= self.shift_size < self.window_size, 'shift_size must in 0-window_size'

        self.norm1 = norm_layer(dim)
        self.attn = WindowAttention(
            dim,
            window_size=to_2tuple(self.window_size),
            num_heads=num_heads,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            attn_drop=attn_drop,
            proj_drop=drop)

        self.conv_scale = conv_scale
        self.conv_block = CAB(num_feat=dim, compress_ratio=compress_ratio, squeeze_factor=squeeze_factor)

        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

    def forward(self, x, x_size, rpi_sa, attn_mask):
        h, w = x_size
        b, _, c = x.shape
        # assert seq_len == h * w, "input feature has wrong size"

        shortcut = x
        x = self.norm1(x)
        x = x.view(b, h, w, c)

        # Conv_X
        conv_x = self.conv_block(x.permute(0, 3, 1, 2))
        conv_x = conv_x.permute(0, 2, 3, 1).contiguous().view(b, h * w, c)

        # cyclic shift
        if self.shift_size > 0:
            shifted_x = torch.roll(x, shifts=(-self.shift_size, -self.shift_size), dims=(1, 2))
            attn_mask = attn_mask
        else:
            shifted_x = x
            attn_mask = None

        # partition windows
        x_windows = window_partition(shifted_x, self.window_size)  # nw*b, window_size, window_size, c
        x_windows = x_windows.view(-1, self.window_size * self.window_size, c)  # nw*b, window_size*window_size, c

        # W-MSA/SW-MSA (to be compatible for testing on images whose shapes are the multiple of window size
        attn_windows = self.attn(x_windows, rpi=rpi_sa, mask=attn_mask)

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, c)
        shifted_x = window_reverse(attn_windows, self.window_size, h, w)  # b h' w' c

        # reverse cyclic shift
        if self.shift_size > 0:
            attn_x = torch.roll(shifted_x, shifts=(self.shift_size, self.shift_size), dims=(1, 2))
        else:
            attn_x = shifted_x
        attn_x = attn_x.view(b, h * w, c)

        # FFN
        x = shortcut + self.drop_path(attn_x) + conv_x * self.conv_scale
        x = x + self.drop_path(self.mlp(self.norm2(x)))

        return x


class PatchMerging(nn.Module):
    r""" Patch Merging Layer.

    Args:
        input_resolution (tuple[int]): Resolution of input feature.
        dim (int): Number of input channels.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x):
        """
        x: b, h*w, c
        """
        h, w = self.input_resolution
        b, seq_len, c = x.shape
        assert seq_len == h * w, 'input feature has wrong size'
        assert h % 2 == 0 and w % 2 == 0, f'x size ({h}*{w}) are not even.'

        x = x.view(b, h, w, c)

        x0 = x[:, 0::2, 0::2, :]  # b h/2 w/2 c
        x1 = x[:, 1::2, 0::2, :]  # b h/2 w/2 c
        x2 = x[:, 0::2, 1::2, :]  # b h/2 w/2 c
        x3 = x[:, 1::2, 1::2, :]  # b h/2 w/2 c
        x = torch.cat([x0, x1, x2, x3], -1)  # b h/2 w/2 4*c
        x = x.view(b, -1, 4 * c)  # b h/2*w/2 4*c

        x = self.norm(x)
        x = self.reduction(x)

        return x


class OCAB(nn.Module):
    # overlapping cross-attention block

    def __init__(self, dim,
                 input_resolution,
                 window_size,
                 overlap_ratio,
                 num_heads,
                 qkv_bias=True,
                 qk_scale=None,
                 mlp_ratio=2,
                 norm_layer=nn.LayerNorm):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.window_size = window_size
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim ** -0.5
        self.overlap_win_size = int(window_size * overlap_ratio) + window_size

        self.norm1 = norm_layer(dim)
        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.unfold = nn.Unfold(kernel_size=(self.overlap_win_size, self.overlap_win_size), stride=window_size,
                                padding=(self.overlap_win_size - window_size) // 2)

        # define a parameter table of relative position bias
        self.relative_position_bias_table = nn.Parameter(
            torch.zeros((window_size + self.overlap_win_size - 1) * (window_size + self.overlap_win_size - 1),
                        num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        trunc_normal_(self.relative_position_bias_table, std=.02)
        self.softmax = nn.Softmax(dim=-1)

        self.proj = nn.Linear(dim, dim)

        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=nn.GELU)

    def forward(self, x, x_size, rpi):
        h, w = x_size
        b, _, c = x.shape

        shortcut = x
        x = self.norm1(x)
        x = x.view(b, h, w, c)

        qkv = self.qkv(x).reshape(b, h, w, 3, c).permute(3, 0, 4, 1, 2)  # 3, b, c, h, w
        q = qkv[0].permute(0, 2, 3, 1)  # b, h, w, c
        kv = torch.cat((qkv[1], qkv[2]), dim=1)  # b, 2*c, h, w

        # partition windows
        q_windows = window_partition(q, self.window_size)  # nw*b, window_size, window_size, c
        q_windows = q_windows.view(-1, self.window_size * self.window_size, c)  # nw*b, window_size*window_size, c

        kv_windows = self.unfold(kv)  # b, c*w*w, nw
        kv_windows = rearrange(kv_windows, 'b (nc ch owh oww) nw -> nc (b nw) (owh oww) ch', nc=2, ch=c,
                               owh=self.overlap_win_size, oww=self.overlap_win_size).contiguous()  # 2, nw*b, ow*ow, c
        k_windows, v_windows = kv_windows[0], kv_windows[1]  # nw*b, ow*ow, c

        b_, nq, _ = q_windows.shape
        _, n, _ = k_windows.shape
        d = self.dim // self.num_heads
        q = q_windows.reshape(b_, nq, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, nq, d
        k = k_windows.reshape(b_, n, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, n, d
        v = v_windows.reshape(b_, n, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, n, d

        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_table[rpi.view(-1)].view(
            self.window_size * self.window_size, self.overlap_win_size * self.overlap_win_size,
            -1)  # ws*ws, wse*wse, nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, ws*ws, wse*wse
        attn = attn + relative_position_bias.unsqueeze(0)

        attn = self.softmax(attn)
        attn_windows = (attn @ v).transpose(1, 2).reshape(b_, nq, self.dim)

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, self.dim)
        x = window_reverse(attn_windows, self.window_size, h, w)  # b h w c
        x = x.view(b, h * w, self.dim)

        x = self.proj(x) + shortcut

        x = x + self.mlp(self.norm2(x))
        return x


class AttenBlocks(nn.Module):
    """ A series of attention blocks for one RHAG.

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        window_size (int): Local window size.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: None
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 depth,
                 num_heads,
                 window_size,
                 compress_ratio,
                 squeeze_factor,
                 conv_scale,
                 overlap_ratio,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 norm_layer=nn.LayerNorm,
                 downsample=None,
                 use_checkpoint=False):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.depth = depth
        self.use_checkpoint = use_checkpoint

        # build blocks
        self.blocks = nn.ModuleList([
            HAB(dim=dim,
                input_resolution=input_resolution,
                num_heads=num_heads,
                window_size=window_size,
                shift_size=0 if (i % 2 == 0) else window_size // 2,
                compress_ratio=compress_ratio,
                squeeze_factor=squeeze_factor,
                conv_scale=conv_scale,
                mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias,
                qk_scale=qk_scale,
                drop=drop,
                attn_drop=attn_drop,
                drop_path=drop_path[i] if isinstance(drop_path, list) else drop_path,
                norm_layer=norm_layer) for i in range(depth)
        ])

        # OCAB
        self.overlap_attn = OCAB(
            dim=dim,
            input_resolution=input_resolution,
            window_size=window_size,
            overlap_ratio=overlap_ratio,
            num_heads=num_heads,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            mlp_ratio=mlp_ratio,
            norm_layer=norm_layer)

        # patch merging layer
        if downsample is not None:
            self.downsample = downsample(input_resolution, dim=dim, norm_layer=norm_layer)
        else:
            self.downsample = None

    def forward(self, x, x_size, params):
        for blk in self.blocks:
            x = blk(x, x_size, params['rpi_sa'], params['attn_mask'])

        x = self.overlap_attn(x, x_size, params['rpi_oca'])

        if self.downsample is not None:
            x = self.downsample(x)
        return x


class RHAG(nn.Module):
    """Residual Hybrid Attention Group (RHAG).

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        window_size (int): Local window size.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: None
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
        img_size: Input image size.
        patch_size: Patch size.
        resi_connection: The convolutional block before residual connection.
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 depth,
                 num_heads,
                 window_size,
                 compress_ratio,
                 squeeze_factor,
                 conv_scale,
                 overlap_ratio,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 norm_layer=nn.LayerNorm,
                 downsample=None,
                 use_checkpoint=False,
                 img_size=224,
                 patch_size=4,
                 resi_connection='1conv'):
        super(RHAG, self).__init__()

        self.dim = dim
        self.input_resolution = input_resolution

        self.residual_group = AttenBlocks(
            dim=dim,
            input_resolution=input_resolution,
            depth=depth,
            num_heads=num_heads,
            window_size=window_size,
            compress_ratio=compress_ratio,
            squeeze_factor=squeeze_factor,
            conv_scale=conv_scale,
            overlap_ratio=overlap_ratio,
            mlp_ratio=mlp_ratio,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            drop=drop,
            attn_drop=attn_drop,
            drop_path=drop_path,
            norm_layer=norm_layer,
            downsample=downsample,
            use_checkpoint=use_checkpoint)

        if resi_connection == '1conv':
            self.conv = nn.Conv2d(dim, dim, 3, 1, 1)
        elif resi_connection == 'identity':
            self.conv = nn.Identity()

        self.patch_embed = PatchEmbed(
            img_size=img_size, patch_size=patch_size, in_chans=0, embed_dim=dim, norm_layer=None)

        self.patch_unembed = PatchUnEmbed(
            img_size=img_size, patch_size=patch_size, in_chans=0, embed_dim=dim, norm_layer=None)

    def forward(self, x, x_size, params):
        return self.patch_embed(self.conv(self.patch_unembed(self.residual_group(x, x_size, params), x_size))) + x


class PatchEmbed(nn.Module):
    r""" Image to Patch Embedding

    Args:
        img_size (int): Image size. Default: 224.
        patch_size (int): Patch token size. Default: 4.
        in_chans (int): Number of input image channels. Default: 3.
        embed_dim (int): Number of linear projection output channels. Default: 96.
        norm_layer (nn.Module, optional): Normalization layer. Default: None
    """

    def __init__(self, img_size=224, patch_size=4, in_chans=3, embed_dim=96, norm_layer=None):
        super().__init__()
        img_size = to_2tuple(img_size)
        patch_size = to_2tuple(patch_size)
        patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]
        self.img_size = img_size
        self.patch_size = patch_size
        self.patches_resolution = patches_resolution
        self.num_patches = patches_resolution[0] * patches_resolution[1]

        self.in_chans = in_chans
        self.embed_dim = embed_dim

        if norm_layer is not None:
            self.norm = norm_layer(embed_dim)
        else:
            self.norm = None

    def forward(self, x):
        x = x.flatten(2).transpose(1, 2)  # b Ph*Pw c
        if self.norm is not None:
            x = self.norm(x)
        return x


class PatchUnEmbed(nn.Module):
    r""" Image to Patch Unembedding

    Args:
        img_size (int): Image size. Default: 224.
        patch_size (int): Patch token size. Default: 4.
        in_chans (int): Number of input image channels. Default: 3.
        embed_dim (int): Number of linear projection output channels. Default: 96.
        norm_layer (nn.Module, optional): Normalization layer. Default: None
    """

    def __init__(self, img_size=224, patch_size=4, in_chans=3, embed_dim=96, norm_layer=None):
        super().__init__()
        img_size = to_2tuple(img_size)
        patch_size = to_2tuple(patch_size)
        patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]
        self.img_size = img_size
        self.patch_size = patch_size
        self.patches_resolution = patches_resolution
        self.num_patches = patches_resolution[0] * patches_resolution[1]

        self.in_chans = in_chans
        self.embed_dim = embed_dim

    def forward(self, x, x_size):
        x = x.transpose(1, 2).contiguous().view(x.shape[0], self.embed_dim, x_size[0], x_size[1])  # b Ph*Pw c
        return x


class Upsample(nn.Sequential):
    """Upsample module.

    Args:
        scale (int): Scale factor. Supported scales: 2^n and 3.
        num_feat (int): Channel number of intermediate features.
    """

    def __init__(self, scale, num_feat):
        m = []
        if (scale & (scale - 1)) == 0:  # scale = 2^n
            for _ in range(int(math.log(scale, 2))):
                m.append(nn.Conv2d(num_feat, 4 * num_feat, 3, 1, 1))
                m.append(nn.PixelShuffle(2))
        elif scale == 3:
            m.append(nn.Conv2d(num_feat, 9 * num_feat, 3, 1, 1))
            m.append(nn.PixelShuffle(3))
        else:
            raise ValueError(f'scale {scale} is not supported. ' 'Supported scales: 2^n and 3.')
        super(Upsample, self).__init__(*m)


class HAT(nn.Module):
    r""" Hybrid Attention Transformer
        A PyTorch implementation of : `Activating More Pixels in Image Super-Resolution Transformer`.
        Some codes are based on SwinIR.

    Args:
        img_size (int | tuple(int)): Input image size. Default 64
        patch_size (int | tuple(int)): Patch size. Default: 1
        in_chans (int): Number of input image channels. Default: 3
        embed_dim (int): Patch embedding dimension. Default: 96
        depths (tuple(int)): Depth of each Swin Transformer layer.
        num_heads (tuple(int)): Number of attention heads in different layers.
        window_size (int): Window size. Default: 7
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim. Default: 4
        qkv_bias (bool): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float): Override default qk scale of head_dim ** -0.5 if set. Default: None
        drop_rate (float): Dropout rate. Default: 0
        attn_drop_rate (float): Attention dropout rate. Default: 0
        drop_path_rate (float): Stochastic depth rate. Default: 0.1
        norm_layer (nn.Module): Normalization layer. Default: nn.LayerNorm.
        ape (bool): If True, add absolute position embedding to the patch embedding. Default: False
        patch_norm (bool): If True, add normalization after patch embedding. Default: True
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False
        upscale: Upscale factor. 2/3/4/8 for image SR, 1 for denoising and compress artifact reduction
        img_range: Image range. 1. or 255.
        upsampler: The reconstruction reconstruction module. 'pixelshuffle'/'pixelshuffledirect'/'nearest+conv'/None
        resi_connection: The convolutional block before residual connection. '1conv'/'3conv'
    """

    def __init__(self,
                 in_chans=3,
                 img_size=64,
                 patch_size=1,
                 embed_dim=96,
                 depths=(6, 6, 6, 6),
                 num_heads=(6, 6, 6, 6),
                 window_size=7,
                 compress_ratio=3,
                 squeeze_factor=30,
                 conv_scale=0.01,
                 overlap_ratio=0.5,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop_rate=0.,
                 attn_drop_rate=0.,
                 drop_path_rate=0.1,
                 norm_layer=nn.LayerNorm,
                 ape=False,
                 patch_norm=True,
                 use_checkpoint=False,
                 upscale=2,
                 img_range=1.,
                 upsampler='',
                 resi_connection='1conv',
                 **kwargs):
        super(HAT, self).__init__()

        self.window_size = window_size
        self.shift_size = window_size // 2
        self.overlap_ratio = overlap_ratio

        num_in_ch = in_chans
        num_out_ch = in_chans
        num_feat = 64
        self.img_range = img_range
        if in_chans == 3:
            rgb_mean = (0.4488, 0.4371, 0.4040)
            self.mean = torch.Tensor(rgb_mean).view(1, 3, 1, 1)
        else:
            self.mean = torch.zeros(1, 1, 1, 1)
        self.upscale = upscale
        self.upsampler = upsampler

        # relative position index
        relative_position_index_SA = self.calculate_rpi_sa()
        relative_position_index_OCA = self.calculate_rpi_oca()
        self.register_buffer('relative_position_index_SA', relative_position_index_SA)
        self.register_buffer('relative_position_index_OCA', relative_position_index_OCA)

        # ------------------------- 1, shallow feature extraction ------------------------- #
        self.conv_first = nn.Conv2d(num_in_ch, embed_dim, 3, 1, 1)

        # ------------------------- 2, deep feature extraction ------------------------- #
        self.num_layers = len(depths)
        self.embed_dim = embed_dim
        self.ape = ape
        self.patch_norm = patch_norm
        self.num_features = embed_dim
        self.mlp_ratio = mlp_ratio

        # split image into non-overlapping patches
        self.patch_embed = PatchEmbed(
            img_size=img_size,
            patch_size=patch_size,
            in_chans=embed_dim,
            embed_dim=embed_dim,
            norm_layer=norm_layer if self.patch_norm else None)
        num_patches = self.patch_embed.num_patches
        patches_resolution = self.patch_embed.patches_resolution
        self.patches_resolution = patches_resolution

        # merge non-overlapping patches into image
        self.patch_unembed = PatchUnEmbed(
            img_size=img_size,
            patch_size=patch_size,
            in_chans=embed_dim,
            embed_dim=embed_dim,
            norm_layer=norm_layer if self.patch_norm else None)

        # absolute position embedding
        if self.ape:
            self.absolute_pos_embed = nn.Parameter(torch.zeros(1, num_patches, embed_dim))
            trunc_normal_(self.absolute_pos_embed, std=.02)

        self.pos_drop = nn.Dropout(p=drop_rate)

        # stochastic depth
        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule

        # build Residual Hybrid Attention Groups (RHAG)
        self.layers = nn.ModuleList()
        for i_layer in range(self.num_layers):
            layer = RHAG(
                dim=embed_dim,
                input_resolution=(patches_resolution[0], patches_resolution[1]),
                depth=depths[i_layer],
                num_heads=num_heads[i_layer],
                window_size=window_size,
                compress_ratio=compress_ratio,
                squeeze_factor=squeeze_factor,
                conv_scale=conv_scale,
                overlap_ratio=overlap_ratio,
                mlp_ratio=self.mlp_ratio,
                qkv_bias=qkv_bias,
                qk_scale=qk_scale,
                drop=drop_rate,
                attn_drop=attn_drop_rate,
                drop_path=dpr[sum(depths[:i_layer]):sum(depths[:i_layer + 1])],  # no impact on SR results
                norm_layer=norm_layer,
                downsample=None,
                use_checkpoint=use_checkpoint,
                img_size=img_size,
                patch_size=patch_size,
                resi_connection=resi_connection)
            self.layers.append(layer)
        self.norm = norm_layer(self.num_features)

        # build the last conv layer in deep feature extraction
        if resi_connection == '1conv':
            self.conv_after_body = nn.Conv2d(embed_dim, embed_dim, 3, 1, 1)
        elif resi_connection == 'identity':
            self.conv_after_body = nn.Identity()

        # ------------------------- 3, high quality image reconstruction ------------------------- #
        if self.upsampler == 'pixelshuffle':
            # for classical SR
            self.conv_before_upsample = nn.Sequential(
                nn.Conv2d(embed_dim, num_feat, 3, 1, 1), nn.LeakyReLU(inplace=True))
            self.upsample = Upsample(upscale, num_feat)
            self.conv_last = nn.Conv2d(num_feat, num_out_ch, 3, 1, 1)

        self.apply(self._init_weights)

    def _init_weights(self, m):
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if isinstance(m, nn.Linear) and m.bias is not None:
                nn.init.constant_(m.bias, 0)
        elif isinstance(m, nn.LayerNorm):
            nn.init.constant_(m.bias, 0)
            nn.init.constant_(m.weight, 1.0)

    def calculate_rpi_sa(self):
        # calculate relative position index for SA
        coords_h = torch.arange(self.window_size)
        coords_w = torch.arange(self.window_size)
        coords = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, Wh, Ww
        coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
        relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
        relative_coords[:, :, 0] += self.window_size - 1  # shift to start from 0
        relative_coords[:, :, 1] += self.window_size - 1
        relative_coords[:, :, 0] *= 2 * self.window_size - 1
        relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
        return relative_position_index

    def calculate_rpi_oca(self):
        # calculate relative position index for OCA
        window_size_ori = self.window_size
        window_size_ext = self.window_size + int(self.overlap_ratio * self.window_size)

        coords_h = torch.arange(window_size_ori)
        coords_w = torch.arange(window_size_ori)
        coords_ori = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, ws, ws
        coords_ori_flatten = torch.flatten(coords_ori, 1)  # 2, ws*ws

        coords_h = torch.arange(window_size_ext)
        coords_w = torch.arange(window_size_ext)
        coords_ext = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, wse, wse
        coords_ext_flatten = torch.flatten(coords_ext, 1)  # 2, wse*wse

        relative_coords = coords_ext_flatten[:, None, :] - coords_ori_flatten[:, :, None]  # 2, ws*ws, wse*wse

        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # ws*ws, wse*wse, 2
        relative_coords[:, :, 0] += window_size_ori - window_size_ext + 1  # shift to start from 0
        relative_coords[:, :, 1] += window_size_ori - window_size_ext + 1

        relative_coords[:, :, 0] *= window_size_ori + window_size_ext - 1
        relative_position_index = relative_coords.sum(-1)
        return relative_position_index

    def calculate_mask(self, x_size):
        # calculate attention mask for SW-MSA
        h, w = x_size
        img_mask = torch.zeros((1, h, w, 1))  # 1 h w 1
        h_slices = (slice(0, -self.window_size), slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        w_slices = (slice(0, -self.window_size), slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        cnt = 0
        for h in h_slices:
            for w in w_slices:
                img_mask[:, h, w, :] = cnt
                cnt += 1

        mask_windows = window_partition(img_mask, self.window_size)  # nw, window_size, window_size, 1
        mask_windows = mask_windows.view(-1, self.window_size * self.window_size)
        attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
        attn_mask = attn_mask.masked_fill(attn_mask != 0, float(-100.0)).masked_fill(attn_mask == 0, float(0.0))

        return attn_mask

    @torch.jit.ignore
    def no_weight_decay(self):
        return {'absolute_pos_embed'}

    @torch.jit.ignore
    def no_weight_decay_keywords(self):
        return {'relative_position_bias_table'}

    def forward_features(self, x):
        x_size = (x.shape[2], x.shape[3])

        # Calculate attention mask and relative position index in advance to speed up inference.
        # The original code is very time-consuming for large window size.
        attn_mask = self.calculate_mask(x_size).to(x.device)
        params = {'attn_mask': attn_mask, 'rpi_sa': self.relative_position_index_SA,
                  'rpi_oca': self.relative_position_index_OCA}

        x = self.patch_embed(x)
        if self.ape:
            x = x + self.absolute_pos_embed
        x = self.pos_drop(x)

        for layer in self.layers:
            x = layer(x, x_size, params)

        x = self.norm(x)  # b seq_len c
        x = self.patch_unembed(x, x_size)

        return x

    def forward(self, x):
        self.mean = self.mean.type_as(x)
        x = (x - self.mean) * self.img_range

        if self.upsampler == 'pixelshuffle':
            # for classical SR
            x = self.conv_first(x)
            x = self.conv_after_body(self.forward_features(x)) + x
            x = self.conv_before_upsample(x)
            x = self.conv_last(self.upsample(x))

        x = x / self.img_range + self.mean

        return x


def dist2rbox(pred_dist, pred_angle, anchor_points, dim=-1):
    """
    Decode predicted object bounding box coordinates from anchor points and distribution.

    Args:
        pred_dist (torch.Tensor): Predicted rotated distance, (bs, h*w, 4).
        pred_angle (torch.Tensor): Predicted angle, (bs, h*w, 1).
        anchor_points (torch.Tensor): Anchor points, (h*w, 2).

    Returns:
        (torch.Tensor): Predicted rotated bounding boxes, (bs, h*w, 4).
    """
    lt, rb = pred_dist.split(2, dim=dim)
    cos, sin = torch.cos(pred_angle), torch.sin(pred_angle)
    # (bs, h*w, 1)
    xf, yf = ((rb - lt) / 2).split(1, dim=dim)
    x, y = xf * cos - yf * sin, xf * sin + yf * cos
    xy = torch.cat([x, y], dim=dim) + anchor_points
    return torch.cat([xy, lt + rb], dim=dim)


class Proto(nn.Module):
    """YOLOv8 mask Proto module for segmentation models."""

    def __init__(self, c1, c_=256, c2=32):
        """
        Initializes the YOLOv8 mask Proto module with specified number of protos and masks.

        Input arguments are ch_in, number of protos, number of masks.
        """
        super().__init__()
        self.cv1 = Conv(c1, c_, k=3)
        self.upsample = nn.ConvTranspose2d(c_, c_, 2, 2, 0, bias=True)  # nn.Upsample(scale_factor=2, mode='nearest')
        self.cv2 = Conv(c_, c_, k=3)
        self.cv3 = Conv(c_, c2)

    def forward(self, x):
        """Performs a forward pass through layers using an upsampled input image."""
        return self.cv3(self.cv2(self.upsample(self.cv1(x))))


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""
    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Perform transposed convolution of 2D data."""
        return self.act(self.conv(x))


class DFL(nn.Module):
    """
    Integral module of Distribution Focal Loss (DFL).

    Proposed in Generalized Focal Loss https://ieeexplore.ieee.org/document/9792391
    """

    def __init__(self, c1=16):
        """Initialize a convolutional layer with a given number of input channels."""
        super().__init__()
        self.conv = nn.Conv2d(c1, 1, 1, bias=False).requires_grad_(False)
        x = torch.arange(c1, dtype=torch.float)
        self.conv.weight.data[:] = nn.Parameter(x.view(1, c1, 1, 1))
        self.c1 = c1

    def forward(self, x):
        """Applies a transformer layer on input tensor 'x' and returns a tensor."""
        b, c, a = x.shape  # batch, channels, anchors
        return self.conv(x.view(b, 4, self.c1, a).transpose(2, 1).softmax(1)).view(b, 4, a)
        # return self.conv(x.view(b, self.c1, 4, a).softmax(1)).view(b, 4, a)


class HATHead(nn.Module):
    """YOLOv8 Detect head for detection models."""
    dynamic = False  # force grid reconstruction
    export = False  # export mode
    shape = None
    anchors = torch.empty(0)  # init
    strides = torch.empty(0)  # init

    def __init__(self, nc=80, ch=()):
        """Initializes the YOLOv8 detection layer with specified number of classes and channels."""
        super().__init__()
        self.nc = nc  # number of classes
        self.nl = len(ch)  # number of detection layers
        self.reg_max = 16  # DFL channels (ch[0] // 16 to scale 4/8/12/16/20 for n/s/m/l/x)
        self.no = nc + self.reg_max * 4  # number of outputs per anchor
        self.stride = torch.zeros(self.nl)  # strides computed during build
        c2, c3 = max((16, ch[0] // 4, self.reg_max * 4)), max(ch[0], min(self.nc, 100))  # channels
        self.cv2 = nn.ModuleList(
            nn.Sequential(Conv(x, c2, 3), HAT(c2), nn.Conv2d(c2, 4 * self.reg_max, 1)) for x in ch)
        self.cv3 = nn.ModuleList(nn.Sequential(Conv(x, c3, 3), HAT(c3), nn.Conv2d(c3, self.nc, 1)) for x in ch)
        self.dfl = DFL(self.reg_max) if self.reg_max > 1 else
nn.Identity()def forward(self, x):"""Concatenates and returns predicted bounding boxes and class probabilities."""shape = x[0].shape # BCHWfor i in range(self.nl):x[i] = torch.cat((self.cv2[i](x[i]), self.cv3[i](x[i])), 1)if self.training:return xelif self.dynamic or self.shape != shape:self.anchors, self.strides = (x.transpose(0, 1) for x in make_anchors(x, self.stride, 0.5))self.shape = shapex_cat = torch.cat([xi.view(shape[0], self.no, -1) for xi in x], 2)if self.export and self.format in ('saved_model', 'pb', 'tflite', 'edgetpu', 'tfjs'): # avoid TF FlexSplitV opsbox = x_cat[:, :self.reg_max * 4]cls = x_cat[:, self.reg_max * 4:]else:box, cls = x_cat.split((self.reg_max * 4, self.nc), 1)dbox = dist2bbox(self.dfl(box), self.anchors.unsqueeze(0), xywh=True, dim=1) * self.stridesif self.export and self.format in ('tflite', 'edgetpu'):# Normalize xywh with image size to mitigate quantization error of TFLite integer models as done in YOLOv5:# https://github.com/ultralytics/yolov5/blob/0c8de3fca4a702f8ff5c435e67f378d1fce70243/models/tf.py#L307-L309# See this PR for details: https://github.com/ultralytics/ultralytics/pull/1695img_h = shape[2] * self.stride[0]img_w = shape[3] * self.stride[0]img_size = torch.tensor([img_w, img_h, img_w, img_h], device=dbox.device).reshape(1, 4, 1)dbox /= img_sizey = torch.cat((dbox, cls.sigmoid()), 1)return y if self.export else (y, x)def bias_init(self):"""Initialize Detect() biases, WARNING: requires stride availability."""m = self # self.model[-1] # Detect() module# cf = torch.bincount(torch.tensor(np.concatenate(dataset.labels, 0)[:, 0]).long(), minlength=nc) + 1# ncf = math.log(0.6 / (m.nc - 0.999999)) if cf is None else torch.log(cf / cf.sum()) # nominal class frequencyfor a, b, s in zip(m.cv2, m.cv3, m.stride): # froma[-1].bias.data[:] = 1.0 # boxb[-1].bias.data[:m.nc] = math.log(5 / m.nc / (640 / s) ** 2) # cls (.01 objects, 80 classes, 640 img)if __name__ == "__main__":# Generating Sample imageimage1 = (1, 64, 32, 32)image2 = 
(1, 128, 16, 16)image3 = (1, 256, 8, 8)image1 = torch.rand(image1)image2 = torch.rand(image2)image3 = torch.rand(image3)image = [image1, image2, image3]channel = (64, 128, 256)# Modelmobilenet_v1 = HATHead(nc=80, ch=channel)out = mobilenet_v1(image)print(out)四、HATHead的添加方法

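This procedure differs slightly from my earlier tutorials. All future tutorials will follow it, so that the steps match the files shared in my group.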

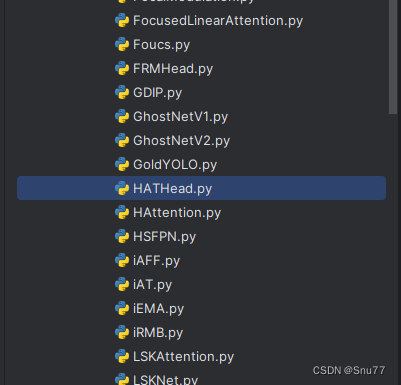

4.1 修改一

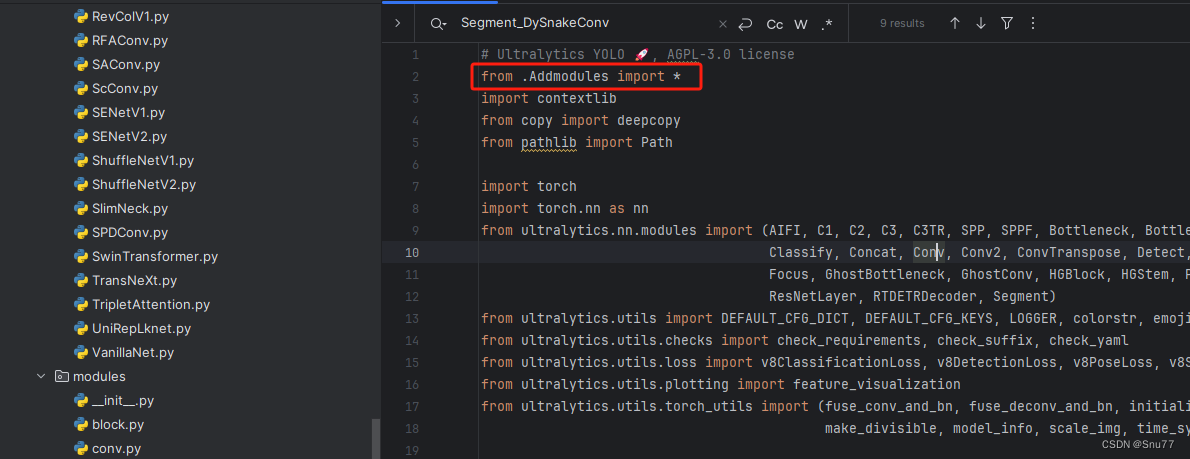

第一还是建立文件,我们找到如下ultralytics/nn/modules文件夹下建立一个目录名字呢就是'Addmodules'文件夹(用群内的文件的话已经有了无需新建)!然后在其内部建立一个新的py文件将核心代码复制粘贴进去即可。

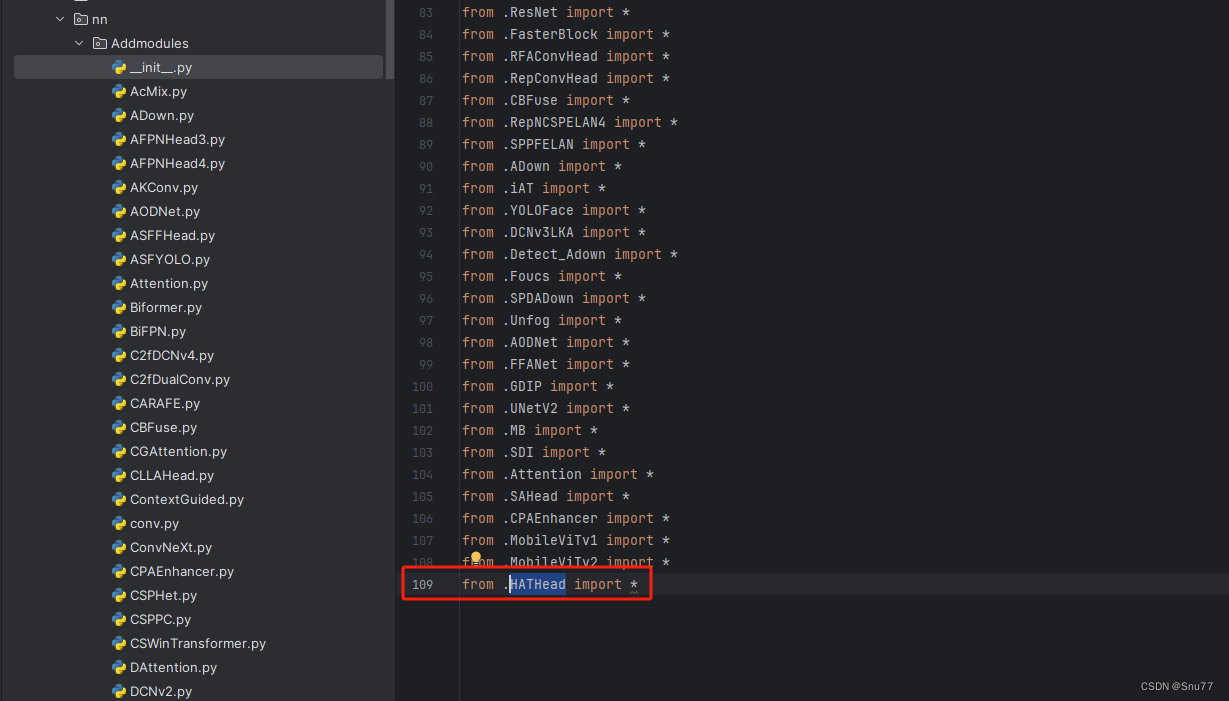

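4.2 Modification 2

Second, create a new .py file named '__init__.py' in that directory (if you use the files from my group, it already exists), then import our detection head inside it, as shown in the figure.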
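Steps 4.1 and 4.2 can be tried out in isolation with the minimal sketch below. It builds a throwaway package in a temporary directory and shows how a star import in '__init__.py' picks up only the names listed in `__all__`, which is why the core code declares `__all__ = ['HATHead']`. The file name `hat_head.py`, the stub class bodies, and the `Helper` class are assumptions made for illustration; only the 'Addmodules' directory name comes from the article.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Throwaway package that mirrors the layout from steps 4.1 and 4.2.
# "Addmodules" is the article's directory name; "hat_head.py" is a hypothetical file name.
root = Path(tempfile.mkdtemp())
pkg = root / "Addmodules"
pkg.mkdir()

# Stand-in for the real core code: an export list plus two stub classes.
(pkg / "hat_head.py").write_text(
    "__all__ = ['HATHead']\n"
    "\n"
    "class HATHead:\n"
    "    pass\n"
    "\n"
    "class Helper:  # not listed in __all__, so the star import skips it\n"
    "    pass\n"
)

# Step 4.2: __init__.py re-exports whatever the module lists in __all__.
(pkg / "__init__.py").write_text("from .hat_head import *\n")

# Make the temporary directory importable and load the package.
sys.path.insert(0, str(root))
addmodules = importlib.import_module("Addmodules")

exported = hasattr(addmodules, "HATHead")
hidden = hasattr(addmodules, "Helper")
print(exported, hidden)
```

In the real project the same pattern means that a single import of the Addmodules package is enough to make `HATHead` visible to the code that imports it.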

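4.3 Modification 3

Third, open 'ultralytics/nn/tasks.py' and import and register our module there (if you use the files from my group, this is already done and you can go straight to step four).

From now on all tutorials will follow this format, because I assume you are modifying the files from my group.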

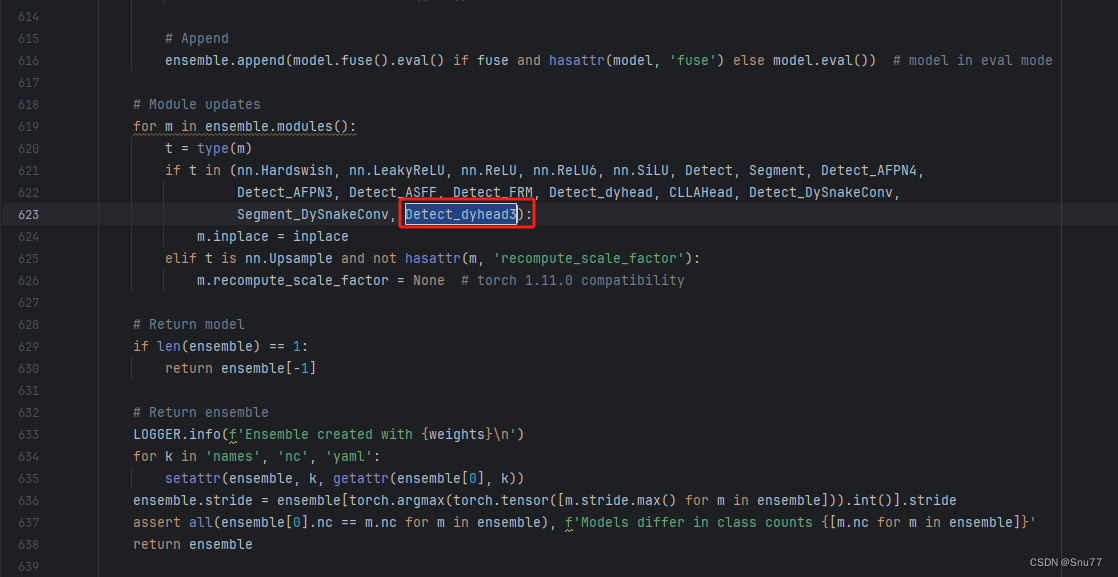
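Why the import in 'ultralytics/nn/tasks.py' matters can be illustrated with a small sketch. To my knowledge, the model builder resolves each module name from the YAML file as a string looked up in the namespace of tasks.py, so a head that has not been imported there cannot be found. The `resolve_module` helper and the stub classes below are hypothetical stand-ins and not the Ultralytics implementation; the exact import line to add is the one shown in the article's screenshot.

```python
# Hypothetical stand-ins for the real classes; nothing here imports ultralytics.
class Detect:
    pass


class HATHead:
    pass


def resolve_module(name, namespace):
    """Look up a layer class by the string used in the model YAML."""
    if name not in namespace:
        raise KeyError(f"'{name}' is not imported in this namespace")
    return namespace[name]


# Namespace before the import is added: only the stock head is known.
namespace_before = {"Detect": Detect}
# Namespace after importing the Addmodules package: the new head is known too.
namespace_after = {"Detect": Detect, "HATHead": HATHead}

try:
    resolve_module("HATHead", namespace_before)
    found_before = True
except KeyError:
    found_before = False

found_after = resolve_module("HATHead", namespace_after) is HATHead
print(found_before, found_after)
```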

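4.4 Modification 4

Add the head the same way I do. Some of the detection heads in my file may not exist in yours; ignore them and locate the right place by matching the surrounding code.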

4.5 Modification 5

Add it as shown below.

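4.6 Modification 6

Attention!

This code has been removed in versions after YOLOv8.1, so nothing needs to change there. If you are on a newer version, skip this step; if you are on an older version, you must make the change (the group files are the newer version; in the figure below, replace the code marked by the red box with this article's HATHead).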

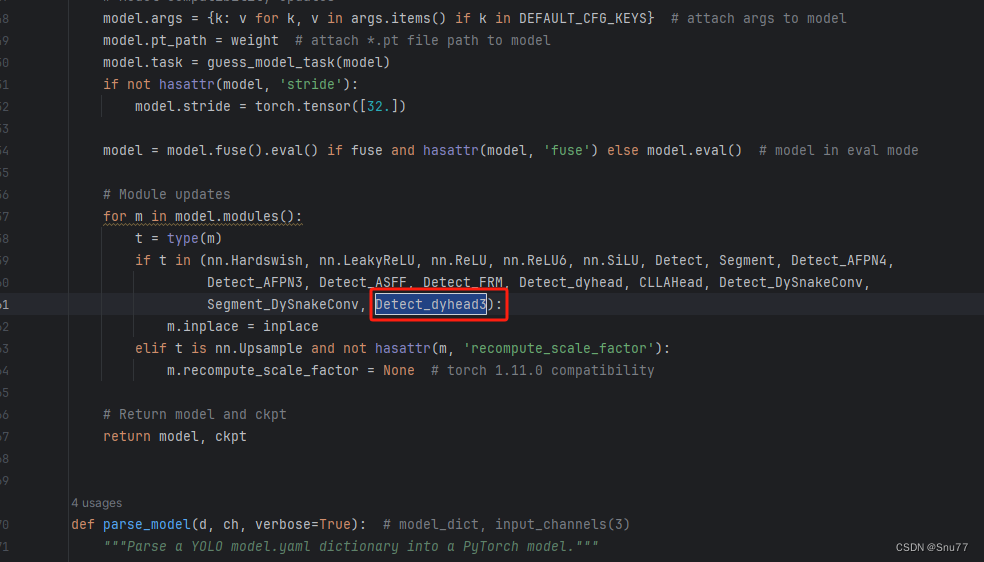

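4.7 Modification 7

Attention!

This code has been removed in versions after YOLOv8.1, so nothing needs to change there. If you are on a newer version, skip this step; if you are on an older version, you must make the change (the group files are the newer version; in the figure below, replace the code marked by the red box with this article's HATHead).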

4.8 Modification 8

Same as above.

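4.9 Modification 9

This one is slightly different: we need to add one line of code.

else:
    return 'detect'

Why is it different? Because here the module name m is converted to lowercase while the code runs, so a plain else branch is the simpler option. When tutorials for segmentation or other tasks come up, I will provide the corresponding changes.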
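The effect of the extra else branch can be seen in a simplified sketch of the task lookup. The function below is modelled on the way Ultralytics reads the last head entry of the model config and lowercases its module name; it is an approximation written for this article, not a copy of the library code, and the name `cfg_to_task` is hypothetical.

```python
def cfg_to_task(cfg):
    """Guess the task from the last module listed under 'head' in a model config."""
    # The module name is lowercased, so 'HATHead' becomes 'hathead' and matches
    # none of the stock names below.
    module_name = cfg["head"][-1][-2].lower()
    if module_name in ("classify", "classifier", "cls", "fc"):
        return "classify"
    elif module_name == "segment":
        return "segment"
    elif module_name == "pose":
        return "pose"
    else:
        # The added fallback: any other head, including custom ones, is treated
        # as a detection head.
        return "detect"


# Last head entry taken from the YAML file in section 5.1.
custom_cfg = {"head": [[[15, 18, 21], 1, "HATHead", ["nc"]]]}
stock_cfg = {"head": [[[15, 18, 21], 1, "Segment", ["nc", 32, 256]]]}

custom_task = cfg_to_task(custom_cfg)
stock_task = cfg_to_task(stock_cfg)
print(custom_task, stock_task)
```

The trade-off of a bare else is that every unknown head is classified as detection, which is why separate changes are needed for segmentation or pose heads.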

4.10 Modification 10

Same as above.

5. YAML file for object detection

5.1 YAML file

Copy the following YAML file and it is ready to run.

# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80  # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024]  # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024]  # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768]  # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512]  # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512]  # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]]  # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]]  # 1-P2/4
  - [-1, 3, C2f, [128, True]]
  - [-1, 1, Conv, [256, 3, 2]]  # 3-P3/8
  - [-1, 6, C2f, [256, True]]
  - [-1, 1, Conv, [512, 3, 2]]  # 5-P4/16
  - [-1, 6, C2f, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]]  # 7-P5/32
  - [-1, 3, C2f, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]]  # 9

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 6], 1, Concat, [1]]  # cat backbone P4
  - [-1, 3, C2f, [512]]  # 12

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 4], 1, Concat, [1]]  # cat backbone P3
  - [-1, 3, C2f, [256]]  # 15 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 12], 1, Concat, [1]]  # cat head P4
  - [-1, 3, C2f, [512]]  # 18 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 9], 1, Concat, [1]]  # cat head P5
  - [-1, 3, C2f, [1024]]  # 21 (P5/32-large)

  - [[15, 18, 21], 1, HATHead, [nc]]  # Detect(P3, P4, P5)
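To see which channel counts HATHead actually receives from layers 15, 18 and 21, the sketch below applies the width and max_channels constants from the `scales` table to the nominal 256/512/1024 channels, then derives the two hidden widths computed in `HATHead.__init__`. It assumes the usual Ultralytics rule of clipping to max_channels, multiplying by the width factor and rounding up to a multiple of 8; the helper names `make_divisible` and `scaled_channels` are written here for illustration and nothing is imported from the library.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scaled_channels(c, width, max_channels):
    """Channel count after applying a model scale (assumed Ultralytics rule)."""
    return make_divisible(min(c, max_channels) * width, 8)


# [depth, width, max_channels] copied from the YAML file above.
scales = {
    "n": (0.33, 0.25, 1024),
    "s": (0.33, 0.50, 1024),
    "m": (0.67, 0.75, 768),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.25, 512),
}
head_inputs = (256, 512, 1024)  # nominal C2f outputs at layers 15, 18, 21
nc, reg_max = 80, 16            # defaults used in HATHead

summary = {}
for name, (_, width, max_ch) in scales.items():
    ch = tuple(scaled_channels(c, width, max_ch) for c in head_inputs)
    c2 = max(16, ch[0] // 4, reg_max * 4)  # hidden width of the box branch
    c3 = max(ch[0], min(nc, 100))          # hidden width of the class branch
    summary[name] = (ch, c2, c3)
    print(name, ch, c2, c3)
```

For the 'n' scale this gives input channels (64, 128, 256), the same values used by the test at the end of the core code.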

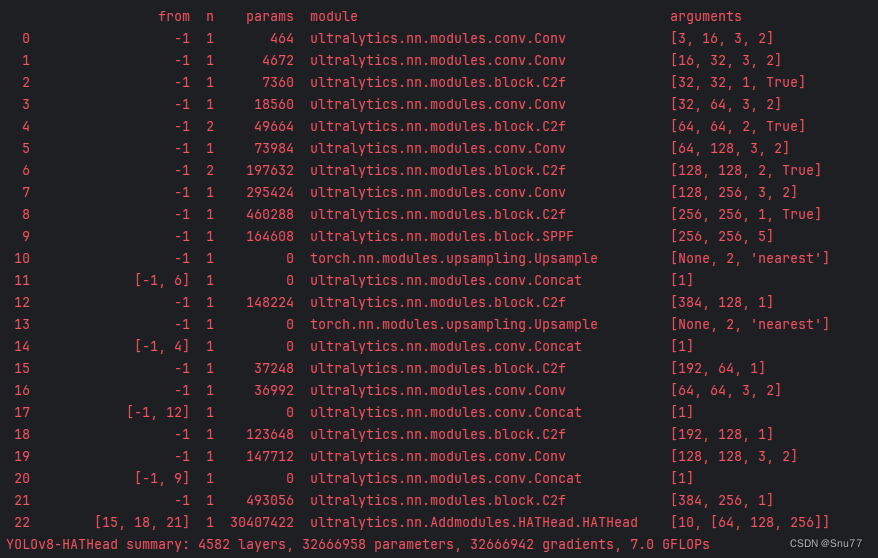

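5.2 Run log

6. Summary

That concludes the main content of this article. I recommend my column on effective YOLOv8 improvements. The column is newly opened and currently has an average quality score of 98. Going forward I will reproduce papers from the latest top conferences and also extend some of the older improvement mechanisms. If this article helped you, subscribe to the column and follow it for further updates.

Column review: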