Overview:

This example deploys a GFS2 shared-storage setup on CentOS 7.

System environment:

[root@node201 ~]# cat /etc/centos-release

CentOS Linux release 7.9.2009 (Core)

System architecture:

[root@node203 ~]# cat /etc/hosts

192.168.1.201 node201

192.168.1.202 node202

192.168.1.203 node203 iscsi server

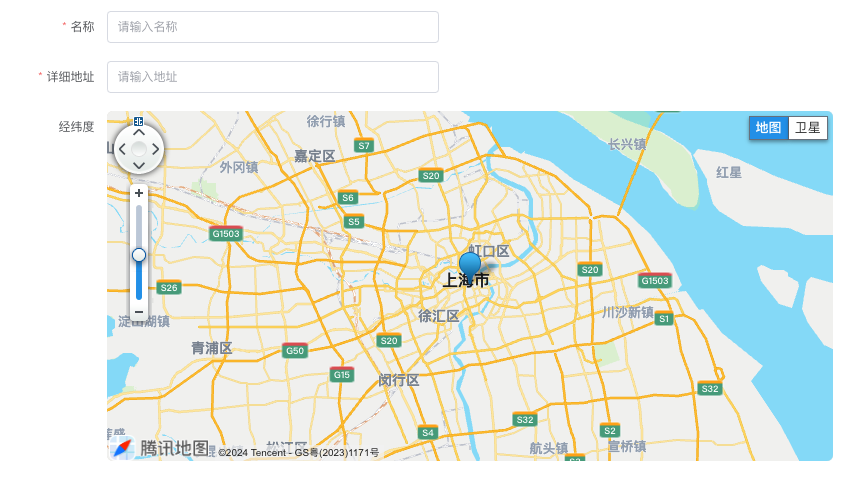

As shown above, the iSCSI server provides the shared storage, and the cluster nodes create and use a GFS2 file system on that shared storage.

I. Prepare the system environment

Install the following packages on all nodes:

yum -y install gfs2-utils lvm2-cluster

yum -y install lvm2-sysvinit

yum -y install pacemaker corosync pcs

II. Configure the cluster

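GFS2 is a file system for clustered shared storage. It must be used in a cluster environment and cannot be used on a standalone (single-instance) system: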

1. Set up user authentication

As shown below, the hacluster user must exist on the cluster nodes and on the qdevice node, and its password must be set on each of them:

[root@node201 ~]# id hacluster

uid=003(hacluster) gid=1004(haclient) groups=1004(haclient)

[root@node202 ~]# id hacluster

uid=5001(hacluster) gid=5010(haclient) groups=5010(haclient)

[root@node203 ~]# id hacluster

uid=5001(hacluster) gid=1004(haclient) groups=1004(haclient)

As shown below, authenticate the cluster nodes to the qdevice node:

[root@node201 ~]# pcs cluster auth node203

Username: hacluster

Password:

node203: Authorized

[root@node202 ~]# pcs cluster auth node203

Username: hacluster

Password:

node203: Authorized

2. Create the cluster

Create the cluster test_cluster:

[root@node201 pcs]# pcs cluster setup --name test_cluster node201 node202 node203 --force

Destroying cluster on nodes: node201, node202, node203...

node202: Stopping Cluster (pacemaker)...

node203: Stopping Cluster (pacemaker)...

node201: Stopping Cluster (pacemaker)...

node203: Successfully destroyed cluster

node202: Successfully destroyed cluster

node201: Successfully destroyed cluster

Sending 'pacemaker_remote authkey' to 'node201', 'node202', 'node203'

node201: successful distribution of the file 'pacemaker_remote authkey'

node203: successful distribution of the file 'pacemaker_remote authkey'

node202: successful distribution of the file 'pacemaker_remote authkey'

Sending cluster config files to the nodes...

node201: Succeeded

node202: Succeeded

node203: Succeeded

Synchronizing pcsd certificates on nodes node201, node202, node203...

node201: Success

node203: Success

node202: Success

Restarting pcsd on the nodes in order to reload the certificates...

node201: Success

node203: Success

node202: Success

[root@node201 pcs]# pcs cluster start --all

node201: Starting Cluster (corosync)...

node202: Starting Cluster (corosync)...

node203: Starting Cluster (corosync)...

node203: Starting Cluster (pacemaker)...

node202: Starting Cluster (pacemaker)...

node201: Starting Cluster (pacemaker)...

[root@node201 pcs]# pcs cluster status

Cluster Status:

Stack: unknown

Current DC: NONE

Last updated: Thu Aug 29 19:24:45 2024

Last change: Thu Aug 29 19:24:40 2024 by hacluster via crmd on node203

3 nodes configured

0 resource instances configured

PCSD Status:

node203: Online

node201: Online

node202: Online

[root@node201 pcs]# pcs cluster enable --all

node201: Cluster Enabled

node202: Cluster Enabled

node203: Cluster Enabled

3. Corosync configuration on the cluster nodes

1) Configure corosync.conf (on all cluster nodes)

[root@node202 corosync]# cat /etc/corosync/corosync.conf |grep -v ^#|grep -v ^$|grep -v '#'

totem {
    version: 2
    cluster_name: test_cluster
    secauth: off
    transport: udpu
}

nodelist {
    node {
        ring0_addr: node201
        nodeid: 1
    }
    node {
        ring0_addr: node202
        nodeid: 2
    }
    node {
        ring0_addr: node203
        nodeid: 3
    }
}

quorum {
    provider: corosync_votequorum
    device {
        model: net
        votes: 1
        net {
            algorithm: ffsplit
            host: node203
        }
    }
}

logging {
    to_logfile: yes
    logfile: /var/log/cluster/corosync.log
    to_syslog: yes
}
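The cluster_name value in this file must match the ClusterName part of the mkfs.gfs2 -t option used later. A minimal POSIX sh sketch for reading it back out of a corosync.conf-style file; get_cluster_name is a hypothetical helper, and it assumes the simple "key: value" layout shown above, treating anything after a # as a comment.

```shell
#!/bin/sh
# Hypothetical helper: print the cluster_name value from a corosync.conf-style file.
# Assumes "cluster_name: <value>" on its own line; text after '#' is treated as a comment.
get_cluster_name() {
    local conf="${1:-/etc/corosync/corosync.conf}"
    # Drop comments, split each line on the colon, print the first cluster_name value.
    # Exit status is non-zero when no cluster_name line is found.
    sed -e 's/#.*//' "$conf" | awk -F':' '
        { key = $1; gsub(/[ \t]/, "", key) }
        key == "cluster_name" && !found { val = $2; gsub(/[ \t]/, "", val); print val; found = 1 }
        END { exit !found }'
}
```

For the file above, `get_cluster_name /etc/corosync/corosync.conf` would print test_cluster, the value that appears in `-t test_cluster:gfs2`.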

Configuration notes:

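- cluster_name: the cluster name set here is needed later, when the logical volume is formatted as GFS2 (it is the ClusterName part of the mkfs.gfs2 -t option).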

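- clear_node_high_bit: yes: needed by dlm when corosync generates node IDs automatically from IPv4 addresses; otherwise dlm may fail to start. The option only matters when nodeid is not set, and the nodelist above assigns nodeid values explicitly, which is why it does not appear in that file.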

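- quorum {} block: quorum (voting) settings; this block is required. As a reference for other cluster layouts, the following example shows common votequorum options; its comments describe a 7-node cluster: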

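quorum {
    # Enable votequorum.
    provider: corosync_votequorum
    # 7 nodes, so quorum is 4. If nodelist is configured, expected_votes has no effect.
    expected_votes: 7
    # 1 = when the cluster starts, quorum is withheld until all nodes are online and
    # have joined the cluster. This option was introduced in Corosync 2.0.
    wait_for_all: 1
    # 1 = enable the LMS (last man standing) feature, which is off (0) by default.
    # With LMS enabled, if the cluster stays at the edge of quorum (for example
    # expected_votes=7 with 4 nodes online) for longer than last_man_standing_window,
    # quorum is recalculated, step by step down to 2 online nodes. Allowing a single
    # online node also requires auto_tie_breaker, which is not recommended in production.
    last_man_standing: 1
    # In milliseconds: how long to wait after one or more hosts are lost from the
    # cluster before quorum is recalculated.
    last_man_standing_window: 10000
}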
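votequorum grants quorum to a simple majority of the expected votes, which is where "7 nodes, quorum 4" comes from. A small sketch of that arithmetic; votequorum_quorum is a hypothetical name, and it deliberately ignores the two_node, last_man_standing and auto_tie_breaker adjustments.

```shell
#!/bin/sh
# Hypothetical helper: simple-majority quorum for a given number of expected votes.
# quorum = floor(expected_votes / 2) + 1
votequorum_quorum() {
    local votes="$1"
    # Reject empty input, non-digits and leading zeros (including 0 itself).
    case "$votes" in
        ''|*[!0-9]*|0*) echo "usage: votequorum_quorum <positive integer>" >&2; return 1 ;;
    esac
    echo $(( votes / 2 + 1 ))
}
```

`votequorum_quorum 7` prints 4. With 4 expected votes (three nodes plus the single qdevice vote) it prints 3, which is the Quorum value corosync-quorumtool reports for this cluster in section IV.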

2) Restart the corosync service

[root@node201 corosync]# systemctl restart corosync

[root@node201 corosync]# systemctl status corosync

III. Configure iSCSI shared storage

For the detailed configuration, see:

Document: CentOS 7配置iSCSI共享存储案例.note (a CentOS 7 iSCSI shared storage configuration example)

Link: https://note.youdao.com/ynoteshare/index.html?id=96ee40b99499d1d4695d0838cf988f21&type=note&_time=1725353430791

Check the disk information on the cluster nodes; sdc is the iSCSI shared disk.

IV. Create the GFS2 file system on the client nodes

1. Enable and check the LVM cluster configuration

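Enable LVM cluster mode.

This command automatically changes the values of the locking_type and use_lvmetad options in /etc/lvm/lvm.conf. Run it on every cluster node, since each node has its own lvm.conf: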

[root@node201 ~]# lvmconf --enable-cluster

[root@node201 ~]# cat /etc/lvm/lvm.conf | grep -E 'locking_type|use_lvm' | grep -v '#'

locking_type = 3

use_lvmetad = 0
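To verify the same two settings from a script (for example on each node in turn), a rough sh sketch. lvm_conf_value and check_lvm_cluster_conf are hypothetical helpers; the parser only handles plain "key = value" lines and takes the last uncommented occurrence of each key.

```shell
#!/bin/sh
# Hypothetical helper: print the value of the last uncommented "KEY = VALUE" line in FILE.
lvm_conf_value() {
    sed -e 's/#.*//' "$2" | awk -F'=' -v k="$1" '
        { name = $1; gsub(/[ \t]/, "", name) }
        name == k && NF >= 2 { v = $2; gsub(/[ \t]/, "", v) }
        END { print v }'
}

# Hypothetical check: succeed only if clvmd-style locking is configured
# (locking_type = 3 and use_lvmetad = 0), as written by lvmconf --enable-cluster.
check_lvm_cluster_conf() {
    local conf="${1:-/etc/lvm/lvm.conf}" lt lv
    lt=$(lvm_conf_value locking_type "$conf")
    lv=$(lvm_conf_value use_lvmetad "$conf")
    if [ "$lt" = "3" ] && [ "$lv" = "0" ]; then
        echo "cluster locking enabled: locking_type=$lt use_lvmetad=$lv"
        return 0
    fi
    echo "cluster locking not enabled: locking_type=${lt:-unset} use_lvmetad=${lv:-unset}" >&2
    return 1
}
```

Usage on a node: `check_lvm_cluster_conf /etc/lvm/lvm.conf`; a non-zero exit status means lvmconf --enable-cluster has not taken effect there.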

2. Check the cluster quorum status

[root@node201 ~]# corosync-quorumtool -s

Quorum information

------------------

Date: Tue Sep 3 16:59:31 2024

Quorum provider: corosync_votequorum

Nodes: 3

Node ID: 1

Ring ID: 1.d0

Quorate: Yes

Votequorum information

----------------------

Expected votes: 4

Highest expected: 4

Total votes: 4

Quorum: 3

Flags: Quorate Qdevice

Membership information

----------------------
    Nodeid      Votes    Qdevice Name
         1          1    A,V,NMW node201 (local)
         2          1    A,V,NMW node202
         3          1    A,V,NMW node203
         0          1            Qdevice
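When this check is scripted, the Quorate line is the one to test. A minimal sketch that reads corosync-quorumtool -s output on stdin; is_quorate is a hypothetical name, and it assumes the "Quorate: Yes" wording shown above.

```shell
#!/bin/sh
# Hypothetical helper: read corosync-quorumtool -s output on stdin and
# succeed only if its "Quorate:" line says Yes.
is_quorate() {
    awk -F':' '
        { key = $1; gsub(/[ \t]/, "", key) }
        key == "Quorate" { val = $2; gsub(/[ \t]/, "", val); q = (val == "Yes") }
        END { exit !q }'
}
```

Usage: `corosync-quorumtool -s | is_quorate && echo "node is quorate"`. Reading stdin rather than calling the tool directly keeps the check usable on saved output as well.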

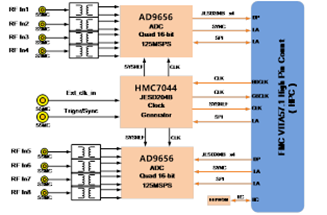

3. Start the cluster services (all nodes)

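When GFS2 is used on LVM, Red Hat supports only GFS2 file systems created on CLVM logical volumes. CLVM is included in the Resilient Storage Add-On. It is a cluster-wide deployment of LVM, enabled by the CLVM daemon clvmd, which manages LVM logical volumes in the cluster. The daemon lets LVM2 manage logical volumes across the cluster, so that all nodes in the cluster can share them.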

[root@node201 ~]# systemctl enable dlm

[root@node201 ~]# systemctl start dlm

[root@node201 ~]# systemctl enable clvmd

[root@node201 ~]# systemctl start clvmd

Check the clvmd service status:

[root@node201 ~]# systemctl status clvmd

4. Create the GFS2 file system

1) Create the physical volume

[root@node201 ~]# pvcreate /dev/sdc

WARNING: dos signature detected on /dev/sdc at offset 510. Wipe it? [y/n]: y

Wiping dos signature on /dev/sdc.

Physical volume "/dev/sdc" successfully created.

2) View the physical volume information

[root@node201 ~]# pvscan
  PV /dev/sda2   VG centos   lvm2 [102.39 GiB / 64.00 MiB free]
  PV /dev/sdc                lvm2 [4.00 GiB]
  Total: 2 [106.39 GiB] / in use: 1 [102.39 GiB] / in no VG: 1 [4.00 GiB]

[root@node201 ~]# pvs
  PV         VG     Fmt  Attr PSize   PFree
  /dev/sda2  centos lvm2 a--  102.39g 64.00m
  /dev/sdc          lvm2 ---    4.00g  4.00g

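3) Create the clustered volume group (-cy marks the volume group as clustered, -Ay enables automatic metadata backup)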

[root@node201 ~]# vgcreate -Ay -cy gfsvg /dev/sdc
  Clustered volume group "gfsvg" successfully created

[root@node201 ~]# vgdisplay /dev/gfsvg
  --- Volume group ---
  VG Name               gfsvg
  System ID
  Format                lvm2
  Metadata Areas        1
  Metadata Sequence No  1
  VG Access             read/write
  VG Status             resizable
  Clustered             yes
  Shared                no
  MAX LV                0
  Cur LV                0
  Open LV               0
  Max PV                0
  Cur PV                1
  Act PV                1
  VG Size               <3.97 GiB
  PE Size               4.00 MiB
  Total PE              1016
  Alloc PE / Size       0 / 0
  Free  PE / Size       1016 / <3.97 GiB
  VG UUID               mFPDmx-yrW4-e4EZ-mqKE-Ya25-lHWw-yKdY3i

4) Create the logical volume

[root@node201 ~]# lvcreate -L 2G -n lv_gfs1 gfsvg

WARNING: LVM2_member signature detected on /dev/gfsvg/lv_gfs1 at offset 536. Wipe it? [y/n]: y

Wiping LVM2_member signature on /dev/gfsvg/lv_gfs1.

Logical volume "lv_gfs1" created.

[root@node201 ~]# lvdisplay
  --- Logical volume ---
  LV Path                /dev/gfsvg/lv_gfs1
  LV Name                lv_gfs1
  VG Name                gfsvg
  LV UUID                Pjy2Qy-4MXj-pR87-2NcQ-9E02-5BF4-eHz3XR
  LV Write Access        read/write
  LV Creation host, time node201, 2024-09-02 19:43:43 +0800
  LV Status              available
  # open                 0
  LV Size                2.00 GiB
  Current LE             512
  Segments               1
  Allocation             inherit
  Read ahead sectors     auto
  - currently set to     8192
  Block device           253:3

# View logical volume information

[root@node201 ~]# lvs -o +devices gfsvg
  LV      VG    Attr       LSize Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert Devices
  lv_gfs1 gfsvg -wi-a----- 2.00g                                                     /dev/sdc(0)

5) Create the GFS2 file system

[root@node201 ~]# mkdir /mnt/shgfs

# Create the GFS2 file system

[root@node201 ~]# mkfs.gfs2 -p lock_dlm -t test_cluster:gfs2 -j 2 /dev/gfsvg/lv_gfs1

/dev/gfsvg/lv_gfs1 is a symbolic link to /dev/dm-3

This will destroy any data on /dev/dm-3

Are you sure you want to proceed? (Y/N): y

Discarding device contents (may take a while on large devices): Done

Adding journals: Done

Building resource groups: Done

Creating quota file: Done

Writing superblock and syncing: Done

Device: /dev/gfsvg/lv_gfs1

Block size: 4096

Device size: 2.00 GB (524288 blocks)

Filesystem size: 2.00 GB (524285 blocks)

Journals: 2

Journal size: 16MB

Resource groups: 10

Locking protocol: "lock_dlm"

Lock table: "test_cluster:gfs2"

UUID: 445e065a-08e2-4d0b-81cc-5325cc444c54

# Mount the GFS2 file system

[root@node201 ~]# mount -t gfs2 /dev/gfsvg/lv_gfs1 /mnt/shgfs

[root@node201 ~]# mount -v |grep gfs

/dev/mapper/gfsvg-lv_gfs1 on /mnt/shgfs type gfs2 (rw,relatime)

# Access the GFS2 file system

[root@node201 ~]# cd /mnt/shgfs/

[root@node201 shgfs]# touch gfs1

[root@node201 shgfs]# ls -lh

total 4.0K

-rw-r--r-- 1 root root 0 Sep 2 19:46 gfs1
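A scripted version of the `mount -v | grep gfs` check above, as an sh sketch. is_gfs2_mounted is hypothetical; it reads a /proc/mounts-format table (the optional second argument only exists so it can be tried against a saved copy) and does not decode escaped characters such as \040 in mount point names.

```shell
#!/bin/sh
# Hypothetical helper: succeed if MOUNTPOINT is mounted with file system type gfs2.
# Usage: is_gfs2_mounted MOUNTPOINT [MOUNTS_TABLE]   (table defaults to /proc/mounts)
is_gfs2_mounted() {
    local mnt="$1" table="${2:-/proc/mounts}"
    # /proc/mounts fields: device, mount point, fs type, options, dump, pass.
    # Escaped characters in mount points (for example \040 for a space) are not decoded.
    awk -v m="$mnt" '$2 == m && $3 == "gfs2" { found = 1 } END { exit !found }' "$table"
}
```

On each node that should share the file system, `is_gfs2_mounted /mnt/shgfs || echo "not mounted"` gives a quick yes/no without parsing the human-readable mount output.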

Syntax of mkfs.gfs2 for creating a GFS2 file system:

mkfs.gfs2 -p LockProtoName -t LockTableName -j NumberJournals BlockDevice

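- LockProtoName

  Specifies the name of the locking protocol to use. For a cluster, the locking protocol is lock_dlm.

- LockTableName

  Identifies the GFS2 file system in the cluster configuration. It has two parts separated by a colon (no spaces): ClusterName:FSName

  ClusterName: the name of the cluster for which the GFS2 file system is created (the cluster_name value in corosync.conf).

  FSName: the file system name, 1 to 16 characters long. It must be unique among all lock_dlm file systems in the cluster and among all file systems (lock_dlm and lock_nolock) on each local node.

- NumberJournals

  Specifies the number of journals that mkfs.gfs2 creates. Each node that mounts the file system needs its own journal, so the -j 2 used above allows at most two nodes to mount it at the same time. For GFS2, more journals can be added later (with gfs2_jadd) without growing the file system.

- BlockDevice

  Specifies a logical volume or physical volume.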
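The constraints listed above can be checked before running mkfs.gfs2. A sketch that validates the arguments and only prints the resulting command instead of running it; gfs2_mkfs_cmd is a hypothetical helper, and it cannot verify that ClusterName matches cluster_name in corosync.conf or that FSName is unique in the cluster.

```shell
#!/bin/sh
# Hypothetical helper: validate mkfs.gfs2 arguments and print (not run) the command.
# Usage: gfs2_mkfs_cmd CLUSTER FSNAME JOURNALS DEVICE
gfs2_mkfs_cmd() {
    if [ "$#" -ne 4 ]; then
        echo "usage: gfs2_mkfs_cmd CLUSTER FSNAME JOURNALS DEVICE" >&2
        return 1
    fi
    local cluster="$1" fsname="$2" journals="$3" dev="$4" part
    # ClusterName and FSName are joined with ':' so neither may be empty
    # or contain ':' or whitespace.
    for part in "$cluster" "$fsname"; do
        case "$part" in
            ''|*:*|*[[:space:]]*) echo "invalid lock table part: '$part'" >&2; return 1 ;;
        esac
    done
    # FSName may be 1 to 16 characters long.
    if [ "${#fsname}" -gt 16 ]; then
        echo "FSName longer than 16 characters: $fsname" >&2
        return 1
    fi
    # One journal per mounting node; must be a positive integer without leading zeros.
    case "$journals" in
        ''|*[!0-9]*|0*) echo "invalid journal count: $journals" >&2; return 1 ;;
    esac
    if [ -z "$dev" ]; then
        echo "missing block device" >&2
        return 1
    fi
    printf 'mkfs.gfs2 -p lock_dlm -t %s:%s -j %s %s\n' "$cluster" "$fsname" "$journals" "$dev"
}
```

`gfs2_mkfs_cmd test_cluster gfs2 2 /dev/gfsvg/lv_gfs1` prints the same command used in this example; printing rather than executing keeps the destructive mkfs step a deliberate, manual one.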

Appendix: Common errors

1. LVM commands such as pvs and vgcreate report connect() errors

An example of the error follows; the cause is that clvmd is not running properly:

connect() failed on local socket: No such file or directory

Internal cluster locking initialisation failed.

WARNING: Falling back to local file-based locking.

Volume Groups with the clustered attribute will be inaccessible.

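LVM commands such as pvs and vgcreate report "Skipping clustered volume group XXX" or "Device XXX excluded by a filter"

This is usually caused by an earlier vgremove or similar operation, or by old volume group metadata left on the block device.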

2. clvmd service not found

As shown below, starting the clvmd service fails because the service does not exist:

[root@node202 ~]# systemctl start clvmd

Failed to start clvmd.service: Unit not found.

[root@node202 ~]# ls /etc/rc.d/init.d/clvmd

ls: cannot access /etc/rc.d/init.d/clvmd: No such file or directory

Install the lvm2-sysvinit package:

[root@node202 ~]# yum install -y lvm2-sysvinit-2.02.187-6.el7_9.5.x86_64

[root@node202 ~]# ls /etc/rc.d/init.d/clvmd

/etc/rc.d/init.d/clvmd