Code with detailed comments:

```python
import torch
import torch.utils.data as Data
import torch.nn.functional as F
import matplotlib.pyplot as plt

# torch.manual_seed(1)  # uncomment for reproducible results

# Hyperparameters
LR = 0.01          # learning rate
BATCH_SIZE = 32    # mini-batch size
EPOCH = 12         # number of training epochs

# Generate data: y = x^2 plus Gaussian noise
x = torch.unsqueeze(torch.linspace(-1, 1, 1000), dim=1)  # shape (1000, 1)
y = x.pow(2) + 0.1 * torch.normal(torch.zeros(*x.size()))

# Plot the dataset
# plt.scatter(x.numpy(), y.numpy())
# plt.show()

# Put the dataset into a torch Dataset
torch_dataset = Data.TensorDataset(x, y)

# Data loader: shuffled mini-batches, loaded by 2 worker processes
loader = Data.DataLoader(
    dataset=torch_dataset,
    batch_size=BATCH_SIZE,
    shuffle=True,
    num_workers=2,
)


# Default network: 1 -> 20 (ReLU) -> 1
class Net(torch.nn.Module):
    def __init__(self):
        super().__init__()
        self.hidden = torch.nn.Linear(1, 20)   # hidden layer
        self.predict = torch.nn.Linear(20, 1)  # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))  # activation function for hidden layer
        x = self.predict(x)         # linear output
        return x


if __name__ == '__main__':
    # Four networks with the same architecture, one per optimizer
    net_SGD = Net()
    net_Momentum = Net()
    net_RMSprop = Net()
    net_Adam = Net()
    # Collect the networks into a list
    nets = [net_SGD, net_Momentum, net_RMSprop, net_Adam]

    # A different optimizer for each network
    opt_SGD = torch.optim.SGD(net_SGD.parameters(), lr=LR)
    opt_Momentum = torch.optim.SGD(net_Momentum.parameters(), lr=LR, momentum=0.8)
    opt_RMSprop = torch.optim.RMSprop(net_RMSprop.parameters(), lr=LR, alpha=0.9)
    opt_Adam = torch.optim.Adam(net_Adam.parameters(), lr=LR, betas=(0.9, 0.99))
    # Collect the optimizers into a list (same order as nets)
    optimizers = [opt_SGD, opt_Momentum, opt_RMSprop, opt_Adam]

    # Loss function
    loss_func = torch.nn.MSELoss()
    losses_his = [[], [], [], []]  # loss history for each optimizer

    # Training epochs
    for epoch in range(EPOCH):
        print('Epoch: ', epoch)
        # Mini-batch training: every network sees the same batch
        for step, (b_x, b_y) in enumerate(loader):
            for net, opt, l_his in zip(nets, optimizers, losses_his):
                output = net(b_x)              # forward pass
                loss = loss_func(output, b_y)  # compute loss
                opt.zero_grad()                # clear gradients from the previous step
                loss.backward()                # backpropagation, compute gradients
                opt.step()                     # apply gradients
                l_his.append(loss.item())      # record loss as a Python float

    # Plotting
    labels = ['SGD', 'Momentum', 'RMSprop', 'Adam']
    for i, l_his in enumerate(losses_his):
        plt.plot(l_his, label=labels[i])
    plt.legend(loc='best')
    plt.xlabel('Steps')
    plt.ylabel('Loss')
    plt.ylim((0, 0.2))
    plt.show()
```
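To make the difference between the four optimizers in the script more concrete, here is a minimal pure-Python sketch of their per-step update rules, reusing the same hyperparameters (lr=0.01, momentum=0.8, alpha=0.9, betas=(0.9, 0.99)). The formulas are written to mirror the update rules described in the PyTorch documentation for `torch.optim.SGD`, `RMSprop` and `Adam` with their remaining options (weight decay, Nesterov, `centered`, `amsgrad`) left at their defaults. The one-parameter objective f(w) = (w - 3)^2, the function names `run_sgd`, `run_rmsprop`, `run_adam` and the step counts are hypothetical, chosen only for illustration; this is a sketch, not PyTorch's actual implementation.

```python
import math


def grad(w: float) -> float:
    """Gradient of the hypothetical toy objective f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


def run_sgd(steps: int, lr: float = 0.01, momentum: float = 0.0) -> float:
    # momentum=0 gives plain SGD; otherwise heavy-ball momentum:
    #   v <- momentum * v + g ;  w <- w - lr * v
    w, v = 0.0, 0.0
    for _ in range(steps):
        g = grad(w)
        v = momentum * v + g
        w -= lr * v
    return w


def run_rmsprop(steps: int, lr: float = 0.01, alpha: float = 0.9,
                eps: float = 1e-8) -> float:
    # A running average of squared gradients rescales each step.
    w, sq = 0.0, 0.0
    for _ in range(steps):
        g = grad(w)
        sq = alpha * sq + (1 - alpha) * g * g
        w -= lr * g / (math.sqrt(sq) + eps)
    return w


def run_adam(steps: int, lr: float = 0.01, betas=(0.9, 0.99),
             eps: float = 1e-8) -> float:
    b1, b2 = betas
    w, m, v = 0.0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = b1 * m + (1 - b1) * g       # first moment: mean of gradients
        v = b2 * v + (1 - b2) * g * g   # second moment: mean of squared gradients
        m_hat = m / (1 - b1 ** t)       # bias correction for zero initialization
        v_hat = v / (1 - b2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w


if __name__ == "__main__":
    for name, w in [("SGD", run_sgd(100)),
                    ("Momentum", run_sgd(100, momentum=0.8)),
                    ("RMSprop", run_rmsprop(100)),
                    ("Adam", run_adam(100))]:
        print(f"{name:9s} w after 100 steps = {w:.4f}  (optimum is 3)")
```

Plain SGD moves in proportion to the raw gradient, momentum accumulates past gradients so it travels further per step, while RMSprop and Adam divide by a running root-mean-square of the gradient, so their step size stays on the order of `lr` regardless of the gradient's scale.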

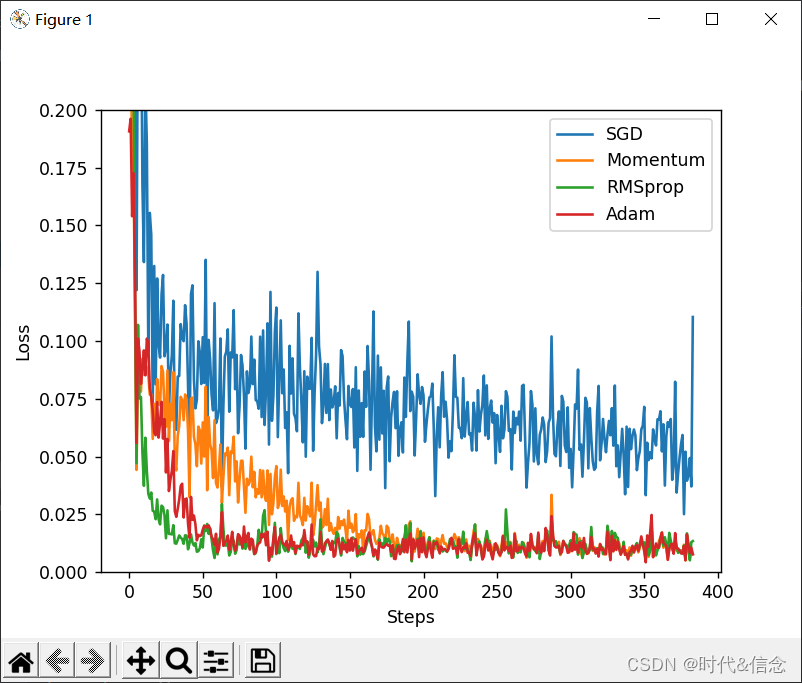

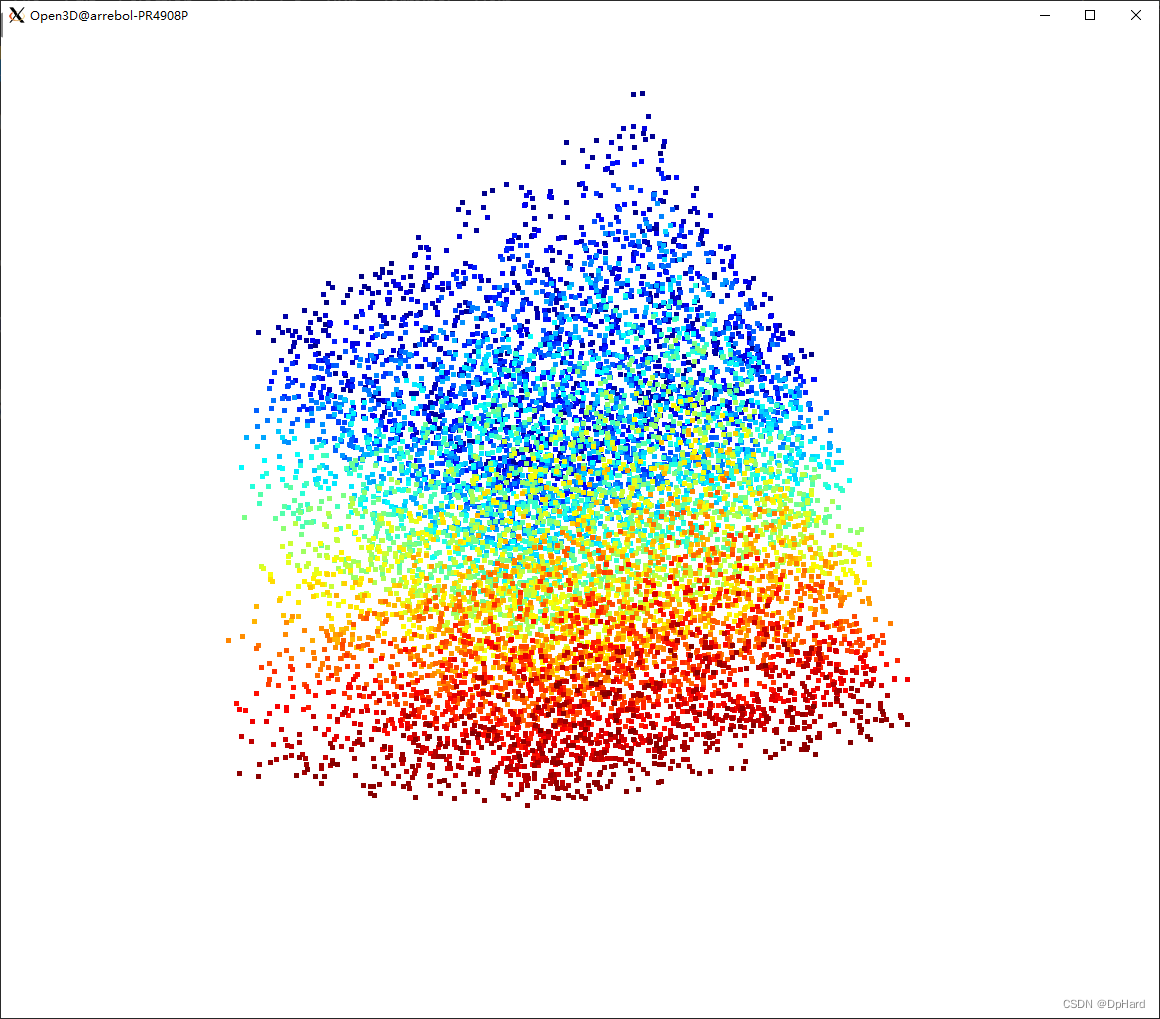

运行结果: