1 Environment preparation

1.1 Host information

| IP | Hostname |
| --- | --- |
| 10.220.43.203 | master |
| 10.220.43.204 | slave |

1.2 System information

$ cat /etc/redhat-release

Alibaba Cloud Linux (Aliyun Linux) release 2.1903 LTS (Hunting Beagle)

2 Deployment preparation

Apply the settings in this section on both the master and the slave host.

2.1 Set the hostname

# master
hostnamectl set-hostname master

# slave
hostnamectl set-hostname slave

2.2 Configure hosts

$ vim /etc/hosts

# Add the following entries:
10.220.43.203 master
10.220.43.204 slave

# Save and exit, then log in to the host again

2.3 Network configuration

# Bridge settings (master and slave)
$ cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

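$ sysctl --system

3 Installation and deployment

Install the following on both master and slave.

3.1 Install Docker

For a binary installation of Docker, see the CSDN blog post "docker部署及常用命令" (Docker deployment and common commands).

3.2 Configure a Kubernetes yum mirror

Add the Alibaba Cloud (Aliyun) yum repository for Kubernetes, a mirror hosted in China.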

$ cat > /etc/yum.repos.d/kubernetes.repo << EOF

[k8s]

name=k8s

enabled=1

gpgcheck=0

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

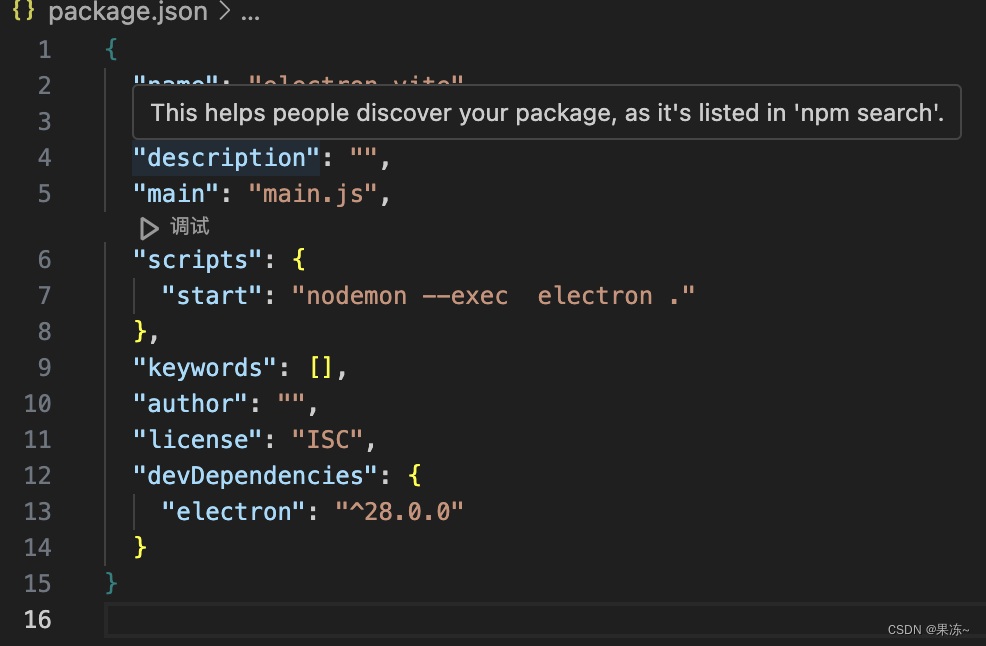

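EOF

3.3 Install kubeadm, kubelet, and kubectl

# Choose the version you want to install
$ yum install -y kubelet-1.25.0 kubectl-1.25.0 kubeadm-1.25.0

# kubelet cannot be started yet because it has not been configured; for now, only enable it to start on boot
$ systemctl enable kubelet

3.4 Install a container runtime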

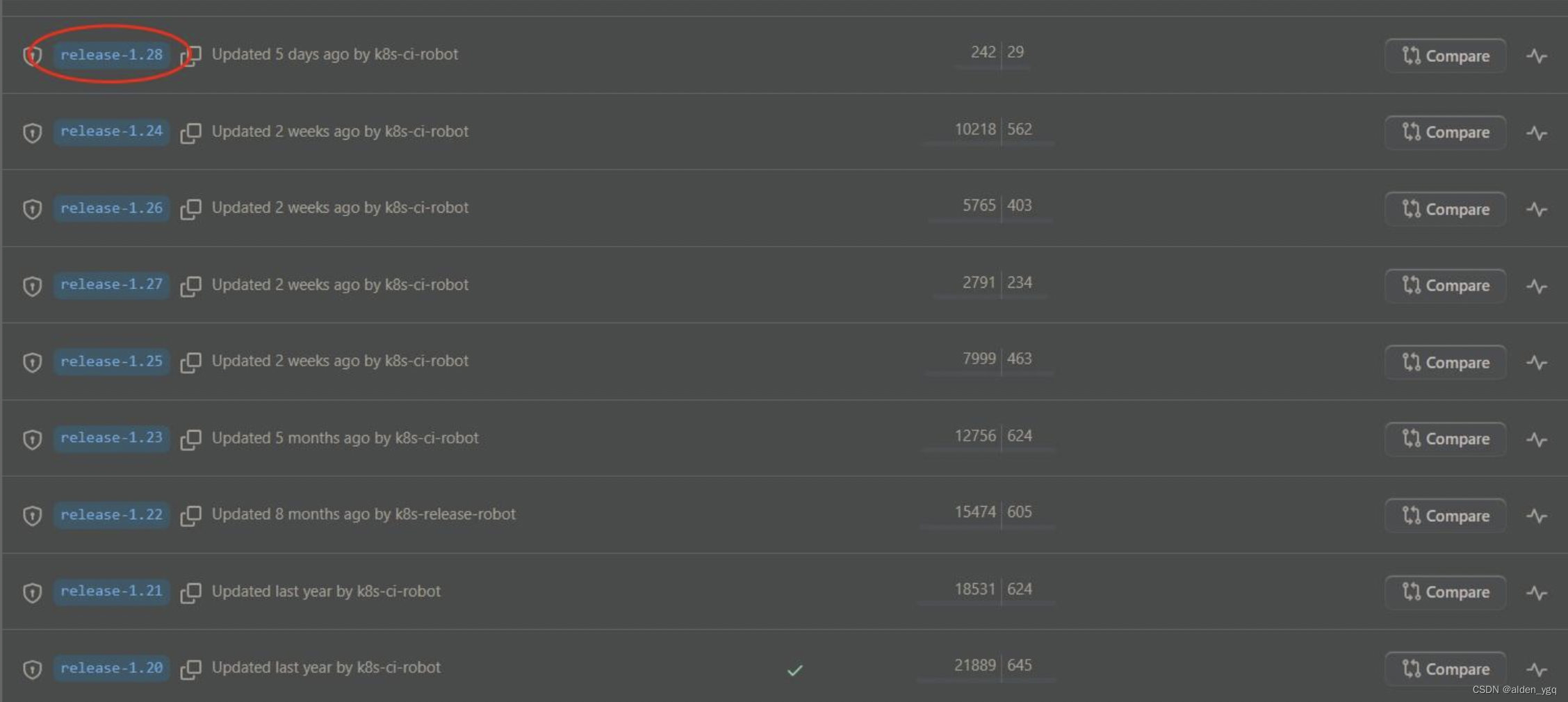

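If your Kubernetes version is lower than 1.24, you can skip this step.

Starting with version 1.24, Kubernetes no longer works with Docker Engine directly. Docker Engine does not implement the CRI, which a container runtime needs in order to work with Kubernetes, so an additional service, cri-dockerd, must be installed. cri-dockerd is a project based on the legacy built-in Docker Engine support that was removed from the kubelet in version 1.24.

At the time of writing, the latest Kubernetes version is 1.28.x.

A container runtime must be installed on every node in the cluster so that Pods can run there. Newer Kubernetes versions require a runtime that conforms to the Container Runtime Interface (CRI).

Several container runtimes are commonly used with Kubernetes: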

- containerd

- CRI-O

- Docker Engine

- Mirantis Container Runtime

The steps below use the cri-dockerd adapter to integrate Docker Engine with Kubernetes.

3.4.1 Install cri-dockerd

$ wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.2.6/cri-dockerd-0.2.6.amd64.tgz

$ tar -xf cri-dockerd-0.2.6.amd64.tgz

$ cp cri-dockerd/cri-dockerd /usr/bin/

$ chmod +x /usr/bin/cri-dockerd

3.4.2 Configure the systemd service

$ cat <<"EOF" > /usr/lib/systemd/system/cri-docker.service
[Unit]
Description=CRI Interface for Docker Application Container Engine
Documentation=https://docs.mirantis.com
After=network-online.target firewalld.service docker.service
Wants=network-online.target
Requires=cri-docker.socket

[Service]
Type=notify
ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.8
ExecReload=/bin/kill -s HUP $MAINPID
TimeoutSec=0
RestartSec=2
Restart=always
StartLimitBurst=3
StartLimitInterval=60s
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TasksMax=infinity
Delegate=yes
KillMode=process

[Install]
WantedBy=multi-user.target
EOF

The key line is the following one:

ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.8

The pause image version can be checked with kubeadm config images list:

$ kubeadm config images list

W1210 17:27:44.009895 31608 version.go:104] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get "https://cdn.dl.k8s.io/release/stable-1.txt": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

W1210 17:27:44.009935 31608 version.go:105] falling back to the local client version: v1.25.0

registry.k8s.io/kube-apiserver:v1.25.0

registry.k8s.io/kube-controller-manager:v1.25.0

registry.k8s.io/kube-scheduler:v1.25.0

registry.k8s.io/kube-proxy:v1.25.0

registry.k8s.io/pause:3.8

registry.k8s.io/etcd:3.5.4-0

registry.k8s.io/coredns/coredns:v1.9.3

3.4.3 Create the socket file

$ cat <<"EOF" > /usr/lib/systemd/system/cri-docker.socket

[Unit]

Description=CRI Docker Socket for the API

PartOf=cri-docker.service

[Socket]

ListenStream=%t/cri-dockerd.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

EOF

3.4.4 Start the cri-docker service and enable it on boot

$ systemctl daemon-reload

$ systemctl enable cri-docker

$ systemctl start cri-docker

$ systemctl is-active cri-docker

3.5 Deploy Kubernetes

Run this step on the master only; the slave node does not need to run kubeadm init.

Run kubeadm init with the following flags:

kubeadm init \
  --apiserver-advertise-address=10.220.43.203 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.25.0 \
  --service-cidr=192.168.0.0/16 \
  --pod-network-cidr=10.244.0.0/16 \
  --ignore-preflight-errors=all \
  --cri-socket unix:///var/run/cri-dockerd.sock

- --apiserver-advertise-address: the IP address of the master node.

- --pod-network-cidr=10.244.0.0/16: must match the network in the kube-flannel.yml used later, so use 10.244.0.0/16 and do not change it.
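Because the Pod CIDR has to agree with the flannel manifest, it can be worth checking the two before initializing the cluster. The following is a minimal sketch, assuming kube-flannel.yml has been downloaded to a local file and contains a "Network": "<cidr>" entry in its net-conf.json section. The helper names flannel_network and cidr_matches_flannel are illustrative and not part of kubeadm or flannel.

```shell
#!/bin/sh
# Print the Pod network CIDR declared in a local copy of kube-flannel.yml.
# Assumption: the manifest contains a line such as  "Network": "10.244.0.0/16",
flannel_network() {
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Succeed (exit status 0) when the CIDR in $1 equals the one in the manifest $2.
cidr_matches_flannel() {
    [ "$(flannel_network "$2")" = "$1" ]
}
```

For example, cidr_matches_flannel 10.244.0.0/16 ./kube-flannel.yml || echo "CIDR mismatch" prints a warning when the value passed to --pod-network-cidr differs from the manifest.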

Output of kubeadm init:

[init] Using Kubernetes version: v1.25.0

[preflight] Running pre-flight checks

[WARNING CRI]: container runtime is not running: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 runtime API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.RuntimeService"

, error: exit status 1

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/kube-apiserver:v1.25.0: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/kube-controller-manager:v1.25.0: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/kube-scheduler:v1.25.0: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/kube-proxy:v1.25.0: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/pause:3.8: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/etcd:3.5.4-0: output: time="2023-12-10T17:38:57+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[WARNING ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/coredns:v1.9.3: output: time="2023-12-10T17:38:58+08:00" level=fatal msg="validate service connection: CRI v1 image API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.ImageService"

, error: exit status 1

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master] and IPs [192.168.0.1 10.220.43.203]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master] and IPs [10.220.43.203 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master] and IPs [10.220.43.203 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 28.001898 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: 3u2q8d.u899qmv8lsm7sxyz

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.220.43.203:6443 --token 3u2q8d.u899qmv8lsm7sxyz \
  --discovery-token-ca-cert-hash sha256:d7b2a47417fbff13e11a50ae92aaa0666448a92eb4c8deaaae9e9aa5c0cbc930

Because the cluster is installed with kubeadm init, the required Docker images are downloaded when the command runs. The console often appears to hang for a long time; this is the image download in progress. You can run docker images to check whether new images have appeared.

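3.6 Test kubectl

Run on both master and slave.

After kubeadm init finishes, the console prompts you to run the following commands (they appear at the end of the output in section 3.5); run them as shown.

3.6.1 Configure kubeconfig

Run on the master: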

$ mkdir -p $HOME/.kube

$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

$ sudo chown $(id -u):$(id -g) $HOME/.kube/config

$ scp /etc/kubernetes/admin.conf 10.220.43.204:/etc/kubernetes

root@10.220.43.204's password:

admin.conf 100% 5641 19.2MB/s 00:00

Run on the slave:

$ mkdir -p $HOME/.kube

$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

$ sudo chown $(id -u):$(id -g) $HOME/.kube/config

3.6.2 Configure the environment variable

$ vim /etc/profile

# Add the following variable
export KUBECONFIG=/etc/kubernetes/admin.conf

$ source /etc/profile

3.6.3 Test the kubectl command

$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

master NotReady control-plane 21m v1.25.0 10.220.43.203 <none> Alibaba Cloud Linux (Aliyun Linux) 2.1903 LTS (Hunting Beagle) 4.19.91-27.6.al7.x86_64 docker://20.10.21

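The status is usually NotReady at first: the components may still be starting, and a node does not report Ready until a Pod network plugin has been installed (see section 3.7). Check again after a while and it should change to Ready.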
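Rather than reading the table by eye, the check can be scripted. The following is a minimal sketch, assuming kubectl is already configured as in section 3.6. The helper name not_ready_nodes is hypothetical, and it treats any STATUS other than exactly "Ready" (for example "Ready,SchedulingDisabled") as not ready.

```shell
#!/bin/sh
# Read `kubectl get nodes --no-headers` output on stdin and print the name
# of every node whose STATUS column (field 2) is not exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}
```

A typical call would be kubectl get nodes --no-headers | not_ready_nodes; an empty result means every node reports Ready.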

3.7 Install the flannel Pod network plugin

Run on both master and slave.

$ kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

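daemonset.apps/kube-flannel-ds created

Error: The connection to the server raw.githubusercontent.com was refused - did you specify the right host or port?

Cause: the overseas resource cannot be reached.

Solution: map the hostname to a reachable IP address in /etc/hosts.

$ vim /etc/hosts

# Add the following entry to /etc/hosts
199.232.28.133 raw.githubusercontent.com

Run the command above again and the installation should succeed.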
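If the hosts entry has to be added on several machines, a small idempotent helper avoids duplicate lines. This is a minimal sketch under stated assumptions: the function name add_hosts_entry is hypothetical, the file defaults to /etc/hosts, and the IP address shown above may no longer be valid for raw.githubusercontent.com, so check that it is reachable first.

```shell
#!/bin/sh
# Append "IP HOSTNAME" to a hosts file unless HOSTNAME is already mapped there.
# Usage: add_hosts_entry IP HOSTNAME [FILE]   (FILE defaults to /etc/hosts)
add_hosts_entry() {
    ip="$1"; host="$2"; file="${3:-/etc/hosts}"
    # Look for HOSTNAME as a whole field on any line that is not a comment.
    if awk -v h="$host" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
        END { exit !found }' "$file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$host" >> "$file"
}
```

Running add_hosts_entry 199.232.28.133 raw.githubusercontent.com as root adds the line once; repeated calls leave the file unchanged.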

3.8 Join the slave node to the master

This step uses the join command printed to the console in section 3.5 (Deploy Kubernetes):

kubeadm join 10.220.43.203:6443 --token 3u2q8d.u899qmv8lsm7sxyz \
  --discovery-token-ca-cert-hash sha256:d7b2a47417fbff13e11a50ae92aaa0666448a92eb4c8deaaae9e9aa5c0cbc930

The join command to run is:

kubeadm join 10.220.43.203:6443 --token 3u2q8d.u899qmv8lsm7sxyz \
  --discovery-token-ca-cert-hash sha256:d7b2a47417fbff13e11a50ae92aaa0666448a92eb4c8deaaae9e9aa5c0cbc930 \
  --ignore-preflight-errors=all \
  --cri-socket unix:///var/run/cri-dockerd.sock

- --ignore-preflight-errors=all

- --cri-socket unix:///var/run/cri-dockerd.sock

These two flags must be added; otherwise errors such as the following are reported:

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR CRI]: container runtime is not running: output: time="2023-08-31T16:42:23+08:00" level=fatal msg="validate service connection: CRI v1 runtime API is not implemented for endpoint \"unix:///var/run/cri-dockerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.RuntimeService"

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Found multiple CRI endpoints on the host. Please define which one do you wish to use by setting the 'criSocket' field in the kubeadm configuration file: unix:///var/run/containerd/containerd.sock, unix:///var/run/cri-dockerd.sock

To see the stack trace of this error execute with --v=5 or higher
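Because the printed join command never includes these two flags, appending them by hand is easy to forget. The following is a minimal sketch of a wrapper that adds them; the function name with_cri_dockerd_flags is hypothetical, and the socket path is the one used throughout this guide.

```shell
#!/bin/sh
# Print a kubeadm join command with the two extra flags appended,
# skipping any flag that the command already contains.
with_cri_dockerd_flags() {
    cmd="$1"
    case "$cmd" in
        *--ignore-preflight-errors*) ;;
        *) cmd="$cmd --ignore-preflight-errors=all" ;;
    esac
    case "$cmd" in
        *--cri-socket*) ;;
        *) cmd="$cmd --cri-socket unix:///var/run/cri-dockerd.sock" ;;
    esac
    printf '%s\n' "$cmd"
}
```

On the master, with_cri_dockerd_flags "$(kubeadm token create --print-join-command)" prints a complete command that can be pasted on the slave.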

3.9 Verification

On the master node:

$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

master Ready control-plane 49m v1.25.0 10.220.43.203 <none> Alibaba Cloud Linux (Aliyun Linux) 2.1903 LTS (Hunting Beagle) 4.19.91-27.6.al7.x86_64 docker://20.10.21

slave Ready <none> 10m v1.25.0 10.220.43.204 <none> Alibaba Cloud Linux (Aliyun Linux) 2.1903 LTS (Hunting Beagle) 4.19.91-27.6.al7.x86_64 docker://20.10.21

On the slave node:

$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

master Ready control-plane 50m v1.25.0 10.220.43.203 <none> Alibaba Cloud Linux (Aliyun Linux) 2.1903 LTS (Hunting Beagle) 4.19.91-27.6.al7.x86_64 docker://20.10.21

slave Ready <none> 11m v1.25.0 10.220.43.204 <none> Alibaba Cloud Linux (Aliyun Linux) 2.1903 LTS (Hunting Beagle) 4.19.91-27.6.al7.x86_64 docker://20.10.21

4 Common usage questions

4.1 How do I get the kubeadm join command if it was not recorded after kubeadm init?

# Just generate a new token
kubeadm token create --print-join-command

# The following command lists existing tokens
kubeadm token list

4.2 A node failed to join with kubeadm join. How do I join it again?

# Just generate a new token
kubeadm token create --print-join-command

# The following command lists existing tokens
kubeadm token list

4.3 Restart kubelet

systemctl daemon-reload
systemctl restart kubelet

4.4 Query system components

# List nodes
kubectl get nodes

# List Pods. You usually pass "-n" with a namespace; omitting it is the same as -n default
kubectl get pods -n kube-system

5 Troubleshooting

5.1 kubeadm init errors

[root@k8s centos]# kubeadm init

I1205 06:44:01.459391 12097 version.go:94] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://dl.k8s.io/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

I1205 06:44:01.459549 12097 version.go:95] falling back to the local client version: v1.13.0

[init] Using Kubernetes version: v1.13.0

[preflight] Running pre-flight checks

[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'

[WARNING Hostname]: hostname "k8s.novalocal" could not be reached

[WARNING Hostname]: hostname "k8s.novalocal": lookup k8s.novalocal on 10.32.148.99:53: no such host

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

5.1.1 Network settings

5.1.1.1 Error message

/proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

5.1.1.2 Solution

$ echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

5.1.2 Enable docker

5.1.2.1 Error message

[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'

5.1.2.2 Solution

$ systemctl enable docker.service

5.1.3 Hostname

5.1.3.1 Error message

[WARNING Hostname]: hostname "slave" could not be reached

[WARNING Hostname]: hostname "slave": lookup slave on 10.32.148.99:53: no such host

5.1.3.2 Solution

1) Change the hostname

$ hostnamectl set-hostname slave

2) Update /etc/hostname

$ echo slave > /etc/hostname

5.1.4 Enable kubelet

5.1.4.1 Error message

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

5.1.4.2 Solution

$ systemctl enable kubelet.service