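I. BP Network

1.1 Single-Layer Perceptron

A single-layer perceptron has only one layer of neurons. Its model structure is shown below [1]:

The weights $w$ are updated with the following rule: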

$$
\begin{aligned}
w_i &= w_i + \Delta w_i \\
\Delta w_i &= \eta (y - \hat{y}) x_i
\end{aligned}
\tag{1}
$$

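Here $y$ is the label, $\hat{y}$ is the model output, $\eta$ is the learning rate, and $x_i$ is the model input.

This is how the parameters of a single-layer perceptron are adjusted.

However, because a single-layer perceptron is linear, its fitting capacity is very limited: it cannot handle problems that are not linearly separable, such as XOR.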
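The update rule (1) and the XOR limitation can be tried out with a small sketch. The class name `PerceptronSketch`, the step activation, and the bias term (treated as a weight on a constant input of 1) are assumptions made for this illustration; they are not part of the original text.

```java
public class PerceptronSketch {
    static final double[][] INPUTS = {{0, 0}, {0, 1}, {1, 0}, {1, 1}};
    static final int[] AND = {0, 0, 0, 1};
    static final int[] XOR = {0, 1, 1, 0};

    // Step activation: output 1 when the weighted sum is non-negative.
    static int predict(double[] w, double bias, double[] x) {
        double sum = bias;
        for (int i = 0; i < x.length; i++) {
            sum += w[i] * x[i];
        }
        return sum >= 0 ? 1 : 0;
    }

    // Trains with rule (1) and returns how many samples are still misclassified.
    static int trainAndCountErrors(double[][] xs, int[] ys, double eta, int epochs) {
        double[] w = new double[xs[0].length];
        double bias = 0.0; // assumption: bias handled as a weight on a constant input 1
        for (int e = 0; e < epochs; e++) {
            for (int n = 0; n < xs.length; n++) {
                int diff = ys[n] - predict(w, bias, xs[n]); // (y - y_hat)
                for (int i = 0; i < w.length; i++) {
                    w[i] += eta * diff * xs[n][i];           // w_i = w_i + eta * (y - y_hat) * x_i
                }
                bias += eta * diff;
            }
        }
        int errors = 0;
        for (int n = 0; n < xs.length; n++) {
            if (predict(w, bias, xs[n]) != ys[n]) {
                errors++;
            }
        }
        return errors;
    }

    public static void main(String[] args) {
        System.out.println("AND errors: " + trainAndCountErrors(INPUTS, AND, 0.5, 100));
        System.out.println("XOR errors: " + trainAndCountErrors(INPUTS, XOR, 0.5, 100));
    }
}
```

AND is linearly separable, so the rule settles on weights that classify all four samples. For XOR no line separates the classes, so at least one sample stays misclassified however long training runs.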

1.2 Multilayer Perceptron

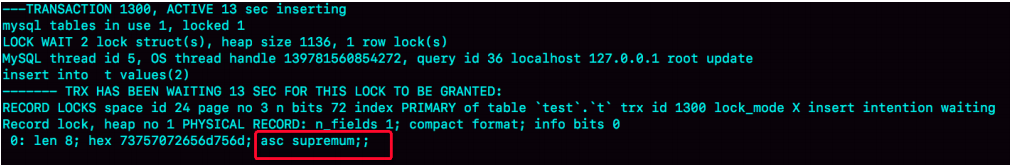

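For a multilayer perceptron, adjusting the parameters is more involved and requires the error backpropagation (BP) algorithm. The network structure is shown in the figure; here we consider only a neural network with a single hidden layer:

The network's parameters are labeled in the figure above.

Its error on the $k$-th training example is: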

$$
E_k=\frac{1}{2}\sum_{j=1}^{l}\left(\hat{y}_j^k-y_j^k\right)^2
$$

Any parameter $v$ is updated as:

$$
v = v + \Delta v
$$

For the hidden-to-output weights $w_{hj}$,

$$
\Delta w_{hj}=-\eta \frac{\partial E_k}{\partial w_{hj}}
$$

$$
\frac{\partial E_k}{\partial w_{hj}}=\frac{\partial E_k}{\partial \hat{y}_j^k} \cdot \frac{\partial \hat{y}_j^k}{\partial \beta_j} \cdot \frac{\partial \beta_j}{\partial w_{hj}}
$$

Here $\beta_j$ is the input to the $j$-th output neuron. By its definition, $\beta_j=\sum_{h=1}^{q} w_{hj} b_h$, so

$$
\frac{\partial \beta_j}{\partial w_{hj}}=b_h
$$

where $b_h$ is the output of the $h$-th hidden neuron.

Each layer passes its input through an activation function. Here we use the sigmoid function, which has a convenient property:

$$
f'(x)=f(x)(1-f(x))
$$
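The identity is easy to check numerically. The sketch below compares $f(x)(1-f(x))$ against a central finite difference; the class name `SigmoidCheck`, the sample points, and the step size `1e-5` are illustrative choices.

```java
public class SigmoidCheck {
    static double sigmoid(double x) {
        return 1.0 / (1.0 + Math.exp(-x));
    }

    // Derivative from the identity f'(x) = f(x) * (1 - f(x)).
    static double analyticDerivative(double x) {
        double f = sigmoid(x);
        return f * (1.0 - f);
    }

    // Central finite-difference approximation of f'(x).
    static double numericDerivative(double x, double h) {
        return (sigmoid(x + h) - sigmoid(x - h)) / (2.0 * h);
    }

    public static void main(String[] args) {
        for (double x : new double[] {-2.0, 0.0, 0.5, 3.0}) {
            System.out.printf("x=%.1f analytic=%.8f numeric=%.8f%n",
                    x, analyticDerivative(x), numericDerivative(x, 1e-5));
        }
    }
}
```

Because the derivative can be written in terms of the output itself, backpropagation only needs the activations already computed in the forward pass.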

It follows that:

$$
\begin{aligned}
g_j &= -\frac{\partial E_k}{\partial \hat{y}_j^k} \cdot \frac{\partial \hat{y}_j^k}{\partial \beta_j} \\
&= -(\hat{y}_j^k-y_j^k)\,f'(\beta_j-\theta_j) \\
&= \hat{y}_j^k(1-\hat{y}_j^k)(y_j^k-\hat{y}_j^k)
\end{aligned}
$$

where $\theta_j$ is the bias (threshold) of the $j$-th output neuron.

This gives the update for $w$ (note that no minus sign is needed here, because it is already included in $g_j$):

$$
\Delta w_{hj}=\eta g_j b_h
$$

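The remaining parameters are updated analogously:

$$
\begin{aligned}
\Delta \theta_j &= -\eta g_j \\
\Delta v_{ih} &= \eta e_h x_i \\
\Delta \gamma_h &= -\eta e_h
\end{aligned}
$$

where $v_{ih}$ is the weight from input $i$ to hidden neuron $h$, $\gamma_h$ is the threshold of hidden neuron $h$, and $e_h = b_h(1-b_h)\sum_{j=1}^{l} w_{hj} g_j$ is the corresponding gradient term of the hidden layer.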
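The four update rules can be put together into one training step. The following is a minimal plain-Java sketch using arrays, not the Weka implementation; the class name `BpStepSketch`, the random initialization in [0, 1), the zero initial thresholds, and the seed are assumptions made for illustration.

```java
import java.util.Random;

public class BpStepSketch {
    final int d, q, l;     // sizes of the input, hidden, and output layers
    final double[][] v;    // v[i][h]: weight from input i to hidden neuron h
    final double[][] w;    // w[h][j]: weight from hidden neuron h to output neuron j
    final double[] gamma;  // hidden-layer thresholds
    final double[] theta;  // output-layer thresholds
    double[] b;            // hidden outputs of the latest forward pass
    double[] yHat;         // network outputs of the latest forward pass

    public BpStepSketch(int d, int q, int l, long seed) {
        this.d = d;
        this.q = q;
        this.l = l;
        Random rnd = new Random(seed);
        v = new double[d][q];
        w = new double[q][l];
        gamma = new double[q]; // assumption: thresholds start at zero
        theta = new double[l];
        for (double[] row : v) for (int h = 0; h < q; h++) row[h] = rnd.nextDouble();
        for (double[] row : w) for (int j = 0; j < l; j++) row[j] = rnd.nextDouble();
    }

    static double sigmoid(double x) {
        return 1.0 / (1.0 + Math.exp(-x));
    }

    public double[] forward(double[] x) {
        b = new double[q];
        for (int h = 0; h < q; h++) {
            double alpha = 0.0;
            for (int i = 0; i < d; i++) alpha += v[i][h] * x[i];
            b[h] = sigmoid(alpha - gamma[h]);
        }
        yHat = new double[l];
        for (int j = 0; j < l; j++) {
            double beta = 0.0;
            for (int h = 0; h < q; h++) beta += w[h][j] * b[h];
            yHat[j] = sigmoid(beta - theta[j]);
        }
        return yHat;
    }

    // E_k = 1/2 * sum_j (yHat_j - y_j)^2
    public double error(double[] x, double[] y) {
        double[] out = forward(x);
        double sum = 0.0;
        for (int j = 0; j < l; j++) sum += (out[j] - y[j]) * (out[j] - y[j]);
        return sum / 2.0;
    }

    // One BP update on a single example (x, y).
    public void step(double[] x, double[] y, double eta) {
        forward(x);
        double[] g = new double[l];
        for (int j = 0; j < l; j++) g[j] = yHat[j] * (1.0 - yHat[j]) * (y[j] - yHat[j]);
        double[] e = new double[q]; // computed with the weights from before the update
        for (int h = 0; h < q; h++) {
            double s = 0.0;
            for (int j = 0; j < l; j++) s += w[h][j] * g[j];
            e[h] = b[h] * (1.0 - b[h]) * s;
        }
        for (int h = 0; h < q; h++) for (int j = 0; j < l; j++) w[h][j] += eta * g[j] * b[h];
        for (int j = 0; j < l; j++) theta[j] -= eta * g[j];
        for (int i = 0; i < d; i++) for (int h = 0; h < q; h++) v[i][h] += eta * e[h] * x[i];
        for (int h = 0; h < q; h++) gamma[h] -= eta * e[h];
    }

    public static void main(String[] args) {
        BpStepSketch net = new BpStepSketch(2, 2, 1, 42L);
        double[] x = {1.0, 0.0};
        double[] y = {1.0};
        double before = net.error(x, y);
        net.step(x, y, 0.3);
        System.out.println("error before: " + before + ", after: " + net.error(x, y));
    }
}
```

Running `main` performs a single update on one example and prints the error before and after, which should decrease for this example.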

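In the BP algorithm, assuming a 3-layer network with one input layer, one hidden layer, and one output layer, the number of parameters is $d\times q+q\times l+q+l$ (where $d$, $q$, and $l$ are the numbers of neurons in the input, hidden, and output layers). The formulas above are then applied iteratively to update all of them.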
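As a quick worked example of the count, the sketch below evaluates the expression for an illustrative network with $d=4$, $q=4$, $l=3$ (these sizes and the class name `ParamCount` are made up for the example).

```java
public class ParamCount {
    // d*q input-to-hidden weights, q*l hidden-to-output weights,
    // q hidden thresholds, and l output thresholds.
    static int count(int d, int q, int l) {
        return d * q + q * l + q + l;
    }

    public static void main(String[] args) {
        System.out.println(count(4, 4, 3)); // 16 + 12 + 4 + 3 = 35
    }
}
```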

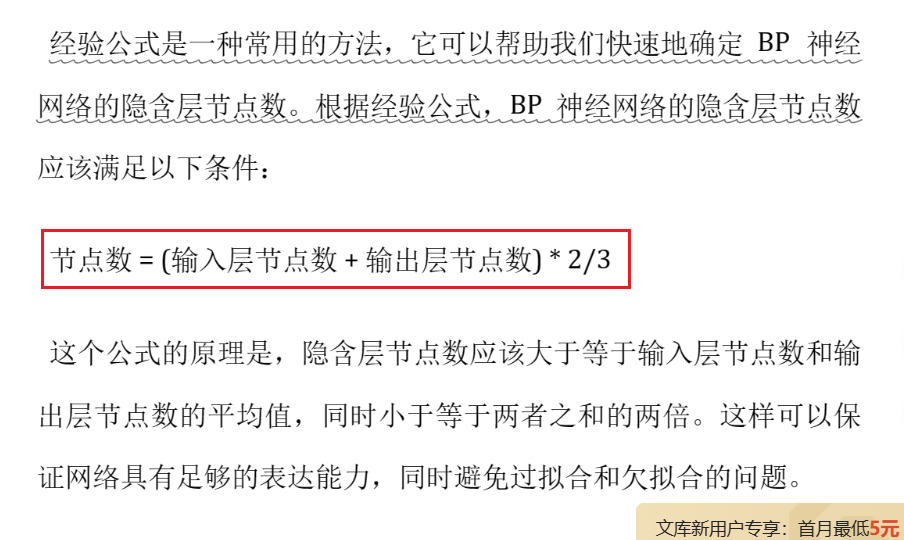

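How should the number of hidden neurons be chosen for a given dataset? Here I use an empirical formula taken from the article "bp隐含层节点数经验公式" (empirical formulas for the number of BP hidden-layer nodes).

The article gives:

$$
q=(d+l)\times \frac{2}{3}
$$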
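In code, the formula has to produce a whole number of neurons. The sketch below assumes integer arithmetic that truncates the result, which is one possible rounding choice; the class name `HiddenSize` and the sample sizes are illustrative.

```java
public class HiddenSize {
    // q = (d + l) * 2 / 3, truncated toward zero by integer division.
    static int hiddenSize(int d, int l) {
        return (d + l) * 2 / 3;
    }

    public static void main(String[] args) {
        System.out.println(hiddenSize(4, 3)); // 14 / 3 truncates to 4
        System.out.println(hiddenSize(2, 1)); // 6 / 3 = 2
    }
}
```

With very small networks the truncation matters: for $d=1$, $l=1$ the exact value 4/3 becomes a single hidden neuron.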

II. Implementation Based on Weka

    package weka.classifiers.myf;

    import weka.classifiers.Classifier;
    import weka.core.*;
    import weka.core.matrix.Matrix;
    import weka.filters.Filter;
    import weka.filters.unsupervised.attribute.NominalToBinary;

    /**
     * @author YFMan
     * @Description Custom BP neural network classifier
     * @Date 2023/6/7 11:30
     */
    public class myBPNet extends Classifier {

        /*
         * @Author YFMan
         * @Description Main entry point
         * @Date 2023/6/7 19:14
         * @Param [argv command-line arguments]
         * @return void
         **/
        public static void main(String[] argv) {
            runClassifier(new myBPNet(), argv);
        }

        // Training data
        private Instances m_instances;

        // Instance currently being trained on
        private Instance m_currentInstance;

        // Total number of training epochs
        private final int m_numEpochs;

        // Filter that converts nominal attributes to binary attributes
        // so they can be fed into the network
        private final NominalToBinary m_nominalToBinaryFilter;

        // Learning rate
        private final double m_learningRate;

        // Current training error
        private double m_error;

        // Input dimension (number of input-layer neurons)
        private int m_numInputs;

        // Hidden dimension (number of hidden-layer neurons)
        private int m_numHidden;

        // Output dimension (number of output-layer neurons)
        private int m_numOutputs;

        // Output of the input layer
        private Matrix m_input;

        // Weight matrix from the input layer to the hidden layer
        private Matrix m_weightsInputHidden;

        // Weight matrix from the hidden layer to the output layer
        private Matrix m_weightsHiddenOutput;

        // Hidden-layer thresholds
        private Matrix m_hiddenThresholds;

        // Output-layer thresholds
        private Matrix m_outputThresholds;

        // Output of the hidden layer
        private Matrix m_hiddenOutput;

        // Output of the output layer
        private Matrix m_output;

        // Labels corresponding to the output layer
        private Matrix m_labels;

        /*
         * @Author YFMan
         * @Description BP network constructor
         * @Date 2023/6/7 19:04
         * @Param []
         * @return
         **/
        public myBPNet() {
            // Training data
            m_instances = null;
            // Current instance
            m_currentInstance = null;
            // Training error
            m_error = 0;
            // Filter that converts nominal attributes to binary attributes
            m_nominalToBinaryFilter = new NominalToBinary();
            // Total number of training epochs
            m_numEpochs = 500;
            // Learning rate
            m_learningRate = .3;
        }

        /*
         * @Author YFMan
         * @Description Sigmoid function
         * @Date 2023/6/7 19:53
         * @Param [x]
         * @return double
         **/
        double sigmoid(double x) {
            return 1.0 / (1.0 + Math.exp(-x));
        }

        /*
         * @Author YFMan
         * @Description Forward propagation
         * @Date 2023/6/7 19:47
         * @Param []
         * @return void
         **/
        void forwardPropagation() {
            // Fill the input layer with the attribute values of the current instance
            for (int i = 0; i < m_numInputs; i++) {
                m_input.set(0, i, m_currentInstance.value(i));
            }
            // Compute the hidden-layer input
            m_hiddenOutput = m_input.times(m_weightsInputHidden);
            // Subtract the thresholds
            m_hiddenOutput = m_hiddenOutput.minus(m_hiddenThresholds);
            // Apply the activation function
            for (int i = 0; i < m_numHidden; i++) {
                m_hiddenOutput.set(0, i, sigmoid(m_hiddenOutput.get(0, i)));
            }
            // Compute the output-layer input
            m_output = m_hiddenOutput.times(m_weightsHiddenOutput);
            // Subtract the thresholds
            m_output = m_output.minus(m_outputThresholds);
            // Apply the activation function
            for (int i = 0; i < m_numOutputs; i++) {
                m_output.set(0, i, sigmoid(m_output.get(0, i)));
            }
        }

        /*
         * @Author YFMan
         * @Description Backpropagation
         * @Date 2023/6/7 20:20
         * @Param []
         * @return void
         **/
        void backPropagation() {
            // Fetch the labels of the current instance
            m_labels = new Matrix(1, m_numOutputs);
            for (int i = 0; i < m_numOutputs; i++) {
                // Copy the labels into a matrix (the trailing binary attributes
                // are taken to hold the class-label values)
                m_labels.set(0, i, m_currentInstance.value(m_numInputs + i));
            }

            // Compute g = m_output * (1 - m_output) * (m_labels - m_output)
            // Output-layer error term
            Matrix m_outputError = m_output.copy();
            m_outputError = m_outputError.times(-1);
            // All-ones matrix used to form 1 - m_output
            Matrix one = new Matrix(1, m_numOutputs, 1);
            m_outputError = m_outputError.plus(one);
            m_outputError = m_outputError.arrayTimes(m_output);
            m_outputError = m_outputError.arrayTimes(m_labels.minus(m_output));

            // Increment of the hidden-to-output weights:
            // deltaWeightsHiddenOutput = m_learningRate * g * b_h
            Matrix m_deltaWeightsHiddenOutput = new Matrix(m_numHidden, m_numOutputs);
            for (int h = 0; h < m_numHidden; h++) {
                for (int j = 0; j < m_numOutputs; j++) {
                    m_deltaWeightsHiddenOutput.set(h, j,
                            m_learningRate * m_outputError.get(0, j) * m_hiddenOutput.get(0, h));
                }
            }

            // Increment of the output-layer thresholds:
            // deltaOutputThresholds = - m_learningRate * g
            Matrix m_deltaOutputThresholds = m_outputError.times(-m_learningRate);

            // Hidden-layer error term:
            // hiddenError = b_h * (1 - b_h) * g * m_weightsHiddenOutput.transpose()
            Matrix one1 = new Matrix(1, m_numHidden, 1);
            Matrix m_hiddenError = m_hiddenOutput.copy();
            m_hiddenError = m_hiddenError.times(-1);
            m_hiddenError = m_hiddenError.plus(one1);
            m_hiddenError = m_hiddenError.arrayTimes(m_hiddenOutput);
            m_hiddenError = m_hiddenError.arrayTimes(
                    m_outputError.times(m_weightsHiddenOutput.transpose()));

            // Increment of the input-to-hidden weights:
            // deltaWeightsInputHidden = m_learningRate * hiddenError * x_i
            Matrix m_deltaWeightsInputHidden = new Matrix(m_numInputs, m_numHidden);
            for (int i = 0; i < m_numInputs; i++) {
                for (int h = 0; h < m_numHidden; h++) {
                    m_deltaWeightsInputHidden.set(i, h,
                            m_learningRate * m_hiddenError.get(0, h) * m_input.get(0, i));
                }
            }

            // Increment of the hidden-layer thresholds:
            // deltaHiddenThresholds = - m_learningRate * hiddenError
            Matrix m_deltaHiddenThresholds = m_hiddenError.times(-m_learningRate);

            // Update the hidden-to-output weights
            m_weightsHiddenOutput = m_weightsHiddenOutput.plus(m_deltaWeightsHiddenOutput);
            // Update the output-layer thresholds
            m_outputThresholds = m_outputThresholds.plus(m_deltaOutputThresholds);
            // Update the input-to-hidden weights
            m_weightsInputHidden = m_weightsInputHidden.plus(m_deltaWeightsInputHidden);
            // Update the hidden-layer thresholds
            m_hiddenThresholds = m_hiddenThresholds.plus(m_deltaHiddenThresholds);

            // Accumulate the training error
            m_error += calculateError();
        }

        /*
         * @Author YFMan
         * @Description Compute the training error
         * @Date 2023/6/7 21:04
         * @Param []
         * @return double
         **/
        double calculateError() {
            // Half the sum of squared differences between labels and outputs
            double error = 0;
            for (int i = 0; i < m_numOutputs; i++) {
                error += Math.pow(m_labels.get(0, i) - m_output.get(0, i), 2);
            }
            error /= 2;
            return error;
        }

        /*
         * @Author YFMan
         * @Description Train the BP network
         * @Date 2023/6/7 19:44
         * @Param []
         * @return void
         **/
        void train() {
            // Run up to m_numEpochs training epochs
            for (int i = 0; i < m_numEpochs; i++) {
                // Reset the training error
                m_error = 0;
                // Iterate over the training data
                for (int j = 0; j < m_instances.numInstances(); j++) {
                    // Fetch the current training instance
                    m_currentInstance = m_instances.instance(j);
                    // Forward propagation
                    forwardPropagation();
                    // Backpropagation
                    backPropagation();
                }
                // Print the training error
                System.out.println("Epoch " + (i + 1) + " error: " + m_error);
                // Stop once the training error drops below 0.01
                if (m_error < 0.01) {
                    break;
                }
            }
        }

        /*
         * @Author YFMan
         * @Description Train the BP network on the given training data
         * @Date 2023/6/7 19:12
         * @Param [instances training data]
         * @return void
         **/
        public void buildClassifier(Instances instances) throws Exception {
            // Training data
            m_instances = instances;
            // Configure the nominal-to-binary filter and apply it to the training data
            m_nominalToBinaryFilter.setInputFormat(m_instances);
            m_instances = Filter.useFilter(m_instances, m_nominalToBinaryFilter);
            m_numInputs = 0;
            // Walk over the attributes to determine the input and output dimensions
            for (int i = 0; i < instances.numAttributes(); i++) {
                if (i != instances.classIndex()) {
                    // Input dimension
                    if (instances.attribute(i).numValues() <= 2) {
                        m_numInputs += 1;
                    } else {
                        m_numInputs += instances.attribute(i).numValues();
                    }
                } else {
                    // Output dimension
                    if (instances.attribute(i).numValues() <= 2) {
                        m_numOutputs = 1;
                    } else {
                        m_numOutputs = instances.attribute(i).numValues();
                    }
                }
            }
            // Hidden dimension: (input dimension + output dimension) * 2 / 3
            m_numHidden = (m_numInputs + m_numOutputs) * 2 / 3;
            // Initialize the input layer
            m_input = new Matrix(1, m_numInputs);
            // Initialize the weight matrices
            m_weightsInputHidden = Matrix.random(m_numInputs, m_numHidden);
            m_weightsHiddenOutput = Matrix.random(m_numHidden, m_numOutputs);
            // Initialize the hidden-layer threshold vector
            m_hiddenThresholds = new Matrix(1, m_numHidden);
            // Initialize the output-layer threshold vector
            m_outputThresholds = new Matrix(1, m_numOutputs);
            // Training error
            m_error = 0;
            // Train
            train();
        }

        /*
         * @Author YFMan
         * @Description Classify an instance and return the probability of each class
         * @Date 2023/6/7 19:11
         * @Param [instance input instance]
         * @return double[] probability of each class
         **/
        public double[] distributionForInstance(Instance instance) throws Exception {
            // Convert the nominal attributes of the input instance to binary attributes
            m_nominalToBinaryFilter.input(instance);
            m_currentInstance = m_nominalToBinaryFilter.output();
            // Forward propagation
            forwardPropagation();
            double[] result;
            if (m_numOutputs == 1) {
                // Single output: treat it as the probability of the second class
                result = new double[2];
                result[0] = 1 - m_output.get(0, 0);
                result[1] = m_output.get(0, 0);
            } else {
                // Otherwise normalize the outputs so that they sum to 1
                result = m_output.getRowPackedCopy();
                double sum = 0;
                for (double v : result) {
                    sum += v;
                }
                for (int i = 0; i < result.length; i++) {
                    result[i] /= sum;
                }
            }
            return result;
        }
    }
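The last step of `distributionForInstance`, turning raw sigmoid outputs into a class distribution, can be isolated and tried without Weka. The class `OutputToDistribution` and its method name are hypothetical; the sketch simply mirrors the two branches of the listing on plain arrays.

```java
public class OutputToDistribution {
    // One output: interpret it as P(class 1) and return {1 - p, p}.
    // Several outputs: divide each by the sum so the result adds up to 1.
    static double[] toDistribution(double[] outputs) {
        if (outputs.length == 1) {
            return new double[] {1.0 - outputs[0], outputs[0]};
        }
        double sum = 0.0;
        for (double v : outputs) {
            sum += v;
        }
        double[] result = new double[outputs.length];
        for (int i = 0; i < outputs.length; i++) {
            result[i] = outputs[i] / sum;
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(java.util.Arrays.toString(toDistribution(new double[] {0.8})));
        System.out.println(java.util.Arrays.toString(toDistribution(new double[] {0.75, 0.5, 0.25})));
    }
}
```

Dividing by the sum is a simple normalization rather than a softmax; it keeps the ranking of the outputs and only rescales them.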

[1] Zhou Zhihua. Machine Learning (《机器学习》).
