深度学习(29)—— DETR

DETR代码欢迎光临Jane的GitHub:在这里等你

看完YOLO 之后,紧接着看了DETR。作为Transformer在物体检测上的开山之作,虽然他的性能或许不及其他的模型,但是想法是OK的。里面还有一些比较好的点

文章目录

- 深度学习(29)—— DETR

- 1. Transformer

- 2. DETR

- 2.1 背景

- 两阶段与单阶段目标检测

- Faster R-CNN

- YOLO系列

- 2.2 Knowledge Gap——DETR相比于传统算法的不同

- 2.3 DETR实现过程

- 2.4 核心代码

- 数据准备

- Transform

- 位置编码position embedding

- loss 定义

- Encoder 处理backbone得到的src特征和位置编码pos

- Decoder

- 3. 匈牙利匹配

- 4. 随手缕思路

1. Transformer

Transformer最早是谷歌提出的,目前在NLP领域依然是SOTA的,是encoder-decoder结构,encoder和decoder都是一种self-attention模块的多层叠加。详情见

2. DETR

2.1 背景

两阶段与单阶段目标检测

两阶段目标检测方法和单阶段目标检测方法是常用的目标检测算法,它们的主要区别在于目标定位和分类的方式。

- 两阶段目标检测方法: 两阶段目标检测方法首先生成一组候选框(Region Proposal),然后对每个候选框进行分类和位置回归。常见的两阶段目标检测方法包括:

- R-CNN系列:包括R-CNN、Fast R-CNN、Faster R-CNN等。这些方法使用选择性搜索等算法生成候选框,并通过卷积神经网络对每个候选框进行特征提取和分类。

- Cascade R-CNN:在基于R-CNN的方法上进一步增加了级联结构,通过多个级联分类器逐级筛选候选框,提高了检测精度。

- 单阶段目标检测方法: 单阶段目标检测方法直接在原始图像上进行分类和定位,不需要生成候选框。常见的单阶段目标检测方法包括:

- YOLO系列(You Only Look Once):包括YOLOv1、YOLOv2、YOLOv3和YOLOv4等。这些方法将整个图像划分为网格,并在每个网格上预测边界框和类别概率,然后进行非极大值抑制来得到最终的检测结果。

- RetinaNet:采用了特殊的Focal Loss函数来解决单阶段目标检测中正负样本不平衡问题,提高了对小目标的检测能力。

总结来说,两阶段目标检测方法先生成候选框再进行分类和位置回归,而单阶段目标检测方法直接在原始图像上进行分类和定位。具体选择哪种方法取决于应用需求、准确性和速度的权衡。

Faster R-CNN

Faster R-CNN(Region-based Convolutional Neural Networks)是一种用于物体检测的深度学习模型,它通过将区域生成网络(Region Proposal Network, RPN)与卷积神经网络(Convolutional Neural Network, CNN)结合起来,实现了准确且高效的目标检测。

Faster R-CNN的原理如下:

- 卷积特征提取:输入图像经过卷积神经网络(如VGGNet、ResNet等)进行前向传播,得到一个特征图。

- 区域生成网络(RPN):在特征图上滑动一个小窗口,该窗口称为锚框(anchor),对每个锚框预测两个值:1)锚框内是否包含物体;2)对应物体的边界框坐标。这样就可以生成一系列候选框。

- Roi池化:根据RPN生成的候选框,从特征图中提取固定大小的特征向量。

- 目标分类和边界框回归:将Roi池化后的特征向量输入全连接层,进行

目标分类和边界框回归。目标分类使用softmax函数输出每个类别的概率,边界框回归则用于调整候选框的位置。 - NMS(非极大值抑制):对于分类得分较高的候选框,使用非极大值抑制方法筛选出最终的检测结果。

Faster R-CNN通过将区域生成网络和目标分类回归网络结合在一起,实现了端到端的物体检测。该模型可以在保持准确性的同时提高检测速度,具有较高的实用性

YOLO系列

YOLO(You Only Look Once)的物体检测算法基于anchor设计和选择具有以下特点:

- 实时性:YOLO是一种实时物体检测算法,能够在高帧率下进行目标检测,适用于需要快速实时响应的应用场景。

- 端到端:YOLO采用单次前向传播的方式进行检测,从原始图像直接预测边界框和类别,无需使用区域生成网络或候选框筛选等复杂过程,简化了物体检测的流程。

- 全局信息:YOLO在整个图像上进行检测,将图像划分为网格,并在每个网格中预测边界框和类别概率。这样可以捕捉到全局的上下文信息,有利于处理小目标和密集目标。

- 多尺度支持:YOLO使用多层卷积特征图提取不同尺度的特征,从而可以检测不同大小的目标。通过融合多个尺度的特征,YOLO可以更好地处理多尺度的目标检测任务。

- 对多类别检测的支持:YOLO可以检测多个类别的目标,并对每个目标给出相应的类别概率。它使用softmax函数对类别进行分类,可以适应多类别的物体检测任务。

- 高效的训练和推理:YOLO具有较少的参数量,因此在训练和推理过程中消耗的计算资源相对较少。这使得YOLO可以在较低的硬件配置上实现高效的物体检测。

2.2 Knowledge Gap——DETR相比于传统算法的不同

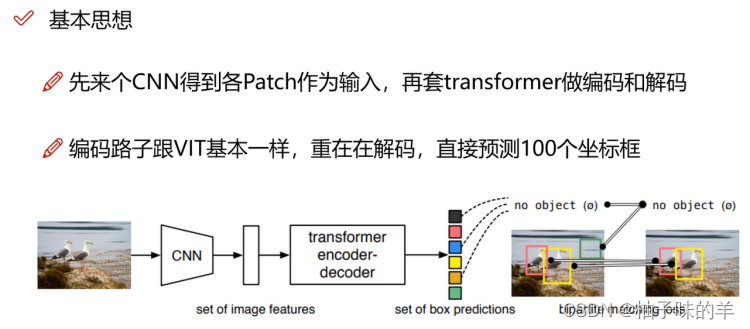

不需要候选框生成:DETR完全摒弃了传统目标检测方法中的候选框生成过程,实现了端到端的物体检测。这使得DETR在设计上更简洁,减少了额外的计算开销。【有别于单阶段,如Faster-RCNN】使用Transformer架构:DETR采用了Transformer架构来处理序列数据,将输入特征序列转换为预测序列。这种使用Transformer的方式使得DETR能够捕捉全局上下文信息,有利于处理小目标、密集目标以及多尺度问题。【开山之作】目标数量灵活:DETR可以处理任意数量的目标,因为它使用固定数量的对象查询(object queries:100)进行目标位置和类别预测。这使得DETR能够在不受限于预定义锚框或先验知识的情况下进行目标检测。【有别于yolo前期设计选择anchor】基于匈牙利算法的目标匹配:DETR采用匈牙利算法将预测的边界框与真实边界框进行匹配,以最小化匹配对的损失。这样可以建立目标与预测之间的对应关系,从而提高了检测准确性。损失函数的设计:DETR使用了两个损失函数进行训练,一个是目标分类损失 (cross-entropy loss),用于预测目标类别 ;另一个是目标框回归损失 (Smooth L1 loss),用于预测目标边界框 。这样的损失函数设计有助于模型的训练和优化。

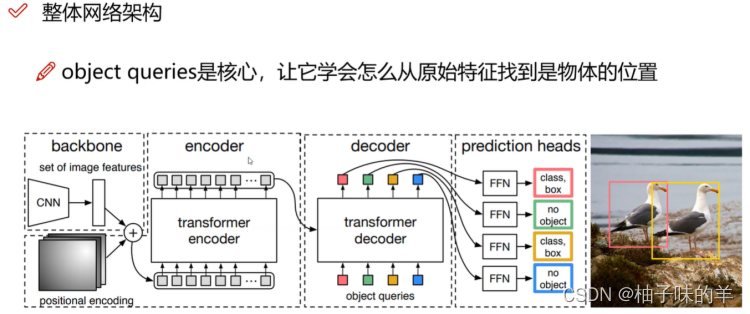

2.3 DETR实现过程

特征提取:输入图像经过卷积神经网络(如ResNet)进行特征提取得到特征图。encoder-decoder架构:encoder将特征图作为输入,并将其转换为一系列encoder特征。decoder则通过self-attention处理encoder特征并生成包含目标位置、类别和目标背景信息的特征序列。目标位置和类别预测:在decoder中,首先需要初始化一个100的object query,这个query先经过自身的self-attention得到新的query,该query与encoder生成的特征得到key和value再经过注意力机制生成预测目标的边界框位置和类别。目标匹配:使用匈牙利算法将预测的边界框与真实边界框进行匹配,以最小化匹配对的损失。这样可以建立目标与预测之间的对应关系。损失计算和训练:DETR使用了两个损失函数进行训练,一个是目标分类损失(cross-entropy loss),用于预测目标类别;另一个是目标框回归损失(Smooth L1 loss),用于预测目标边界框。通过优化这两个损失函数,训练DETR模型。

摒弃了传统的anchor,候选框和非极大值抑制等复杂的设计,实现了端到端的物体检测。它具有更简洁的设计和更高的准确性,并且可以处理任意数量的目标,适用于不同尺度和密集度的场景。

2.4 核心代码

数据准备

敲重点!DETR是在CoCo数据集上训练的,如果想在CoCo数据集上运行会非常方便。 但是!但是如果想要训练自己的数据集简单的方法只有把自己的数据格式制作成coco的那样。我这么懒,所以大家知道我一定不~ 附:CoCo【我愿称之为:搓搓】 需要梯子才能进去,但是大家如果被墙了,就网盘上搜搜应该有资源的。太大了14版本train+val都要19G,我选择退~

Transform

这个就是torchvision自带的一些数据增强方法,不想yolo-v7中那么复杂。

def make_coco_transforms(image_set):normalize = T.Compose([T.ToTensor(),T.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])])scales = [480, 512, 544, 576, 608, 640, 672, 704, 736, 768, 800]if image_set == 'train':return T.Compose([T.RandomHorizontalFlip(),T.RandomSelect(T.RandomResize(scales, max_size=1333),T.Compose([T.RandomResize([400, 500, 600]),T.RandomSizeCrop(384, 600),T.RandomResize(scales, max_size=1333),])),normalize,])if image_set == 'val':return T.Compose([T.RandomResize([800], max_size=1333),normalize,])raise ValueError(f'unknown {image_set}')

位置编码position embedding

- 正余弦

- 可学习的方法

class PositionEmbeddingSine(nn.Module):"""This is a more standard version of the position embedding, very similar to the oneused by the Attention is all you need paper, generalized to work on images."""def __init__(self, num_pos_feats=64, temperature=10000, normalize=False, scale=None):super().__init__()self.num_pos_feats = num_pos_featsself.temperature = temperatureself.normalize = normalizeif scale is not None and normalize is False:raise ValueError("normalize should be True if scale is passed")if scale is None:scale = 2 * math.piself.scale = scaledef forward(self, tensor_list: NestedTensor):x = tensor_list.tensorsprint(x.shape)mask = tensor_list.mask # mask表示每个位置是实际特征还是padding出来的print(mask.shape)assert mask is not Nonenot_mask = ~masky_embed = not_mask.cumsum(1, dtype=torch.float32) #行方向累加print(y_embed.shape)x_embed = not_mask.cumsum(2, dtype=torch.float32) #列方向累加print(x_embed.shape)if self.normalize:# 归一化eps = 1e-6y_embed = y_embed / (y_embed[:, -1:, :] + eps) * self.scalex_embed = x_embed / (x_embed[:, :, -1:] + eps) * self.scaledim_t = torch.arange(self.num_pos_feats, dtype=torch.float32, device=x.device)print(dim_t.shape)dim_t = self.temperature ** (2 * (dim_t // 2) / self.num_pos_feats) # attention is all you needprint(dim_t.shape)pos_x = x_embed[:, :, :, None] / dim_tprint(pos_x.shape)pos_y = y_embed[:, :, :, None] / dim_tprint(pos_y.shape)pos_x = torch.stack((pos_x[:, :, :, 0::2].sin(), pos_x[:, :, :, 1::2].cos()), dim=4).flatten(3)print(pos_x.shape)pos_y = torch.stack((pos_y[:, :, :, 0::2].sin(), pos_y[:, :, :, 1::2].cos()), dim=4).flatten(3)print(pos_y.shape)pos = torch.cat((pos_y, pos_x), dim=3).permute(0, 3, 1, 2)print(pos.shape)return posclass PositionEmbeddingLearned(nn.Module):"""Absolute pos embedding, learned."""def __init__(self, num_pos_feats=256):super().__init__()self.row_embed = nn.Embedding(50, num_pos_feats)self.col_embed = nn.Embedding(50, num_pos_feats)self.reset_parameters()def reset_parameters(self):nn.init.uniform_(self.row_embed.weight)nn.init.uniform_(self.col_embed.weight)def forward(self, tensor_list: NestedTensor):x = tensor_list.tensorsh, w = x.shape[-2:]i = torch.arange(w, device=x.device)j = torch.arange(h, device=x.device)x_emb = self.col_embed(i)y_emb = self.row_embed(j)pos = torch.cat([x_emb.unsqueeze(0).repeat(h, 1, 1),y_emb.unsqueeze(1).repeat(1, w, 1),], dim=-1).permute(2, 0, 1).unsqueeze(0).repeat(x.shape[0], 1, 1, 1)return pos

loss 定义

class SetCriterion(nn.Module):""" This class computes the loss for DETR.The process happens in two steps:1) we compute hungarian assignment between ground truth boxes and the outputs of the model2) we supervise each pair of matched ground-truth / prediction (supervise class and box)"""def __init__(self, num_classes, matcher, weight_dict, eos_coef, losses):""" Create the criterion.Parameters:num_classes: number of object categories, omitting the special no-object categorymatcher: module able to compute a matching between targets and proposalsweight_dict: dict containing as key the names of the losses and as values their relative weight.eos_coef: relative classification weight applied to the no-object categorylosses: list of all the losses to be applied. See get_loss for list of available losses."""super().__init__()self.num_classes = num_classesself.matcher = matcherself.weight_dict = weight_dictself.eos_coef = eos_coefself.losses = lossesempty_weight = torch.ones(self.num_classes + 1)empty_weight[-1] = self.eos_coefself.register_buffer('empty_weight', empty_weight)def loss_labels(self, outputs, targets, indices, num_boxes, log=True):"""Classification loss (NLL)targets dicts must contain the key "labels" containing a tensor of dim [nb_target_boxes]"""assert 'pred_logits' in outputssrc_logits = outputs['pred_logits']idx = self._get_src_permutation_idx(indices)target_classes_o = torch.cat([t["labels"][J] for t, (_, J) in zip(targets, indices)])target_classes = torch.full(src_logits.shape[:2], self.num_classes,dtype=torch.int64, device=src_logits.device)target_classes[idx] = target_classes_oloss_ce = F.cross_entropy(src_logits.transpose(1, 2), target_classes, self.empty_weight) #class类别损失losses = {'loss_ce': loss_ce}if log:# TODO this should probably be a separate loss, not hacked in this one herelosses['class_error'] = 100 - accuracy(src_logits[idx], target_classes_o)[0]return losses@torch.no_grad()def loss_cardinality(self, outputs, targets, indices, num_boxes):""" Compute the cardinality error, ie the absolute error in the number of predicted non-empty boxesThis is not really a loss, it is intended for logging purposes only. It doesn't propagate gradients"""pred_logits = outputs['pred_logits']device = pred_logits.devicetgt_lengths = torch.as_tensor([len(v["labels"]) for v in targets], device=device)# Count the number of predictions that are NOT "no-object" (which is the last class)card_pred = (pred_logits.argmax(-1) != pred_logits.shape[-1] - 1).sum(1)card_err = F.l1_loss(card_pred.float(), tgt_lengths.float()) #losses = {'cardinality_error': card_err}return lossesdef loss_boxes(self, outputs, targets, indices, num_boxes):"""Compute the losses related to the bounding boxes, the L1 regression loss and the GIoU losstargets dicts must contain the key "boxes" containing a tensor of dim [nb_target_boxes, 4]The target boxes are expected in format (center_x, center_y, w, h), normalized by the image size."""assert 'pred_boxes' in outputsidx = self._get_src_permutation_idx(indices)src_boxes = outputs['pred_boxes'][idx]target_boxes = torch.cat([t['boxes'][i] for t, (_, i) in zip(targets, indices)], dim=0)loss_bbox = F.l1_loss(src_boxes, target_boxes, reduction='none') # box的回归误差losses = {}losses['loss_bbox'] = loss_bbox.sum() / num_boxesloss_giou = 1 - torch.diag(box_ops.generalized_box_iou(box_ops.box_cxcywh_to_xyxy(src_boxes),box_ops.box_cxcywh_to_xyxy(target_boxes)))losses['loss_giou'] = loss_giou.sum() / num_boxesreturn lossesdef loss_masks(self, outputs, targets, indices, num_boxes):"""Compute the losses related to the masks: the focal loss and the dice loss.targets dicts must contain the key "masks" containing a tensor of dim [nb_target_boxes, h, w]"""assert "pred_masks" in outputssrc_idx = self._get_src_permutation_idx(indices)tgt_idx = self._get_tgt_permutation_idx(indices)src_masks = outputs["pred_masks"]src_masks = src_masks[src_idx]masks = [t["masks"] for t in targets]# TODO use valid to mask invalid areas due to padding in losstarget_masks, valid = nested_tensor_from_tensor_list(masks).decompose()target_masks = target_masks.to(src_masks)target_masks = target_masks[tgt_idx]# upsample predictions to the target sizesrc_masks = interpolate(src_masks[:, None], size=target_masks.shape[-2:],mode="bilinear", align_corners=False)src_masks = src_masks[:, 0].flatten(1)target_masks = target_masks.flatten(1)target_masks = target_masks.view(src_masks.shape)losses = {"loss_mask": sigmoid_focal_loss(src_masks, target_masks, num_boxes),"loss_dice": dice_loss(src_masks, target_masks, num_boxes),}return lossesdef _get_src_permutation_idx(self, indices):# permute predictions following indicesbatch_idx = torch.cat([torch.full_like(src, i) for i, (src, _) in enumerate(indices)])src_idx = torch.cat([src for (src, _) in indices])return batch_idx, src_idxdef _get_tgt_permutation_idx(self, indices):# permute targets following indicesbatch_idx = torch.cat([torch.full_like(tgt, i) for i, (_, tgt) in enumerate(indices)])tgt_idx = torch.cat([tgt for (_, tgt) in indices])return batch_idx, tgt_idxdef get_loss(self, loss, outputs, targets, indices, num_boxes, **kwargs):loss_map = {'labels': self.loss_labels,'cardinality': self.loss_cardinality,'boxes': self.loss_boxes,'masks': self.loss_masks}assert loss in loss_map, f'do you really want to compute {loss} loss?'return loss_map[loss](outputs, targets, indices, num_boxes, **kwargs)def forward(self, outputs, targets):""" This performs the loss computation.Parameters:outputs: dict of tensors, see the output specification of the model for the formattargets: list of dicts, such that len(targets) == batch_size.The expected keys in each dict depends on the losses applied, see each loss' doc"""outputs_without_aux = {k: v for k, v in outputs.items() if k != 'aux_outputs'}# Retrieve the matching between the outputs of the last layer and the targetsindices = self.matcher(outputs_without_aux, targets) # 匈牙利匹配# Compute the average number of target boxes accross all nodes, for normalization purposesnum_boxes = sum(len(t["labels"]) for t in targets)num_boxes = torch.as_tensor([num_boxes], dtype=torch.float, device=next(iter(outputs.values())).device)if is_dist_avail_and_initialized():torch.distributed.all_reduce(num_boxes)num_boxes = torch.clamp(num_boxes / get_world_size(), min=1).item()# Compute all the requested losseslosses = {}for loss in self.losses:losses.update(self.get_loss(loss, outputs, targets, indices, num_boxes))# In case of auxiliary losses, we repeat this process with the output of each intermediate layer.if 'aux_outputs' in outputs:for i, aux_outputs in enumerate(outputs['aux_outputs']):indices = self.matcher(aux_outputs, targets)for loss in self.losses:if loss == 'masks':# Intermediate masks losses are too costly to compute, we ignore them.continuekwargs = {}if loss == 'labels':# Logging is enabled only for the last layerkwargs = {'log': False}l_dict = self.get_loss(loss, aux_outputs, targets, indices, num_boxes, **kwargs)l_dict = {k + f'_{i}': v for k, v in l_dict.items()}losses.update(l_dict)return losses

Encoder 处理backbone得到的src特征和位置编码pos

def forward_post(self,src,src_mask: Optional[Tensor] = None,src_key_padding_mask: Optional[Tensor] = None,pos: Optional[Tensor] = None):q = k = self.with_pos_embed(src, pos) #只有K和Q 加入了位置编码;并没有对V做print(q.shape)src2 = self.self_attn(q, k, value=src, attn_mask=src_mask,key_padding_mask=src_key_padding_mask)[0] #两个返回值:自注意力层的输出,自注意力权重;只需要第一个print(src2.shape)src = src + self.dropout1(src2)src = self.norm1(src)src2 = self.linear2(self.dropout(self.activation(self.linear1(src))))print(src2.shape)src = src + self.dropout2(src2)src = self.norm2(src)print(src.shape)return src

Decoder

def forward_post(self, tgt, memory, tgt_mask: Optional[Tensor] = None,memory_mask: Optional[Tensor] = None,tgt_key_padding_mask: Optional[Tensor] = None,memory_key_padding_mask: Optional[Tensor] = None,pos: Optional[Tensor] = None,query_pos: Optional[Tensor] = None):q = k = self.with_pos_embed(tgt, query_pos) # tgt是自己定义的query,第一轮全部为0print(q.shape)tgt2 = self.self_attn(q, k, value=tgt, attn_mask=tgt_mask,key_padding_mask=tgt_key_padding_mask)[0] #query先做self-attention print(tgt2.shape)tgt = tgt + self.dropout1(tgt2)tgt = self.norm1(tgt)print(memory.shape) #memory是encoder得到的做完self-attention和positionembedding的特征tgt2 = self.multihead_attn(query=self.with_pos_embed(tgt, query_pos), #encoder得到的特征作为key和value和decoder定义的query做注意力机制key=self.with_pos_embed(memory, pos),value=memory, attn_mask=memory_mask,key_padding_mask=memory_key_padding_mask)[0]print(tgt2.shape)tgt = tgt + self.dropout2(tgt2)tgt = self.norm2(tgt)tgt2 = self.linear2(self.dropout(self.activation(self.linear1(tgt))))print(tgt2.shape)tgt = tgt + self.dropout3(tgt2)tgt = self.norm3(tgt)print(tgt.shape)return tgt

3. 匈牙利匹配

匈牙利匹配是一种用于解决二分图最大匹配问题的算法。使用步骤:

- 构建二分图:将问题转化为二分图的形式,其中左侧顶点集合表示匹配的起始点,右侧顶点集合表示匹配的目标点,边表示两个顶点之间的可匹配关系。

- 初始化匹配状态:将每个顶点的匹配状态初始化为未匹配。

- 遍历起始点集合:从左侧顶点集合的每一个未匹配顶点开始,尝试进行增广路径的查找。

- 增广路径查找:对于当前的起始点,通过遍历与其相邻的未匹配目标点,如果发现一个未匹配目标点,则将其匹配到当前的起始点,并递归地继续查找增广路径。

- 匹配成功更新:如果在查找增广路径时找到了增广路径,就将路径上的所有边进行翻转操作(即原来的匹配边变成非匹配边,原来的非匹配边变成匹配边),同时将路径上的起始点和目标点的匹配状态更新为已匹配。

- 终止条件判断:如果在遍历起始点时没有找到任何增广路径,说明当前匹配状态已经是最大匹配了,算法结束。

- 输出结果:根据匹配状态确定最大匹配的边集合或者顶点集合。

通过不断查找增广路径来逐步增加二分图的匹配数量,直到无法再找到增广路径为止,从而得到最大匹配。

class HungarianMatcher(nn.Module):"""This class computes an assignment between the targets and the predictions of the networkFor efficiency reasons, the targets don't include the no_object. Because of this, in general,there are more predictions than targets. In this case, we do a 1-to-1 matching of the best predictions,while the others are un-matched (and thus treated as non-objects)."""def __init__(self, cost_class: float = 1, cost_bbox: float = 1, cost_giou: float = 1):"""Creates the matcherParams:cost_class: This is the relative weight of the classification error in the matching costcost_bbox: This is the relative weight of the L1 error of the bounding box coordinates in the matching costcost_giou: This is the relative weight of the giou loss of the bounding box in the matching cost"""super().__init__()self.cost_class = cost_classself.cost_bbox = cost_bboxself.cost_giou = cost_giouassert cost_class != 0 or cost_bbox != 0 or cost_giou != 0, "all costs cant be 0"@torch.no_grad()def forward(self, outputs, targets):""" Performs the matchingParams:outputs: This is a dict that contains at least these entries:"pred_logits": Tensor of dim [batch_size, num_queries, num_classes] with the classification logits"pred_boxes": Tensor of dim [batch_size, num_queries, 4] with the predicted box coordinatestargets: This is a list of targets (len(targets) = batch_size), where each target is a dict containing:"labels": Tensor of dim [num_target_boxes] (where num_target_boxes is the number of ground-truthobjects in the target) containing the class labels"boxes": Tensor of dim [num_target_boxes, 4] containing the target box coordinatesReturns:A list of size batch_size, containing tuples of (index_i, index_j) where:- index_i is the indices of the selected predictions (in order)- index_j is the indices of the corresponding selected targets (in order)For each batch element, it holds:len(index_i) = len(index_j) = min(num_queries, num_target_boxes)"""bs, num_queries = outputs["pred_logits"].shape[:2]# We flatten to compute the cost matrices in a batchout_prob = outputs["pred_logits"].flatten(0, 1).softmax(-1) # [batch_size * num_queries, num_classes]out_bbox = outputs["pred_boxes"].flatten(0, 1) # [batch_size * num_queries, 4]# Also concat the target labels and boxestgt_ids = torch.cat([v["labels"] for v in targets])tgt_bbox = torch.cat([v["boxes"] for v in targets])# Compute the classification cost. Contrary to the loss, we don't use the NLL,# but approximate it in 1 - proba[target class].# The 1 is a constant that doesn't change the matching, it can be ommitted.cost_class = -out_prob[:, tgt_ids]# Compute the L1 cost between boxescost_bbox = torch.cdist(out_bbox, tgt_bbox, p=1)# Compute the giou cost betwen boxescost_giou = -generalized_box_iou(box_cxcywh_to_xyxy(out_bbox), box_cxcywh_to_xyxy(tgt_bbox))# Final cost matrixC = self.cost_bbox * cost_bbox + self.cost_class * cost_class + self.cost_giou * cost_giouC = C.view(bs, num_queries, -1).cpu()sizes = [len(v["boxes"]) for v in targets]indices = [linear_sum_assignment(c[i]) for i, c in enumerate(C.split(sizes, -1))]return [(torch.as_tensor(i, dtype=torch.int64), torch.as_tensor(j, dtype=torch.int64)) for i, j in indices]4. 随手缕思路

-

main

-

build_model

-

build

- build_backbone

- build_transformer

- DETR

- build_matcher

- criterion

- postprocessors

-

train(DETR.forward)

- nested_tensor_from_tensor_list

- backbone得到特征和position

- transformer

- 得到pred_logits,pred_boxes

886,回家做饭咯~

![C国演义 [第十二章]](https://img-blog.csdnimg.cn/ef799b9f95114cb197c9eb1e1143b2e6.png)