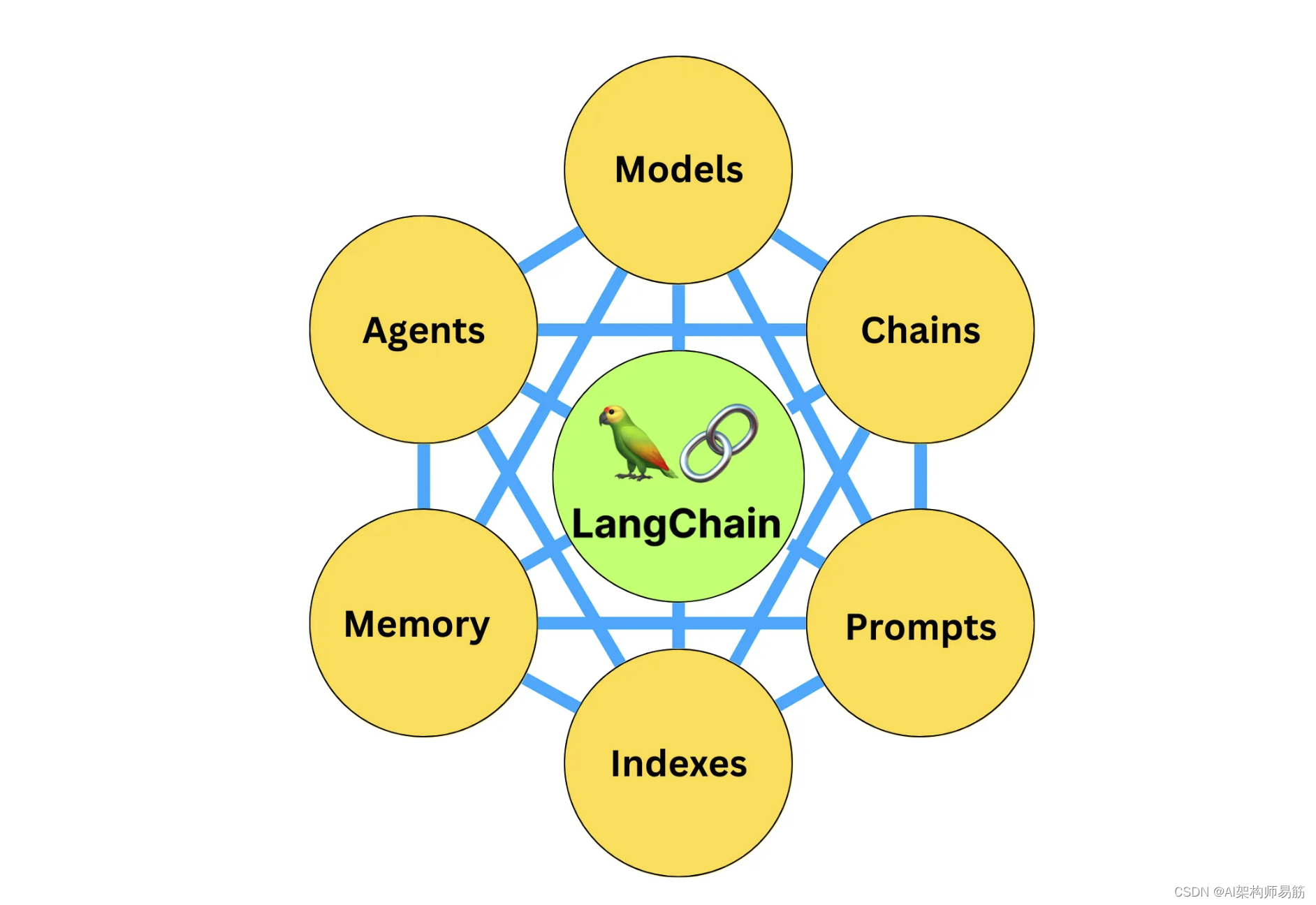

LangChain系列文章

- LangChain 60 深入理解LangChain 表达式语言23 multiple chains链透传参数 LangChain Expression Language (LCEL)

- LangChain 61 深入理解LangChain 表达式语言24 multiple chains链透传参数 LangChain Expression Language (LCEL)

- LangChain 62 深入理解LangChain 表达式语言25 agents代理 LangChain Expression Language (LCEL)

- LangChain 63 深入理解LangChain 表达式语言26 生成代码code并执行 LangChain Expression Language (LCEL)

- LangChain 64 深入理解LangChain 表达式语言27 添加审查 Moderation LangChain Expression Language (LCEL)

- LangChain 65 深入理解LangChain 表达式语言28 余弦相似度Router Moderation LangChain Expression Language (LCEL)

- LangChain 66 深入理解LangChain 表达式语言29 管理prompt提示窗口大小 LangChain Expression Language (LCEL)

- LangChain 67 深入理解LangChain 表达式语言30 调用tools搜索引擎 LangChain Expression Language (LCEL)

- LangChain 68 LLM Deployment大语言模型部署方案

- LangChain 69 向量数据库Pinecone入门

- LangChain 70 Evaluation 评估、衡量在多样化数据上的性能和完整性

1. 字符串评估器String Evaluation

字符串评估器是LangChain内的一个组件,旨在通过将语言模型生成的输出(预测)与参考字符串或输入进行比较,来评估语言模型的性能。这种比较是评估语言模型的关键步骤,为生成文本的准确性或质量提供了衡量标准。

在实践中,字符串评估器通常用于评估预测字符串与给定输入(如问题或提示)的一致性。通常会提供参考标签或上下文字符串,以定义正确或理想回应的外观。这些评估器可以根据您的应用程序的具体需求进行定制。

要创建自定义字符串评估器,请继承StringEvaluator类并实现_evaluate_strings方法。如果您需要异步支持,还应实现_aevaluate_strings方法。

以下是与字符串评估器相关的关键属性和方法的总结:

evaluation_name评估名称:指定评估的名称。requires_input必要输入:布尔属性,用于指示评估器是否需要输入字符串。如果为真,当未提供输入时,评估器将抛出错误。如果为假,如果提供了输入,则会记录警告,表明输入在评估中不会被考虑。requires_reference需要参考:布尔属性,用于指定评估器是否需要参考标签。如果为真,当未提供参考时,评估器将抛出错误。如果为假,如果提供了参考,则会记录警告,表明参考在评估中不会被考虑。

字符串评估器还实现了以下方法:

aevaluate_strings异步评估字符串:异步评估链或语言模型的输出,支持可选的输入和标签。evaluate_strings同步评估字符串:同步评估链或语言模型的输出,支持可选的输入和标签。

以下部分提供了关于可用的字符串评估器实现以及如何创建自定义字符串评估器的详细信息。

2. 标准评估 Criteria Evaluation

在您希望使用特定评分标准或标准集来评估模型输出的场景中,标准评估器是一个非常实用的工具。它可以帮助您检查LLM或Chain的输出是否符合定义的一套标准。

要深入了解其功能和可配置性,请参阅CriteriaEvalChain类的参考文档。

3. 使用CriteriaEvalChain无需参考资料 Usage without references

在这个例子中,你将使用CriteriaEvalChain来检查一个输出是否简洁。首先,创建评估链以预测输出是否“简洁”。

from langchain.evaluation import load_evaluatorfrom dotenv import load_dotenv # 导入从 .env 文件加载环境变量的函数

load_dotenv() # 调用函数实际加载环境变量from langchain.globals import set_debug # 导入在 langchain 中设置调试模式的函数

set_debug(True) # 启用 langchain 的调试模式# from langchain.evaluation import load_evaluator

# evaluator = load_evaluator("criteria", criteria="conciseness")# This is equivalent to loading using the enum

from langchain.evaluation import EvaluatorType

evaluator = load_evaluator(EvaluatorType.CRITERIA, criteria="conciseness")eval_result = evaluator.evaluate_strings(prediction="What's 2+2? That's an elementary question. The answer you're looking for is that two and two is four.",input="What's 2+2?",

)

print('eval_result >> ', eval_result)

3.1 输出格式

所有字符串评估器都暴露了一个 evaluate_strings(或 async aevaluate_strings)方法,该方法接受:

- 输入input (str)- 发送给agent代理的输入。

- 预测 prediction(str)- 预测的回应。

评估器返回包含以下值的字典:- 分数:二进制整数0到1,其中1意味着输出符合标准,0则相反 - 值:对应分数的“Y”或“N” - 推理:从LLM生成的“思维链条推理”字符串,在创建分数之前产生。

输出

(.venv) ~/Workspace/LLM/langchain-llm-app/ [develop*] python Evaluate/criteria.py ⏎

[chain/start] [1:chain:CriteriaEvalChain] Entering Chain run with input:

{"input": "What's 2+2?","output": "What's 2+2? That's an elementary question. The answer you're looking for is that two and two is four."

}

[llm/start] [1:chain:CriteriaEvalChain > 2:llm:ChatOpenAI] Entering LLM run with input:

{"prompts": ["Human: You are assessing a submitted answer on a given task or input based on a set of criteria. Here is the data:\n[BEGIN DATA]\n***\n[Input]: What's 2+2?\n***\n[Submission]: What's 2+2? That's an elementary question. The answer you're looking for is that two and two is four.\n***\n[Criteria]: conciseness: Is the submission concise and to the point?\n***\n[END DATA]\nDoes the submission meet the Criteria? First, write out in a step by step manner your reasoning about each criterion to be sure that your conclusion is correct. Avoid simply stating the correct answers at the outset. Then print only the single character \"Y\" or \"N\" (without quotes or punctuation) on its own line corresponding to the correct answer of whether the submission meets all criteria. At the end, repeat just the letter again by itself on a new line."]

}

[llm/end] [1:chain:CriteriaEvalChain > 2:llm:ChatOpenAI] [7.17s] Exiting LLM run with output:

{"generations": [[{"text": "The criterion to evaluate the submission is \"conciseness\". This requires the answer to be brief, to the point, and without unnecessary information or explanation.\n\nAssessing the submission, the responder did not solely provide the answer. The submission included additional commentary: \"That's an elementary question.\" This part of the response is not integral to answering the question and thus adds unnecessary length and detail.\n\nFurthermore, the phrase, \"The answer you're looking for is\" also adds unneeded length to the answer. A more concise response would simply state the answer: \"four\".\n\nConsidering these points, the submission does not meet the criterion of conciseness, as it contains unnecessary extraneous detail and is not as brief as it could be.\n\nN\nN","generation_info": {"finish_reason": "stop","logprobs": null},"type": "ChatGeneration","message": {"lc": 1,"type": "constructor","id": ["langchain","schema","messages","AIMessage"],"kwargs": {"content": "The criterion to evaluate the submission is \"conciseness\". This requires the answer to be brief, to the point, and without unnecessary information or explanation.\n\nAssessing the submission, the responder did not solely provide the answer. The submission included additional commentary: \"That's an elementary question.\" This part of the response is not integral to answering the question and thus adds unnecessary length and detail.\n\nFurthermore, the phrase, \"The answer you're looking for is\" also adds unneeded length to the answer. A more concise response would simply state the answer: \"four\".\n\nConsidering these points, the submission does not meet the criterion of conciseness, as it contains unnecessary extraneous detail and is not as brief as it could be.\n\nN\nN","additional_kwargs": {}}}}]],"llm_output": {"token_usage": {"completion_tokens": 151,"prompt_tokens": 192,"total_tokens": 343},"model_name": "gpt-4","system_fingerprint": null},"run": null

}

[chain/end] [1:chain:CriteriaEvalChain] [7.18s] Exiting Chain run with output:

{"results": {"reasoning": "The criterion to evaluate the submission is \"conciseness\". This requires the answer to be brief, to the point, and without unnecessary information or explanation.\n\nAssessing the submission, the responder did not solely provide the answer. The submission included additional commentary: \"That's an elementary question.\" This part of the response is not integral to answering the question and thus adds unnecessary length and detail.\n\nFurthermore, the phrase, \"The answer you're looking for is\" also adds unneeded length to the answer. A more concise response would simply state the answer: \"four\".\n\nConsidering these points, the submission does not meet the criterion of conciseness, as it contains unnecessary extraneous detail and is not as brief as it could be.\n\nN","value": "N","score": 0}

}

eval_result >> {'reasoning': 'The criterion to evaluate the submission is "conciseness". This requires the answer to be brief, to the point, and without unnecessary information or explanation.\n\nAssessing the submission, the responder did not solely provide the answer. The submission included additional commentary: "That\'s an elementary question." This part of the response is not integral to answering the question and thus adds unnecessary length and detail.\n\nFurthermore, the phrase, "The answer you\'re looking for is" also adds unneeded length to the answer. A more concise response would simply state the answer: "four".\n\nConsidering these points, the submission does not meet the criterion of conciseness, as it contains unnecessary extraneous detail and is not as brief as it could be.\n\nN', 'value': 'N', 'score': 0}

代码

https://github.com/zgpeace/pets-name-langchain/tree/develop

参考

https://python.langchain.com/docs/guides/evaluation/string/criteria_eval_chain