FFmpeg hardware decoding

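FFmpeg hardware decoding can be done with the recent Vulkan support. Its main advantage is that it is not tied to a particular operating system. Using CUDA directly is also a very good choice.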

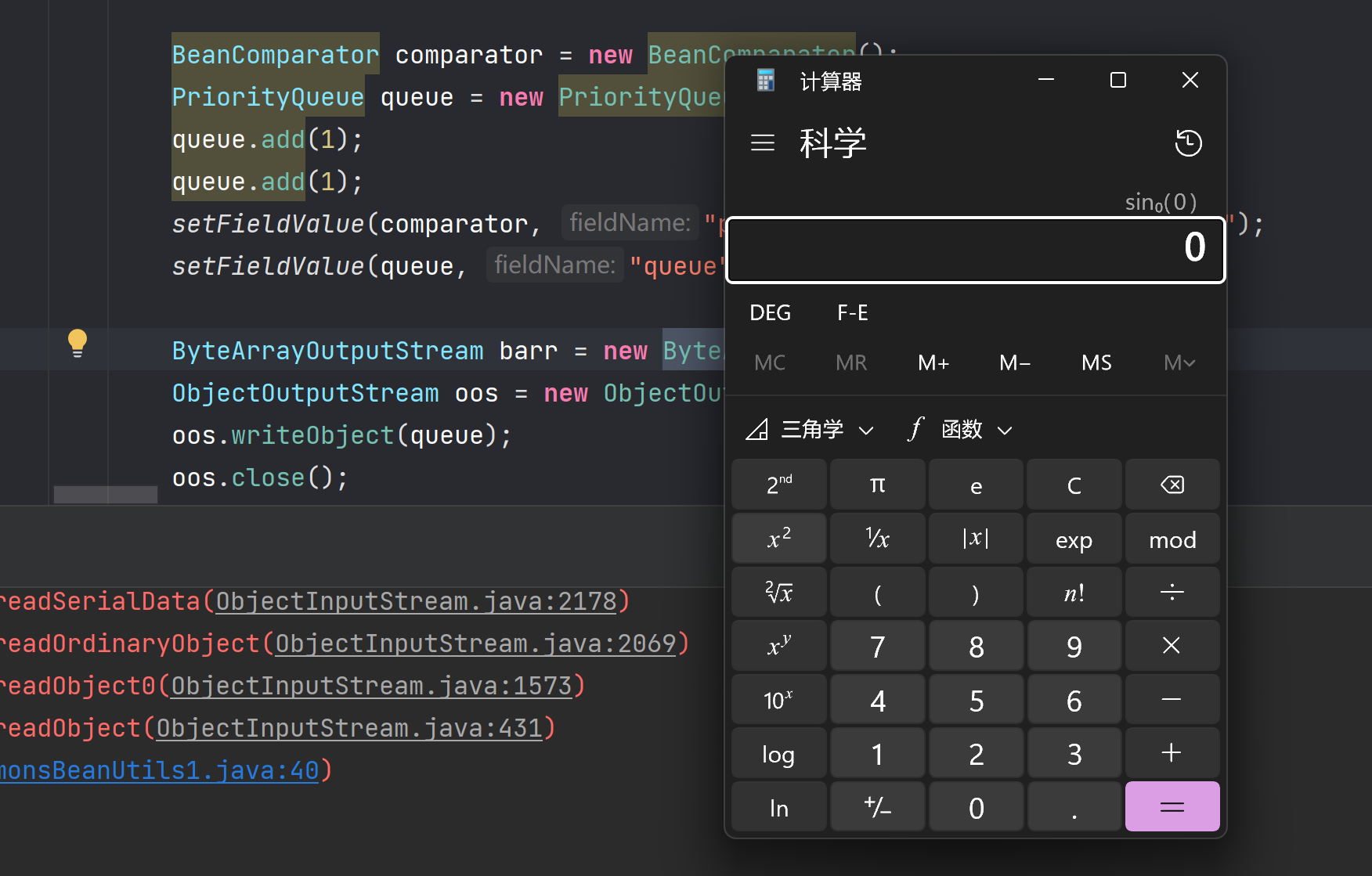

    AVPixelFormat sourcepf = AV_PIX_FMT_NV12;   // or AV_PIX_FMT_YUV420P
    AVPixelFormat destpf = AV_PIX_FMT_YUV420P;  // or AV_PIX_FMT_BGR24
    AVBufferRef* hw_device_ctx = NULL;
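The two pixel formats named here carry the same 4:2:0 samples and differ only in chroma layout: NV12 stores a Y plane followed by a single interleaved UVUV... plane, while YUV420P stores Y, U and V as three separate planes. As a rough illustration of that difference (not the conversion path the article uses, which goes through `sws_scale`), the sketch below splits a tightly packed NV12 buffer into planes. `Yuv420pImage` and `nv12_to_yuv420p` are hypothetical names, and the code assumes even dimensions and no row padding.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Planar YUV 4:2:0 image: separate Y, U and V planes (the YUV420P layout).
struct Yuv420pImage {
    int width = 0;
    int height = 0;
    std::vector<uint8_t> y;  // width * height samples
    std::vector<uint8_t> u;  // (width / 2) * (height / 2) samples
    std::vector<uint8_t> v;  // (width / 2) * (height / 2) samples
};

// Hypothetical helper: de-interleave a tightly packed NV12 buffer.
// Assumes even width/height and no padding at the end of each row.
Yuv420pImage nv12_to_yuv420p(const std::vector<uint8_t>& nv12, int width, int height) {
    Yuv420pImage out;
    out.width = width;
    out.height = height;
    const std::size_t luma = static_cast<std::size_t>(width) * height;
    const std::size_t chroma = luma / 4;

    // The Y plane is identical in both layouts.
    out.y.assign(nv12.begin(), nv12.begin() + luma);

    // NV12 chroma is U0 V0 U1 V1 ...; split it into two planes.
    out.u.resize(chroma);
    out.v.resize(chroma);
    for (std::size_t i = 0; i < chroma; ++i) {
        out.u[i] = nv12[luma + 2 * i];
        out.v[i] = nv12[luma + 2 * i + 1];
    }
    return out;
}
```

Real decoder output usually has per-row padding (`linesize` larger than the width), so production code should copy row by row or leave the job to libswscale.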

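The class below wraps some of this functionality. It is only an example, and readers are free to modify it. It reads an RTSP stream; the plan is to push the stream to GB28181 later.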

    class c_AVDecoder : public TThreadRunable
    {
        // NOTE: hw_pix_fmt, get_hw_format() and rtspurl are used below but are not part
        // of this listing; they are assumed to be defined elsewhere (hw_pix_fmt and
        // get_hw_format as in FFmpeg's hw_decode example).
        AVPixelFormat sourcepf = AV_PIX_FMT_NV12;   // or AV_PIX_FMT_YUV420P
        AVPixelFormat destpf = AV_PIX_FMT_YUV420P;  // or AV_PIX_FMT_BGR24
        AVBufferRef* hw_device_ctx = NULL;
        SDLDraw v_draw;

        int hw_decoder_init(AVCodecContext* ctx, const enum AVHWDeviceType type)
        {
            int err = 0;
            if ((err = av_hwdevice_ctx_create(&hw_device_ctx, type, NULL, NULL, 0)) < 0) {
                fprintf(stderr, "Failed to create specified HW device.\n");
                return err;
            }
            ctx->hw_device_ctx = av_buffer_ref(hw_device_ctx);
            return err;
        }

        //SDLDraw g_draw;
        struct SwsContext* img_convert_ctx = NULL;
        AVFormatContext* input_ctx = NULL;
        int video_stream, ret;
        AVStream* video = NULL;
        AVCodecContext* decoder_ctx = NULL;
        AVCodec* decoder = NULL;  // FFmpeg 5.0 and later expect const AVCodec* here
        AVPacket packet;
        enum AVHWDeviceType type;

        // Convert to YUV420 or RGB
        int decode_write(AVCodecContext* avctx, AVPacket* packet)
        {
            AVFrame* frame = NULL, * sw_frame = NULL;
            AVFrame* tmp_frame = NULL;
            unsigned char* out_buffer = NULL;
            int ret = 0;

            ret = avcodec_send_packet(avctx, packet);
            if (ret < 0) {
                fprintf(stderr, "Error during decoding\n");
                return ret;
            }
            if (img_convert_ctx == NULL) {
                img_convert_ctx = sws_getContext(avctx->width, avctx->height, sourcepf,
                    WIDTH, HEIGHT, destpf, SWS_FAST_BILINEAR, NULL, NULL, NULL);
            }
            static int i = 0;
            while (1) {
                // Declared before any goto so that no jump skips an initialization.
                AVFrame* pFrameDst = NULL;

                if (!(frame = av_frame_alloc()) || !(sw_frame = av_frame_alloc())) {
                    fprintf(stderr, "Can not alloc frame\n");
                    ret = AVERROR(ENOMEM);
                    goto fail;
                }
                //avctx->get_buffer2
                ret = avcodec_receive_frame(avctx, frame);
                if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF) {
                    av_frame_free(&frame);
                    av_frame_free(&sw_frame);
                    return 0;
                }
                else if (ret < 0) {
                    fprintf(stderr, "Error while decoding\n");
                    goto fail;
                }
                if (frame->format == hw_pix_fmt) {
                    /* retrieve data from GPU to CPU */
                    sw_frame->format = sourcepf;  // AV_PIX_FMT_NV12, AV_PIX_FMT_YUV420P or AV_PIX_FMT_BGR24
                    if ((ret = av_hwframe_transfer_data(sw_frame, frame, 0)) < 0) {
                        fprintf(stderr, "Error transferring the data to system memory\n");
                        goto fail;
                    }
                    //av_frame_copy_props(sw_frame, frame);
                    tmp_frame = sw_frame;
                }
                else
                    tmp_frame = frame;
    #if 0
                if (out_buffer == NULL) {
                    out_buffer = (unsigned char*)av_malloc(av_image_get_buffer_size(destpf, WIDTH, HEIGHT, 1));
                    //av_image_alloc()
                    av_image_fill_arrays(pFrameDst.data, pFrameDst.linesize, out_buffer, destpf, WIDTH, HEIGHT, 1);
                }
    #endif
                pFrameDst = av_frame_alloc();
                av_image_alloc(pFrameDst->data, pFrameDst->linesize, WIDTH, HEIGHT, destpf, 1);

                // Scale and convert the decoded frame into the destination format.
                sws_scale(img_convert_ctx, tmp_frame->data, tmp_frame->linesize,
                    0, avctx->height, pFrameDst->data, pFrameDst->linesize);
                //cout << "into " << i++ << endl;

                // Hand the frame to the renderer; free it here if the queue is full.
                if (!v_draw.push(pFrameDst)) {
                    av_freep(&pFrameDst->data[0]);
                    av_frame_free(&pFrameDst);
                }
    #if 0
                size = av_image_get_buffer_size((AVPixelFormat)tmp_frame->format, tmp_frame->width,
                    tmp_frame->height, 1);
                buffer = (uint8_t*)av_malloc(size);
                if (!buffer) {
                    fprintf(stderr, "Can not alloc buffer\n");
                    ret = AVERROR(ENOMEM);
                    goto fail;
                }
                ret = av_image_copy_to_buffer(buffer, size,
                    (const uint8_t* const*)tmp_frame->data,
                    (const int*)tmp_frame->linesize, (AVPixelFormat)tmp_frame->format,
                    tmp_frame->width, tmp_frame->height, 1);
                if (ret < 0) {
                    fprintf(stderr, "Can not copy image to buffer\n");
                    goto fail;
                }
                if ((ret = (int)fwrite(buffer, 1, size, output_file)) < 0) {
                    fprintf(stderr, "Failed to dump raw data.\n");
                    goto fail;
                }
    #endif
            fail:
                av_frame_free(&frame);
                av_frame_free(&sw_frame);
                //av_freep(&buffer);
                if (ret < 0)
                    return ret;
            }
        }

        int func_init(const char* url)
        {
            const char* stype = "dxva2";
            type = av_hwdevice_find_type_by_name(stype);
            if (type == AV_HWDEVICE_TYPE_NONE) {
                fprintf(stderr, "Device type %s is not supported.\n", stype);
                fprintf(stderr, "Available device types:");
                while ((type = av_hwdevice_iterate_types(type)) != AV_HWDEVICE_TYPE_NONE)
                    fprintf(stderr, " %s", av_hwdevice_get_type_name(type));
                fprintf(stderr, "\n");
                return -1;
            }

            /* open the input file */
            //const char * filename = "h:/video/a.mp4";
            AVDictionary* opts = NULL;
            av_dict_set(&opts, "rtsp_transport", "tcp", 0);
            av_dict_set(&opts, "buffer_size", "1048576", 0);
            av_dict_set(&opts, "fpsprobesize", "5", 0);
            av_dict_set(&opts, "analyzeduration", "5000000", 0);
            int open_ret = avformat_open_input(&input_ctx, url, NULL, &opts);
            av_dict_free(&opts);  // release any options that were not consumed
            if (open_ret != 0) {
                fprintf(stderr, "Cannot open input file '%s'\n", url);
                return -1;
            }
            if (avformat_find_stream_info(input_ctx, NULL) < 0) {
                fprintf(stderr, "Cannot find input stream information.\n");
                return -1;
            }

            /* find the video stream information */
            ret = av_find_best_stream(input_ctx, AVMEDIA_TYPE_VIDEO, -1, -1, &decoder, 0);
            if (ret < 0) {
                fprintf(stderr, "Cannot find a video stream in the input file\n");
                return -1;
            }
            video_stream = ret;
            AVStream* stream = input_ctx->streams[video_stream];
            // Frames per second. The original divided num by den as integers, which
            // truncates (30000/1001 becomes 29) and divides by zero when den is 0.
            // The 25 fps fallback is an arbitrary assumption.
            double frame_rate = (stream->avg_frame_rate.num > 0 && stream->avg_frame_rate.den > 0)
                ? av_q2d(stream->avg_frame_rate) : 25.0;
            std::cout << "frame_rate is:" << frame_rate << std::endl;

            // Optimization: the hardware pixel format is hard-coded instead of probed.
            /*for (int i = 0;; i++) {
                const AVCodecHWConfig* config = avcodec_get_hw_config(decoder, i);
                if (!config) {
                    fprintf(stderr, "Decoder %s does not support device type %s.\n",
                        decoder->name, av_hwdevice_get_type_name(type));
                    return -1;
                }
                if (config->methods & AV_CODEC_HW_CONFIG_METHOD_HW_DEVICE_CTX &&
                    config->device_type == type) {
                    hw_pix_fmt = config->pix_fmt;
                    break;
                }
            }*/

            if (!(decoder_ctx = avcodec_alloc_context3(decoder)))
                return AVERROR(ENOMEM);
            video = input_ctx->streams[video_stream];
            if (avcodec_parameters_to_context(decoder_ctx, video->codecpar) < 0)
                return -1;
            decoder_ctx->get_format = get_hw_format;
            if (hw_decoder_init(decoder_ctx, type) < 0)
                return -1;
            if ((ret = avcodec_open2(decoder_ctx, decoder, NULL)) < 0) {
                fprintf(stderr, "Failed to open codec for stream #%u\n", video_stream);
                return -1;
            }

            /* open the file to dump raw data */
            //output_file = fopen(argv[3], "w+");
            v_draw.init(1280, 720, (int)frame_rate);
            v_draw.Start();

            /* actual decoding and dump the raw data */
            while (ret >= 0) {
                if ((ret = av_read_frame(input_ctx, &packet)) < 0)
                    break;
                if (video_stream == packet.stream_index) {
                    // The pacing here needs a more precise calculation.
                    ret = decode_write(decoder_ctx, &packet);
                    //SDL_Delay(1000 / frame_rate);
                }
                av_packet_unref(&packet);
                if (IsStop())
                    break;
            }

            /* flush the decoder */
            packet.data = NULL;
            packet.size = 0;
            ret = decode_write(decoder_ctx, &packet);
            av_packet_unref(&packet);
            avcodec_free_context(&decoder_ctx);
            avformat_close_input(&input_ctx);
            av_buffer_unref(&hw_device_ctx);
            return 0;
        }

    public:
        void Run()
        {
            // Reopens the stream whenever func_init returns. Note that this loop
            // spins without sleeping while the thread is stopped.
            while (1) {
                if (!IsStop())
                    func_init(rtspurl);
            }
        }
    };
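One detail in the listing deserves a closer look: the stream's average frame rate is a rational number (numerator and denominator), so dividing the two integer fields directly truncates the result and fails outright when the denominator is 0, which can happen when the rate is unknown. The sketch below shows the safer calculation in isolation. `Rational` is a hypothetical stand-in with the same two fields as FFmpeg's `AVRational`, and `frame_rate_or` is an illustrative name; inside real FFmpeg code `av_q2d()` does the floating-point division.

```cpp
// Hypothetical stand-in with the same shape as FFmpeg's AVRational.
struct Rational {
    int num;
    int den;
};

// Returns num/den as a double, or `fallback` when the rate is unset or invalid.
double frame_rate_or(Rational r, double fallback) {
    if (r.num <= 0 || r.den <= 0)
        return fallback;  // unknown frame rate: avoid dividing by zero
    return static_cast<double>(r.num) / r.den;
}
```

With NTSC-style timing, `frame_rate_or({30000, 1001}, 25.0)` yields roughly 29.97, where integer division would have produced 29.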

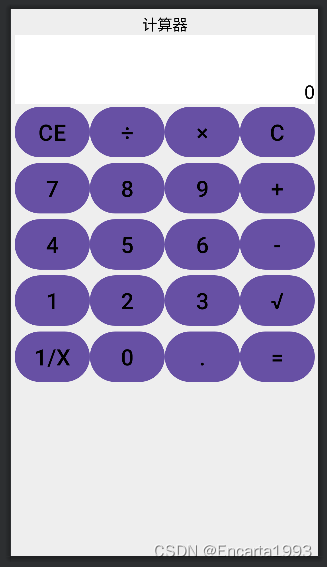

Rendering the picture with SDL

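To be clear up front, rendering with SDL is not an especially good choice. If you can, render with OpenGL or Vulkan directly instead. SDL wraps OpenGL, Direct3D and other backends, but that wrapping costs flexibility (unless you have read the SDL source from end to end, which is another matter). If you want a mean filter to remove venetian-blind artifacts or moiré patterns, rendering with OpenGL directly is simpler, since a GLSL shader is all it takes. The same goes for brightening the image with a nonlinear function: that is also best done by rendering with OpenGL directly.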
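To make the "nonlinear brightening" idea concrete, here is a minimal CPU-side sketch of one common choice, a gamma curve applied to the luma plane through a lookup table. In the approach the text recommends, the same formula would sit in a GLSL fragment shader instead. The function names are hypothetical, the choice of a gamma curve is an assumption (the text does not name a specific function), and the code treats luma as full range 0..255.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>
#include <vector>

// Build a 256-entry table for out = 255 * (in / 255)^gamma.
// gamma < 1 brightens dark regions; gamma == 1 leaves the image unchanged.
std::vector<uint8_t> make_gamma_lut(double gamma) {
    std::vector<uint8_t> lut(256);
    for (int i = 0; i < 256; ++i) {
        double v = 255.0 * std::pow(i / 255.0, gamma);
        v = std::min(255.0, std::max(0.0, v));
        lut[i] = static_cast<uint8_t>(std::lround(v));
    }
    return lut;
}

// Apply the table in place to an 8-bit luma plane.
void brighten_luma(std::vector<uint8_t>& luma, const std::vector<uint8_t>& lut) {
    for (uint8_t& sample : luma)
        sample = lut[sample];
}
```

A table lookup keeps the per-pixel cost to one memory read, which matters on the CPU; on the GPU the `pow` call per fragment is cheap enough that no table is needed.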

    #pragma once
    #include <chrono>
    #include <iostream>

    #define __STDC_CONSTANT_MACROS
    #define SDL_MAIN_HANDLED

    extern "C"
    {
    #include <libavcodec/avcodec.h>
    #include <libavformat/avformat.h>
    #include <libavutil/pixdesc.h>
    #include <libavutil/hwcontext.h>
    #include <libavutil/opt.h>
    #include <libavutil/avassert.h>
    #include <libavutil/imgutils.h>
    #include <libswscale/swscale.h>
    #include "SDL2/SDL.h"
    }
    #include "c_ringbuffer.h"
    #include "TThreadRunable.h"
    #include "TYUVMerge.h"

    #define SFM_REFRESH_EVENT (SDL_USEREVENT + 1)
    #define SFM_BREAK_EVENT (SDL_USEREVENT + 2)
    #define WIDTH 640
    #define HEIGHT 360

    struct s_param
    {
        int g_fps = 40;
        int thread_exit = 0;
        int thread_pause = 0;
    };

    // Timer thread: posts a refresh event once per frame interval.
    static int sfp_refresh_thread(void *opaque)
    {
        s_param * param = (s_param*)opaque;
        param->thread_exit = 0;
        param->thread_pause = 0;
        while (!param->thread_exit) {
            if (!param->thread_pause) {
                SDL_Event event;
                event.type = SFM_REFRESH_EVENT;
                SDL_PushEvent(&event);
            }
            SDL_Delay(1000 / param->g_fps);
        }
        param->thread_exit = 0;
        param->thread_pause = 0;
        // Break
        SDL_Event event;
        event.type = SFM_BREAK_EVENT;
        SDL_PushEvent(&event);
        return 0;
    }

    // Simple stopwatch; toc() returns elapsed milliseconds since tic().
    class TicToc
    {
    public:
        TicToc()
        {
            tic();
        }
        void tic()
        {
            start = std::chrono::system_clock::now();
        }
        double toc()
        {
            end = std::chrono::system_clock::now();
            std::chrono::duration<double> elapsed_seconds = end - start;
            return elapsed_seconds.count() * 1000;
        }
    private:
        std::chrono::time_point<std::chrono::system_clock> start, end;
    };

    class SDLDraw : public TThreadRunable  // in practice, frames could be encoded and sent out here
    {
        int m_w = 0, m_h = 0;
        SDL_Window *screen = NULL;
        SDL_Renderer *sdlRenderer = NULL;
        SDL_Texture *sdlTexture = NULL;
        SDL_Rect sdlRect;
        SDL_Thread *video_tid = NULL;
        SDL_Event event;
        //struct SwsContext *img_convert_ctx = NULL;
        bool m_window_init = false;
        lfringqueue<AVFrame, 20> v_frames;
        lfringqueue<AVFrame, 20> v_frames_1;
        lfringqueue<AVFrame, 20> v_frames_2;
        s_param v_param;
        // Canvas
        AVFrame * v_canvas_frame = NULL;

    public:
        void init(int w, int h, int fps)
        {
            if (w != m_w || h != m_h) {
                m_w = w;
                m_h = h;
                if (v_canvas_frame != NULL) {
                    func_uninit();
                }
            }
            // Keep the default when fps is not positive; the refresh thread divides by it.
            if (fps > 0)
                v_param.g_fps = fps;
            // This is the background canvas.
            if (v_canvas_frame == NULL) {
                v_canvas_frame = av_frame_alloc();
                av_image_alloc(v_canvas_frame->data, v_canvas_frame->linesize, 1280, 720, AV_PIX_FMT_YUV420P, 1);
            }
        }
        void func_uninit()
        {
            // Guard against init() never having been called (the destructor calls this too).
            if (v_canvas_frame == NULL)
                return;
            av_freep(&v_canvas_frame->data[0]);
            av_frame_free(&v_canvas_frame);
        }
        bool push(AVFrame * frame)
        {
            // Try the insert up to three times.
            return v_frames.enqueue(frame, 3);
        }

    protected:
        int draw_init(/*HWND hWnd,*/)
        {
            //m_w = 1280;
            //m_h = 720;
            if (m_window_init == false) {
                SDL_Init(SDL_INIT_VIDEO);
                SDL_SetHint(SDL_HINT_RENDER_SCALE_QUALITY, "1");
                screen = SDL_CreateWindow("FF", SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED,
                    1920, 1000, SDL_WINDOW_SHOWN/* SDL_WINDOW_OPENGL | SDL_WINDOW_RESIZABLE*/);
                //screen = SDL_CreateWindowFrom((void *)(hWnd));
                for (int i = 0; i < SDL_GetNumRenderDrivers(); ++i) {
                    SDL_RendererInfo rendererInfo = {};
                    SDL_GetRenderDriverInfo(i, &rendererInfo);
                    std::cout << i << " " << rendererInfo.name << std::endl;
                    //if (rendererInfo.name != std::string("direct3d11"))
                    //{
                    //    continue;
                    //}
                }
                if (screen == NULL) {
                    //printf("Window could not be created! SDL_Error: %s\n", SDL_GetError());
                    return -1;
                }
                sdlRenderer = SDL_CreateRenderer(screen, 0, SDL_RENDERER_ACCELERATED | SDL_RENDERER_PRESENTVSYNC);
                //sdlRenderer = SDL_CreateRenderer(screen, -1, SDL_RENDERER_ACCELERATED);
                //SDL_SetHint(SDL_HINT_RENDER_DRIVER, "opengl");
                m_window_init = true;
                // Store the handle in the member (the original shadowed it with a local).
                video_tid = SDL_CreateThread(sfp_refresh_thread, NULL, &v_param);
            }
            return 0;
        }
        void draw(uint8_t *data[], int linesize[])
        {
            // The texture is destroyed and recreated on every frame.
            if (sdlTexture != NULL) {
                SDL_DestroyTexture(sdlTexture);
                sdlTexture = NULL;
            }
            //m_w = w;
            //m_h = h;
            if (sdlTexture == NULL) {
                sdlTexture = SDL_CreateTexture(sdlRenderer, SDL_PIXELFORMAT_IYUV,
                    SDL_TEXTUREACCESS_STREAMING, m_w, m_h);
                sdlRect.x = 0;
                sdlRect.y = 0;
                sdlRect.w = m_w;
                sdlRect.h = m_h;  // nh;
            }
            SDL_UpdateYUVTexture(sdlTexture, &sdlRect,
                data[0], linesize[0],
                data[1], linesize[1],
                data[2], linesize[2]);
            //SDL_RenderClear(sdlRenderer);
            SDL_RenderCopy(sdlRenderer, sdlTexture, NULL, NULL);
            SDL_RenderPresent(sdlRenderer);
            //video_tid = SDL_CreateThread(sfp_refresh_thread, NULL, NULL);
        }

    public:
        void Run()
        {
            draw_init();
            AVFrame * frame = NULL;
            //while (!IsStop())
            //{
            //    // decode and play directly
            //    if (v_frames.dequeue(&frame))
            //    {
            //        // play
            //        draw(frame->data, frame->linesize,WIDTH,HEIGHT);
            //        av_frame_free(&frame);
            //        SDL_Delay(10);
            //    }
            //    else
            //    {
            //        SDL_Delay(10);
            //    }
            //}
            /*SDL_Thread *video_tid = SDL_CreateThread(sfp_refresh_thread, NULL, &v_param);*/
            int tick = 0;
            double x = 0.0f;
            for (;;) {
                if (IsStop()) {
                    v_param.thread_exit = 1;
                    //break;
                }
                SDL_WaitEvent(&event);
                if (event.type == SFM_REFRESH_EVENT) {
                    if (v_frames.dequeue(&frame)) {
                        tick++;
                        TicToc tt;
                        //MergeYUV(v_canvas_frame->data[0], 1280, 720,
                        //    frame->data[0], WIDTH, HEIGHT, 1, 10, 10);
                        MergeYUV_S(v_canvas_frame->data[0], 1280, 720,
                            frame->data[0], WIDTH, HEIGHT, 10, 10);
                        x += tt.toc();
                        // play
                        draw(v_canvas_frame->data, v_canvas_frame->linesize);
                        av_freep(&frame->data[0]);
                        av_frame_free(&frame);
                        // Print the accumulated merge time every 10 frames.
                        if (tick == 10) {
                            tick = 0;
                            std::cout << x << std::endl;
                            x = 0.0f;
                        }
                    }
                }
                else if (event.type == SDL_KEYDOWN) {
                    if (event.key.keysym.sym == SDLK_SPACE)
                        v_param.thread_pause = !v_param.thread_pause;
                }
                else if (event.type == SDL_QUIT) {
                    v_param.thread_exit = 1;
                }
                else if (event.type == SFM_BREAK_EVENT) {
                    break;
                }
            }
        }
        SDLDraw()
        {
        }
        ~SDLDraw()
        {
            func_uninit();
        }
    };
    //SDL_FillRect(gScreenSurface, NULL, SDL_MapRGB(gScreenSurface->format, 0xFF, 0x00, 0x00));

FFmpeg deep learning filter

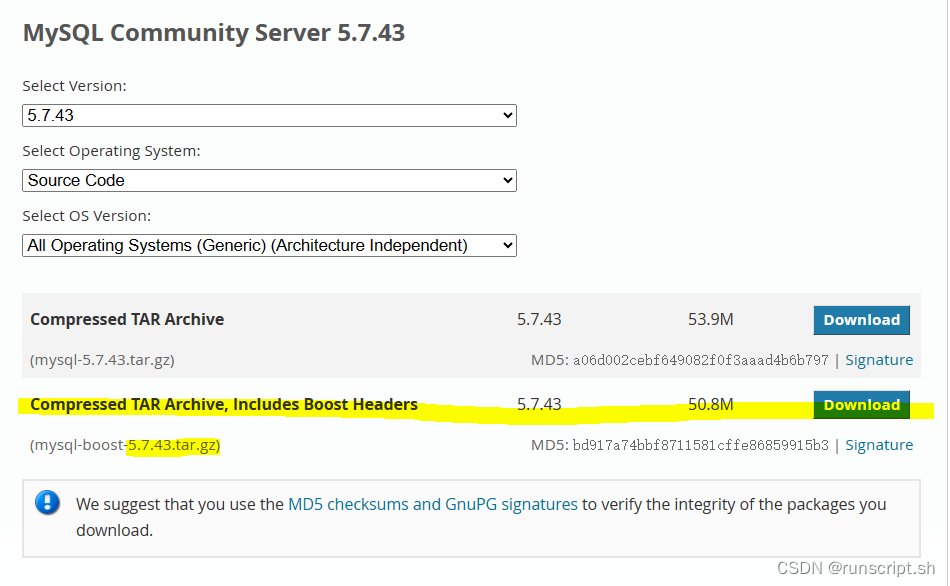

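dnn_processing has been a video filter in FFmpeg since 2018. It supports any image-processing algorithm based on a deep learning model: both the input and the output are AVFrames, and the processing in between is done by the model. Why develop such a filter? For the maintainer of the FFmpeg DNN module, dnn_processing is a good entry point for users. Reading the FFmpeg code shows that you could really write this functionality yourself. As for filters that support video analytics, set encoding aside for now; the main questions are how to build an asynchronous pipeline and how to enable a batch size, so that the system's parallel compute capacity is used to the full. Running swscale directly on the GPU after FFmpeg hardware decoding is not actually supported; you have to write your own code for that part, which is a separate topic. For now, let's just use the dnn_processing module.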

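This module can run a Sobel operator on grayscale images, with grayf32 as both the input and the output format. Beyond that, dnn_processing can also do the work of the sr (super-resolution) and derain (rain removal) filters. The ffmpeg commands below demonstrate its support for YUV and RGB formats.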
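For readers who have not met the Sobel operator, the sketch below computes the classic 3x3 Sobel gradient magnitude on a float grayscale image, the same kind of data that grayf32 frames hold. It is plain C++ for illustration only, not the model that dnn_processing loads, and a model file may well produce differently scaled output. `sobel_magnitude` is a hypothetical name; border pixels are simply left at 0.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// 3x3 Sobel gradient magnitude on a row-major float grayscale image.
// Border pixels have no full neighbourhood and are left at 0.
std::vector<float> sobel_magnitude(const std::vector<float>& src, int w, int h) {
    std::vector<float> dst(src.size(), 0.0f);
    for (int y = 1; y + 1 < h; ++y) {
        for (int x = 1; x + 1 < w; ++x) {
            // p(dx, dy) reads the neighbour at offset (dx, dy) from (x, y).
            auto p = [&](int dx, int dy) {
                return src[static_cast<std::size_t>(y + dy) * w + (x + dx)];
            };
            // Horizontal gradient: right column minus left column.
            float gx = (p(1, -1) + 2.0f * p(1, 0) + p(1, 1))
                     - (p(-1, -1) + 2.0f * p(-1, 0) + p(-1, 1));
            // Vertical gradient: bottom row minus top row.
            float gy = (p(-1, 1) + 2.0f * p(0, 1) + p(1, 1))
                     - (p(-1, -1) + 2.0f * p(0, -1) + p(1, -1));
            dst[static_cast<std::size_t>(y) * w + x] = std::sqrt(gx * gx + gy * gy);
        }
    }
    return dst;
}
```

A flat region gives 0 everywhere, while a vertical step edge gives a strong response along the edge, which is what makes the operator useful as a smoke test for a filter pipeline.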

    ./ffmpeg -i night.jpg -vf scale=iw*2:ih*2,format=yuv420p,dnn_processing=dnn_backend=tensorflow:model=./srcnn.pb:input=x:output=y srcnn.jpg
    ./ffmpeg -i small.jpg -vf format=yuv420p,scale=iw*2:ih*2,dnn_processing=dnn_backend=native:model=./srcnn.model:input=x:output=y -q:v 2 small.jpgsrcnn.jpg
    ./ffmpeg -i small.jpg -vf format=yuv420p,dnn_processing=dnn_backend=native:model=./espcn.model:input=x:output=y small_b.jpg
    ./ffmpeg -i rain.jpg -vf format=rgb24,dnn_processing=dnn_backend=native:model=./can.model:input=x:output=y derain.jpg

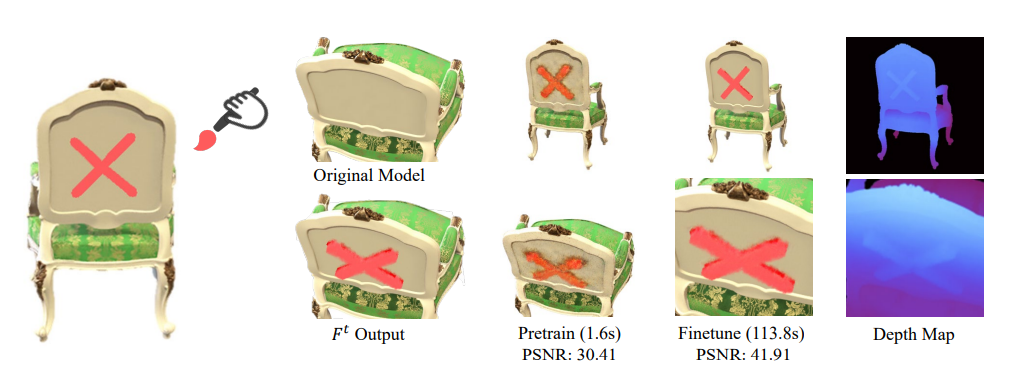

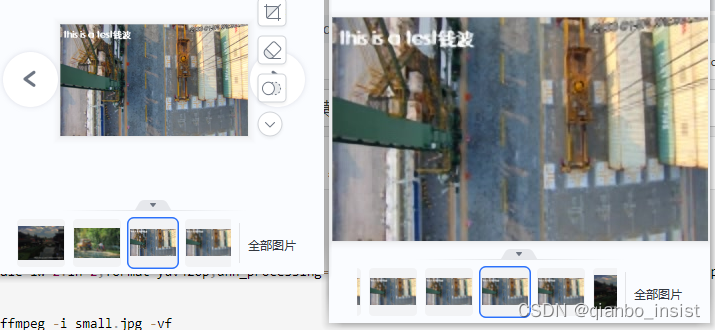

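As the figure shows, the result after 2x upscaling is still acceptable and not very blurry. What blur remains is because our trained model is very shallow and has not been trained properly yet. Even so, on close inspection, linear interpolation gives a worse image than this one.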
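For reference, the linear-interpolation baseline mentioned here can be sketched in a few lines. The function below doubles a single 8-bit plane with bilinear interpolation, sampling at pixel centres and clamping at the borders. `upscale2x_bilinear` is a hypothetical name, and this is an illustrative baseline rather than the exact scaler used for the comparison, which the text does not specify.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

// Upscale one 8-bit plane by 2x in each direction with bilinear interpolation.
std::vector<uint8_t> upscale2x_bilinear(const std::vector<uint8_t>& src, int w, int h) {
    const int W = w * 2, H = h * 2;
    std::vector<uint8_t> dst(static_cast<std::size_t>(W) * H);
    for (int Y = 0; Y < H; ++Y) {
        // Map the destination pixel centre back into source coordinates.
        float fy = std::min(std::max((Y + 0.5f) / 2.0f - 0.5f, 0.0f), float(h - 1));
        int y0 = static_cast<int>(fy);
        int y1 = std::min(y0 + 1, h - 1);
        float wy = fy - y0;
        for (int X = 0; X < W; ++X) {
            float fx = std::min(std::max((X + 0.5f) / 2.0f - 0.5f, 0.0f), float(w - 1));
            int x0 = static_cast<int>(fx);
            int x1 = std::min(x0 + 1, w - 1);
            float wx = fx - x0;
            // Blend horizontally on the two source rows, then vertically.
            float top = src[y0 * w + x0] * (1.0f - wx) + src[y0 * w + x1] * wx;
            float bot = src[y1 * w + x0] * (1.0f - wx) + src[y1 * w + x1] * wx;
            dst[static_cast<std::size_t>(Y) * W + X] =
                static_cast<uint8_t>(top * (1.0f - wy) + bot * wy + 0.5f);
        }
    }
    return dst;
}
```

Because every output sample is a weighted average of at most four inputs, edges are smeared across neighbouring pixels, which is the softness a learned super-resolution model tries to avoid.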

Running it end to end

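The second article will chain everything together: hardware decoding, then the quality-enhancement filter, then output encoding. Please wait for part two.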