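- High-availability architecture

- Kubernetes cluster components

- etcd

- kube-apiserver

- kube-scheduler

- kube-controller-manager

- kubelet

- kube-proxy

- kubectl

- High-availability analysis

- Load balancer node design

- 1. Environment preparation

- 1.1 Environment planning

- 1.2 Configure hostname resolution on all nodes

- 1.3 Install required tools

- 1.4 Disable firewalld, SELinux, dnsmasq and swap on all nodes

- 1.5 Passwordless SSH from Master01 to the other nodes (all configuration files and certificates are generated on Master01)

- 2. Basic component installation

- 2.1 Containerd as the runtime (choose either 2.1 or 2.2)

- 2.2 Docker as the runtime (choose either 2.1 or 2.2)

- 2.3 Installing Kubernetes and etcd

- 2.3.1 Download the Kubernetes package on Master01

- 2.3.2 Run the following steps on master01

- 3. Generating certificates

- 3.1 Download the certificate tools on Master01

- 3.2 etcd certificates

- 3.2.1 Create the etcd certificate directory on all Master nodes (Master nodes only)

- 3.2.2 Create the Kubernetes directories on all nodes (including worker nodes)

- 3.2.3 Generate the etcd certificates on Master01

- 3.2.4 Copy the certificates to the other Master nodes

- 3.3 Kubernetes component certificates

- 3.3.1 Generate the Kubernetes CA certificate on Master01

- 3.3.2 Generate the apiserver certificate on Master01

- 3.3.3 Generate the controller-manager certificate on Master01

- 3.3.4 Create the ServiceAccount key (secret) on Master01

- 3.3.5 Generate the public key

- 3.3.6 Send the certificates to the other nodes

- 4. High-availability configuration

- 4.1 Install HAProxy and Keepalived on all Master nodes

- 4.2 HAProxy configuration (identical on all three Master nodes)

- 4.3 Keepalived configuration

- 4.3.1 master01

- 4.3.2 master02

- 4.3.3 master03

- 4.4 Start keepalived and haproxy and enable them at boot

- 4.5 Write the health-check script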

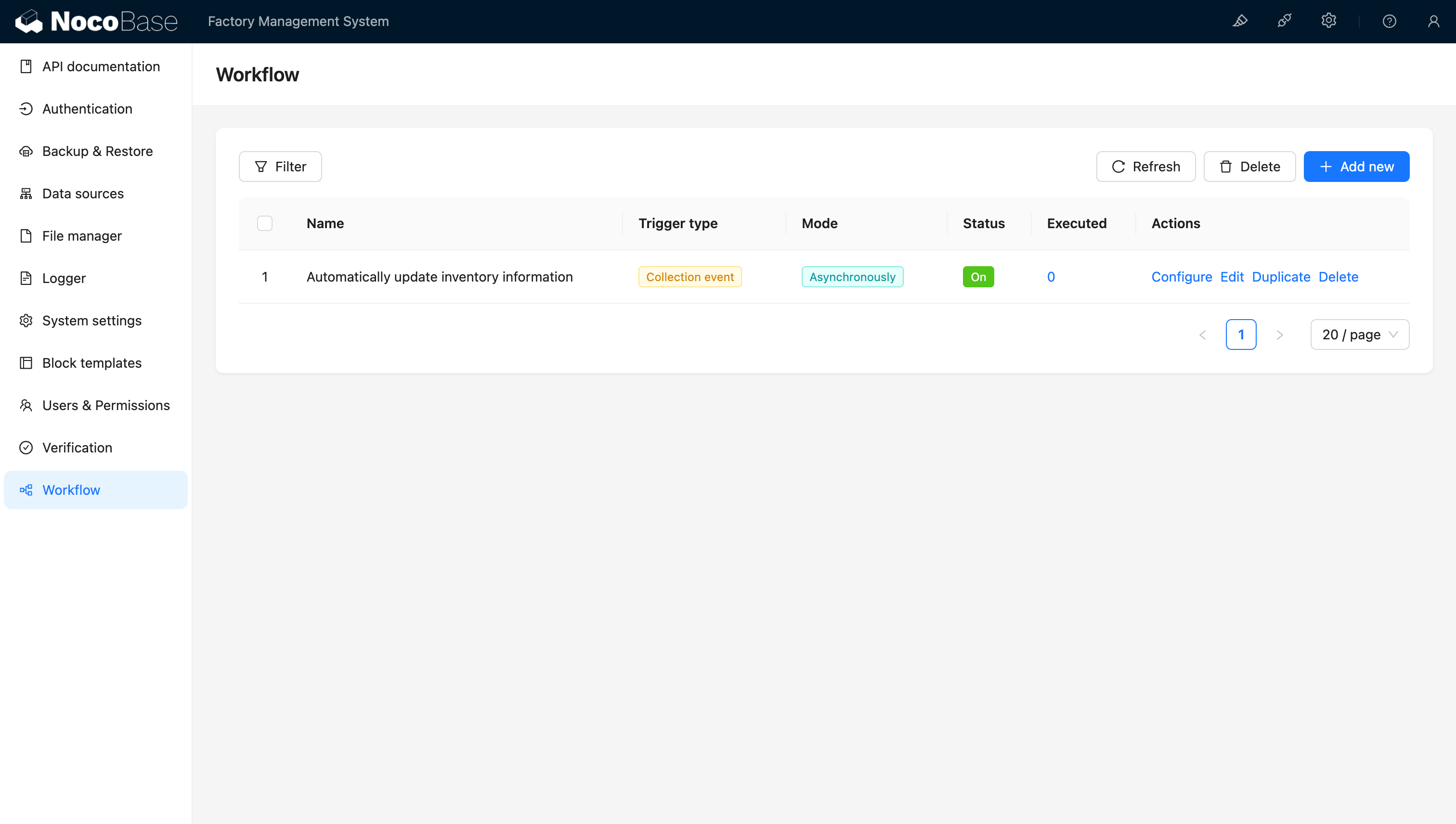

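- 5. Kubernetes component configuration

- 5.1 etcd configuration

- 5.1.1 master01

- 5.1.2 master02

- 5.1.3 master03

- 5.1.4 Configure systemd

- 5.2 apiserver

- 5.2.1 master01 configuration

- 5.2.2 master02 configuration

- 5.2.3 master03 configuration

- 5.2.4 Start the apiserver

- 5.3 Controller Manager

- 5.3.1 Identical configuration on all Master nodes

- 5.3.2 Start kube-controller-manager

- 5.4 scheduler

- 5.4.1 Configure the kube-scheduler service on all Master nodes (identical on all Master nodes)

- 5.4.2 Start

- 6. TLS Bootstrapping configuration

- 6.1 Create the bootstrap on Master01

- 6.2 Copy the cluster admin files

- 6.3 Create the bootstrap

- 7. Node configuration

- 7.1 Copy the certificates

- 7.2 kubelet configuration

- 7.3 Check the cluster status

- 7.4 kube-proxy configuration

- 8. Install the Calico network plugin

- 8.1 Change the Pod CIDR

- 8.2 Install Calico

- 8.3 Check container status

- 9. Install CoreDNS

- 9.1 Configure the Service CIDR

- 9.2 Install CoreDNS

- 9.3 Check status

- 9.4 Install the latest CoreDNS

- 10. Install Metrics Server

- 10.1 Create metrics

- 10.2 Check status

- 11. Install Dashboard

- 12. Cluster availability verification

- 12.1 All nodes must be healthy

- 12.2 All Pods must be healthy

- 12.3 The cluster CIDRs must not conflict

- 12.4 Resources can be created normally

- 12.5 Pods must be able to resolve Services (same namespace and across namespaces)

- 12.6 Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53

- 12.7 Pods must be able to communicate with each other (same namespace and across namespaces)

- 12.8 Pods must be able to communicate with each other (same machine and across machines)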

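High-availability architecture

Kubernetes cluster components

Kubernetes follows a master-slave (Master-Slave) architecture: Master nodes handle core scheduling, management and operations, while Slave nodes run user programs. In Kubernetes the master is usually called the Master Node or Head Node, and the slaves are called Worker Nodes or simply Nodes.

Tip: Master nodes usually run components such as the API Server, Scheduler and Controller Manager; worker nodes usually run components such as kubelet and kube-proxy.

In the architecture diagram, the Control Plane in the blue box is the control plane of the whole cluster, essentially an enhanced version of the master process. In Kubernetes the Control Plane usually runs on the Master nodes. By default, Master nodes do not run application workloads; all application workloads are handled by the worker nodes.

The Master nodes mainly run the control-plane components. Their job is to keep the cluster working, store cluster state, and provide failover, load balancing, task scheduling and high availability. There are usually several Master nodes for high availability. In kubeadm-style deployments the control-plane components run as Pods on the Master nodes (in this binary installation they run as systemd services instead). Most components must run on every Master node, while a few, such as DNS, only need enough replicas to stay highly available.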

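etcd

etcd is the cluster's primary database and stores the state of the whole cluster. It is a distributed key-value store used for service discovery and configuration sharing between nodes, and it holds cluster state such as Pod and Service objects.

The etcd database of a Kubernetes cluster usually needs a backup plan. Another HA deployment option is to take etcd out of containers and deploy it separately as a highly available database, giving Kubernetes stable and reliable storage.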

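kube-apiserver

Provides the single entry point for resource operations, together with authentication, authorization, admission control, and API registration and discovery. As the Kubernetes API, it is the unified entry point of the cluster and the coordinator of all components, exposing its services over an HTTP API; every create, read, update, delete and watch operation on object resources is handled by the API server and then persisted to etcd.

kube-scheduler

Schedules resources: it places Pods on suitable machines according to the configured scheduling policies. It receives newly created Pods through kube-apiserver and uses its scheduling algorithm to select a Node for each one.

kube-controller-manager

Maintains the cluster state, handling tasks such as failure detection, auto-scaling and rolling updates. It runs the system's background tasks, covering node status, Pod counts, the association between Pods and Services, and so on. Each resource type has its own controller, and the Controller Manager manages these controllers.

The controllers include:

- Node Controller: notices and responds when nodes fail.

- Replication Controller: maintains the correct number of Pods for every replication controller object in the system.

- Endpoints Controller: populates Endpoints objects (that is, joins Services and Pods).

- Service Account & Token Controllers: create default accounts and API access tokens for new namespaces.

kubelet

Manages the lifecycle of containers: the Pods on its node and their containers, images, volumes, etc. It also handles storage (CSI) and networking (CNI). kubelet runs on every worker node as the Master's agent: it accepts the Pods assigned to its node, manages their containers (creating containers, mounting volumes into Pods, downloading Secrets), periodically collects container and node status, and reports it to kube-apiserver. kubelet translates each Pod into a set of containers.

kube-proxy

Provides in-cluster service discovery and load balancing for Services. It runs on every worker node as the Pod network proxy: it watches Service and Endpoints information through kube-apiserver (it does not read etcd directly) and maintains the corresponding network rules and layer-4 load balancing.

For performance reasons, many production clusters do not rely on kube-proxy alone to expose services externally; they deploy an Ingress Controller built on HAProxy or Nginx for that traffic. An Ingress Controller complements kube-proxy rather than replacing it: kube-proxy still implements in-cluster Service routing.

kubectl

Command-line client: it formats the commands it receives and sends them to kube-apiserver, acting as the operational entry point to the whole system.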

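High-availability analysis

All API calls issued by the cluster (or by the Pods running in it) terminate at the API server, which talks directly to etcd. With a single API server, if its VM shuts down or the API server crashes, you can no longer stop, update or start Pods, Services or replication controllers; and if the etcd data is lost, the API server cannot start.

The following therefore have to be addressed:

- Cluster state: Kubernetes stores its state in the etcd cluster, which is very reliable and can be spread across multiple nodes. The etcd cluster should have at least 3 members, and the member count should be odd to avoid split-brain during a network partition.

- API server redundancy: the API server is stateless and obtains all the data it needs from etcd. Multiple API servers can therefore run in one cluster without coordinating with each other, so a load balancer (LB) can be placed in front of them, making them transparent to users and worker nodes.

- Leader election: some control-plane components (Scheduler and Controller Manager) must not have several active instances at once; imagine the chaos of several Schedulers scheduling at the same time. Because components such as the Controller Manager act as daemons, Kubernetes has no way to restart them itself when they fail, so standby instances must already be running and ready to take over. In an HA cluster these components run in leader-election mode: several instances run, but only one is active at a time, and if it fails another instance is elected leader and takes its place.

- Kubernetes HA: as long as the key cluster nodes are highly available, Kubernetes itself keeps the Pods and Services deployed in the cluster available.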
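The odd-member rule for etcd follows from Raft's majority quorum. The sketch below only does that arithmetic (the function names are illustrative, not part of etcd): with n members a write needs floor(n/2)+1 votes, so the cluster survives the loss of the remaining members. Going from 3 to 4 members raises the quorum without tolerating any additional failure.

```shell
#!/usr/bin/env bash
# Raft majority: a cluster of n members needs floor(n/2) + 1 votes.
etcd_quorum() {
  local n=$1
  echo $(( n / 2 + 1 ))
}

# Members that may fail while a majority is still available.
etcd_fault_tolerance() {
  local n=$1
  echo $(( n - (n / 2 + 1) ))
}

for n in 1 2 3 4 5; do
  echo "members=$n quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

Both 3 and 4 members tolerate one failure, and 5 members tolerate two, which is why odd sizes are preferred.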

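Load balancer node design

The load balancer carries the traffic between the worker nodes and the Master cluster. It is not deployed inside the Kubernetes cluster and is not watched by the Controller Manager, so if it fails, all communication between the Nodes and the Masters is cut off. The load balancer therefore needs its own HA setup. Only one load balancer instance may serve at a time, so Keepalived monitors the servers running the load balancer and, on failure, floats the virtual IP (VIP) to a backup node so that the cluster stays available.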

1. Environment preparation

1.1 Environment planning

Host plan

| Hostname | IP address | Role |
|---|---|---|
| master01 | 10.0.0.171 | Master node |
| master02 | 10.0.0.172 | Master node |
| master03 | 10.0.0.173 | Master node |
| node01 | 10.0.0.174 | Worker node |
| VIP | 10.0.0.200 | Keepalived virtual IP |

Cluster network plan and versions

| Item | Value |
|---|---|
| OS version | CentOS 7.9 |
| Docker version | docker-ce-20.10.x |
| Pod CIDR | 172.16.0.0/16 |
| Service CIDR | 10.96.0.0/16 |
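The Pod CIDR, the Service CIDR and the host network must not overlap (section 12.3 checks this again at the end). A small bash sketch for checking two IPv4 CIDR blocks before committing to the plan above; the helper names are hypothetical, and the host network 10.0.0.0/24 is an assumption, since the plan only lists individual host IPs.

```shell
#!/usr/bin/env bash
# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Print the first and last address of a CIDR block as integers.
cidr_range() {
  local ip=${1%/*} prefix=${1#*/}
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  local start=$(( $(ip_to_int "$ip") & mask ))
  echo "$start $(( start | (~mask & 0xFFFFFFFF) ))"
}

# Succeed (exit status 0) if the two CIDR blocks share any address.
cidr_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<< "$(cidr_range "$1")"
  read -r s2 e2 <<< "$(cidr_range "$2")"
  (( s1 <= e2 && s2 <= e1 ))
}

pod_cidr=172.16.0.0/16
svc_cidr=10.96.0.0/16
host_cidr=10.0.0.0/24   # assumption: the hosts' subnet is not stated in the plan
for pair in "$pod_cidr $svc_cidr" "$pod_cidr $host_cidr" "$svc_cidr $host_cidr"; do
  read -r x y <<< "$pair"
  if cidr_overlap "$x" "$y"; then echo "CONFLICT: $x vs $y"; else echo "ok: $x vs $y"; fi
done
```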

1.2 Configure hostname resolution on all nodes

cat >> /etc/hosts <<'EOF'

10.0.0.171 master01

10.0.0.172 master02

10.0.0.173 master03

10.0.0.174 node01

EOF
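`cat >> /etc/hosts` appends the entries again every time the step is re-run. An idempotent variant is sketched below, assuming entries are written in the exact `IP hostname` form used above (an existing line separated by a tab would not be recognized); `add_host_entry` and `add_cluster_hosts` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Append "IP hostname" to a hosts file only if that exact line is not there yet.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  grep -qxF "$ip $name" "$file" 2>/dev/null || printf '%s %s\n' "$ip" "$name" >> "$file"
}

# The four cluster hosts from the planning table in 1.1.
add_cluster_hosts() {
  local file=$1
  add_host_entry "$file" 10.0.0.171 master01
  add_host_entry "$file" 10.0.0.172 master02
  add_host_entry "$file" 10.0.0.173 master03
  add_host_entry "$file" 10.0.0.174 node01
}
```

On each node, run `add_cluster_hosts /etc/hosts`; running it twice leaves a single copy of each entry.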

1.3 Install required tools

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

1.4 Disable firewalld, SELinux, dnsmasq and swap on all nodes

1. # Disable firewalld, SELinux, dnsmasq/NetworkManager

systemctl disable --now firewalld

systemctl disable --now dnsmasq

systemctl disable --now NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

# Verify

grep ^SELINUX= /etc/selinux/config

2. # Disable swap

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab

3. # Install the ntpdate time-sync tool

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm

yum install ntpdate -y

4. # Synchronize time on all nodes

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

ntpdate time2.aliyun.com

# Add the following job to crontab

crontab -e

*/5 * * * * /usr/sbin/ntpdate time2.aliyun.com

5. # Configure limits on all nodes

ulimit -SHn 65535

vi /etc/security/limits.conf

# Append the following to the end of the file (a soft limit must not exceed the hard limit for the same item)

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited
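The kernel's setrlimit() refuses a soft limit above the hard limit, so a pair such as soft nofile 655360 with hard nofile 131072 cannot take effect. A rough awk-based checker for limits.conf-style lines is sketched below; it only understands simple four-field `<domain> <soft|hard> <item> <value>` lines, treats `unlimited`/`infinity` as no limit, and `check_limits` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Report limits.conf items whose soft value exceeds the hard value.
# Exit status 1 if any such item is found, 0 otherwise.
check_limits() {
  awk '
    $1 !~ /^#/ && NF >= 4 && ($2 == "soft" || $2 == "hard") {
      key = $1 " " $3
      v = ($4 == "unlimited" || $4 == "infinity") ? -1 : $4 + 0
      if ($2 == "soft") soft[key] = v; else hard[key] = v
    }
    END {
      bad = 0
      for (k in soft) {
        if (!(k in hard) || hard[k] == -1) continue
        if (soft[k] == -1 || soft[k] > hard[k]) {
          printf "soft > hard for %s (soft=%s hard=%s)\n", k, soft[k], hard[k]
          bad = 1
        }
      }
      exit bad
    }
  ' "$1"
}
```

Usage: `check_limits /etc/security/limits.conf && echo "limits OK"`.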

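1.5 Passwordless SSH from Master01 to the other nodes (all configuration files and certificates are generated on Master01)

1. # Generate a key pair and copy it to each node

ssh-keygen

for i in master02 master03 node01;do ssh-copy-id -i ~/.ssh/id_rsa.pub $i;done

2. # Download the installation files (in an offline environment, download them on an Internet-connected host and push them to the internal network in advance)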

cd /root/ ; git clone https://github.com/dotbalo/k8s-ha-install.git

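# If GitHub is unreachable, clone the mirror instead: https://gitee.com/dukuan/k8s-ha-install.git

scp -r k8s-ha-install/ 10.0.0.161:~

Verify:

[root@master01 ~]# ls -l k8s-ha-install/

total 24

-rw-r--r-- 1 root root 18092 Mar 20 09:39 LICENSE

drwxr-xr-x 2 root root 29 Mar 20 09:39 metrics-server-0.3.7

drwxr-xr-x 2 root root 227 Mar 20 09:39 metrics-server-3.6.1

-rw-r--r-- 1 root root 379 Mar 20 09:39 README.md

3. # Upgrade the system on all nodes and reboot (the kernel is excluded here; it is upgraded separately in the next step)

yum update -y --exclude=kernel* && reboot

4. # Upgrade the kernel on all CentOS 7 machines to 4.19

# In an offline environment, download the packages on an Internet-connected host and push them to the internal network in advance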

cd /root

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

# Copy the packages from master01 to the other nodes:

for i in master02 master03 node01;do scp kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm $i:/root/ ; done

5. # Install the kernel packages on all nodes

cd /root

yum localinstall -y kernel-ml*

6. # Change the kernel boot order

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

7. # Check that the default kernel is 4.19

grubby --default-kernel

8. # Reboot all nodes, then check the kernel version

reboot

uname -a

9. # Install ipvsadm on all nodes

yum install ipvsadm ipset sysstat libnetfilter_conntrack-devel -y

10. # Configure the IPVS modules on all nodes. On kernel 4.19+ use nf_conntrack instead of nf_conntrack_ipv4; on 4.18 and earlier use nf_conntrack_ipv4.

# Kernels older than 4.19:

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

# Kernel 4.19+: add the following content

cat > /etc/modules-load.d/ipvs.conf <<EOF

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp


nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF
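Which conntrack module name to load depends on the running kernel: the IPv4-specific nf_conntrack_ipv4 was folded into nf_conntrack in 4.19. A sketch that picks the name from `uname -r`, assuming GNU `sort -V` is available (it is on CentOS 7); `version_ge` and `conntrack_module` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Succeed if version $1 >= version $2 (GNU sort -V orders version strings).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n1)" = "$2" ]
}

# Print the conntrack module name for a kernel release (default: running kernel).
conntrack_module() {
  local release=${1:-$(uname -r)}
  local version=${release%%-*}   # "4.19.12-1.el7.elrepo.x86_64" -> "4.19.12"
  if version_ge "$version" 4.19; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

echo "kernel $(uname -r): load $(conntrack_module)"
```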

systemctl enable --now systemd-modules-load.service

# Enable the kernel parameters required by Kubernetes on all nodes:

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

net.ipv4.conf.all.route_localnet = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536


net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system

# After configuring the kernel on all nodes, reboot and confirm that the modules are still loaded after the reboot

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

2. Basic component installation

2.1 Containerd as the runtime (choose either 2.1 or 2.2)

# 1. Configure the Docker repository

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

curl -o /etc/yum.repos.d/docker-ce.repo https://download.docker.com/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.tuna.tsinghua.edu.cn/docker-ce+' /etc/yum.repos.d/docker-ce.repo

# 2. Install docker-ce-20.10 on all nodes

yum install docker-ce-20.10.* docker-ce-cli-20.10.* containerd -y

# 3. First, configure the modules required by containerd (all nodes)

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

# 4. Load the modules on all nodes

modprobe -- overlay

modprobe -- br_netfilter

# 5. Configure the kernel parameters required by containerd on all nodes:

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

# 6. Apply the kernel parameters on all nodes

sysctl --system

# 7. Generate the containerd configuration file on all nodes

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml

# 8. On all nodes, switch containerd's cgroup driver to systemd:

vim /etc/containerd/config.toml

Find containerd.runtimes.runc.options and set SystemdCgroup = true (if the key already exists, change its value in place; adding a second copy of the key causes an error).

# 9. On all nodes, change the sandbox_image Pause image to an address that matches your version:

sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6"

# 10. Start containerd on all nodes and enable it at boot

systemctl daemon-reload

systemctl enable --now containerd

# 11. Configure the runtime endpoint used by the crictl client on all nodes

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF
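Steps 8 and 9 edit config.toml by hand in vim. A non-interactive sketch with GNU sed is shown below, assuming the file came from `containerd config default` and already contains a `SystemdCgroup = false` line and a `sandbox_image = ...` line; if your containerd version does not emit the SystemdCgroup key, it still has to be added manually as described above. `patch_containerd_config` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Set SystemdCgroup = true and replace the sandbox (pause) image in a
# containerd config.toml, keeping each line's original indentation.
patch_containerd_config() {
  local file=${1:-/etc/containerd/config.toml}
  local pause=${2:-registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6}
  sed -i \
    -e 's/^\([[:space:]]*\)SystemdCgroup = false/\1SystemdCgroup = true/' \
    -e "s#^\([[:space:]]*\)sandbox_image = .*#\1sandbox_image = \"${pause}\"#" \
    "$file"
}
```

Run `patch_containerd_config` on each node, then `systemctl restart containerd` so the change takes effect.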

2.2 Docker as the runtime (choose either 2.1 or 2.2)

1. Install Docker on all nodes:

yum install docker-ce-20.10.* docker-ce-cli-20.10.* -y

2. Newer kubelet versions recommend the systemd cgroup driver, so switch Docker's CgroupDriver to systemd as well:

mkdir -pv /etc/docker && cat <<EOF | sudo tee /etc/docker/daemon.json

{"insecure-registries": ["k8s161.registry.com:5000"],"registry-mirrors": ["https://tuv7rqqq.mirror.aliyuncs.com"],"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

3. Start Docker on all nodes and enable it at boot:

systemctl daemon-reload && systemctl enable --now docker

systemctl status docker

2.3 K8s及etcd安装

2.3.1 Download the Kubernetes package on Master01

(Change 1.23.0 to the latest version available to you.)

[root@master01 ~]# wget https://dl.k8s.io/v1.23.0/kubernetes-server-linux-amd64.tar.gz --no-check-certificate

2.3.2 Run the following steps on master01

# 1. Download the etcd package

[root@master01 ~]# wget https://github.com/etcd-io/etcd/releases/download/v3.5.1/etcd-v3.5.1-linux-amd64.tar.gz

# 2. Extract the Kubernetes binaries

[root@master01 ~]# tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

# Verify

[root@master01 ~]# ll /usr/local/bin/

# 3. Extract the etcd binaries

[root@master01 ~]# tar -zxvf etcd-v3.5.1-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.1-linux-amd64/etcd{,ctl}

# 4. Check the versions

[root@master01 ~]# kubelet --version

Kubernetes v1.23.0

[root@master01 ~]# etcdctl version

etcdctl version: 3.5.1

API version: 3.5

# 5. Send the components to the other nodes

MasterNodes='master02 master03'

WorkNodes='node01'

# Send to the Master nodes

for NODE in $MasterNodes; do echo $NODE; scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/; scp /usr/local/bin/etcd* $NODE:/usr/local/bin/; done

# Send to the worker nodes

for NODE in $WorkNodes; do scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/ ; done

# 6. Create the /opt/cni/bin directory on all nodes

mkdir -p /opt/cni/bin

# 7. Switch branches

On Master01, switch to the 1.23.x branch (for other versions, switch to the matching branch; keep the .x, there is no need to specify a patch version)

cd /root/k8s-ha-install && git checkout manual-installation-v1.23.x

3. Generating certificates

This is the most critical step of a binary installation: a single mistake breaks the whole installation, so make sure every step is correct.

3.1 Download the certificate tools on Master01

wget "https://pkg.cfssl.org/R1.2/cfssl_linux-amd64" -O /usr/local/bin/cfssl --no-check-certificate

wget "https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64" -O /usr/local/bin/cfssljson --no-check-certificate

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

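3.2 etcd certificates

3.2.1 Create the etcd certificate directory on all Master nodes (Master nodes only)

# 1. Create the etcd certificate directory on all Master nodes (Master nodes only)

mkdir /etc/etcd/ssl -p

3.2.2 Create the Kubernetes directories on all nodes (including worker nodes)

# 2. Create the Kubernetes directories on all nodes (including worker nodes)

mkdir -p /etc/kubernetes/pki

3.2.3 Generate the etcd certificates on Master01

# 3. Generate the etcd certificates on Master01

The CSR (certificate signing request) files used below define the domain names, organization and organizational unit of each certificate.

[root@master01 pki]# cd /root/k8s-ha-install/pki

# Generate the etcd CA certificate and its key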

[root@master01 pki]# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

# Output

2024/02/07 21:40:35 [INFO] generating a new CA key and certificate from CSR

2024/02/07 21:40:35 [INFO] generate received request

2024/02/07 21:40:35 [INFO] received CSR

2024/02/07 21:40:35 [INFO] generating key: rsa-2048

2024/02/07 21:40:35 [INFO] encoded CSR

2024/02/07 21:40:35 [INFO] signed certificate with serial number 15435287298841637632737384505024999389891829919

# Generate the etcd server certificate, signed by the etcd CA

[root@master01 pki]# cfssl gencert \
   -ca=/etc/etcd/ssl/etcd-ca.pem \
   -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
   -config=ca-config.json \
   -hostname=127.0.0.1,master01,master02,master03,10.0.0.171,10.0.0.172,10.0.0.173 \
   -profile=kubernetes \
   etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

# Output

2023/05/20 20:06:11 [INFO] generate received request

2023/05/20 20:06:11 [INFO] received CSR

2023/05/20 20:06:11 [INFO] generating key: rsa-2048

2023/05/20 20:06:11 [INFO] encoded CSR

2023/05/20 20:06:11 [INFO] signed certificate with serial number 293681993470974662958952098256195962671277009891

3.2.4 Copy the certificates to the other Master nodes

# 4. Copy the certificates to the other Master nodes

MasterNodes='master02 master03'

for NODE in $MasterNodes; do
  ssh $NODE "mkdir -p /etc/etcd/ssl"
  for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do
    scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}
  done
done

3.3 Kubernetes component certificates

3.3.1 Generate the Kubernetes CA certificate on Master01

[root@master01 pki]# cd /root/k8s-ha-install/pki

cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

3.3.2 Generate the apiserver certificate on Master01

# Notes:

# 10.96.0.1 is the first address of the Kubernetes Service CIDR; if you change the Service CIDR, change 10.96.0.1 accordingly.

# If this is not an HA cluster, replace 10.0.0.200 with Master01's IP; here 10.0.0.200 is the VIP.

cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=ca-config.json -hostname=10.96.0.1,10.0.0.200,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,10.0.0.171,10.0.0.172,10.0.0.173 -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

# Generate the apiserver aggregation-layer (front-proxy) certificates, used by the requestheader-client-xxx and requestheader-allowed-xxx flags (allowed name: aggregator)

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

# Output (the warning can be ignored)

2023/05/20 20:23:00 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").
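The apiserver certificate has to include 10.96.0.1 because kube-apiserver gives the built-in `kubernetes` Service the first address of `--service-cluster-ip-range`. If you use a different Service CIDR, the sketch below derives that address; `first_service_ip` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print network address + 1 for an IPv4 CIDR, i.e. the ClusterIP that
# kube-apiserver assigns to the "kubernetes" Service.
first_service_ip() {
  local ip=${1%/*} prefix=${1#*/} a b c d n mask
  IFS=. read -r a b c d <<< "$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_service_ip 10.96.0.0/16   # Service CIDR used in this document
```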

3.3.3 Generate the controller-manager certificate on Master01

cfssl gencert \
   -ca=/etc/kubernetes/pki/ca.pem \
   -ca-key=/etc/kubernetes/pki/ca-key.pem \
   -config=ca-config.json \
   -profile=kubernetes \
   manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

# Output (the warning can be ignored)

# Note: if this is not an HA cluster, replace 10.0.0.200 with master01's address and 16443 with the apiserver port (default 6443)

# set-cluster: define a cluster entry

kubectl config set-cluster kubernetes \
     --certificate-authority=/etc/kubernetes/pki/ca.pem \
     --embed-certs=true \
     --server=https://10.0.0.200:16443 \
     --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-context: define a context

kubectl config set-context system:kube-controller-manager@kubernetes \
     --cluster=kubernetes \
     --user=system:kube-controller-manager \
     --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-credentials: define a user entry

kubectl config set-credentials system:kube-controller-manager \
     --client-certificate=/etc/kubernetes/pki/controller-manager.pem \
     --client-key=/etc/kubernetes/pki/controller-manager-key.pem \
     --embed-certs=true \
     --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# use-context: make this context the default

kubectl config use-context system:kube-controller-manager@kubernetes \
     --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# Generate the scheduler certificate and kubeconfig

cfssl gencert \
   -ca=/etc/kubernetes/pki/ca.pem \
   -ca-key=/etc/kubernetes/pki/ca-key.pem \
   -config=ca-config.json \
   -profile=kubernetes \
   scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

# Note: if this is not an HA cluster, replace 10.0.0.200 with master01's address and 16443 with the apiserver port (default 6443)

kubectl config set-cluster kubernetes \
     --certificate-authority=/etc/kubernetes/pki/ca.pem \
     --embed-certs=true \
     --server=https://10.0.0.200:16443 \
     --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \
     --client-certificate=/etc/kubernetes/pki/scheduler.pem \
     --client-key=/etc/kubernetes/pki/scheduler-key.pem \
     --embed-certs=true \
     --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \
     --cluster=kubernetes \
     --user=system:kube-scheduler \
     --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \
     --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

# Generate the admin certificate and kubeconfig

cfssl gencert \
   -ca=/etc/kubernetes/pki/ca.pem \
   -ca-key=/etc/kubernetes/pki/ca-key.pem \
   -config=ca-config.json \
   -profile=kubernetes \
   admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

# Note: if this is not an HA cluster, replace 10.0.0.200 with master01's address and 16443 with the apiserver port (default 6443)

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.200:16443 --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig

3.3.4 Create the ServiceAccount key (secret) on Master01

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

Output:

3.3.5 Generate the public key

openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub
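kube-apiserver signs ServiceAccount tokens with sa.key and verifies them with sa.pub, so both files must come from the same key pair (for example after copying them between Masters). A sketch that checks this by re-deriving the public key with openssl; `sa_keypair_matches` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Succeed if the public key file $2 was derived from the private key file $1.
sa_keypair_matches() {
  local key=$1 pub=$2 derived
  derived=$(openssl rsa -in "$key" -pubout 2>/dev/null) || return 1
  [ -n "$derived" ] && [ "$derived" = "$(cat "$pub")" ]
}
```

Usage: `sa_keypair_matches /etc/kubernetes/pki/sa.key /etc/kubernetes/pki/sa.pub && echo "sa key pair OK"`.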

3.3.6 Send the certificates to the other nodes

for NODE in master02 master03; do
  for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do
    scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}
  done
  for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do
    scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}
  done
done

3.3.7 Check the certificates on all Master nodes

[root@master01 pki]# ls /etc/kubernetes/pki/ |wc -l

4. High-availability configuration

4.1 Install HAProxy and Keepalived on all Master nodes

yum install keepalived haproxy -y

4.2 HAProxy configuration (identical on all three Master nodes)

mkdir -p /etc/haproxy/

cat > /etc/haproxy/haproxy.cfg <<EOF
global
  maxconn 2000
  ulimit-n 16384
  log 127.0.0.1 local0 err
  stats timeout 30s

defaults
  log global
  mode http
  option httplog
  timeout connect 5000
  timeout client 50000
  timeout server 50000
  timeout http-request 15s
  timeout http-keep-alive 15s

frontend monitor-in
  bind *:33305
  mode http
  option httplog
  monitor-uri /monitor

frontend k8s-master
  bind 0.0.0.0:16443
  bind 127.0.0.1:16443
  mode tcp
  option tcplog
  tcp-request inspect-delay 5s
  default_backend k8s-master

backend k8s-master
  mode tcp
  option tcplog
  option tcp-check
  balance roundrobin
  default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
  server master01 10.0.0.171:6443 check
  server master02 10.0.0.172:6443 check
  server master03 10.0.0.173:6443 check

EOF

4.3 Keepalived configuration

4.3.1 master01

cat > /etc/keepalived/keepalived.conf <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {              # instance name; backup nodes of the same instance must use the same name
    state MASTER                  # MASTER on the primary node, BACKUP on backup nodes
    interface eth0                # VRRP interface; same setting on backup nodes
    mcast_src_ip 10.0.0.171
    virtual_router_id 51          # must be identical on all nodes of this instance and unique among VRRP instances on the network
    priority 100                  # priority 100; backup nodes must use a lower value
    advert_int 1                  # advertisement interval: 1 second
    authentication {
        auth_type PASS            # PASS authentication; same on backup nodes
        auth_pass 1111            # password 1111; must be the same on all nodes
    }
    virtual_ipaddress {
        10.0.0.200 dev eth0 label eth0:3   # virtual IP
    }
    track_script {
        chk_apiserver             # health-check script
    }
}

EOF

4.3.2 master02

cat > /etc/keepalived/keepalived.conf <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {              # instance name; must match the MASTER node
    state BACKUP                  # BACKUP on backup nodes (MASTER on the primary)
    interface eth0                # VRRP interface; same as the MASTER node
    mcast_src_ip 10.0.0.172
    virtual_router_id 51          # must match the MASTER node
    priority 50                   # must be lower than the MASTER priority (100)
    advert_int 1                  # advertisement interval: 1 second
    authentication {
        auth_type PASS            # same as the MASTER node
        auth_pass 1111            # password 1111; must be the same on all nodes
    }
    virtual_ipaddress {
        10.0.0.200 dev eth0 label eth0:3   # virtual IP
    }
    track_script {
        chk_apiserver             # health-check script
    }
}

EOF

4.3.3 master03

cat > /etc/keepalived/keepalived.conf <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {              # instance name; must match the MASTER node
    state BACKUP                  # BACKUP on backup nodes (MASTER on the primary)
    interface eth0                # VRRP interface; same as the MASTER node
    mcast_src_ip 10.0.0.173
    virtual_router_id 51          # must match the MASTER node
    priority 50                   # must be lower than the MASTER priority (100)
    advert_int 1                  # advertisement interval: 1 second
    authentication {
        auth_type PASS            # same as the MASTER node
        auth_pass 1111            # password 1111; must be the same on all nodes
    }
    virtual_ipaddress {
        10.0.0.200 dev eth0 label eth0:3   # virtual IP
    }
    track_script {
        chk_apiserver             # health-check script
    }
}

EOF
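All three configurations use `weight -5`. When chk_apiserver fails, keepalived lowers master01's priority from 100 to 95, which is still above the backups' 50, so the weight alone would not move the VIP; the failover actually comes from the health-check script in 4.5 stopping keepalived. A sketch of that arithmetic (helper names are hypothetical; keepalived's real tie-breaking and preemption settings are not modeled):

```shell
#!/usr/bin/env bash
# Effective VRRP priority: the base priority, plus the (negative) weight
# while the tracked script is failing.
effective_priority() {
  local base=$1 weight=$2 check_ok=$3
  if [ "$check_ok" = yes ]; then echo "$base"; else echo $(( base + weight )); fi
}

# Given name:priority pairs, print the name with the highest priority
# (ties go to the first pair listed).
vip_owner() {
  local best_name="" best=-1 pair
  for pair in "$@"; do
    if (( ${pair#*:} > best )); then
      best=${pair#*:}
      best_name=${pair%%:*}
    fi
  done
  echo "$best_name"
}

m1=$(effective_priority 100 -5 no)   # master01 while chk_apiserver is failing
echo "master01 effective priority: $m1"
echo "VIP holder: $(vip_owner "master01:$m1" master02:50 master03:50)"
```

Once keepalived itself is stopped on master01, it stops sending VRRP advertisements, so a backup takes over the VIP.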

4.4 Start keepalived and haproxy and enable them at boot

systemctl enable keepalived && systemctl start keepalived && systemctl status keepalived

systemctl enable haproxy && systemctl start haproxy && systemctl status haproxy

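4.5 Write the health-check script

Create the script on every Master node:

[root@master01 ~]# vim /etc/keepalived/check_apiserver.sh

#!/bin/bash
# Check up to 3 times whether haproxy is running; if it is not,
# stop keepalived so that the VIP fails over to a backup node.
err=0
for k in $(seq 1 3); do
    check_code=$(pgrep haproxy)
    if [[ $check_code == "" ]]; then
        err=$(expr $err + 1)
        sleep 1
        continue
    else
        err=0
        break
    fi
done

if [[ $err != "0" ]]; then
    echo "systemctl stop keepalived"
    /usr/bin/systemctl stop keepalived
    exit 1
else
    exit 0
fi

Make the script executable so that keepalived can run it:

chmod +x /etc/keepalived/check_apiserver.sh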

5. Kubernetes component configuration

5.1 etcd configuration

5.1.1 master01

cat > /etc/etcd/etcd.config.yml <<EOF

name: 'master01'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.171:2380'

listen-client-urls: 'https://10.0.0.171:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.171:2380'

advertise-client-urls: 'https://10.0.0.171:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'master01=https://10.0.0.171:2380,master02=https://10.0.0.172:2380,master03=https://10.0.0.173:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

5.1.2 master02

cat > /etc/etcd/etcd.config.yml <<EOF

name: 'master02'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.172:2380'

listen-client-urls: 'https://10.0.0.172:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.172:2380'

advertise-client-urls: 'https://10.0.0.172:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'master01=https://10.0.0.171:2380,master02=https://10.0.0.172:2380,master03=https://10.0.0.173:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

5.1.3 master03

cat > /etc/etcd/etcd.config.yml <<EOF

name: 'master03'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.173:2380'

listen-client-urls: 'https://10.0.0.173:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.173:2380'

advertise-client-urls: 'https://10.0.0.173:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'master01=https://10.0.0.171:2380,master02=https://10.0.0.172:2380,master03=https://10.0.0.173:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF
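The three etcd configurations differ only in the member name and IP, while `initial-cluster` (and later kube-apiserver's `--etcd-servers`) must list every member identically. A sketch that derives both strings from one list so they cannot drift apart; `MEMBERS`, `initial_cluster` and `etcd_servers` are hypothetical names.

```shell
#!/usr/bin/env bash
# One name=IP list for all etcd members (taken from the host plan in 1.1).
MEMBERS="master01=10.0.0.171 master02=10.0.0.172 master03=10.0.0.173"

# Value for etcd's initial-cluster (peer port 2380).
initial_cluster() {
  local out="" m
  for m in $MEMBERS; do
    out+="${out:+,}${m%%=*}=https://${m#*=}:2380"
  done
  echo "$out"
}

# Value for kube-apiserver's --etcd-servers (client port 2379).
etcd_servers() {
  local out="" m
  for m in $MEMBERS; do
    out+="${out:+,}https://${m#*=}:2379"
  done
  echo "$out"
}

echo "initial-cluster: '$(initial_cluster)'"
echo "--etcd-servers=$(etcd_servers)"
```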

5.1.4 Configure systemd

# 1. Create the etcd service on all Master nodes and start it

cat > /usr/lib/systemd/system/etcd.service <<EOF

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

# 2. Create the etcd certificate directory under /etc/kubernetes/pki on all Master nodes and link the certificates into it

mkdir /etc/kubernetes/pki/etcd

ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

systemctl daemon-reload

systemctl enable --now etcd

systemctl status etcd

# 3. Check the etcd cluster status

export ETCDCTL_API=3

etcdctl --endpoints="10.0.0.171:2379,10.0.0.172:2379,10.0.0.173:2379" --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table

5.2 apiserver

Create the kube-apiserver service on all Master nodes. Note: if this is not an HA cluster, replace 10.0.0.200 with master01's address.

5.2.1 master01 configuration

Note: this document uses 10.96.0.0/16 as the Kubernetes Service CIDR. It must not overlap with the host network or the Pod CIDR; adjust it as needed.

cat > /usr/lib/systemd/system/kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --advertise-address=10.0.0.171 \
      --service-cluster-ip-range=10.96.0.0/16 \
      --service-node-port-range=30000-32767 \
      --etcd-servers=https://10.0.0.171:2379,https://10.0.0.172:2379,https://10.0.0.173:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User
      # --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

5.2.2 master02配置

cat > /usr/lib/systemd/system/kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --advertise-address=10.0.0.172 \
      --service-cluster-ip-range=10.96.0.0/16 \
      --service-node-port-range=30000-32767 \
      --etcd-servers=https://10.0.0.171:2379,https://10.0.0.172:2379,https://10.0.0.173:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535[Install]

WantedBy=multi-user.target

EOF

5.2.3 master03 configuration

cat > /usr/lib/systemd/system/kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --advertise-address=10.0.0.173 \
      --service-cluster-ip-range=10.96.0.0/16 \
      --service-node-port-range=30000-32767 \
      --etcd-servers=https://10.0.0.171:2379,https://10.0.0.172:2379,https://10.0.0.173:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User

Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF
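The three units above are identical except for --advertise-address. The function below is a hedged sketch and is not part of the original procedure. It derives another master's unit from master01's file by changing only that flag. The function name render_apiserver_unit and the MASTER01_IP variable are hypothetical, and the sketch assumes master01's file matches section 5.2.1.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite only --advertise-address in a copy of
# master01's kube-apiserver unit. The --etcd-servers list also contains
# 10.0.0.171, but it is left untouched because the pattern includes the
# flag name. (Dots in the pattern match any character, which is harmless here.)
MASTER01_IP="10.0.0.171"   # assumption: master01 address used in this guide

render_apiserver_unit() {
  local src="$1" ip="$2"
  sed "s#--advertise-address=${MASTER01_IP}#--advertise-address=${ip}#" "$src"
}

# Example (assumes the passwordless SSH set up in section 1.5):
# render_apiserver_unit /usr/lib/systemd/system/kube-apiserver.service 10.0.0.172 \
#   | ssh master02 'cat > /usr/lib/systemd/system/kube-apiserver.service'
```

Deriving the files this way avoids copy-paste drift between masters. Hand-writing each unit, as above, works just as well.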

5.2.4 Start kube-apiserver

# Start kube-apiserver on all master nodes

systemctl daemon-reload && systemctl enable --now kube-apiserver

# Check the kube-apiserver status
systemctl status kube-apiserver

5.3 Controller Manager

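5.3.1 Configuration (identical on all master nodes)

# Configure the kube-controller-manager service on all master nodes (the configuration is the same on each)

# Note: this guide uses 172.16.0.0/16 as the k8s Pod CIDR. It must not overlap the host network or the k8s Service CIDR; adjust it as needed.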

cat > /usr/lib/systemd/system/kube-controller-manager.service <<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]
ExecStart=/usr/local/bin/kube-controller-manager \
      --v=2 \
      --root-ca-file=/etc/kubernetes/pki/ca.pem \
      --cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \
      --cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \
      --service-account-private-key-file=/etc/kubernetes/pki/sa.key \
      --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \
      --authentication-kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \
      --authorization-kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \
      --leader-elect=true \
      --use-service-account-credentials=true \
      --node-monitor-grace-period=40s \
      --node-monitor-period=5s \
      --controllers=*,bootstrapsigner,tokencleaner \
      --allocate-node-cidrs=true \
      --cluster-cidr=172.16.0.0/16 \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --node-cidr-mask-size=24

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF
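The comment above requires the Pod CIDR to stay clear of both the host network and the Service CIDR. Below is a minimal pure-bash sketch for checking whether two IPv4 CIDRs overlap. The function names are hypothetical. The host network 10.0.0.0/24 in the example is an assumption inferred from the node addresses.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for IPv4 CIDR arithmetic in pure bash (64-bit arithmetic).

# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Print the first and last address of a CIDR as integers: "start end".
cidr_range() {
  local ip="${1%/*}" prefix="${1#*/}"
  local n mask start
  n=$(ip_to_int "$ip")
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  start=$(( n & mask ))
  echo "$start $(( start | (~mask & 0xFFFFFFFF) ))"
}

# Succeed (exit status 0) if the two CIDRs share at least one address.
cidrs_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<< "$(cidr_range "$1")"
  read -r s2 e2 <<< "$(cidr_range "$2")"
  (( s1 <= e2 && s2 <= e1 ))
}

# Example with this guide's values (10.0.0.0/24 as the host network is an assumption):
# for pair in "172.16.0.0/16 10.96.0.0/16" "172.16.0.0/16 10.0.0.0/24" "10.96.0.0/16 10.0.0.0/24"; do
#   set -- $pair
#   cidrs_overlap "$1" "$2" && echo "CONFLICT: $1 overlaps $2"
# done
```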

5.3.2 Start kube-controller-manager

# Start kube-controller-manager on all master nodes
systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

5.4 scheduler

5.4.1 Configure the kube-scheduler service on all master nodes (identical on every master)

cat > /usr/lib/systemd/system/kube-scheduler.service <<EOF
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-scheduler \
      --v=2 \
      --leader-elect=true \
      --authentication-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \
      --authorization-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \
      --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

5.4.2 Start kube-scheduler (all master nodes)

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

6. TLS Bootstrapping configuration

The bootstrap kubeconfig only needs to be created on Master01.

6.1 Create the bootstrap kubeconfig on Master01

# Note: if this is not an HA cluster, replace 10.0.0.200:16443 with master01's address and change 16443 to the apiserver port (6443 by default).
cd /root/k8s-ha-install/bootstrap

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.200:16443 --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-credentials tls-bootstrap-token-user --token=c8ad9c.2e4d610cf3e7426e --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-context tls-bootstrap-token-user@kubernetes --cluster=kubernetes --user=tls-bootstrap-token-user --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config use-context tls-bootstrap-token-user@kubernetes --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

# Note: if you change the token-id and token-secret in bootstrap.secret.yaml, update every occurrence in that file consistently (including the Secret name bootstrap-token-<token-id>) and keep the same lengths. The token passed to set-credentials above (c8ad9c.2e4d610cf3e7426e) must also match the new <token-id>.<token-secret>.
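Three values in the note above must agree: the token passed to set-credentials, and the token-id and token-secret in bootstrap.secret.yaml (which also appear in the Secret name). To replace c8ad9c.2e4d610cf3e7426e with a fresh token, the following minimal sketch generates and validates one using only coreutils (od, tr). The function names are hypothetical. The rule it checks is the Kubernetes bootstrap token format: a 6-character token-id, a dot, then a 16-character token-secret, all from [a-z0-9].

```shell
#!/usr/bin/env bash
# Bootstrap token format: <token-id>.<token-secret>, 6 and 16 characters from [a-z0-9].
is_valid_bootstrap_token() {
  local re='^[a-z0-9]{6}\.[a-z0-9]{16}$'
  [[ "$1" =~ $re ]]
}

# Hypothetical generator: od reads a fixed number of random bytes and prints
# them as lowercase hex. Hex digits are a subset of [a-z0-9], so the result
# always has a valid format.
gen_bootstrap_token() {
  local id secret
  id=$(od -An -tx1 -N3 /dev/urandom | tr -d ' \n')      # 3 bytes -> 6 hex chars
  secret=$(od -An -tx1 -N8 /dev/urandom | tr -d ' \n')  # 8 bytes -> 16 hex chars
  echo "${id}.${secret}"
}

# Example:
# TOKEN=$(gen_bootstrap_token)
# TOKEN_ID=${TOKEN%%.*}; TOKEN_SECRET=${TOKEN#*.}
# is_valid_bootstrap_token "$TOKEN" && echo "token-id=$TOKEN_ID token-secret=$TOKEN_SECRET"
```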

6.2 Copy the admin kubeconfig

[root@k8s-master01 bootstrap]# mkdir -p /root/.kube ; cp /etc/kubernetes/admin.kubeconfig /root/.kube/config

# Continue only if the cluster status can be queried normally; otherwise troubleshoot the k8s components first.

kubectl get cs

6.3 Create the bootstrap Secret and RBAC bindings

[root@k8s-master01 bootstrap]# kubectl create -f bootstrap.secret.yaml

secret/bootstrap-token-c8ad9c created

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-certificate-rotation created

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

7. Node configuration

7.1 Copy certificates

Run on master01:

cd /etc/kubernetes/
for NODE in master02 master03 node01; do
  ssh $NODE mkdir -p /etc/kubernetes/pki
  for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
    scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
  done
done

7.2 kubelet configuration

Create the required directories on all nodes:

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

Configure the kubelet service on all nodes:

cat > /usr/lib/systemd/system/kubelet.service <<EOF
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes

[Service]
ExecStart=/usr/local/bin/kubelet
Restart=always
StartLimitInterval=0
RestartSec=10

[Install]
WantedBy=multi-user.target
EOF

On all nodes, create the kubelet service drop-in file.

If the runtime is Containerd, use the following kubelet configuration:

# Runtime: Containerd

# vim /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

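On all nodes, create the kubelet service drop-in file.

If the runtime is Docker, use the following kubelet configuration. Note that --network-plugin, --cni-conf-dir and --cni-bin-dir are dockershim options, and dockershim was removed in Kubernetes 1.24; on 1.24 and later, Docker can only be used through cri-dockerd and these flags no longer apply.

# Runtime: Docker

# vi /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]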

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

On all nodes, create the kubelet configuration file.

Note: if you changed the k8s Service CIDR, set clusterDNS in kubelet-conf.yml to the 10th address of the Service CIDR, e.g. 10.96.0.10.

# vi /etc/kubernetes/kubelet-conf.yml

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem

authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s

cgroupDriver: systemd

cgroupsPerQOS: true

clusterDNS:

- 10.96.0.10

clusterDomain: cluster.local

containerLogMaxFiles: 5

containerLogMaxSize: 10Mi

contentType: application/vnd.kubernetes.protobuf

cpuCFSQuota: true

cpuManagerPolicy: none

cpuManagerReconcilePeriod: 10s

enableControllerAttachDetach: true

enableDebuggingHandlers: true

enforceNodeAllocatable:

- pods

eventBurst: 10

eventRecordQPS: 5

evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%

evictionPressureTransitionPeriod: 5m0s

failSwapOn: true

fileCheckFrequency: 20s

hairpinMode: promiscuous-bridge

healthzBindAddress: 127.0.0.1

healthzPort: 10248

httpCheckFrequency: 20s

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

imageMinimumGCAge: 2m0s

iptablesDropBit: 15

iptablesMasqueradeBit: 14

kubeAPIBurst: 10

kubeAPIQPS: 5

makeIPTablesUtilChains: true

maxOpenFiles: 1000000

maxPods: 110

nodeStatusUpdateFrequency: 10s

oomScoreAdj: -999

podPidsLimit: -1

registryBurst: 10

registryPullQPS: 5

resolvConf: /etc/resolv.conf

rotateCertificates: true

runtimeRequestTimeout: 2m0s

serializeImagePulls: true

staticPodPath: /etc/kubernetes/manifests

streamingConnectionIdleTimeout: 4h0m0s

syncFrequency: 1m0s

volumeStatsAggPeriod: 1m0s
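The note before this file says clusterDNS must be the 10th address of the Service CIDR. The following hedged pure-bash sketch computes the Nth address of an IPv4 CIDR, which helps if you use a Service CIDR other than 10.96.0.0/16. The function name is hypothetical, and the sketch does not check that N fits inside the CIDR.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the Nth address of an IPv4 CIDR
# (N=1 is the first address after the network address).
# It does not check that N actually fits inside the CIDR.
nth_ip_in_cidr() {
  local ip="${1%/*}" prefix="${1#*/}" n="$2"
  local a b c d base mask addr
  IFS=. read -r a b c d <<< "$ip"
  base=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  addr=$(( (base & mask) + n ))
  echo "$(( (addr >> 24) & 255 )).$(( (addr >> 16) & 255 )).$(( (addr >> 8) & 255 )).$(( addr & 255 ))"
}

# Example: the kubernetes Service takes the 1st address, clusterDNS the 10th.
# nth_ip_in_cidr 10.96.0.0/16 1    # prints 10.96.0.1
# nth_ip_in_cidr 10.96.0.0/16 10   # prints 10.96.0.10
```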

Start kubelet on all nodes:

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

7.3 Check cluster status

kubectl get no

At this point nodes may show either Ready or NotReady. Both are expected because Calico has not been installed yet.

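7.4 kube-proxy configuration

Run the following steps only on Master01.

# Note: if this is not an HA cluster, replace 10.0.0.200:16443 with master01's address and change 16443 to the apiserver port (6443 by default).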

cd /root/k8s-ha-install/pki

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  kube-proxy-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-proxy

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.0.200:16443 \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-credentials system:kube-proxy \
  --client-certificate=/etc/kubernetes/pki/kube-proxy.pem \
  --client-key=/etc/kubernetes/pki/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context system:kube-proxy@kubernetes \
  --cluster=kubernetes \
  --user=system:kube-proxy \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config use-context system:kube-proxy@kubernetes \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

Send the kubeconfig to the other nodes:

for NODE in master02 master03; do
  scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig
done

for NODE in node01; do
  scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig
done

On all nodes, add the kube-proxy service file and configuration file:

# vi /usr/lib/systemd/system/kube-proxy.service

[Unit]
Description=Kubernetes Kube Proxy
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --v=2

Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target

If you changed the cluster's Pod CIDR, set clusterCIDR in kube-proxy.yaml to your Pod CIDR.

# vi /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
  qps: 5

clusterCIDR: 172.16.0.0/16

configSyncPeriod: 15m0s

conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s

ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s

kind: KubeProxyConfiguration

metricsBindAddress: 127.0.0.1:10249

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms
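If you change the Pod CIDR, the --cluster-cidr flag of kube-controller-manager (section 5.3.1) must match clusterCIDR here, and both must match the value substituted into calico.yaml in section 8.1. The following hypothetical sketch reads the first two values and compares them. It assumes the flag and key are written exactly as in this guide, one per line.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: extract the Pod CIDR from the controller-manager unit
# and from kube-proxy.yaml, then compare them.
pod_cidr_from_controller_manager() {
  grep -o -- '--cluster-cidr=[0-9./]*' "$1" | head -n 1 | cut -d= -f2
}

pod_cidr_from_kube_proxy() {
  awk '$1 == "clusterCIDR:" { print $2; exit }' "$1"
}

check_pod_cidr() {
  local cm kp
  cm=$(pod_cidr_from_controller_manager "$1")
  kp=$(pod_cidr_from_kube_proxy "$2")
  if [[ -n "$cm" && "$cm" == "$kp" ]]; then
    echo "OK: Pod CIDR $cm"
  else
    echo "MISMATCH: controller-manager=${cm:-?} kube-proxy=${kp:-?}" >&2
    return 1
  fi
}

# Example:
# check_pod_cidr /usr/lib/systemd/system/kube-controller-manager.service /etc/kubernetes/kube-proxy.yaml
```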

Start kube-proxy on all nodes:

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

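8. Install the Calico network plugin

8.1 Set the Pod CIDR

Run the following steps only on Master01, and change the Pod CIDR to match your cluster:

cd /root/k8s-ha-install/calico/

# Preview the substitution and confirm the change
sed "s#POD_CIDR#172.16.0.0/16#g" calico.yaml | grep 172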

sed -i "s#POD_CIDR#172.16.0.0/16#g" calico.yaml
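Instead of eyeballing the grep output, a small helper can confirm that a template placeholder is gone and the new value is present. This is a hedged sketch, and the function name placeholder_replaced is hypothetical. The same check also fits the KUBEDNS_SERVICE_IP substitution in section 9.1.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if PLACEHOLDER no longer appears in FILE
# and VALUE (matched as a fixed string) does.
placeholder_replaced() {
  local file="$1" placeholder="$2" value="$3"
  if grep -qF -- "$placeholder" "$file"; then
    echo "$file still contains $placeholder" >&2
    return 1
  fi
  grep -qF -- "$value" "$file"
}

# Example, after the sed -i above:
# placeholder_replaced calico.yaml POD_CIDR 172.16.0.0/16 && echo "calico.yaml updated"
```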

8.2 Install Calico

kubectl apply -f calico.yaml

8.3 Check container status

kubectl get po -n kube-system

If a container is in an abnormal state, use kubectl describe or kubectl logs to inspect it.

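9. Install CoreDNS

Run the following steps only on Master01.

9.1 Set the CoreDNS Service IP

cd /root/k8s-ha-install/

# If you changed the k8s Service CIDR, the CoreDNS Service IP must be the 10th IP of that CIDR.
# The command below takes the kubernetes Service IP (the 1st IP, e.g. 10.96.0.1) and appends "0",
# which yields the 10th IP (10.96.0.10) as long as that IP ends in ".1".
COREDNS_SERVICE_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0

echo $COREDNS_SERVICE_IP    # check the value

[root@master01 k8s-ha-install]# sed "s#KUBEDNS_SERVICE_IP#${COREDNS_SERVICE_IP}#g" CoreDNS/coredns.yaml | grep 10.96
  clusterIP: 10.96.0.10    # confirm the substitution

sed -i "s#KUBEDNS_SERVICE_IP#${COREDNS_SERVICE_IP}#g" CoreDNS/coredns.yaml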

9.2 Install CoreDNS

kubectl create -f CoreDNS/coredns.yaml

9.3 Check status

kubectl get pods -n kube-system | grep dns

9.4 Install the latest CoreDNS (alternative to 9.2)

COREDNS_SERVICE_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0

git clone https://github.com/coredns/deployment.git

cd deployment/kubernetes

./deploy.sh -s -i ${COREDNS_SERVICE_IP} | kubectl apply -f -

serviceaccount/coredns created

clusterrole.rbac.authorization.k8s.io/system:coredns created

clusterrolebinding.rbac.authorization.k8s.io/system:coredns created

configmap/coredns created

deployment.apps/coredns created

service/kube-dns created

Check the status:

kubectl get po -n kube-system -l k8s-app=kube-dns

NAME READY STATUS RESTARTS AGE

coredns-85b4878f78-h29kh 1/1 Running 0 8h

10. Install Metrics Server

Run the following steps only on Master01.

10.1 Create Metrics Server

cd /root/k8s-ha-install/metrics-server

[root@master01 metrics-server]# kubectl create -f .

serviceaccount/metrics-server created

clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created

clusterrole.rbac.authorization.k8s.io/system:metrics-server created

rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created

clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created

service/metrics-server created

deployment.apps/metrics-server created

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

10.2 Check status

[root@master01 metrics-server]# kubectl get pods -n kube-system | grep metrics

metrics-server-6bf7dcd649-r48gr 1/1 Running 0 2m5s

11. Install Dashboard

Run on Master01:

cd /root/k8s-ha-install/dashboard/

kubectl create -f .

12. Cluster availability verification

12.1 All nodes must be healthy

kubectl get node

12.2 All Pods must be healthy

kubectl get pod -A

12.3 Check that the cluster CIDRs do not conflict

kubectl get svc

kubectl get pod -A -owide

12.4 Resources can be created normally

kubectl create deploy cluster-test --image=registry.cn-beijing.aliyuncs.com/dotbalo/debug-tools -- sleep 3600

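12.5 Pods must be able to resolve Services (in the same namespace and across namespaces)

# Exec into the test Pod using the NAME shown by kubectl get pod (replace the name below with yours)

kubectl exec -it cluster-test-84dfc9c68b-lbkhd -- bash

# Resolve the two names below; they should map to the .1 and .10 Service IPs respectively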

nslookup kubernetes

nslookup kube-dns.kube-system

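12.6 Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53

curl https://10.96.0.1:443

curl 10.96.0.10:53

Run both commands on every node. Any immediate reply from curl, including a TLS certificate error on 443 or an empty reply on 53, shows that the Service is reachable; a hang or timeout means it is not.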
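Reading curl's error messages works, but a plain TCP connect test gives a clear pass/fail per node. The following hedged sketch uses bash's /dev/tcp pseudo-device and coreutils timeout. The function name port_reachable is hypothetical. The example loop assumes bash on the remote side and reuses the node names from this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if a TCP connection to HOST:PORT opens
# within SECS seconds (default 3), non-zero on refusal or timeout.
port_reachable() {
  local host="$1" port="$2" secs="${3:-3}"
  timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Example: run the check on every node over SSH.
# for node in master01 master02 master03 node01; do
#   if ssh "$node" "$(declare -f port_reachable); port_reachable 10.96.0.1 443 && port_reachable 10.96.0.10 53"; then
#     echo "$node: ok"
#   else
#     echo "$node: FAILED"
#   fi
# done
```

This checks TCP only. CoreDNS also serves DNS over TCP on port 53, which is what the curl test above exercises as well.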

12.7 Pods must be able to communicate with each other (in the same namespace and across namespaces)

[root@master01 metrics-server]# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

cluster-test-8b47d69f5-rgllt 1/1 Running 0 4m31s 172.16.196.129 node01 <none> <none>

[root@master01 metrics-server]# kubectl -n kube-system get pod -owide

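12.8 Pods must be able to communicate with each other (on the same host and across hosts)

# 172.16.241.65 is a Pod IP (for example, one from the kube-system listing above); replace it with a Pod IP from your cluster
for node in master02 master03 node01; do ssh $node ping -c 2 172.16.241.65 && echo node: $node; done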

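This article is based on Du Kuan's course "Cloud-Native Kubernetes Full-Stack Architect" (《云原生Kubernetes全栈架构师》), including its videos and documentation. Thanks for the high-quality material!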