一、多感知任务

在移动机器人的感知系统,包括自动驾驶汽车和无人机,会使用多种传感器来获取关键信息,从而实现对环境的感知和物体检测。这些传感器包括相机、激光雷达、雷达、惯性测量单元(IMU)、全球导航卫星系统(GNSS)等。在自动驾驶和移动机器人的感知系统里面,相机的功能主要是周围的物体,这些感知任务包括任务包括物体检测和分割。

物体检测:物体检测是指通过处理传感器数据来检测环境中的物体,如汽车、行人、骑自行车的人、红绿灯等。YOLO系列网络是常用于实时物体检测的解决方案。这些网络可以实时识别多个物体,并提供它们的位置和边界框。

实例分割:实例分割是一项更复杂的任务,它不仅可以检测物体,还可以将它们分割成属于不同实例的部分。这对于跟踪和识别不同的车辆或行人非常有用。通常,Mask R-CNN等网络用于实例分割。

语义分割:语义分割是将图像中的每个像素分配到特定的语义类别的任务,例如将道路、建筑物、车辆等分割成不同的区域。U-Net和全卷积网络(FCN)等CNN架构通常用于语义分割。

在处理这些任务时,有两种主要方法可以考虑:

单一模型:开发一个单一的神经网络模型,可以同时处理多个感知任务,如检测、分割。这种方法可以提高计算效率,但需要大量的训练数据和复杂的网络结构。

多模型集成:使用多个独立的模型来处理不同的感知任务,每个模型专注于一个任务。这种方法可以更容易地处理不同任务之间的数据不平衡,并且可以根据任务的重要性对不同模型进行资源分配。

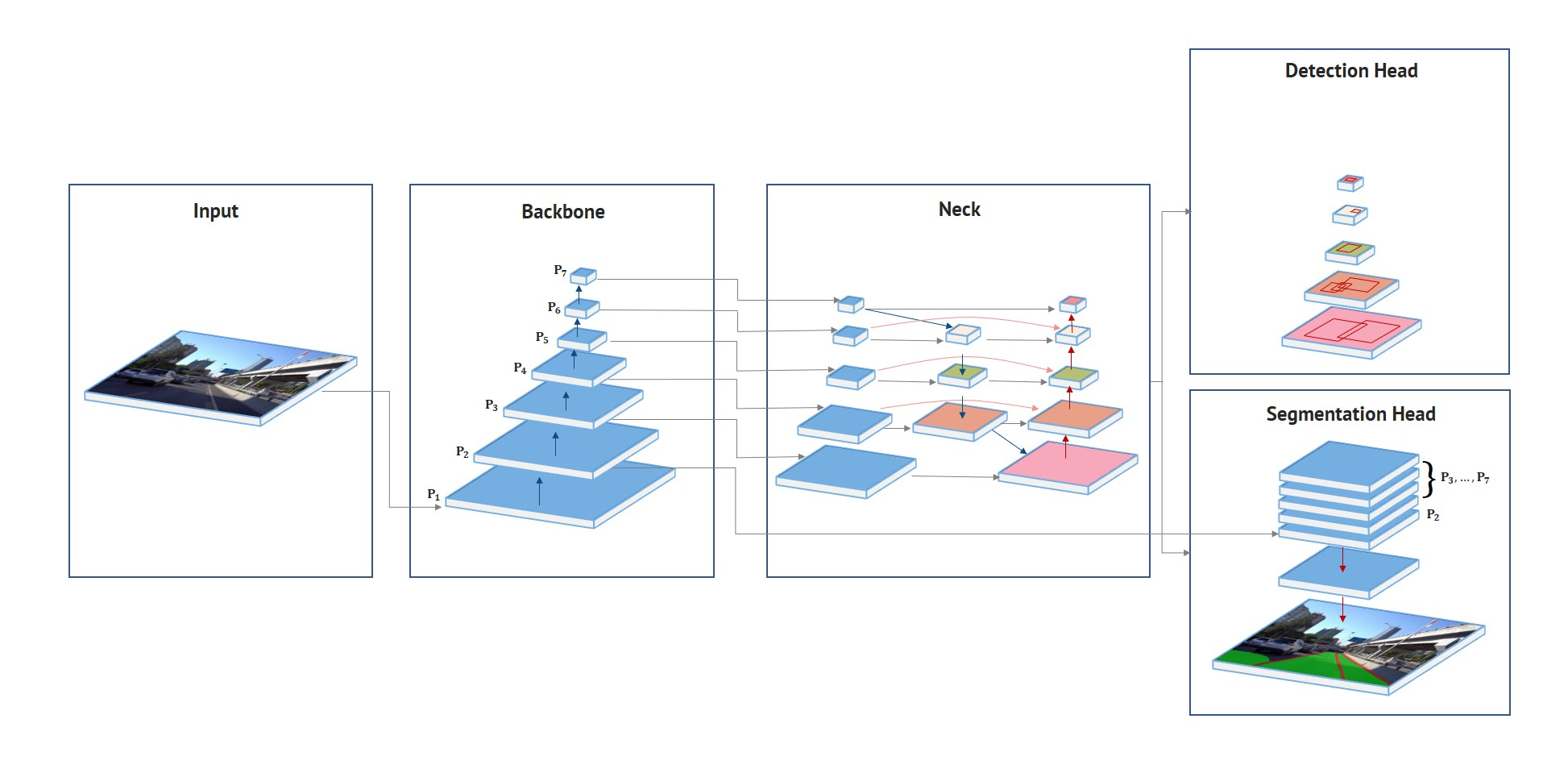

二、Hybridnets多任务框架

Hybridnets一种多任务处理的端到端多感知网络,它提出了几种关键的优化方法以提高精度。

- 引入了基于加权双向特征网络的高效分割头和边界框/类别预测网络。

- 提出了加权双向特征网络中各层的自动定制锚定方法。

- 提出了一种有效的训练损失函数和训练策略,以平衡和优化网络性能。

基于这些优化,Hybridnets开发了一种端到端感知网络,用于执行多任务处理,包括交通目标检测、可驾驶区域分割和车道线检测,将其称为混合网。混合网在伯克利DeepDrive数据集上表现出色,平均精度达到了77.3%,平均交并比为31.6%,并且参数数量仅为1283万,浮点操作数为156亿。此外,它能够实时执行视觉感知任务,因此是一个实用而准确的多任务处理解决方案。

算法源码:https://github.com/datvuthanh/HybridNets.git

三、使用C++进行模型部署

把训练好的模型转成onnx格式的模型,然后使用OpenCV 4.6 和contrib dnn模块进行推理,使用的IDE是Vs 2019。

首先定义一人模型推理的类,这里使用为了方便演示,只用CPU进行推理。

struct Net_config

{float confThreshold; float nmsThreshold; std::string modelpath;std::string anchorpath;

};class HybridNets

{

public:HybridNets(Net_config config);cv::Mat detect(cv::Mat frame); ~HybridNets();

private:int inpWidth;int inpHeight;std::vector<std::string> det_class_names = { "car" };std::vector<std::string> seg_class_names = { "Background", "Lane", "Line" };int det_num_class;int seg_numclass;float confThreshold;float nmsThreshold;cv::dnn::Net net;float* anchors = nullptr;const float mean_[3] = { 0.485, 0.456, 0.406 };const float std_[3] = { 0.229, 0.224, 0.225 };const bool keep_ratio = true;cv::Mat resize_image(cv::Mat srcimg, int* newh, int* neww, int* padh, int* padw);cv::Mat normalize_(cv::Mat img);std::vector<cv::Vec3b> class_colors = { cv::Vec3b(0,0,0), cv::Vec3b(0, 255, 0), cv::Vec3b(255, 0, 0) };

};HybridNets::HybridNets(Net_config config)

{this->confThreshold = config.confThreshold;this->nmsThreshold = config.nmsThreshold;this->net = cv::dnn::readNet(config.modelpath); this->det_num_class = det_class_names.size();this->seg_numclass = seg_class_names.size();size_t pos = config.modelpath.rfind("_");size_t pos_ = config.modelpath.rfind(".");int len = pos_ - pos - 1;std::string hxw = config.modelpath.substr(pos + 1, len);pos = hxw.rfind("x");std::string h = hxw.substr(0, pos);len = hxw.length() - pos;std::string w = hxw.substr(pos + 1, len);this->inpHeight = stoi(h);this->inpWidth = stoi(w);pos = config.anchorpath.rfind("_");pos_ = config.anchorpath.rfind(".");len = pos_ - pos - 1;std::string len_ = config.anchorpath.substr(pos + 1, len);len = stoi(len_);this->anchors = new float[len];FILE* fp = fopen(config.anchorpath.c_str(), "rb");fread(this->anchors, sizeof(float), len, fp);fclose(fp);

}HybridNets::~HybridNets()

{delete[] anchors;anchors = nullptr;

}cv::Mat HybridNets::resize_image(cv::Mat srcimg, int* newh, int* neww, int* padh, int* padw)

{int srch = srcimg.rows, srcw = srcimg.cols;*newh = this->inpHeight;*neww = this->inpWidth;cv::Mat dstimg;if (this->keep_ratio && srch != srcw) {float hw_scale = (float)srch / srcw;if (hw_scale > 1) {*newh = this->inpHeight;*neww = int(this->inpWidth / hw_scale);resize(srcimg, dstimg, cv::Size(*neww, *newh), cv::INTER_LINEAR);*padw = int((this->inpWidth - *neww) * 0.5);copyMakeBorder(dstimg, dstimg, 0, 0, *padw, this->inpWidth - *neww - *padw, cv::BORDER_CONSTANT, 114);}else {*newh = (int)this->inpHeight * hw_scale;*neww = this->inpWidth;resize(srcimg, dstimg, cv::Size(*neww, *newh), cv::INTER_LINEAR);*padh = (int)(this->inpHeight - *newh) * 0.5;copyMakeBorder(dstimg, dstimg, *padh, this->inpHeight - *newh - *padh, 0, 0, cv::BORDER_CONSTANT, 114);}}else {resize(srcimg, dstimg, cv::Size(*neww, *newh), cv::INTER_LINEAR);}return dstimg;

}cv::Mat HybridNets::normalize_(cv::Mat img)

{std::vector<cv::Mat> bgrChannels(3);split(img, bgrChannels);for (int c = 0; c < 3; c++){bgrChannels[c].convertTo(bgrChannels[c], CV_32FC1, 1.0 / (255.0 * std_[c]), (0.0 - mean_[c]) / std_[c]);}cv::Mat m_normalized_mat;merge(bgrChannels, m_normalized_mat);return m_normalized_mat;

}cv::Mat HybridNets::detect(cv::Mat srcimg)

{int newh = 0, neww = 0, padh = 0, padw = 0;cv::Mat rgbimg;cvtColor(srcimg, rgbimg, cv::COLOR_BGR2RGB);cv::Mat dstimg = this->resize_image(rgbimg, &newh, &neww, &padh, &padw);cv::Mat normalized_mat = this->normalize_(dstimg);cv::Mat blob = cv::dnn::blobFromImage(normalized_mat);this->net.setInput(blob);std::vector<cv::Mat> outs;this->net.forward(outs, this->net.getUnconnectedOutLayersNames());float* classification = (float*)outs[0].data;float* box_regression = (float*)outs[1].data;float* seg = (float*)outs[2].data;std::vector<cv::Rect> boxes;std::vector<float> confidences;std::vector<int> classIds;float ratioh = (float)srcimg.rows / newh, ratiow = (float)srcimg.cols / neww;const int num_proposal = outs[1].size[1]; for (int n = 0; n < num_proposal; n++){float conf = classification[n];if (conf > this->confThreshold){const int row_ind = n * 4;float x_centers = box_regression[row_ind + 1] * this->anchors[row_ind + 2] + this->anchors[row_ind];float y_centers = box_regression[row_ind] * this->anchors[row_ind + 3] + this->anchors[row_ind + 1];float w = exp(box_regression[row_ind + 3]) * this->anchors[row_ind + 2];float h = exp(box_regression[row_ind + 2]) * this->anchors[row_ind + 3];float xmin = (x_centers - w * 0.5 - padw) * ratiow;float ymin = (y_centers - h * 0.5 - padh) * ratioh;w *= ratiow;h *= ratioh;cv::Rect box = cv::Rect(int(xmin), int(ymin), int(w), int(h));boxes.push_back(box);confidences.push_back(conf);classIds.push_back(0);}}std::vector<int> indices;cv::dnn::NMSBoxes(boxes, confidences, this->confThreshold, this->nmsThreshold, indices);cv::Mat outimg = srcimg.clone();for (size_t i = 0; i < indices.size(); ++i){int idx = indices[i];cv::Rect box = boxes[idx];rectangle(outimg, cv::Point(box.x, box.y), cv::Point(box.x + box.width, box.y + box.height), cv::Scalar(0, 0, 255), 2);std::string label = cv::format("%.2f", confidences[idx]);label = this->det_class_names[classIds[idx]] + ":" + label;putText(outimg, label, cv::Point(box.x, box.y - 5), cv::FONT_HERSHEY_SIMPLEX, 0.75, cv::Scalar(0, 0, 255), 1);}const int area = this->inpHeight * this->inpWidth;int i = 0, j = 0, c = 0;for (i = 0; i < outimg.rows; i++){for (j = 0; j < outimg.cols; j++){const int x = int(j / ratiow) + padw; const int y = int(i / ratioh) + padh;int max_id = -1;float max_conf = -10000;for (c = 0; c < seg_numclass; c++){float seg_conf = seg[c * area + y * this->inpWidth + x];if (seg_conf > max_conf){max_id = c;max_conf = seg_conf;}}if (max_id > 0){outimg.at<cv::Vec3b>(i, j)[0] = this->class_colors[max_id][0];outimg.at<cv::Vec3b>(i, j)[1] = this->class_colors[max_id][1];outimg.at<cv::Vec3b>(i, j)[2] = this->class_colors[max_id][2];}}}return outimg;

}

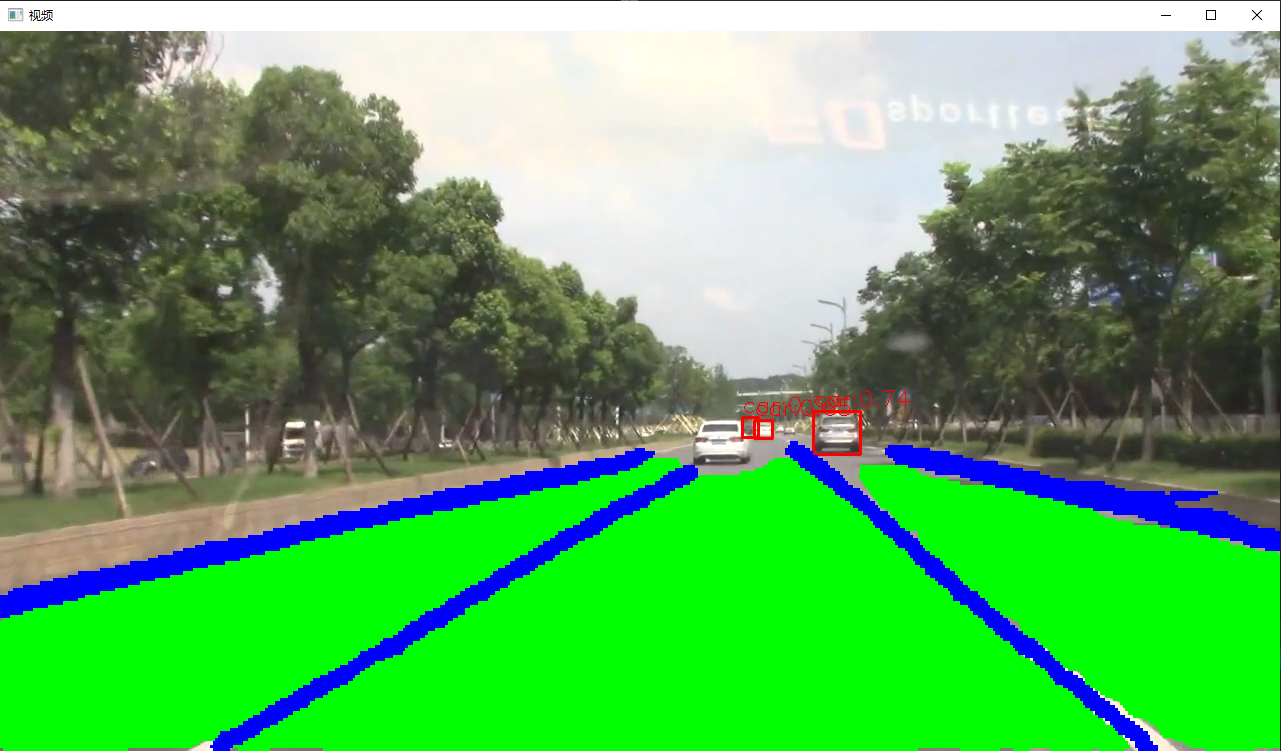

读取视频,然后使用模型进行推理

int main()

{Net_config cfg = { 0.3, 0.5, "models/hybridnets_768x1280.onnx", "models/anchors_736560.bin" };HybridNets net(cfg);//cv::VideoWriter outputVideo;cv::VideoCapture cap("test2.mp4");//cv::Size S = cv::Size((int)cap.get(cv::CAP_PROP_FRAME_WIDTH),//(int)cap.get(cv::CAP_PROP_FRAME_HEIGHT));//std::string out_path = "dst.ma4";if (cap.isOpened()){//outputVideo.open(out_path, -1, 30.0, S, true);cv::Mat cv_fram;while (1){cap.read(cv_fram);if (!cv_fram.empty()){cv::Mat outimg = net.detect(cv_fram);//outputVideo << outimg;cv::imshow("视频", outimg);}if (cv::waitKey(100) == 27)break;}}cap.release();return 0;

}

推理效果如下:

四、使用Python进行模型部署

import cv2

import argparse

import numpy as np

import osprint(cv2.__version__)class HybridNets():def __init__(self, modelpath, anchorpath, confThreshold=0.5, nmsThreshold=0.5):self.det_classes = ["car"]self.seg_classes = ["Background", "Lane", "Line"]self.net = cv2.dnn.readNet(modelpath)self.confThreshold = confThresholdself.nmsThreshold = nmsThresholdh, w = os.path.basename(modelpath).split('_')[-1].replace('.onnx', '').split('x')self.inpHeight, self.inpWidth = int(h), int(w)self.mean_ = np.array([0.485, 0.456, 0.406], dtype=np.float32).reshape((1, 1, 3))self.std_ = np.array([0.229, 0.224, 0.225], dtype=np.float32).reshape((1, 1, 3))self.anchors = np.load(anchorpath) ### cx_cy_w_hdef resize_image(self, srcimg, keep_ratio=True):padh, padw, newh, neww = 0, 0, self.inpWidth, self.inpHeightif keep_ratio and srcimg.shape[0] != srcimg.shape[1]:hw_scale = srcimg.shape[0] / srcimg.shape[1]if hw_scale > 1:newh, neww = self.inpHeight, int(self.inpWidth / hw_scale)img = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_LINEAR)padw = int((self.inpWidth - neww) * 0.5)img = cv2.copyMakeBorder(img, 0, 0, padw, self.inpWidth - neww - padw, cv2.BORDER_CONSTANT,value=(114, 114, 114)) # add borderelse:newh, neww = int(self.inpHeight * hw_scale), self.inpWidthimg = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_LINEAR)padh = int((self.inpHeight - newh) * 0.5)img = cv2.copyMakeBorder(img, padh, self.inpHeight - newh - padh, 0, 0, cv2.BORDER_CONSTANT,value=(114, 114, 114))else:img = cv2.resize(srcimg, (self.inpWidth, self.inpHeight), interpolation=cv2.INTER_LINEAR)return img, newh, neww, padh, padwdef detect(self, srcimg):img, newh, neww, padh, padw = self.resize_image(cv2.cvtColor(srcimg, cv2.COLOR_BGR2RGB))scale_h, scale_w = srcimg.shape[0] / newh, srcimg.shape[1] / newwimg = (img.astype(np.float32) / 255.0 - self.mean_) / self.std_# Sets the input to the networkblob = cv2.dnn.blobFromImage(img)self.net.setInput(blob)classification, box_regression, seg = self.net.forward(self.net.getUnconnectedOutLayersNames())x_centers = box_regression[..., 1] * self.anchors[..., 2] + self.anchors[..., 0]y_centers = box_regression[..., 0] * self.anchors[..., 3] + self.anchors[..., 1]w = np.exp(box_regression[..., 3]) * self.anchors[..., 2]h = np.exp(box_regression[..., 2]) * self.anchors[..., 3]xmin = x_centers - w * 0.5ymin = y_centers - h * 0.5bboxes_wh = np.stack([xmin, ymin, w, h], axis=2).squeeze(axis=0)confidences = np.max(classification.squeeze(axis=0), axis=1) ####max_class_confidenceclassIds = np.argmax(classification.squeeze(axis=0), axis=1)mask = confidences > self.confThresholdbboxes_wh = bboxes_wh[mask]confidences = confidences[mask]classIds = classIds[mask]bboxes_wh -= np.array([[padw, padh, 0, 0]]) bboxes_wh *= np.array([[scale_w, scale_h, scale_w, scale_h]])indices = cv2.dnn.NMSBoxes(bboxes_wh.tolist(), confidences.tolist(), self.confThreshold,self.nmsThreshold).flatten().tolist()drive_area_mask = np.squeeze(seg, axis=0)[:, padh:(self.inpHeight - padh), padw:(self.inpWidth - padw)]seg_id = np.argmax(drive_area_mask, axis=0).astype(np.uint8)seg_id = cv2.resize(seg_id, (srcimg.shape[1], srcimg.shape[0]), interpolation=cv2.INTER_NEAREST)outimg = srcimg.copy()for ind in indices:x, y, w, h = bboxes_wh[ind,:].astype(int)cv2.rectangle(outimg, (x, y), (x + w, y + h), (0, 0, 255), thickness=2, lineType=cv2.LINE_AA)cv2.putText(outimg, self.det_classes[classIds[ind]]+ ":" + str(round(confidences[ind], 2)), (x, y - 5), cv2.FONT_HERSHEY_SIMPLEX, 0.75, (0, 0, 255),thickness=1, lineType=cv2.LINE_AA)outimg[seg_id == 1] = [0, 255, 0]outimg[seg_id == 2] = [255, 0, 0]return outimgif __name__ == "__main__":parser = argparse.ArgumentParser()parser.add_argument('--imgpath', type=str, default='images/test.jpg', help="image path")parser.add_argument('--modelpath', type=str, default='models/hybridnets_768x1280.onnx')parser.add_argument('--anchorpath', type=str, default='models/anchors_768x1280.npy')parser.add_argument('--confThreshold', default=0.3, type=float, help='class confidence')parser.add_argument('--nmsThreshold', default=0.5, type=float, help='nms iou thresh')args = parser.parse_args()yolonet = HybridNets(args.modelpath, args.anchorpath, confThreshold=args.confThreshold,nmsThreshold=args.nmsThreshold)srcimg = cv2.imread(args.imgpath)srcimg = yolonet.detect(srcimg)cv2.namedWindow('dst', 0)cv2.imshow('dst', srcimg)cv2.waitKey(0)cv2.destroyAllWindows()